A Comprehensive Guide to Evaluating On-Target and Off-Target Prediction Tools for CRISPR Genome Editing

This article provides researchers, scientists, and drug development professionals with a structured framework for evaluating computational tools that predict on-target and off-target effects in CRISPR genome editing.

Abstract

This article provides researchers, scientists, and drug development professionals with a structured framework for evaluating computational tools that predict on-target and off-target effects in CRISPR genome editing. With the first CRISPR-based therapies now approved and regulatory scrutiny intensifying, the ability to accurately forecast editing outcomes is critical for both research reproducibility and clinical safety. We explore the foundational principles of off-target effects, survey the latest methodological advancements including deep learning models like CCLMoff and CRISPR-Embedding, address common troubleshooting and optimization challenges, and provide a comparative analysis of validation strategies. This guide synthesizes current best practices to empower scientists in selecting and applying the most robust prediction tools for their specific applications, from basic research to therapeutic development.

Understanding CRISPR Off-Target Effects: The Foundation for Accurate Prediction

Core Concepts and Definitions

In both therapeutic drug development and genome editing, the concepts of "on-target" and "off-target" effects are fundamental to evaluating efficacy and safety. These terms describe the intended versus unintended biological activities of an intervention, with critical implications for research and clinical applications.

On-target effects refer to the intended biological activity at the desired site of action. In pharmacology, this represents the expected therapeutic effect resulting from modulation of the primary drug target [1]. In CRISPR/Cas9 genome editing, on-target activity is the precise modification at the intended genomic locus [2].

Off-target effects constitute unintended consequences occurring at sites other than the primary target. In toxicology, these are adverse effects resulting from modulation of biologically related or unrelated targets [1]. In genome editing, off-target effects include non-specific cleavage at genomic sites with sequence similarity to the target [3] [2]. A third category, chemical-based toxicity, describes effects related to a compound's physicochemical properties rather than specific target interactions [1].

On-Target and Off-Target Effects in Drug Development

Characterization and Consequences

Drug off-target effects represent a major challenge in pharmaceutical development, often discovered late in clinical trials or during post-marketing surveillance. The hypertensive side effect of torcetrapib, a cholesteryl ester transfer protein (CETP) inhibitor, exemplifies this problem. Despite its intended beneficial effect on cholesterol levels, torcetrapib was withdrawn from phase III clinical trials due to fatal hypertension in some patients—an effect subsequently attributed to off-target activity rather than its primary mechanism [4].

Off-target drug effects can be identified through systematic approaches that compare transcriptional responses between drug treatment and specific target inhibition. One framework combines promoter expression profiling after drug treatment with gene perturbation of the primary drug target, allowing researchers to distinguish between on-target and off-target transcriptional responses [5].

Experimental Approaches for Identification

Table 1: Experimental Methods for Drug On-Target and Off-Target Identification

| Method | Application | Key Features | References |
|---|---|---|---|
| Transcriptional Profiling | Identification of on/off-target pathways | Combines drug treatment with target knockdown; uses Cap Analysis of Gene Expression (CAGE) | [5] |
| Structural Bioinformatics | Prediction of protein-drug off-targets | Based on ligand binding site similarity; enables proteome-wide off-target prediction | [4] |
| Metabolomics with Machine Learning | Identification of intracellular drug targets | Analyzes global metabolic perturbations; uses multi-class logistic regression models | [6] |
| Interactome-Based Deep Learning | Prediction of transcriptional drug responses | Infers drug-target interactions and downstream signaling effects | [7] |

Advanced computational approaches now integrate structural bioinformatics with systems biology. One methodology applied to torcetrapib combined prediction of protein off-targets based on structural analysis with metabolic network modeling to simulate drug treatment effects in human renal function [4]. This approach identified prostaglandin I2 synthase (PTGIS) and acyl-CoA oxidase 1 (ACOX1) as potential causal off-targets contributing to hypertensive side effects.

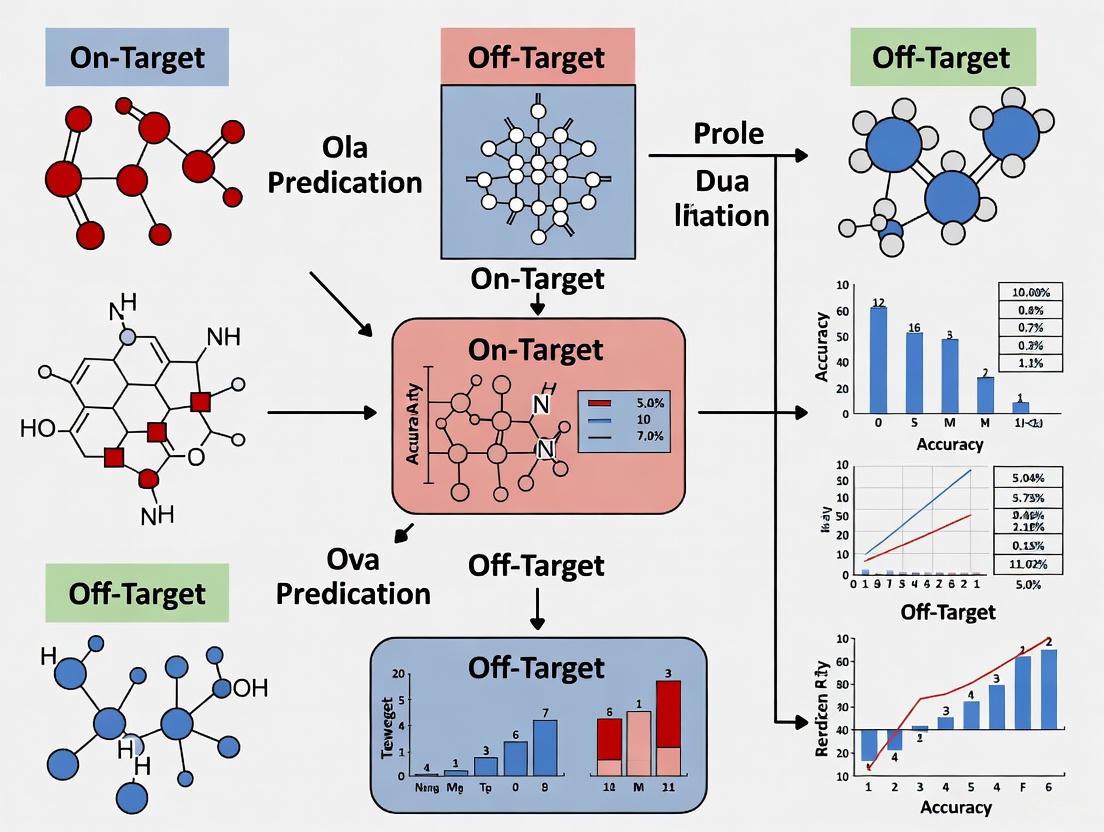

Diagram 1: Drug Action Pathways. This diagram illustrates the three primary categories of drug effects: on-target therapeutic effects, off-target adverse effects, and chemical-based toxicity.

Integrated Workflow for Drug Target Identification

Diagram 2: Drug Off-Target Identification Workflow. This integrated framework combines metabolomics, machine learning, metabolic modeling, and structural analysis to identify unknown drug targets, as demonstrated for antibiotic CD15-3 [6].

On-Target and Off-Target Effects in Genome Editing

Mechanisms and Implications

CRISPR/Cas9 systems have revolutionized genome editing but face significant challenges with off-target effects. The wild-type Cas9 from Streptococcus pyogenes (SpCas9) can tolerate between three and five base pair mismatches, potentially creating double-stranded breaks at multiple genomic sites with sequence similarity to the intended target [2]. These off-target edits present particular concern for clinical applications, where unintended modifications in oncogenes or tumor suppressor genes could have serious consequences [2].

The evaluation of adeno-associated virus (AAV) vector-mediated gene editing in mouse livers demonstrated efficient on-target editing (36.45% ± 18.29% at the F9 locus) while off-target events were rare or below whole-genome sequencing detection limits [8]. This suggests that with careful design, specific editing with minimal off-target effects is achievable.

Prediction Tools and Evaluation

Table 2: Comparison of CRISPR Off-Target Prediction Algorithms

| Algorithm Type | Examples | Key Principles | Performance Notes | References |
|---|---|---|---|---|
| Alignment-Based | Cas-OFFinder, CHOPCHOP, GT-Scan | Employs mismatch patterns and genome-wide scanning | Foundation for early prediction tools | [3] [9] |
| Formula-Based | CCTop, MIT | Assigns different weights to PAM-distal and PAM-proximal mismatches | MIT specificity score ranges 0-100 (100=best) | [9] |
| Energy-Based | CRISPRoff | Approximates binding energy for Cas9-gRNA-DNA complex | Based on thermodynamic properties | [3] |
| Learning-Based | DeepCRISPR, CRISPR-Net, CCLMoff | Uses deep learning to extract sequence patterns | Superior performance; state-of-the-art | [3] |

Independent evaluation of CRISPR/Cas9 predictions has revealed that sequence-based off-target predictions are highly reliable when properly implemented. The Cutting Frequency Determination (CFD) score demonstrates the best performance with an area under the curve (AUC) of 0.91 for distinguishing validated off-targets from false positives [9]. Tools using the BWA sequence search algorithm, such as CRISPOR, can identify all validated off-targets, while some earlier implementations missed certain off-target sites, including those with only two mismatches [9].
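To make the AUC figure concrete, the following minimal sketch computes the area under the ROC curve as the probability that a randomly chosen validated off-target receives a higher score than a randomly chosen false positive. The function name `roc_auc` and the example scores are hypothetical illustrations, not data or code from the cited evaluation.

```python
def roc_auc(scores, labels):
    """Rank-based ROC AUC: P(score of a validated site > score of a false positive).

    labels: 1 for an experimentally validated off-target, 0 for a false positive.
    Ties between a positive and a negative count as half a win.
    """
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


# Hypothetical off-target scores for five predicted sites (illustration only).
example_scores = [0.90, 0.40, 0.35, 0.10, 0.05]
example_labels = [1, 1, 0, 1, 0]
print(round(roc_auc(example_scores, example_labels), 3))
```

An AUC of 1.0 would mean every validated site outranks every false positive, while 0.5 corresponds to random ranking.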

Detection Methods and Validation

Table 3: Experimental Methods for CRISPR Off-Target Detection

| Method Category | Examples | Detection Principle | Sensitivity | References |
|---|---|---|---|---|
| Cas9 Binding Detection | ChIP-seq, SELEX | Identifies Cas9 binding sites | Varies by protocol | |
| DSB Detection | Digenome-seq, CIRCLE-seq, DISCOVER-seq | Detects double-strand breaks | ~0.1-0.2% for whole-genome assays | [3] [9] |
| Repair Product Detection | GUIDE-seq, IDLV | Identifies repair products from DSBs | High sensitivity for targeted sites | [3] |
| Comprehensive Analysis | Whole Genome Sequencing (WGS) | Sequences entire genome | Most comprehensive but expensive | [2] |

The sensitivity of off-target detection assays varies significantly. Targeted sequencing approaches can detect off-targets with modification frequencies lower than 0.001%, while whole-genome assays typically have sensitivities around 0.1-0.2% [9]. Most validated off-targets (88.4%) contain up to four mismatches relative to the guide sequence, with decreasing cleavage frequencies as mismatch count increases [9].

The Scientist's Toolkit: Essential Research Reagents and Methods

Table 4: Key Research Reagents and Methods for On/Off-Target Studies

| Reagent/Method | Application | Function/Purpose | References |
|---|---|---|---|
| CCLMoff | CRISPR off-target prediction | Deep learning framework incorporating RNA language model | [3] |
| CRISPOR | Guide RNA selection | Predicts off-targets and helps select efficient guides | [9] |
| CIRCLE-seq | CRISPR off-target detection | In vitro method for identifying Cas9-induced double-strand breaks | [3] |
| GUIDE-seq | CRISPR off-target detection | Cell-based method detecting repair products from DSBs | [3] |
| Traffic Light Reporter (TLR) | Genome editing quantification | Simultaneously measures NHEJ and HR events | [10] |
| CEL-I / T7E1 assay | Mutation detection | Gel-based detection of nuclease-induced mutations (~1-2% sensitivity) | [10] |
| Metabolic Network Models | Drug off-target prediction | Context-specific modeling (e.g., renal function) | [4] |
| RNA-FM Model | Sequence analysis | Pretrained on 23 million RNA sequences for feature extraction | [3] |

The systematic evaluation of on-target and off-target effects represents a critical component of therapeutic development and genome editing applications. While significant progress has been made in prediction algorithms and detection methodologies, challenges remain in comprehensively identifying off-target activities, particularly in clinical contexts. The continuing refinement of computational tools, combined with increasingly sensitive experimental methods, provides a pathway toward safer, more precise interventions. As these technologies evolve, standardized evaluation frameworks and validation protocols will be essential for advancing both basic research and clinical applications.

CRISPR-Cas9 technology has revolutionized genetic research and therapeutic development by enabling precise genome editing. However, its potential is constrained by off-target effects—unintended modifications at sites other than the intended target. These inaccuracies can compromise experimental results and pose significant safety risks in clinical applications. Understanding the key factors governing CRISPR specificity is therefore essential for advancing both basic research and therapeutic applications. This guide provides a comprehensive analysis of three primary determinants of CRISPR specificity: protospacer adjacent motif (PAM) sequences, seed regions, and mismatch tolerance, with supporting experimental data and methodological protocols for their evaluation.

PAM Sequences: The Gateway to DNA Cleavage

Biological Function and Specificity Implications

The protospacer adjacent motif (PAM) is a short DNA sequence (typically 2-6 base pairs) adjacent to the target DNA region that must be recognized by the Cas nuclease for successful cleavage [11]. This sequence serves as a critical "gatekeeper" in CRISPR systems, originally evolving in bacterial immune systems to distinguish between self and non-self DNA, thus preventing autoimmunity by ensuring the Cas nuclease does not target the bacterium's own CRISPR arrays [11].

The PAM generally lies 3-4 nucleotides downstream of the Cas9 cut site [11]. For the most commonly used Streptococcus pyogenes Cas9 (SpCas9), the canonical PAM sequence is 5'-NGG-3', where "N" represents any nucleotide base [11] [12]. Because cleavage can only occur at sites flanked by a compatible PAM, this requirement immediately constrains the genomic loci accessible to CRISPR editing.
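As an illustration of how the NGG requirement restricts targetable sites, the sketch below lists every 20-nt protospacer followed by an NGG PAM on both strands of a short sequence. The function names and the 20-nt spacer length default are assumptions made for this example; real design tools also handle ambiguous bases, genome coordinates, and scoring.

```python
import re

COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(seq):
    """Return the reverse complement of an uppercase A/C/G/T sequence."""
    return seq.translate(COMPLEMENT)[::-1]


def find_spcas9_sites(seq, spacer_len=20):
    """List (strand, protospacer, pam) for each protospacer followed by 5'-NGG-3'."""
    seq = seq.upper()
    pattern = re.compile(r"(?=([ACGT]{%d})([ACGT]GG))" % spacer_len)
    sites = []
    for strand, strand_seq in (("+", seq), ("-", reverse_complement(seq))):
        # The lookahead lets overlapping candidate sites all be reported.
        for match in pattern.finditer(strand_seq):
            sites.append((strand, match.group(1), match.group(2)))
    return sites


# Hypothetical 23-nt sequence: one protospacer plus a TGG PAM on the + strand.
for site in find_spcas9_sites("ACGTACGTACGTACGTACGTTGG"):
    print(site)
```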

PAM Diversity Across Cas Nucleases

Different Cas nucleases recognize distinct PAM sequences, providing researchers with options to target different genomic regions (Table 1) [11]. The length and specificity of these PAM sequences directly influence targeting range and potential off-target effects. Cas9 from Staphylococcus aureus (SaCas9), for instance, recognizes the longer NNGRR(N) PAM, which reduces its potential target sites but may improve specificity [12]. Similarly, Cas12a (Cpf1) orthologs typically recognize T-rich PAMs (TTTV, where V is A, C, or G) [11].

Table 1: PAM Sequences and Properties of Selected CRISPR Nucleases

| CRISPR Nuclease | Organism Source | PAM Sequence (5' to 3') | Targeting Range | Specificity Considerations |
|---|---|---|---|---|
| SpCas9 | Streptococcus pyogenes | NGG | Broad | Standard choice; moderate specificity |
| SaCas9 | Staphylococcus aureus | NNGRRT or NNGRRN | Reduced | Longer PAM may improve specificity |
| NmeCas9 | Neisseria meningitidis | NNNNGATT | Reduced | Longer PAM reduces off-target potential |
| CjCas9 | Campylobacter jejuni | NNNNRYAC | Reduced | Intermediate PAM length |
| LbCas12a (Cpf1) | Lachnospiraceae bacterium | TTTV | T-rich regions | Distinct cleavage pattern (staggered cuts) |
| AacCas12b | Alicyclobacillus acidiphilus | TTN | Reduced | Thermostable variant |
| Sc++ (engineered) | Streptococcus canis | NNG | Expanded | Engineered for broader PAM recognition |
| SpRY | Engineered SpCas9 | NRN > NYN | Near-PAMless | Maximizes targeting range with reduced specificity |
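The PAM strings in the table use IUPAC ambiguity codes (N = any base, R = A/G, Y = C/T, V = A/C/G). A minimal sketch of how such a pattern can be checked against a candidate sequence is shown below; the helper names `pam_regex` and `matches_pam` are hypothetical, and only the codes that appear in the table are included.

```python
import re

# IUPAC codes used by the PAMs listed above, expanded to regex character classes.
IUPAC = {
    "A": "A", "C": "C", "G": "G", "T": "T",
    "R": "[AG]", "Y": "[CT]", "V": "[ACG]", "N": "[ACGT]",
}


def pam_regex(pam):
    """Compile an IUPAC PAM string (e.g. 'NNGRRT') into a regular expression."""
    return re.compile("".join(IUPAC[base] for base in pam.upper()))


def matches_pam(pam, candidate):
    """True if the candidate sequence is fully consistent with the PAM pattern."""
    return pam_regex(pam).fullmatch(candidate.upper()) is not None


print(matches_pam("NGG", "TGG"))        # SpCas9
print(matches_pam("NNGRRT", "ACGAGT"))  # SaCas9
print(matches_pam("TTTV", "TTTT"))      # LbCas12a: V excludes T
```

Longer or less degenerate patterns match fewer sequences by chance, which is the reason a longer PAM narrows the targeting range.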

Engineering PAM Specificity

Protein engineering approaches have created Cas variants with altered PAM specificities to expand targeting capabilities. For example, Sc++ and HiFi-Sc++ were engineered from Streptococcus canis Cas9 to recognize 5'-NNG-3' PAMs while maintaining robust cleavage activity and minimal off-target effects [13]. Similarly, SpCas9-NG recognizes NG PAMs, and SpRY recognizes NRN (R = A/G) and, less efficiently, NYN (Y = C/T) PAMs, substantially expanding the targetable genome [12].

However, a fundamental trade-off exists between PAM compatibility and editing efficiency. Recent biochemical studies reveal that reduced PAM specificity can cause persistent non-selective DNA binding and recurrent failures to engage the target sequence through stable guide RNA hybridization, ultimately reducing genome-editing efficiency in cells [14]. Efficient editing appears to rely on an optimized two-step target capture process where selective but low-affinity PAM binding precedes rapid DNA unwinding [14].

Seed Regions: The Precision Core of Target Recognition

Definition and Mechanistic Role

The seed region refers to the PAM-proximal 10-12 nucleotide segment of the guide RNA that is crucial for specific recognition and cleavage of target DNA [12]. This region requires nearly perfect complementarity for stable Cas9 binding and subsequent DNA cleavage. The seed region's importance stems from its role in the initial steps of DNA interrogation—after PAM recognition, Cas9 begins unwinding the DNA duplex from the PAM-proximal end, with the seed region nucleotides forming the first stable base pairs with the target DNA [12].

Position-Dependent Specificity

Mismatches between the guide RNA and target DNA within the seed region are significantly less tolerated than mismatches in the PAM-distal region [12]. Even single nucleotide mismatches in the seed region can dramatically reduce cleavage efficiency, while multiple mismatches in this region typically abolish cleavage entirely. This position-dependent effect creates a gradient of tolerance, with the nucleotides immediately adjacent to the PAM being the most sensitive to mismatches.
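The gradient of tolerance can be sketched with a toy scoring function in which each mismatch multiplies the score by a penalty that grows toward the PAM. The linear penalty and the `max_penalty` value are assumptions chosen purely for illustration; they are not the published MIT or CFD weights.

```python
def mismatch_positions(guide, site):
    """1-based positions (5' to 3', so the last position is next to the PAM) that differ."""
    if len(guide) != len(site):
        raise ValueError("guide and site must have the same length")
    pairs = zip(guide.upper(), site.upper())
    return [i + 1 for i, (g, s) in enumerate(pairs) if g != s]


def toy_specificity_score(guide, site, max_penalty=0.9):
    """Illustrative score in [0, 1]; PAM-proximal mismatches are penalised most.

    The penalty rises linearly from the PAM-distal 5' end to the PAM-proximal
    3' end. This is a hypothetical weighting, not an established scoring scheme.
    """
    n = len(guide)
    score = 1.0
    for pos in mismatch_positions(guide, site):
        score *= 1.0 - max_penalty * pos / n
    return score


guide = "A" * 20
distal_mismatch = "C" + "A" * 19  # mismatch at position 1 (far from the PAM)
seed_mismatch = "A" * 19 + "C"    # mismatch at position 20 (adjacent to the PAM)
print(toy_specificity_score(guide, distal_mismatch))
print(toy_specificity_score(guide, seed_mismatch))
```

Under this toy model a single PAM-adjacent mismatch lowers the score far more than a single PAM-distal mismatch, mirroring the qualitative pattern described above.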

Mismatch Tolerance: Balancing Flexibility and Specificity

Mechanisms of Off-Target Effects

CRISPR-Cas9 can tolerate imperfect complementarity between the guide RNA and target DNA, leading to off-target effects at sites with partial sequence similarity to the intended target. The system can accommodate various types of imperfections:

- Single-base mismatches: Non-complementary base pairs at various positions [15] [12]

- DNA/RNA bulges: Unpaired extra nucleotides on either the DNA or the RNA strand that loop out of the guide-target duplex [12]

- Non-canonical PAM recognition: Cas9 can occasionally recognize suboptimal PAM sequences such as NAG or NGA, albeit with reduced efficiency [12]

The 5' end of the sgRNA spacer (distal from the PAM) demonstrates greater tolerance for mismatches, with studies showing that CRISPR-Cas9 can induce off-target cleavage even with up to six base mismatches in this distal region [12].
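The imperfections listed above can be summarised per candidate site. The sketch below counts mismatches in the PAM-proximal seed versus the PAM-distal region and labels the PAM as canonical (NGG), non-canonical (NAG or NGA), or unsupported. The function name, the returned dictionary keys, and the 12-nt seed default (the upper end of the 10-12 nt range quoted earlier) are assumptions for this example; bulges are not handled.

```python
def describe_site(guide, protospacer, pam, seed_len=12):
    """Summarise mismatch location and PAM class for one candidate off-target site.

    guide and protospacer are written 5' to 3', so the last seed_len bases
    are the PAM-proximal seed. Bulges are not modelled in this sketch.
    """
    guide, protospacer, pam = guide.upper(), protospacer.upper(), pam.upper()
    if len(guide) != len(protospacer):
        raise ValueError("guide and protospacer must have the same length")
    mismatched = [i for i, (g, p) in enumerate(zip(guide, protospacer)) if g != p]
    seed_start = len(guide) - seed_len
    seed_mismatches = sum(1 for i in mismatched if i >= seed_start)
    if pam[1:] == "GG":
        pam_class = "canonical"
    elif pam[1:] in ("AG", "GA"):
        pam_class = "non-canonical"
    else:
        pam_class = "unsupported"
    return {
        "mismatches": len(mismatched),
        "seed_mismatches": seed_mismatches,
        "distal_mismatches": len(mismatched) - seed_mismatches,
        "pam_class": pam_class,
    }


guide = "ACGT" * 5
candidate = "T" + guide[1:19] + "A"  # one distal and one seed mismatch
print(describe_site(guide, candidate, "TAG"))
```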

Position-Specific Tolerance Patterns

Mismatch tolerance depends on both the position within the guide sequence and the identity of the mismatched nucleotide [15]. Bioluminescence resonance energy transfer (BRET)-based reporter systems have been used to quantify these nucleotide- and position-specific effects, which supports more accurate prediction of off-target sites [15].

Experimental Methods for Assessing Specificity

In Vitro Detection Methods

Table 2: Experimental Methods for Detecting Off-Target Effects

| Method | Category | Principle | Sensitivity | Throughput | Key Applications |
|---|---|---|---|---|---|
| Digenome-seq | In vitro | In vitro Cas9 digestion of genomic DNA followed by whole-genome sequencing | High | Medium | Genome-wide off-target identification without cellular context |
| CIRCLE-seq | In vitro | Circularization and amplification of genomic DNA before in vitro Cas9 cleavage | Very High | High | Sensitive detection of rare off-target sites |
| SITE-seq | In vitro | Capture and sequencing of Cas9-bound DNA fragments | Medium | Medium | Identification of Cas9 binding sites |
| BLESS | In situ | Direct in situ labeling of DNA breaks followed by enrichment and sequencing | Medium | Low | Snapshots of DSBs in fixed cells |
| GUIDE-seq | Cell-based (in cellula) | Capture of double-strand break sites using oligonucleotide tags | High | Medium | Genome-wide profiling in living cells |
| DISCOVER-seq | In vivo | Identification of DNA repair factors recruited to break sites | Medium | Medium | In vivo off-target detection in various tissues |
| BRET-based reporter | Cellular reporter | Bioluminescence resonance energy transfer to detect cleavage events | High for subtle changes | High | Quantifying mismatch tolerance and characterizing cleavage |

Detailed Protocol: BRET-Based Reporter Assay

The BRET (Bioluminescence Resonance Energy Transfer) reporter system offers a sensitive method for quantifying subtle changes in gRNA binding and mismatch tolerance [15].

Principle: BRET relies on energy transfer between a bioluminescent donor (typically luciferase) and a fluorescent acceptor when in close proximity. Cleavage of the DNA target separates the donor and acceptor, reducing energy transfer.

Workflow:

- Reporter Construction: Create a vector containing the target DNA sequence flanked by BRET donor and acceptor molecules.

- Cell Transfection: Co-transfect cells with the BRET reporter construct and CRISPR-Cas9/sgRNA components.

- Treatment and Measurement: Treat cells with the substrate for the bioluminescent donor and measure both donor and acceptor emission signals.

- Data Analysis: Calculate the BRET ratio (acceptor emission/donor emission). Reduced BRET ratios indicate successful cleavage and separation of donor and acceptor molecules.

Applications: This sensitive system is particularly suitable for high-throughput screening of mismatch tolerance and characterizing cleavage events in mismatched sgRNA-Cas9/DNA interactions [15].
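The data-analysis step can be sketched as follows. The BRET ratio follows the definition given in the workflow (acceptor emission divided by donor emission). Expressing cleavage as the fractional drop relative to an uncut control is an assumption made here for illustration, as are the function names and the plate-reader values; background correction is omitted.

```python
def bret_ratio(acceptor_emission, donor_emission):
    """BRET ratio = acceptor emission / donor emission."""
    if donor_emission <= 0:
        raise ValueError("donor emission must be positive")
    return acceptor_emission / donor_emission


def relative_cleavage(sample_ratio, uncut_control_ratio):
    """Fractional loss of BRET signal relative to an uncut control.

    Assumed normalisation: 0 means no change from the control, and larger
    values mean more donor/acceptor separation, i.e. more cleavage.
    """
    if uncut_control_ratio <= 0:
        raise ValueError("control ratio must be positive")
    return 1.0 - sample_ratio / uncut_control_ratio


# Hypothetical plate-reader values (arbitrary units).
control = bret_ratio(acceptor_emission=600.0, donor_emission=1000.0)
perfect_match = bret_ratio(acceptor_emission=300.0, donor_emission=1000.0)
print(relative_cleavage(perfect_match, control))
```

Comparing this value across sgRNAs that carry mismatches at different positions gives a simple readout of position-specific tolerance.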

Figure 1: BRET-Based Reporter Assay Workflow for Assessing CRISPR Specificity

Detailed Protocol: GUIDE-seq

GUIDE-seq (Genome-wide Unbiased Identification of DSBs Enabled by Sequencing) is a highly sensitive method for profiling off-target cleavage in living cells [12] [16].

Principle: This method uses short, double-stranded oligonucleotides that are incorporated into double-strand breaks (DSBs) through the cellular repair machinery, followed by enrichment and sequencing of these tagged sites.

Step-by-Step Procedure:

- Oligonucleotide Tag Design: Design a blunt-ended, double-stranded oligonucleotide tag with phosphorothioate modifications for stability.

- Cell Transfection: Co-deliver the CRISPR-Cas9 components (Cas9 and sgRNA) along with the oligonucleotide tag into cells.

- Genomic DNA Extraction: Harvest cells 72 hours post-transfection and extract genomic DNA.

- Library Preparation and Sequencing:
  - Fragment genomic DNA
  - Ligate sequencing adapters
  - Enrich tag-integrated fragments using PCR with tag-specific primers
  - Sequence using next-generation sequencing platforms

- Bioinformatic Analysis:
  - Map sequenced reads to the reference genome
  - Identify genomic sites with oligonucleotide tag integration
  - Filter and annotate off-target sites

Advantages: GUIDE-seq can detect off-target sites with frequencies as low as 0.1% and identifies both known and novel off-target sites without prior sequence bias [16].
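The "identify" and "filter" steps of the bioinformatic analysis can be illustrated with a simplified sketch that groups mapped tag-integration positions into candidate sites and discards clusters with little read support. The input format, the 10-bp clustering window, and the three-read minimum are hypothetical choices for this example and are not the parameters of the published GUIDE-seq pipeline.

```python
from collections import defaultdict


def call_sites(tag_reads, window=10, min_reads=3):
    """Cluster tag-integration positions and keep well-supported clusters.

    tag_reads: iterable of (chromosome, position) for reads containing the tag.
    Returns a list of (chromosome, start, end, read_count) tuples.
    """
    by_chrom = defaultdict(list)
    for chrom, pos in tag_reads:
        by_chrom[chrom].append(pos)

    sites = []
    for chrom in sorted(by_chrom):
        positions = sorted(by_chrom[chrom])
        cluster = [positions[0]]
        for pos in positions[1:]:
            if pos - cluster[-1] <= window:
                cluster.append(pos)  # still within the same candidate site
            else:
                if len(cluster) >= min_reads:
                    sites.append((chrom, cluster[0], cluster[-1], len(cluster)))
                cluster = [pos]
        if len(cluster) >= min_reads:
            sites.append((chrom, cluster[0], cluster[-1], len(cluster)))
    return sites


# Hypothetical mapped reads: two supported sites and one isolated read.
reads = [("chr1", 100), ("chr1", 103), ("chr1", 98), ("chr1", 5000),
         ("chr2", 42), ("chr2", 44), ("chr2", 45)]
print(call_sites(reads))
```

Each retained cluster would then be compared with the guide sequence and annotated, as in the final step of the procedure.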

Bioinformatics Tools for Specificity Prediction

Evolution of Prediction Algorithms

Computational tools for predicting CRISPR off-target effects have evolved from simple alignment-based approaches to sophisticated machine learning models (Table 3). Early tools like Cas-OFFinder used genome-wide scanning with specific mismatch patterns to identify potential off-target sites [16]. Subsequent formula-based methods such as MIT CRISPR design assigned different weights to mismatches based on their position relative to the PAM [16].

Table 3: Comparison of CRISPR Specificity Prediction Tools

| Tool | Algorithm Type | Key Features | PAM Flexibility | Mismatch/Bulge Consideration | Limitations |
|---|---|---|---|---|---|
| Cas-OFFinder | Alignment-based | Genome-wide scanning with user-defined mismatches/indels | Customizable | Yes (mismatches and DNA bulges) | No efficiency prediction |
| CCTop | Formula-based | Position-specific mismatch weighting | Fixed PAM | Mismatches only | Limited to predefined PAMs |
| DeepCRISPR | Deep learning | Simultaneous on/off-target prediction using neural networks | Fixed PAM | Limited bulge consideration | Training data dependent |
| CCLMoff | Transformer-based language model | Pretrained on RNAcentral; handles diverse off-target patterns | Flexible | Mismatches and bulges | Computational resource intensive |
| GuideScan2 | Burrows-Wheeler transform | Memory-efficient genome indexing; specificity analysis | Customizable | Mismatches and bulges | Command-line expertise needed |
| CRISPRon | Machine learning | Incorporates gRNA-DNA binding energy features | Fixed PAM | Mismatches primarily | Focus on efficiency prediction |

Advanced Deep Learning Approaches

Recent advances incorporate deep learning and language models for improved off-target prediction. CCLMoff, a transformer-based framework, incorporates an RNA language model pretrained on RNAcentral sequences to capture mutual sequence information between sgRNAs and target sites [16]. This approach demonstrates strong generalization across diverse next-generation sequencing-based detection datasets and successfully captures the biological importance of the seed region [16].

GuideScan2 represents another significant advancement, using a Burrows-Wheeler transform for memory-efficient, parallelizable construction of high-specificity CRISPR guide RNA databases [17]. Its novel search algorithm based on simulated reverse-prefix trie traversals enables comprehensive off-target enumeration without pre-specifying targeting rules, accommodating different gRNA lengths, PAM sequences, and off-target definitions including mismatches or bulges [17].
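For readers unfamiliar with the Burrows-Wheeler transform, the sketch below builds it naively by sorting all rotations of a short sequence and shows that the original can be recovered. This is a textbook construction intended only to illustrate the transform itself; it is not GuideScan2's indexing or search algorithm, which relies on far more memory-efficient data structures.

```python
def bwt(text, terminator="$"):
    """Naive Burrows-Wheeler transform: last column of the sorted rotations."""
    if terminator in text:
        raise ValueError("text must not contain the terminator character")
    s = text + terminator
    rotations = sorted(s[i:] + s[:i] for i in range(len(s)))
    return "".join(rotation[-1] for rotation in rotations)


def inverse_bwt(transformed, terminator="$"):
    """Recover the original text by repeatedly prepending and re-sorting columns."""
    table = [""] * len(transformed)
    for _ in range(len(transformed)):
        table = sorted(char + row for char, row in zip(transformed, table))
    original = next(row for row in table if row.endswith(terminator))
    return original[:-1]


sequence = "GATTACA"
transformed = bwt(sequence)
print(transformed)
print(inverse_bwt(transformed) == sequence)
```

The transform groups identical characters that share a following context, which is what makes compressed, searchable genome indexes possible.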

Figure 2: Evolution of Bioinformatics Tools for CRISPR Off-Target Prediction

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Essential Research Reagents for CRISPR Specificity Analysis

| Reagent/Material | Function | Specific Examples | Application Context |
|---|---|---|---|
| Cas9 Nuclease Variants | DNA cleavage enzyme | SpCas9, SaCas9, HiFi-Sc++ | Core editing component; choice affects PAM recognition and specificity |
| Guide RNA Components | Target recognition | sgRNA, crRNA:tracrRNA complex | Specificity determined by sequence complementarity |
| Reporter Plasmids | Detection of editing efficiency | BRET reporters, GFP-based systems | Quantifying on-target and off-target activity |
| Oligonucleotide Tags | Capture of DSB sites | GUIDE-seq tags | Genome-wide identification of off-target sites |
| Cell Lines | Experimental context | HEK293, HCT116, iPSCs | Validation in relevant biological systems |
| Next-Generation Sequencing Platforms | Off-target identification | Illumina, PacBio | Comprehensive mapping of editing outcomes |
| Bioinformatics Software | Specificity prediction | GuideScan2, CCLMoff, Cas-OFFinder | Computational assessment of gRNA designs |

The specificity of CRISPR-Cas9 editing is governed by a complex interplay between PAM recognition, seed region complementarity, and position-dependent mismatch tolerance. Understanding these factors enables researchers to design more precise genome editing experiments and develop strategies to minimize off-target effects. Experimental methods such as GUIDE-seq and BRET-based reporters provide robust empirical data on cleavage specificity, while computational tools such as CCLMoff (deep learning) and GuideScan2 (Burrows-Wheeler-based genome indexing) predict or enumerate potential off-target sites during the design phase. As CRISPR technology advances toward therapeutic applications, continued refinement of both experimental and computational approaches for assessing specificity will be essential for ensuring efficacy and safety. Future directions include the development of more sophisticated prediction algorithms that incorporate epigenetic factors and cellular context, along with continued engineering of Cas nucleases with improved specificity profiles.

In the development of CRISPR-based therapies, accurately predicting and minimizing off-target effects is a critical safety requirement. Regulatory bodies like the U.S. Food and Drug Administration (FDA) now expect a thorough characterization of these unintended edits, making the choice of computational prediction tools a fundamental step in the therapeutic development pipeline [18]. This guide provides an objective comparison of state-of-the-art prediction tools, framing their evaluation within the context of evolving FDA guidelines that encourage the use of advanced, human-relevant computational models [19] [20].

The FDA's Evolving Regulatory Framework for Advanced Tools

The FDA has recognized the increasing role of artificial intelligence (AI) and computational models in drug development. A key draft guidance, "Considerations for the Use of Artificial Intelligence to Support Regulatory Decision-Making for Drug and Biological Products," issued in 2025, outlines the Agency's current thinking on this matter [19] [21]. This document was informed by extensive experience, including the review of over 500 submissions containing AI components from 2016 to 2023 [19].

This shift signifies a broader move toward modernizing regulatory science. The FDA has explicitly announced plans to phase out animal testing requirements for certain drugs, including monoclonal antibodies, and to replace them with more human-relevant methods, such as AI-based computational models of toxicity and lab-grown human organoids [20]. This creates a direct regulatory imperative for adopting sophisticated in silico tools.

For CRISPR-based products, this means that demonstrating safety involves not just experimental validation but also leveraging the best available computational methods to predict and screen for potential off-target effects during the design phase [18]. The FDA's focus on this was evident during the review of Casgevy (exa-cel), the first approved CRISPR-based medicine, where a key focus was the potential for off-target edits in patients with rare genetic variants [18].

Comparative Evaluation of On-Target & Off-Target Prediction Tools

Evaluation of Foundational Tools

The first independent evaluation of CRISPR/Cas9 prediction algorithms, conducted by Haeussler et al., established a baseline for tool performance. The study led to the development of CRISPOR, a guide RNA selection tool that integrates multiple scoring systems [22] [9].

| Tool | Primary Function | Key Algorithm/Feature | Evaluated Performance |
|---|---|---|---|
| CRISPOR [22] [9] | Guide RNA selection & off-target prediction | Integrates multiple scoring systems (e.g., MIT, CFD), uses BWA for genome search | Reliably identified most off-targets with >0.1% mutation rate; CFD score showed best discrimination (AUC=0.91) [9]. |
| MIT Specificity Score [9] | Ranking guides by specificity | Heuristic based on position and number of mismatches | Correlated with off-target counts and modification frequencies; less discriminative than CFD (AUC=0.87) [9]. |
| CFD Score [9] | Off-target site scoring | Based on a large dataset of mismatch tolerance | Best performance in distinguishing validated off-targets (AUC=0.91); cutoff of 0.023 reduced false positives by 57% with minimal true positive loss [9]. |

The study found that sequence-based off-target predictions were reliable for identifying most off-targets with mutation rates above 0.1%. It also highlighted that the performance of on-target efficiency prediction algorithms varied significantly across different biological models, such as zebrafish, and depended on how the guide RNA was produced [22] [9].
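The effect of a score cutoff such as the 0.023 CFD threshold can be illustrated with a short sketch that reports how many false positives are removed and how many validated sites are lost. The function name, the choice to keep sites scoring at or above the cutoff, and the example data are assumptions for illustration; they do not reproduce the study's dataset or its reported percentages.

```python
def apply_cutoff(sites, cutoff=0.023):
    """Filter predicted off-targets by score and summarise the trade-off.

    sites: list of (score, validated) pairs, where validated is True for
    experimentally confirmed off-targets. Sites scoring below the cutoff
    are discarded.
    """
    removed = [(score, validated) for score, validated in sites if score < cutoff]
    false_positive_total = sum(1 for _, validated in sites if not validated)
    true_positive_total = sum(1 for _, validated in sites if validated)
    false_positives_removed = sum(1 for _, validated in removed if not validated)
    true_positives_lost = sum(1 for _, validated in removed if validated)
    return {
        "kept": len(sites) - len(removed),
        "fp_reduction": (false_positives_removed / false_positive_total
                         if false_positive_total else 0.0),
        "tp_loss": (true_positives_lost / true_positive_total
                    if true_positive_total else 0.0),
    }


# Hypothetical predictions: (score, experimentally validated?)
predictions = [(0.50, True), (0.10, True), (0.005, True),
               (0.30, False), (0.02, False), (0.01, False)]
print(apply_cutoff(predictions))
```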

Benchmarking Next-Generation Deep Learning Models

A 2025 study by Kimata and Satou introduced DNABERT-Epi, a novel model integrating a pre-trained DNA foundation model with epigenetic features. The study provided a comprehensive benchmark against five state-of-the-art methods [23].

| Model | Core Methodology | Key Differentiating Features | Reported Advantage |
|---|---|---|---|
| DNABERT-Epi [23] | Transformer architecture pre-trained on human genome, integrated with epigenetic features. | Uses DNABERT; incorporates H3K4me3, H3K27ac, and ATAC-seq data. | Achieved competitive/superior performance; ablation studies confirmed that both pre-training and epigenetic data significantly enhance accuracy [23]. |
| CRISPR-BERT [23] | Transformer architecture for bioinformatics. | Task-specific deep learning. | Promising results, but outperformed by DNABERT-Epi in benchmark [23]. |
| CrisprBERT [23] | Transformer architecture for bioinformatics. | Task-specific deep learning. | Promising results, but outperformed by DNABERT-Epi in benchmark [23]. |

The benchmark was conducted under a unified cross-validation framework using seven distinct off-target datasets, including both in vitro (CHANGE-seq) and in cellula (GUIDE-seq, TTISS) data. Performance was measured by how well models predicted active versus inactive off-target sites [23].

Diagram 1: FDA AI Regulatory Framework Evolution

Experimental Protocols for Tool Validation

Dataset Curation and Preprocessing

The benchmark for DNABERT-Epi utilized one in vitro and six in cellula off-target datasets [23]. To ensure a fair comparison, datasets were curated from a shared repository. A critical preprocessing step involved addressing severe class imbalance between active (positive) and inactive (negative) off-target sites. This was managed by random downsampling of the negative class in the training data to 20% of its original size, using a fixed random seed for reproducibility. Test data remained unaltered for unbiased evaluation [23].
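The downsampling step can be sketched as below: negatives in the training split are randomly reduced to 20% of their original number with a fixed seed, while positives are left untouched. The function name, the `(features, label)` input format, and the seed value 42 are hypothetical; the study's actual seed and data structures are not stated here.

```python
import random


def downsample_negatives(examples, keep_fraction=0.2, seed=42):
    """Keep all positives and a reproducible random subset of negatives.

    examples: list of (features, label) with label 1 = active, 0 = inactive.
    Apply to training data only; test data should remain unaltered.
    """
    rng = random.Random(seed)  # fixed seed so the same subset is drawn every run
    positives = [ex for ex in examples if ex[1] == 1]
    negatives = [ex for ex in examples if ex[1] == 0]
    n_keep = round(len(negatives) * keep_fraction)
    combined = positives + rng.sample(negatives, n_keep)
    rng.shuffle(combined)
    return combined


# Hypothetical training split: 100 inactive and 5 active sites.
training = [(i, 0) for i in range(100)] + [(i, 1) for i in range(100, 105)]
balanced = downsample_negatives(training)
print(sum(1 for _, label in balanced if label == 0),
      sum(1 for _, label in balanced if label == 1))
```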

Epigenetic Feature Integration Workflow

For the DNABERT-Epi model, epigenetic features (H3K4me3, H3K27ac, ATAC-seq) were processed as follows [23]:

- Signal Extraction: A 1000 bp window (±500 bp from the cleavage site) was analyzed.

- Outlier Handling: Signal values beyond Q1 - 1.5 × IQR or Q3 + 1.5 × IQR were capped.

- Normalization: A Z-score transformation was applied across the dataset.

- Binning: The normalized signal was divided into 100 bins (10 bp each), and the average signal per bin was calculated.

- Concatenation: The three 100-dimensional vectors were combined into a final 300-dimensional input vector for the model.
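The five steps above can be sketched with the Python standard library. This is an illustrative simplification under stated assumptions: the helper names are hypothetical, the input is assumed to be one per-base signal list of length 1000 per track, and the Z-score here uses per-window statistics, whereas the study normalizes across the dataset.

```python
import statistics

TRACKS = ("H3K4me3", "H3K27ac", "ATAC-seq")

def cap_outliers(signal):
    """Clamp values outside [Q1 - 1.5*IQR, Q3 + 1.5*IQR]."""
    q1, _, q3 = statistics.quantiles(signal, n=4)
    iqr = q3 - q1
    low, high = q1 - 1.5 * iqr, q3 + 1.5 * iqr
    return [min(max(v, low), high) for v in signal]

def z_score(signal):
    """Z-score using the window's own mean and std (simplifying assumption)."""
    mean = statistics.fmean(signal)
    std = statistics.pstdev(signal)
    if std == 0:
        return [0.0 for _ in signal]
    return [(v - mean) / std for v in signal]

def bin_average(signal, n_bins=100):
    """Average the signal in equal-width bins (10 bp each for a 1000 bp window)."""
    width = len(signal) // n_bins
    return [sum(signal[i * width:(i + 1) * width]) / width for i in range(n_bins)]

def epigenetic_vector(tracks):
    """Concatenate the binned tracks into one 300-dimensional vector."""
    vector = []
    for name in TRACKS:
        vector.extend(bin_average(z_score(cap_outliers(tracks[name]))))
    return vector

# Toy signals standing in for real coverage over a 1000 bp window.
tracks = {name: [float((i * k) % 50) for i in range(1000)]
          for k, name in enumerate(TRACKS, start=1)}
vector = epigenetic_vector(tracks)
print(len(vector))
```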

Model Training and Interpretation

The DNABERT model underwent a two-stage fine-tuning process [23]. Advanced interpretability techniques, including SHAP (SHapley Additive exPlanations) and Integrated Gradients, were applied to the trained model. This provided insights into the specific epigenetic marks and sequence-level patterns that most influenced its predictions, making the model's decision-making process more transparent [23].

Diagram 2: DNABERT-Epi Model Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful prediction and validation of CRISPR edits rely on a combination of computational and experimental reagents.

| Tool or Reagent | Function in Research |

|---|---|

| CRISPOR Website [22] [9] | A publicly available web tool that assists in guide RNA selection by predicting off-targets and scoring on-target efficiency for over 120 genomes. |

| High-Fidelity Cas9 Variants [18] | Engineered versions of the Cas9 nuclease (e.g., eSpCas9, SpCas9-HF1) designed to have reduced off-target cleavage activity, though sometimes with a trade-off in on-target efficiency. |

| Chemically Modified gRNAs [18] | Synthetic guide RNAs with modifications (e.g., 2'-O-methyl analogs, 3' phosphorothioate bonds) that can increase stability, enhance on-target efficiency, and reduce off-target effects. |

| GUIDE-seq [23] [18] | An experimental method (Genome-wide, Unbiased Identification of DSBs Enabled by Sequencing) that detects off-target cleavage sites genome-wide in a cellular context by capturing double-strand breaks. |

| CHANGE-seq [23] | An in vitro method for identifying off-target sites, often used for generating large training datasets for computational models. |

| Inference of CRISPR Edits (ICE) [18] | A popular, free software tool for analyzing Sanger sequencing data from CRISPR experiments to determine editing efficiency and identify off-target edits. |

Discussion and Future Directions

The progression from heuristic scoring algorithms to deep learning models like DNABERT-Epi demonstrates a significant leap in predictive accuracy. The integration of epigenetic features is a crucial advance, as chromatin accessibility directly influences Cas9 activity [23]. Furthermore, the use of models pre-trained on the entire human genome allows them to understand the contextual "language" of DNA, leading to more robust predictions [23].

This evolution is consistent with the FDA's push for sophisticated computational tools. As the agency moves to accept and even encourage New Approach Methodologies (NAMs), including AI models, the bar for demonstrating CRISPR therapy safety will rise [19] [20]. Researchers must therefore not only use these tools but also understand how they arrive at their predictions. Explainable AI (XAI) techniques such as SHAP will be vital both for building regulatory confidence and for interpreting predictions meaningfully [23].

The standardization of tool evaluation, as seen in the cross-validation benchmarks, is essential for the field to objectively compare methods and for regulators to assess their validity. Future developments will likely involve even more integrated models that combine genomic context, epigenetic states, and cellular environment data to provide the comprehensive safety profile demanded for clinical applications.

In the field of CRISPR-Cas9 genome editing, the precise assessment of off-target effects is a critical determinant of both research validity and therapeutic safety. The methods for detecting these unintended cleavages fall into two distinct categories: biased and unbiased detection. Biased methods start from in silico prediction: algorithms nominate potential off-target sites based on sequence similarity to the guide RNA (gRNA), and only those sites are then examined. In contrast, unbiased methods employ experimental techniques to identify off-target effects genome-wide without pre-selection, either in living cells or on purified genomic DNA [24]. This guide provides an objective comparison of these approaches, detailing their methodologies, performance, and appropriate applications for researchers and drug development professionals.

Core Concepts: Biased vs. Unbiased Detection

Biased detection refers to a targeted approach where potential off-target sites are first identified computationally based on their similarity to the intended target sequence. These predicted sites are then empirically validated using methods like PCR amplification and sequencing [24] [25]. This approach is termed "biased" because it can only detect off-target effects at pre-defined locations, potentially missing unexpected cleavage sites.

Unbiased detection encompasses experimental methods designed to identify off-target cleavage sites across the entire genome without prior assumptions. Many of these techniques operate directly in target cells and capture the physiological consequences of CRISPR-Cas9 activity, such as double-strand breaks (DSBs) or the resulting repair products [24]; others, such as CIRCLE-seq, work in vitro on purified genomic DNA. The primary advantage of unbiased methods is their ability to discover off-target effects at locations that do not necessarily resemble the on-target site.

The table below summarizes the fundamental distinctions between these two paradigms.

Table 1: Fundamental Differences Between Biased and Unbiased Detection Approaches

| Feature | Biased (In Silico) Detection | Unbiased (Genome-Wide) Detection |

|---|---|---|

| Core Principle | Prediction of off-target sites based on sequence alignment and algorithms [25] | Experimental, genome-wide screening for DSBs or their repair products without pre-selection [24] |

| Methodology | Computational simulation followed by targeted validation (e.g., PCR, sequencing) [25] | Various techniques to capture Cas9 binding, DSBs, or repair outcomes (e.g., GUIDE-seq, CIRCLE-seq) [24] [3] |

| Key Assumption | Off-target sites have sequence similarity to the gRNA [24] | Cas9 can cleave at genomic sites with little or no sequence similarity to the target [24] |

| Scope of Detection | Limited to computationally predicted sites [24] | Genome-wide, capable of discovering novel, unexpected off-target sites [24] |

| Typical Workflow | gRNA input → Algorithmic prediction → Targeted validation | Treat cells → Genome-wide DSB capture & enrichment → Sequencing & analysis |

Experimental Protocols and Workflows

Biased Detection Protocol

The workflow for biased, or in silico, off-target detection is a sequential process:

- gRNA Input: The process begins with the researcher providing the specific gRNA sequence of interest.

- In Silico Prediction: This sequence is processed by a prediction tool or algorithm (e.g., Cas-OFFinder, CCTop, or CCLMoff). These tools scan the reference genome to identify all loci with a Protospacer Adjacent Motif (PAM) and a user-defined number of base mismatches or bulges relative to the gRNA [24] [25] [9].

- Targeted Validation: The list of potential off-target sites generated by the algorithm is then examined empirically. This typically involves PCR amplification of each predicted genomic locus from the edited cells, followed by deep sequencing to quantify the frequency of insertions or deletions (indels) at each site [24].
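The in silico prediction step can be illustrated with a deliberately naive scanner. This is a sketch of the general idea only, not how Cas-OFFinder or CCTop are implemented: it assumes an SpCas9-style NGG PAM, searches the forward strand only, ignores bulges, and uses a made-up guide and toy "genome".

```python
def find_candidate_sites(genome, guide, max_mismatches=3):
    """Return (start, protospacer, pam, mismatches) for forward-strand sites
    that are followed by an NGG PAM and differ from the guide by at most
    `max_mismatches` substitutions."""
    hits = []
    n = len(guide)
    for start in range(len(genome) - n - 2):
        protospacer = genome[start:start + n]
        pam = genome[start + n:start + n + 3]
        if pam[1:] != "GG":  # NGG: any base followed by two guanines
            continue
        mismatches = sum(a != b for a, b in zip(guide, protospacer))
        if mismatches <= max_mismatches:
            hits.append((start, protospacer, pam, mismatches))
    return hits

# Hypothetical 20-nt guide and a toy sequence holding one perfect site
# and one site with two mismatches.
guide = "GACGTTACCGGATCAAGCTT"
off_target = "TACGTAACCGGATCAAGCTT"
genome = "TT" + guide + "AGG" + "CCCC" + off_target + "TGG" + "AA"

hits = find_candidate_sites(genome, guide)
for start, site, pam, mm in hits:
    print(start, site, pam, mm)
```

A real tool would also scan the reverse strand, support other PAMs and bulges, and use indexed search rather than a linear scan over the whole genome.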

Unbiased Detection Protocols

Unbiased methods rely on capturing the physical evidence of CRISPR activity in cells. The following diagram illustrates the three main strategies based on what they detect: Cas9 binding, Double-Strand Breaks (DSBs), or repair products.

The three primary strategies for unbiased detection are [24] [3] [25]:

- Detection of Cas9 Binding: Techniques like ChIP-seq (Chromatin Immunoprecipitation followed by sequencing) use catalytically inactive Cas9 (dCas9) to bind DNA without cutting. An antibody pulls down dCas9 and its bound DNA fragments, which are sequenced to map all binding sites. A limitation is that not all binding events result in cleavage [24].

- Detection of DSBs: Methods such as BLESS and CIRCLE-seq directly identify the location of DNA breaks. BLESS uses biotinylated linkers that are captured at DSB sites in fixed cells. CIRCLE-seq performs Cas9 cleavage on purified genomic DNA that is circularized in vitro, followed by adapter ligation and sequencing of the broken ends, making it highly sensitive for in vitro applications [25] [26].

- Detection of DSB Repair Products: These methods detect how cells repair the breaks. GUIDE-seq involves transfecting cells with a short, double-stranded oligodeoxynucleotide that integrates into DSBs via the non-homologous end joining (NHEJ) repair pathway. These integrated oligos then serve as tags for PCR amplification and sequencing of the flanking genomic regions [24] [3]. IDLV (Integrase-Deficient Lentiviral Vector) capture uses a similar principle, where an IDLV particle integrates into DSBs, and the virus-genome junctions are sequenced [24].

Performance and Comparative Analysis

Independent evaluations have helped quantify the performance of these different approaches. A 2016 study that collected data from eight off-target studies found that sequence-based biased predictors could reliably identify most off-targets with mutation rates above 0.1% [9]. The cutting frequency determination (CFD) score was shown to be particularly discriminative, with an Area Under the Curve (AUC) of 0.91 for distinguishing validated off-targets from false positives [9].

However, the same analysis revealed that the guide RNAs tested in published studies often had relatively low specificity scores compared to the genome-wide average, meaning the field has limited data on the off-target profiles of highly specific guides [9]. This highlights a potential blind spot that unbiased methods can help address.
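For readers less familiar with the AUC figure quoted above, the metric can be computed directly from its rank-based definition: the probability that a randomly chosen validated off-target receives a higher score than a randomly chosen false positive. The sketch below uses invented scores and labels purely for illustration; it is not the data from the cited study.

```python
def roc_auc(scores, labels):
    """Area under the ROC curve via pairwise comparison (ties count as 0.5)."""
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in positives:
        for q in negatives:
            if p > q:
                wins += 1.0
            elif p == q:
                wins += 0.5
    return wins / (len(positives) * len(negatives))

# Toy predictor scores: label 1 = validated off-target, 0 = false positive.
scores = [0.9, 0.8, 0.35, 0.6, 0.2, 0.1]
labels = [1, 1, 1, 0, 0, 0]
auc = roc_auc(scores, labels)
print(round(auc, 3))
```

The pairwise form is quadratic in the number of sites, which is fine for illustration; sorting-based implementations are preferable for large candidate lists.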

The table below provides a detailed comparison of major unbiased detection methods.

Table 2: Comparison of Major Unbiased, Genome-Wide Off-Target Detection Methods

| Method | Detection Principle | Key Advantage | Key Limitation | Reported Sensitivity |

|---|---|---|---|---|

| GUIDE-seq [3] [25] | DSB repair product capture | High efficiency in detecting off-targets in living cells; does not require specific antibodies | Relies on oligonucleotide uptake and NHEJ efficiency; potential for false positives from random integration | High (detects low-frequency events) |

| CIRCLE-seq [3] [25] | In vitro DSB enrichment | Extremely sensitive; works on purified DNA without cellular constraints | An in vitro method; may detect biologically irrelevant sites due to absence of cellular context (e.g., chromatin) | Very High |

| DISCOVER-seq (MRE11 ChIP-seq) [3] [25] | DSB recruitment of repair protein MRE11 | Detects breaks in native cellular and in vivo contexts; uses endogenous repair machinery | Requires specific antibodies for MRE11; temporal resolution is critical as recruitment is transient | ~0.1–0.2% (similar to WGS assays) [9] |

| BLESS [25] | Direct DSB capture with biotinylated linkers | A "snapshot" of active DSBs at a fixed time point | Does not capture already repaired DSBs; efficiency can be influenced by chromatin accessibility | N/A |

| IDLV Capture [24] [25] | DSB repair product capture via viral vector integration | Highly efficient at entering hard-to-transfect cells (e.g., primary cells) | Potential for false positives from the random integration of the lentivirus | N/A |

| ChIP-seq (dCas9) [24] | Cas9 protein-DNA binding | Maps all potential binding sites of a gRNA-Cas9 pair | Binding does not always result in cleavage; can over-predict functional off-target sites [24] | N/A |

| AID-seq [26] | Adapter-mediated DSB identification | High sensitivity and specificity; can be run in a high-throughput, pooled manner for many gRNAs | An in vitro method | Reported as highly sensitive and specific [26] |

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful off-target assessment requires specific reagents and tools. The following table lists key solutions utilized in the featured experiments.

Table 3: Key Research Reagent Solutions for Off-Target Detection

| Reagent / Solution | Function in Experiment | Example Use Case |

|---|---|---|

| Catalytically Inactive Cas9 (dCas9) | Binds DNA at gRNA-specified sites without cleaving it, allowing for mapping of binding sites. | ChIP-seq for unbiased detection of Cas9 binding loci [24]. |

| Integrase-Deficient Lentiviral Vector (IDLV) | Integrates into DSBs via NHEJ, serving as a molecular tag for the break site. | IDLV capture for unbiased detection of DSB repair products in target cells [24] [25]. |

| Double-Stranded Oligodeoxynucleotide (dsODN) | A short, defined DNA molecule that integrates into DSBs during repair. | Serves as the tag in GUIDE-seq for genome-wide amplification and sequencing of off-target sites [3]. |

| MRE11 Antibody | Binds to the MRE11 protein, a key early responder in the DNA damage repair pathway. | Immunoprecipitation of Cas9-induced DSBs in the DISCOVER-seq method [25]. |

| High-Fidelity PCR Kit | Amplifies specific genomic regions or captured DNA fragments with low error rates. | Validation of predicted off-target sites in biased methods; amplification of integrated tags in GUIDE-seq and IDLV [24]. |

| Cas9 Nuclease (Wild-type) | Generates DSBs at targeted and off-target genomic sites. | The core effector enzyme in all CRISPR-Cas9 editing and subsequent off-target detection experiments [24]. |

| Next-Generation Sequencing (NGS) Library Prep Kit | Prepares DNA fragments for high-throughput sequencing. | Essential for all unbiased methods and for deep sequencing of targeted amplicons in biased methods [25] [9]. |

The choice between biased and unbiased detection methods is not a matter of which is universally superior, but which is most appropriate for the specific research or development stage.

Biased, in silico prediction is highly efficient, cost-effective, and an indispensable first step during the guide RNA design phase. It allows researchers to rapidly screen and select gRNAs with the fewest predicted off-targets, significantly reducing the time spent on guide screening [9]. Its limitations must be acknowledged, as it may miss biologically relevant but sequence-dissimilar off-targets.

Unbiased, genome-wide assays are crucial for comprehensive safety profiling, especially in therapeutic development. Before clinical translation, it is imperative to employ methods like GUIDE-seq, DISCOVER-seq, or AID-seq to identify all potential off-target effects, including those that would be missed by computational tools alone [24] [26].

A robust strategy for critical applications, particularly in drug development, involves a complementary approach: using in silico tools to design the best possible guide RNAs, followed by thorough experimental validation with a sensitive, unbiased method to build a complete safety profile. The ongoing development of deep learning frameworks like CCLMoff, which are trained on diverse datasets from multiple unbiased detection technologies, promises to further enhance the accuracy of in silico predictions, bridging the gap between these two pivotal paradigms [3].
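One simple way to operationalize the complementary approach is to reconcile the predicted site list with the experimentally detected one. The sketch below is an assumption-laden illustration: sites are represented as `(chromosome, position)` tuples, matching is by exact coordinate, and the function name and example coordinates are hypothetical. In practice a tolerance window around each site would usually be needed.

```python
def reconcile_sites(predicted, detected):
    """Compare in silico predictions with sites found by an unbiased assay."""
    predicted, detected = set(predicted), set(detected)
    confirmed = predicted & detected
    return {
        "confirmed": sorted(confirmed),                   # predicted and observed
        "predicted_only": sorted(predicted - detected),   # not observed experimentally
        "detected_only": sorted(detected - predicted),    # missed by the prediction tool
        "recall": len(confirmed) / len(detected) if detected else None,
    }

predicted = [("chr1", 100), ("chr2", 200), ("chr3", 300)]
detected = [("chr1", 100), ("chr3", 300), ("chr7", 700)]
report = reconcile_sites(predicted, detected)
print(report["detected_only"], report["recall"])
```

The `detected_only` bucket is the one of most interest for safety profiling, since it lists sites that computational screening alone would have missed.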

The Critical Role of Prediction Tools in gRNA Design and Therapeutic Safety

The advent of CRISPR-Cas systems has revolutionized life sciences and therapeutic development, particularly for monogenic genetic diseases where it promises long-term therapeutic effects from a single intervention [3]. However, the clinical application of this powerful technology faces a significant bottleneck: the CRISPR-Cas9 system can tolerate mismatches and DNA/RNA bulges at target sites, leading to unintended off-target effects that pose substantial challenges for gene-editing therapy development [3]. These unintended edits can disrupt essential genes or activate oncogenes, creating critical safety risks for patients [2]. The precision of guide RNA (gRNA) design consequently emerges as the fundamental determinant of therapeutic safety and efficacy, driving the urgent need for advanced computational prediction tools that can accurately forecast both on-target efficiency and off-target activity prior to experimental validation.

The Evolution of CRISPR Prediction Tools

Early computational approaches for gRNA design relied primarily on alignment-based methods (e.g., Cas-OFFinder) and formula-based scoring systems (e.g., MIT CRISPR design tool) that incorporated mismatch patterns and positional weights [3]. While pioneering, these methods demonstrated limited accuracy in predicting the complex biological behavior of CRISPR systems. The field has since evolved through several generations of increasingly sophisticated approaches:

- Energy-based methods (e.g., CRISPRoff) introduced approximate binding energy models for the Cas9-gRNA-DNA chimeric complex [3]

- Traditional machine learning models began extracting patterns from growing experimental datasets

- Deep learning frameworks now represent the state-of-the-art, automatically learning genomic patterns from comprehensive training data [3]

The most recent transformation has been the integration of foundation models pre-trained on vast genomic datasets, enabling unprecedented prediction accuracy by leveraging fundamental knowledge of nucleic acid sequences and their biological properties [27] [28].

Cutting-Edge Tools: A Comparative Analysis

Next-Generation Off-Target Prediction Platforms

Table 1: Comparative Analysis of Advanced Off-Target Prediction Tools

| Tool Name | Core Methodology | Key Innovation | Training Data Scope | Performance Advantages |

|---|---|---|---|---|

| CCLMoff | Transformer-based RNA language model | Incorporates pretrained RNA-FM from RNAcentral | 13 genome-wide detection technologies; comprehensive, updated dataset | Superior cross-dataset generalization; captures seed region importance [3] |

| DNABERT-Epi | DNA foundation model + epigenetic features | Pre-trained on human genome; multi-modal integration | 7 off-target datasets; integrates H3K4me3, H3K27ac, ATAC-seq [28] | Competitive/superior performance vs. state-of-the-art; enhanced by epigenetics [28] |

| CRISPR-BERT/CrisprBERT | Transformer architecture | Applies natural language processing to DNA sequences | Various off-target datasets | Promising results in off-target prediction [28] |

Base Editing Prediction Tools

Table 2: Base Editing Prediction Tools Comparison

| Tool Name | Editor Specificity | Core Innovation | Training Data Strategy | Key Capability |

|---|---|---|---|---|

| CRISPRon-ABE | Adenine base editors (ABE7.10, ABE8e) | Deep CNN; dataset-aware training | Multiple datasets with origin labeling; SURRO-seq data (~11,500 gRNAs) [29] | Predicts efficiency and full spectrum of outcomes simultaneously [29] |

| CRISPRon-CBE | Cytosine base editors (BE4-Gam) | Incorporates molecular features | SURRO-seq, Song, Arbab datasets; HEK293T cells [29] | Addresses bystander edits; joint efficiency/outcome prediction [29] |

Emerging AI-Driven Design Assistants

Beyond specialized prediction tools, comprehensive AI assistants are emerging to streamline the entire experimental design process. CRISPR-GPT exemplifies this trend, functioning as a gene-editing "copilot" that helps researchers generate designs, analyze data, and troubleshoot flaws [30]. Trained on 11 years of expert discussions and scientific publications, this AI agent "thinks" like a scientist and can significantly reduce the trial-and-error typically required for CRISPR experimentation [30].

Experimental Protocols and Validation Methodologies

High-Quality Training Data Generation

The performance of predictive models depends critically on the quality and scope of their training data. Modern approaches employ several sophisticated experimental techniques:

SURRO-seq for Base Editing Analysis: This technology creates libraries pairing gRNAs with their target sequences integrated into the genome, enabling precise measurement of base-editing efficiency for thousands of gRNAs [29]. The protocol involves:

- Library construction with paired gRNA-target sequences

- Delivery to target cells (e.g., HEK293T)

- Sequencing and quality filtering to obtain robust efficiency measurements

- Specificity validation (ABE7.10 showed 97% adenine-to-guanine specificity; BE4 showed 92% cytosine-to-thymine specificity) [29]

Comprehensive Off-Target Detection Integration: CCLMoff was trained on 13 genome-wide deep sequencing techniques categorized into three methodological groups [3]:

- DNA binding detection: Extru-seq, SITE-seq

- DSB detection: CIRCLE-seq, DISCOVER-seq, CHANGE-seq, BLESS

- Repair product detection: GUIDE-seq, Digenome-seq, DIG-seq, IDLV, HTGTS, SURRO-seq

BreakTag for Nuclease Characterization: This recently developed scalable next-generation sequencing approach characterizes CRISPR-Cas9 nucleases and guide RNAs by enriching DNA double-strand breaks at on- and off-target sequences [31]. The complete protocol requires approximately 3 days and enables:

- Off-target nomination

- Nuclease activity assessment

- Scission profile characterization

- Companion tool BreakInspectoR for data analysis [31]

Model Architecture and Training Specifications

CCLMoff Framework:

- Adopts a question-answering framework where sgRNA sequence is the "question" and target site is the "answer" [3]

- Uses 12 transformer blocks initialized with RNA-FM model pretrained on 23 million RNA sequences [3]

- Incorporates epigenetic data (CTCF binding, H3K4me3, DNA methylation) via CNN encoding [3]

- Employs binary cross-entropy loss with AdamW optimizer and learning rate warm-up strategy [3]
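Two of the training ingredients listed above, binary cross-entropy loss and learning-rate warm-up, are easy to show in isolation. The sketch below is framework-free and illustrative only: the linear warm-up shape, the base learning rate, and the number of warm-up steps are assumptions, not values reported for CCLMoff.

```python
import math

def binary_cross_entropy(probs, labels, eps=1e-12):
    """Mean binary cross-entropy between predicted probabilities and 0/1 labels."""
    total = 0.0
    for p, y in zip(probs, labels):
        p = min(max(p, eps), 1.0 - eps)  # clamp to avoid log(0)
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(probs)

def warmup_lr(step, base_lr=1e-4, warmup_steps=1000):
    """Linear warm-up to `base_lr`, then constant (hypothetical schedule)."""
    if step < warmup_steps:
        return base_lr * (step + 1) / warmup_steps
    return base_lr

# A confident correct prediction is penalized far less than a confident wrong one.
print(binary_cross_entropy([0.9], [1]), binary_cross_entropy([0.9], [0]))
print(warmup_lr(0), warmup_lr(499), warmup_lr(5000))
```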

DNABERT-Epi Architecture:

- Builds on DNABERT foundation model pre-trained on human genome [28]

- Integrates 300-dimensional epigenetic feature vector (H3K4me3, H3K27ac, ATAC-seq) [28]

- Processes epigenetic signals within 1000bp window centered on cleavage site [28]

- Uses random negative class downsampling to address data imbalance [28]

Performance Benchmarking and Clinical Validation

Quantitative Performance Metrics

Rigorous benchmarking demonstrates the superior performance of modern AI-driven tools:

CCLMoff demonstrated "strong cross-dataset generalization ability" across various next-generation sequencing-based detection datasets, accurately identifying off-target sites while capturing the biological importance of the seed region [3].

DNABERT-Epi achieved "competitive or even superior performance" compared to five state-of-the-art methods across seven distinct off-target datasets [28]. Ablation studies quantitatively confirmed that both genomic pre-training and epigenetic integration significantly enhance predictive accuracy.

CRISPRon-ABE/CBE demonstrated "consistent superiority" over existing methods including DeepABE/CBE, BE-HIVE, BE-DICT, BE_Endo, and BEDICT2.0 when tested on independent datasets [29]. The dataset-aware training approach provided approximately 10% performance improvement compared to non-labeled training.

Clinical Workflow Integration

AI-Enhanced gRNA Design Workflow for Therapeutic Development

Table 3: Key Research Reagent Solutions for gRNA Design and Validation

| Resource Category | Specific Tools/Platforms | Function and Application |

|---|---|---|

| Engineered Nucleases | hfCas12Max, eSpOT-ON (ePsCas9), SaCas9 variants [32] | High-specificity editing; reduced off-target activity; tailored PAM recognition |

| Off-Target Detection | GUIDE-seq, CIRCLE-seq, DISCOVER-seq, CHANGE-seq [3] [2] | Genome-wide identification of off-target sites; different detection principles |

| Analysis Software | ICE (Inference of CRISPR Edits), BreakInspectoR [2] [31] | Analysis of editing efficiencies; off-target nomination; data interpretation |

| AI Design Platforms | CRISPR-GPT, Agent4Genomics website [30] | AI-assisted experimental design; troubleshooting; knowledge integration |

| Data Resources | RNAcentral, Gene Expression Omnibus (GEO) [3] [28] | Source of pre-training data; epigenetic information (e.g., H3K4me3, ATAC-seq) |

Future Directions and Implementation Recommendations

The integration of artificial intelligence with CRISPR technology represents a paradigm shift in therapeutic development. Foundation models pre-trained on genomic sequences demonstrate that large-scale biological knowledge significantly enhances prediction accuracy [28]. The emerging trend of multi-modal integration combining sequence information with epigenetic context further refines these predictions [3] [28]. For therapeutic applications, we recommend:

- Tool Selection Strategy: Employ DNABERT-Epi or CCLMoff for off-target prediction in protein-coding regions, complemented by CRISPRon-ABE/CBE for base editing applications

- Experimental Validation: Utilize CHANGE-seq or GUIDE-seq for comprehensive off-target profiling in clinically relevant cell types

- Risk Mitigation: Implement multiple prediction tools to cross-validate results before proceeding to costly clinical development stages

- Emerging Solutions: Monitor developments in novel delivery systems (e.g., LNPs) that enable re-dosing and potentially alter risk-benefit calculations for off-target editing [33]

As AI-driven tools continue to evolve, they promise to transform CRISPR-based therapeutic development from a trial-and-error process to a precise, predictable engineering discipline, ultimately accelerating the delivery of safe, effective genetic therapies to patients.

A Landscape of Prediction Methodologies: From Alignment-Based to AI-Driven Tools

The advent of CRISPR/Cas9 genome editing has revolutionized biological research and therapeutic development. However, its clinical application is hindered by off-target effects, where the Cas9 enzyme cleaves unintended sites in the genome. Accurately predicting these effects is crucial for designing safe and effective guide RNAs (sgRNAs). Computational methods for off-target prediction have evolved significantly, forming a distinct taxonomy that reflects broader patterns in computational biology. This guide provides a systematic comparison of these methods—alignment-based, formula-based, energy-based, and learning-based—framed within the context of evaluating on-target and off-target prediction tools for researchers and drug development professionals.

A Taxonomy of Computational Methods

Computational approaches for off-target prediction can be categorized into four distinct groups based on their underlying principles and operational mechanisms [3].

Alignment-Based Methods: These were among the first computational techniques developed for off-target prediction. They function by identifying genomic sequences similar to the intended target site of the sgRNA. Tools like Cas-OFFinder, CHOPCHOP, and GT-Scan employ various alignment algorithms to efficiently scan the entire genome for potential off-target sites, primarily focusing on mismatch patterns between the sgRNA and DNA [3].

Formula-Based Methods: This category improves upon simple alignment by incorporating weighted scoring schemes. Tools such as CCTop and MIT assign different penalty weights to mismatches occurring in the PAM-distal region versus the PAM-proximal region, aggregating these contributions to calculate a final off-target score [3].

Energy-Based Methods: These approaches, including CRISPRoff, model the physical interactions within the Cas9-gRNA-DNA complex. They present an approximate binding energy model for the chimeric complex, using thermodynamic principles to predict the likelihood of cleavage at off-target sites [3].

Learning-Based Methods: Representing the state-of-the-art, these methods use machine learning to automatically extract sequence patterns and features from training data. DeepCRISPR, CRISPR-Net, and the more recent CCLMoff and DNABERT-Epi fall into this category. They typically demonstrate superior performance by learning complex, non-linear relationships from comprehensive datasets [3] [23].
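To make the formula-based category concrete, the sketch below scores a candidate site by multiplying position-dependent mismatch penalties. The weights are hypothetical, chosen only so that PAM-proximal mismatches are penalized more than PAM-distal ones; they are not the published MIT or CCTop parameters, and the sequences are invented.

```python
def position_weighted_score(guide, site, weights=None):
    """Return a score in (0, 1]; 1.0 means the site matches the guide exactly.

    Sequences are written 5'->3' with the PAM on the 3' side, so the last
    index is the PAM-proximal position.
    """
    n = len(guide)
    if len(site) != n:
        raise ValueError("guide and site must have the same length")
    if weights is None:
        # Illustrative linear ramp: 0.045 at the PAM-distal end, 0.9 next to the PAM.
        weights = [0.9 * (i + 1) / n for i in range(n)]
    score = 1.0
    for g, s, w in zip(guide, site, weights):
        if g != s:
            score *= (1.0 - w)  # each mismatch scales the score down
    return score

guide = "GACGTTACCGGATCAAGCTT"
distal_mismatch = "TACGTTACCGGATCAAGCTT"    # mismatch at position 1 (PAM-distal)
proximal_mismatch = "GACGTTACCGGATCAAGCTA"  # mismatch at position 20 (PAM-proximal)

print(position_weighted_score(guide, guide))
print(position_weighted_score(guide, distal_mismatch))
print(position_weighted_score(guide, proximal_mismatch))
```

Learning-based methods can be read as replacing this hand-set weight vector with parameters fitted to experimental off-target data.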

Comparative Performance Analysis

The table below summarizes the key characteristics and reported performance of major off-target prediction tools across different methodological categories.

Table 1: Performance Comparison of Off-Target Prediction Methods

| Method Name | Category | Key Features | Reported Performance | Limitations |

|---|---|---|---|---|

| Cas-OFFinder [3] | Alignment-based | Genome-wide scanning, considers mismatches & bulges | Foundational for candidate site identification | Limited predictive accuracy, no integrated scoring |

| CCTop [3] | Formula-based | Position-specific mismatch weighting | Improved over basic alignment | Lacks complex sequence context understanding |

| CRISPRoff [3] | Energy-based | Approximates Cas9-gRNA-DNA binding energy | Incorporates biophysical principles | Model may be an oversimplification of complex biology |

| CCLMoff [3] | Learning-based | Transformer architecture, pre-trained RNA language model (RNA-FM), trains on 13 detection techniques | Strong generalization across diverse NGS datasets, captures seed region importance | Model interpretation can be complex |

| DNABERT-Epi [34] [23] | Learning-based | Pre-trained DNA foundation model (DNABERT), integrates epigenetic features (H3K4me3, H3K27ac, ATAC-seq) | Competitive/superior to state-of-the-art; ablation studies confirm value of pre-training and epigenetics | Requires more computational resources and data preprocessing |

Insights from Benchmarking Studies

Benchmarking reveals that learning-based methods, particularly those leveraging pre-trained models and epigenetic data, consistently achieve superior performance. For instance, DNABERT-Epi was benchmarked against five state-of-the-art methods across seven distinct off-target datasets. Rigorous ablation studies quantitatively confirmed that both genomic pre-training and the integration of epigenetic features are critical factors that significantly enhance predictive accuracy [23]. Similarly, CCLMoff demonstrated strong cross-dataset generalization, a common challenge for models trained on limited datasets [3].

Experimental Protocols and Methodologies

Dataset Curation and Preprocessing

A critical factor in developing robust learning-based models is the use of comprehensive, high-quality datasets. The following protocol is representative of modern approaches [3] [23]:

- Data Source Integration: Curate a comprehensive off-target dataset from multiple genome-wide deep sequencing techniques (e.g., GUIDE-seq, CIRCLE-seq, CHANGE-seq). This forces the model to learn general off-target patterns rather than features specific to a single detection assay.

- Negative Sample Construction: Use a tool like Cas-OFFinder to generate negative samples (non-off-target sites) by imposing constraints on the number of mismatches and bulges. This provides challenging negative examples and reduces the sampling space.

- Data Imbalance Handling: Address severe class imbalance (few positive off-target sites vs. many negatives) through techniques like random downsampling of the majority class in the training data. Test sets should remain unaltered for unbiased evaluation.

- Epigenetic Data Integration (for multi-modal models): For models like DNABERT-Epi, epigenetic data (e.g., H3K4me3, H3K27ac, ATAC-seq) must be processed [23]:

  - Extraction: Obtain signal values within a 1000 bp window centered on the potential cleavage site.

  - Normalization: Cap outlier signals and apply Z-score transformation across the dataset.

  - Binning: Divide the window into bins (e.g., 100 bins of 10 bp) and average the signal per bin to create a fixed-length feature vector.
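The three epigenetic processing steps can be sketched in a few lines of Python. This is a minimal illustration, not the DNABERT-Epi implementation: the function names, the per-base `track` list, the zero padding at track edges, and the `cap` value are all assumptions made for the example, since the source only states that outliers are capped and signals are Z-scored across the dataset.

```python
from statistics import mean, pstdev


def extract_window(track, cut_site, window=1000):
    """Return `window` per-base signal values centered on `cut_site`."""
    half = window // 2
    # Assumption: positions that fall off either end of the track are padded with 0.0.
    return [track[i] if 0 <= i < len(track) else 0.0
            for i in range(cut_site - half, cut_site + half)]


def normalize_dataset(windows, cap):
    """Cap each value at `cap`, then Z-score using the mean/std of all windows."""
    capped = [[min(v, cap) for v in w] for w in windows]
    flat = [v for w in capped for v in w]
    mu, sigma = mean(flat), pstdev(flat)
    if sigma == 0:
        return [[0.0] * len(w) for w in capped]
    return [[(v - mu) / sigma for v in w] for w in capped]


def bin_window(values, n_bins=100):
    """Average a window into `n_bins` equal-width bins (fixed-length vector)."""
    if len(values) % n_bins:
        raise ValueError("window length must be divisible by n_bins")
    width = len(values) // n_bins
    return [mean(values[i:i + width]) for i in range(0, len(values), width)]


def epigenetic_features(track, cut_sites, cap, window=1000, n_bins=100):
    """Extraction, normalization, and binning for one signal track."""
    windows = [extract_window(track, c, window) for c in cut_sites]
    return [bin_window(w, n_bins) for w in normalize_dataset(windows, cap)]
```

With the defaults, each candidate site yields a 100-element vector per epigenetic mark (100 bins of 10 bp); vectors from several marks would then be stacked or concatenated before being passed to the model.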

Model Training and Evaluation

- Architecture Design: For a transformer-based model like CCLMoff, the input consists of the sgRNA sequence and a candidate DNA site (converted to pseudo-RNA). These are tokenized and fed into an encoder of transformer blocks, initialized with a pre-trained model like RNA-FM [3]. The final hidden state of a [CLS] token is used for the final prediction via a Multilayer Perceptron (MLP).

- Training Strategy: Employ a two-stage fine-tuning process, especially for foundation models. Use a small learning rate for the pre-trained transformer blocks and a larger one for the task-specific MLP head. Use a binary cross-entropy loss function.

- Rigorous Evaluation: Implement a strict cross-validation scheme where no perturbation condition (sgRNA) is shared between training and test sets. Performance should be evaluated on multiple held-out datasets to truly assess generalization ability.
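The guide-level split described under Rigorous Evaluation can be sketched as follows. This is a hedged, standard-library illustration: `guide_grouped_folds` is a hypothetical helper, and the greedy size-balancing heuristic is an assumption rather than the procedure used in [3] or [23]. Libraries such as scikit-learn provide a comparable splitter (`GroupKFold`).

```python
from collections import defaultdict


def guide_grouped_folds(sgrnas, n_folds=5):
    """Yield (train_idx, test_idx) pairs in which no sgRNA appears on both sides.

    `sgrnas[i]` is the guide sequence (or ID) associated with example i.
    """
    by_guide = defaultdict(list)
    for idx, guide in enumerate(sgrnas):
        by_guide[guide].append(idx)
    if len(by_guide) < n_folds:
        raise ValueError("need at least as many distinct sgRNAs as folds")

    # Assign whole guides to folds, largest guide first, always filling the
    # currently smallest fold so that fold sizes stay roughly balanced.
    folds = [[] for _ in range(n_folds)]
    for guide in sorted(by_guide, key=lambda g: (-len(by_guide[g]), g)):
        min(folds, key=len).extend(by_guide[guide])

    for k in range(n_folds):
        test = sorted(folds[k])
        train = sorted(i for j, fold in enumerate(folds) if j != k for i in fold)
        yield train, test
```

Because every candidate site for a given sgRNA lands in the same fold, test-set performance reflects behavior on guides the model has never seen, which is the generalization property the protocol asks for.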

Visualization of Workflows

Taxonomy and Model Architecture

The following diagram illustrates the logical relationship between the four methodological categories and the typical architecture of an advanced learning-based model.

The development and application of modern off-target prediction tools rely on a suite of key datasets, software, and genomic resources.

Table 2: Key Research Reagents and Resources for Off-Target Tool Development

| Resource Name | Type | Primary Function in Research | Relevance |

|---|---|---|---|

| GUIDE-seq [3] | Experimental Dataset | Genome-wide, in cellula detection of DSB repair products. | Provides high-quality, biologically relevant training and validation data. |

| CIRCLE-seq [3] | Experimental Dataset | In vitro, high-sensitivity detection of DSBs. | Useful for comprehensive profiling of potential off-target sites without cellular context. |

| CHANGE-seq [23] | Experimental Dataset | In vitro detection method for DSBs. | Often used as a large-scale dataset for initial model training. |

| RNA-FM [3] | Pre-trained Model | A foundation model pre-trained on 23 million RNA sequences from RNAcentral. | Provides robust sequence feature extraction for models like CCLMoff. |

| DNABERT [23] | Pre-trained Model | A BERT-based model pre-trained on the human genome. | Enables understanding of fundamental DNA "language" for sequence-based prediction. |

| Cas-OFFinder [3] | Software Tool | Genome-wide search for potential off-target sites. | Used for generating candidate sites and constructing negative datasets for training. |

| Epigenetic Marks (H3K4me3, H3K27ac) [23] | Genomic Data | Histone modification marks indicating active promoters and enhancers. | Integrated into multi-modal models (DNABERT-Epi) to improve in cellula prediction. |

| ATAC-seq Data [23] | Genomic Data | Assay for Transposase-Accessible Chromatin, measuring open chromatin regions. | Provides critical information on chromatin accessibility, a key factor influencing Cas9 activity. |

The taxonomy of computational methods for off-target prediction showcases a clear trajectory from simple pattern matching (alignment-based) towards increasingly sophisticated, context-aware artificial intelligence (learning-based). Current state-of-the-art approaches, such as CCLMoff and DNABERT-Epi, leverage pre-trained foundation models on vast genomic corpora and integrate multi-modal data like epigenetic features. Benchmarking studies confirm that these advanced learning-based methods offer superior accuracy and, crucially, better generalization across diverse experimental conditions. For researchers and drug developers, this evolution means that modern tools are becoming increasingly reliable for the critical task of designing safer CRISPR/Cas9-based therapeutics, though careful attention must be paid to their experimental validation and application context.

The CRISPR/Cas9 system has revolutionized life and medical sciences, particularly for treating monogenic genetic diseases by enabling long-term therapeutic effects from a single intervention [3]. However, the clinical application of this powerful genome-editing tool is hampered by off-target effects, where the Cas9 nuclease cleaves unintended genomic sites with sequence similarity to the intended target [23]. These unintended edits can disrupt normal cellular functions, confound experimental results, and pose significant safety concerns in therapeutic contexts, potentially leading to the disruption of essential genes or activation of oncogenes [2]. The need for precise off-target prediction has become increasingly urgent with the recent FDA approval of the first CRISPR-based therapy, exa-cel (CASGEVY), for sickle cell disease, as regulatory agencies now emphasize thorough off-target characterization in preclinical and clinical studies [35].

Traditional computational methods for predicting off-target effects have evolved from simple alignment-based approaches to more sophisticated hypothesis-driven and energy-based models [36]. While these tools provided valuable initial frameworks, they often demonstrated limited generalization capability and performed poorly on unseen guide RNA (gRNA) sequences [3] [37]. The emergence of deep learning has marked a significant paradigm shift, with models like CCLMoff and CRISPR-Embedding leveraging advanced neural network architectures to achieve unprecedented prediction accuracy and generalization across diverse datasets. This comparison guide objectively evaluates these innovative deep learning approaches against traditional methods and each other, providing researchers and drug development professionals with critical insights for selecting appropriate tools for their therapeutic genome editing pipelines.

Traditional Approaches to CRISPR Off-Target Prediction: Establishing the Baseline

Before the current generation of language-model-based tools, computational methods for CRISPR off-target prediction fell into four categories: alignment-based, hypothesis-driven, energy-based, and early learning-based approaches [36]. Alignment-based tools like Cas-OFFinder employed genome-wide scanning with constraints on mismatch numbers and positions to identify potential off-target sites [3] [36]. Hypothesis-driven methods such as Cutting Frequency Determination (CFD) and MIT scoring assigned position-specific weights to mismatches based on experimental data, aggregating these contributions to generate off-target propensity scores [9]. Energy-based approaches like CRISPR-OFF approximated the binding energy of the Cas9-gRNA-DNA complex to predict cleavage likelihood [36].
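To make the difference between alignment-based filtering and hypothesis-driven scoring concrete, the sketch below counts mismatches and then multiplies a per-position tolerance for each mismatch. The tolerance values in `TOY_TOLERANCE` are invented for illustration only; they are not the published CFD or MIT weights, and the real CFD matrix additionally depends on the specific mismatched base pair and the PAM.

```python
def count_mismatches(guide, site):
    """Alignment-style check: number of mismatched positions."""
    if len(guide) != len(site):
        raise ValueError("guide and site must have the same length")
    return sum(g != s for g, s in zip(guide.upper(), site.upper()))


def passes_alignment_filter(guide, site, max_mismatches=4):
    """Keep a candidate site only if it is within the mismatch budget."""
    return count_mismatches(guide, site) <= max_mismatches


# Hypothetical tolerances for a 20-nt guide, index 0 = PAM-distal end.
# Values fall toward the PAM-proximal end, where mismatches are generally
# less tolerated. These numbers are illustrative, not experimental.
TOY_TOLERANCE = [round(0.9 - 0.04 * i, 2) for i in range(20)]


def weighted_score(guide, site, tolerance=TOY_TOLERANCE):
    """Hypothesis-driven style score: product of tolerances at mismatches."""
    if not (len(guide) == len(site) == len(tolerance)):
        raise ValueError("guide, site, and tolerance must have equal length")
    score = 1.0
    for pos, (g, s) in enumerate(zip(guide.upper(), site.upper())):
        if g != s:
            score *= tolerance[pos]
    return score
```

The alignment filter answers only whether a site is a candidate, while the weighted score ranks candidates, which mirrors the "limited ranking capability" limitation listed for alignment-based tools in Table 1.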

While these traditional methods established the foundation for off-target prediction, they faced significant limitations. Their performance often degraded when applied to gRNAs with high GC content or unusual mismatch patterns not well-represented in their training data [9]. Additionally, many early tools struggled to capture the complex interplay between sequence features, epigenetic factors, and cellular context that influence Cas9 binding and cleavage efficiency [23]. Comprehensive benchmarking studies revealed that while sequence-based off-target predictions could identify most off-targets with mutation rates above 0.1%, they generated substantial false positives that required additional filtering through score cutoffs [9].

Table 1: Categories of Traditional CRISPR Off-Target Prediction Tools

| Category | Representative Tools | Underlying Principle | Key Limitations |

|---|---|---|---|

| Alignment-based | Cas-OFFinder, CHOPCHOP, GT-Scan | Genome-wide search with mismatch constraints | Limited ranking capability; no cleavage likelihood prediction |

| Hypothesis-driven | CFD, MIT, CCTop | Position-specific mismatch weights based on experimental data | Limited generalization to unseen gRNA patterns |

| Energy-based | CRISPR-OFF, uCRISPR | Binding energy approximation of Cas9-gRNA-DNA complex | Computational intensity; simplified energy models |

| Early Learning-based | DeepCRISPR, CRISPR-Net | Feature extraction from training data using deep learning | Limited by training data scope and size |

Deep Learning Revolution: Architectural Breakthroughs in CCLMoff and CRISPR-Embedding

CCLMoff: Leveraging RNA Language Models for Enhanced Generalization

CCLMoff represents a significant architectural advancement by incorporating a pretrained RNA language model initialized from RNA-FM, which was pretrained on 23 million RNA sequences from RNAcentral [3] [38]. This approach allows the model to capture mutual sequence information between single-guide RNAs (sgRNAs) and target sites by understanding the "language" of RNA sequences. The framework formulates off-target prediction as a question-answering task, where the sgRNA sequence serves as the question stem and the candidate target site acts as the answer [3].

The model architecture employs 12 transformer blocks with a multi-head attention mechanism that enables effective information processing and contextual feature extraction between sgRNAs and target sites [3]. The input embeddings of the sgRNA and the pseudo-RNA candidate (DNA sequence with thymine replaced by uracil) are processed through these transformer blocks, with a special [SEP] token delimiting their discontinuity. For the final classification, the hidden state of the [CLS] token from the final layer is fed into a Multilayer Perceptron (MLP) to generate the off-target likelihood score [3]. An enhanced version, CCLMoff-Epi, further incorporates epigenetic features including CTCF binding information, H3K4me3 histone modification, chromatin accessibility, and DNA methylation using a convolutional neural network (CNN), with the resulting representation concatenated with the language model output [3].
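The input construction described above can be illustrated with a short sketch. It assumes one token per nucleotide and a `[CLS] sgRNA [SEP] candidate` ordering; the helper names are hypothetical, and the exact tokenizer and special-token layout used by CCLMoff and RNA-FM may differ.

```python
def to_pseudo_rna(dna):
    """Convert a candidate DNA site to pseudo-RNA by replacing T with U."""
    return dna.upper().replace("T", "U")


def build_input_tokens(sgrna, candidate_dna):
    """Assemble the token sequence for one (sgRNA, candidate site) pair.

    Assumption: single-nucleotide tokens, with [CLS] first and [SEP]
    separating the sgRNA ("question") from the candidate site ("answer").
    """
    # Accept an sgRNA written in the DNA alphabet by normalizing T to U.
    guide = sgrna.upper().replace("T", "U")
    return ["[CLS]", *guide, "[SEP]", *to_pseudo_rna(candidate_dna)]


tokens = build_input_tokens("GAUC", "GATC")
```

In a full model, each token would be mapped to an embedding, passed through the transformer blocks, and the final-layer hidden state at the `[CLS]` position would be fed to the MLP classifier.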

CRISPR-Embedding: DNA k-mer Embeddings with Convolutional Neural Networks

CRISPR-Embedding employs a different deep learning strategy based on a 9-layer Convolutional Neural Network (CNN) that utilizes DNA k-mer embeddings for effective sequence representation [39]. This approach treats DNA sequences as textual data, where k-mers (subsequences of length k) are analogous to words in natural language processing. The model learns meaningful vector representations of these k-mers through an embedding layer, which are then processed by convolutional layers to detect relevant motifs and patterns indicative of off-target activity [39].
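A minimal sketch of k-mer tokenization, the step that precedes the embedding layer, is shown below. The choice of `k=3`, a stride of 1, and the lexicographic vocabulary ordering are assumptions for illustration; the source does not state the values used by CRISPR-Embedding.

```python
from itertools import product


def kmers(seq, k=3, stride=1):
    """Split a sequence into overlapping k-mers ("words")."""
    return [seq[i:i + k] for i in range(0, len(seq) - k + 1, stride)]


def build_vocab(k=3, alphabet="ACGT"):
    """Map every possible k-mer over the alphabet to an integer index."""
    return {"".join(p): i for i, p in enumerate(product(alphabet, repeat=k))}


def encode(seq, vocab, k=3):
    """Turn a DNA sequence into the index sequence an embedding layer expects."""
    return [vocab[kmer] for kmer in kmers(seq.upper(), k)]
```

Each index would then select a learned vector from the embedding table, and the resulting matrix (one row per k-mer) is what the convolutional layers scan for motifs.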

To address the significant class imbalance inherent in off-target datasets (where positive off-target sites are vastly outnumbered by negative sites), CRISPR-Embedding implements data augmentation and under-sampling strategies, resulting in a cleaner, more balanced dataset for training [39]. The CNN architecture progressively learns hierarchical features from the embedded k-mer sequences, with lower layers detecting simple nucleotide patterns and higher layers combining these into more complex representations predictive of Cas9 binding and cleavage. Through 5-fold cross-validation, this approach achieved a notable average accuracy of 94.07%, demonstrating superior performance over existing state-of-the-art methods available at the time of its publication [39].
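Random under-sampling of the majority class can be sketched as follows. The function name, the `ratio` parameter, and the `(features, label)` tuple layout are assumptions for this example; it illustrates the general technique rather than the specific augmentation and sampling pipeline of [39].

```python
import random


def undersample_negatives(examples, ratio=1.0, seed=0):
    """Randomly drop negatives until there are at most `ratio` per positive.

    `examples` is a list of (features, label) pairs, where label 1 marks a
    validated off-target site and label 0 a non-off-target candidate.
    """
    positives = [e for e in examples if e[1] == 1]
    negatives = [e for e in examples if e[1] == 0]
    n_keep = min(len(negatives), int(len(positives) * ratio))

    rng = random.Random(seed)  # fixed seed keeps the subset reproducible
    balanced = positives + rng.sample(negatives, n_keep)
    rng.shuffle(balanced)
    return balanced
```

Under-sampling of this kind is normally applied to the training split only, so that evaluation still reflects the original class distribution.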

Comparative Performance Analysis: Benchmarking Deep Learning Against Traditional Methods

Quantitative Performance Metrics Across Diverse Datasets

Comprehensive benchmarking studies demonstrate the superior performance of deep learning models compared to traditional approaches across multiple evaluation metrics. CCLMoff showed strong generalization capabilities across diverse next-generation sequencing (NGS)-based detection datasets, outperforming existing models in various scenarios [3] [37]. The incorporation of pretrained language models and epigenetic features provided significant enhancements in predictive accuracy, with CCLMoff accurately identifying off-target sites and demonstrating robust cross-dataset performance [3].

CRISPR-Embedding reported an average accuracy of 94.07% under 5-fold cross-validation, surpassing the state-of-the-art methods it was compared against at the time of publication [39]. The model's use of DNA k-mer embeddings and its handling of class imbalance contributed to this result, allowing it to capture sequence determinants of off-target activity while mitigating biases from unbalanced training data.

Table 2: Performance Comparison of Off-Target Prediction Tools

| Tool | Underlying Architecture | Reported Performance | Key Advantages | Limitations |

|---|---|---|---|---|

| CCLMoff | Transformer with pretrained RNA language model | Superior generalization across NGS datasets [3] | Captures mutual sequence information; strong cross-dataset performance | Computational intensity for training |

| CRISPR-Embedding | 9-layer CNN with DNA k-mer embeddings | 94.07% (5-fold cross-validation) [39] | Effective handling of class imbalance; hierarchical feature learning | Limited incorporation of epigenetic context |

| DNABERT-Epi | BERT-based DNA model with epigenetic features | Competitive/superior to state-of-the-art [23] | Integrates sequence and epigenetic features; model interpretability | Complex feature processing pipeline |

| CFD (Traditional) | Hypothesis-driven scoring | AUC: 0.91 [9] | Simple implementation; proven reliability | Limited to sequence features only |

| MIT (Traditional) | Hypothesis-driven scoring | AUC: 0.87 [9] | Established benchmark; widely adopted | Misses many off-target alignments |
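The table mixes accuracy and AUC values, which are not directly comparable, particularly on imbalanced off-target data. The sketch below computes both with the standard library to show how they can diverge; the helper names are hypothetical, and the AUC uses the pairwise (Mann-Whitney) formulation, which is quadratic in dataset size and intended only for small illustrations.

```python
def roc_auc(labels, scores):
    """Probability that a random positive outscores a random negative (ties count 0.5)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def accuracy(labels, scores, threshold=0.5):
    """Fraction of examples whose thresholded score matches the label."""
    correct = sum((s >= threshold) == bool(y) for y, s in zip(labels, scores))
    return correct / len(labels)


# One true off-target among 100 candidates, and a model that scores everything 0.0.
labels = [1] + [0] * 99
scores = [0.0] * 100
imbalanced_accuracy = accuracy(labels, scores)
imbalanced_auc = roc_auc(labels, scores)
```

In this toy case the uninformative model reaches 99% accuracy while its AUC is 0.5, which is why accuracy figures should be read together with the class balance of the evaluation set.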

Cross-Dataset Generalization and Robustness