Advances in Low-Abundance RNA Detection: From Foundational Concepts to Clinical Applications

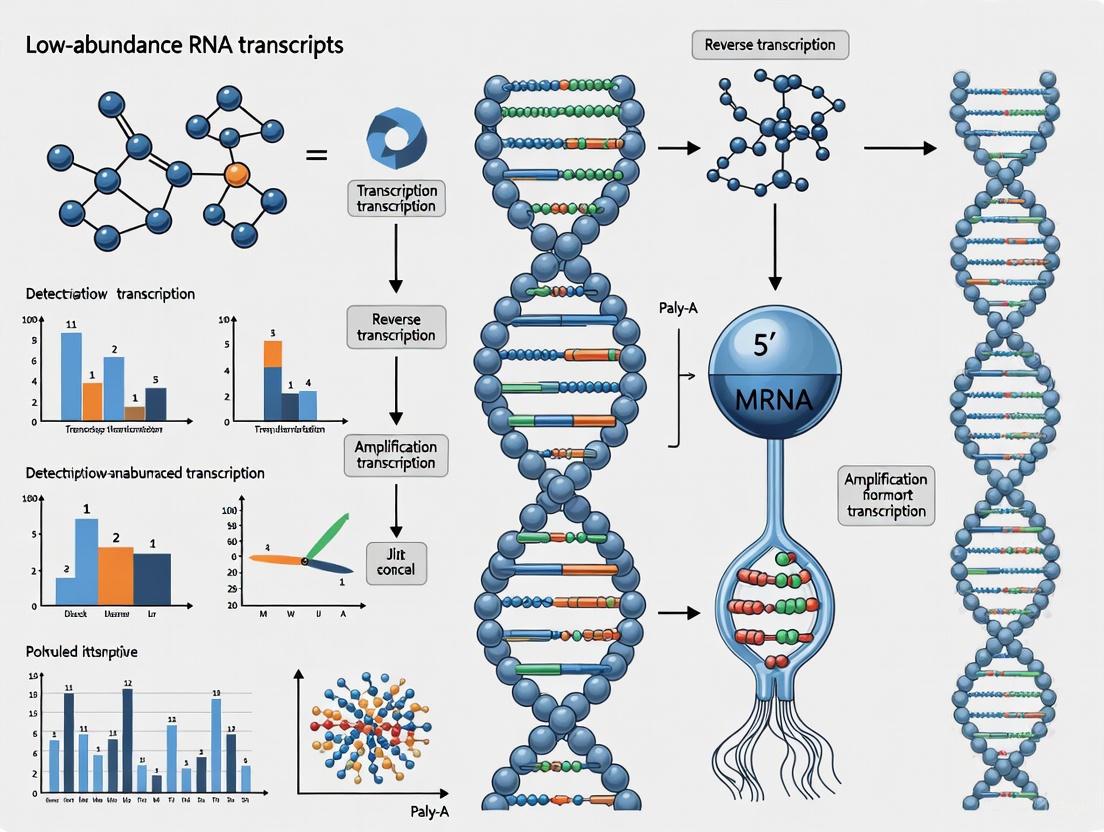


Abstract

Accurate detection and quantification of low-abundance RNA transcripts are pivotal for advancing molecular diagnostics, understanding complex diseases like cancer, and driving drug discovery. This article provides a comprehensive resource for researchers and drug development professionals, exploring the foundational challenges of low-abundance RNA, cutting-edge methodological solutions from ultra-deep sequencing to targeted amplification, critical optimization strategies for robust results, and frameworks for rigorous validation. By synthesizing the latest technological breakthroughs and comparative analyses, this review serves as a strategic guide for navigating the complexities of the low-abundance transcriptome.

The Critical Challenge and Expanding Universe of Low-Abundance RNAs

Low-abundance transcripts represent a functionally significant yet technically challenging class of RNA molecules that includes sparse messenger RNAs (mRNAs) and regulatory long non-coding RNAs (lncRNAs). Their accurate detection and quantification are paramount for advancing our understanding of gene regulation in development, disease, and cellular response mechanisms. These transcripts, often characterized by quantification cycle (Cq) values above 30 in RT-qPCR assays or limited read counts in RNA-seq data, play disproportionate roles in critical biological processes despite their sparse expression [1] [2].

The technical definition of low-abundance transcripts varies by detection platform. In reverse transcription-quantitative real-time PCR (RT-qPCR), they typically yield Cq values exceeding 30-35, approaching the lower limit of reliable quantification [1] [3]. In RNA sequencing, they are characterized by low read counts, with one study defining them as transcripts below the 60th percentile of relative abundance, accounting for only 3% of total read counts despite comprising over 60% of detected transcripts [2]. These transcripts include key regulatory molecules such as transcription factors, alternative splicing isoforms, and lncRNAs that function as master regulators of downstream gene expression networks [2] [4].
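The percentile-based RNA-seq definition above can be made concrete with a short sketch. The function name, the input layout (a mapping from transcript ID to mean read count), and the toy counts are assumptions for illustration; the cited study's exact procedure may differ.

```python
from statistics import quantiles

def split_by_abundance(mean_counts, percentile=60):
    """Flag transcripts whose relative abundance falls below a percentile cutoff.

    mean_counts: dict mapping transcript ID -> mean read count (hypothetical layout).
    Returns (sorted low-abundance IDs, fraction of all reads they account for).
    """
    total = sum(mean_counts.values())
    # Relative abundance is each transcript's share of all reads.
    rel = {t: c / total for t, c in mean_counts.items()}
    # quantiles(n=100) returns the 1st..99th percentile cut points.
    cuts = quantiles(list(rel.values()), n=100, method="inclusive")
    cutoff = cuts[percentile - 1]
    low = sorted(t for t, r in rel.items() if r < cutoff)
    read_share = sum(rel[t] for t in low)
    return low, read_share

# Toy example: one dominant transcript and four sparse ones.
low_ids, share = split_by_abundance({"a": 1, "b": 2, "c": 3, "d": 4, "e": 990})
print(low_ids, round(share, 4))
```

In this toy input, most transcripts fall below the cutoff while contributing a very small share of reads, which mirrors the pattern described above.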

This technical guide examines the detection challenges, methodological innovations, and experimental considerations for studying these elusive transcripts, providing researchers with a comprehensive framework for advancing research in this critical area of molecular biology.

Characteristics and Detection Challenges

Defining Features of Low-Abundance Transcripts

Low-abundance transcripts share several distinguishing characteristics that contribute to both their functional significance and detection challenges. Long non-coding RNAs, a major category of low-abundance transcripts, are defined as RNA transcripts longer than 200 nucleotides that lack protein-coding capacity [5]. Unlike mRNAs, lncRNAs exhibit distinct molecular properties including fewer exons, shorter sequence length, lower GC content, and reduced evolutionary conservation [5] [4]. They are predominantly transcribed by RNA polymerase II, and while many are capped and polyadenylated, a subset are stabilized through secondary structures such as triple-helical formations at their 3' ends rather than polyadenylation [5].

These transcripts display stronger tissue-specific and cell-type-specific expression patterns compared to protein-coding genes, suggesting specialized roles in cellular processes [5] [6]. The advent of single-cell omics technologies has further highlighted the expression heterogeneity of lncRNAs and their importance in cellular identity and function [6]. Additionally, lncRNAs undergo extensive alternative splicing, dramatically increasing their potential isoform diversity and functional complexity beyond current annotations [5].

Technical Limitations in Detection

Conventional detection methods face significant challenges in accurately quantifying low-abundance transcripts. Standard RT-qPCR encounters sensitivity limitations as Cq values above 30-35 are often considered unreliable due to poor reproducibility and precision issues [1] [3]. This is particularly problematic for isoform-specific quantification where differential primer efficiency introduces amplification bias when comparing similar transcript variants [1] [3].

Table 1: Challenges in Detecting Low-Abundance Transcripts by Method

| Method | Primary Challenges | Impact on Low-Abundance Detection |
|---|---|---|
| RT-qPCR | Cq values >30-35 become unreliable; primer efficiency bias for isoforms [1] [3] | Limited sensitivity for transcripts <10 copies/cell; inaccurate isoform quantification |
| RNA-seq | Low read counts show high variability; requires deep sequencing [2] | 60% of transcripts may be low-count; high false-negative rate without sufficient depth |
| dPCR | Requires specialized instrumentation and reagents [3] | Improved sensitivity but limited accessibility and higher cost per sample |
| NanoString | Narrower dynamic range than RNA-seq [7] | Reduced sensitivity for very low-expressing genes |

For transcriptome-wide approaches, RNA sequencing struggles with the inherently noisy behavior of low-count transcripts, which exhibit large variability in logarithmic fold change estimates [2]. While methods like DESeq2 and edgeR robust attempt to address this through statistical moderation, accurate quantification of low-abundance isoforms still typically requires costly deep sequencing and complex bioinformatic analysis [3] [2]. Digital PCR improves sensitivity but requires specialized instrumentation and reagents, limiting its accessibility [3]. The NanoString nCounter system, while avoiding amplification bias through direct molecular barcoding, has a narrower dynamic range than RNA-seq, reducing its sensitivity for extremely low-expressing genes [7].

Methodological Approaches for Detection and Analysis

Targeted Amplification and Enrichment Strategies

STALARD: Selective Target Amplification

The STALARD method provides a targeted approach for detecting low-abundance polyadenylated transcripts that share a known 5'-end sequence. This two-step RT-PCR technique uses standard laboratory reagents to achieve rapid (<2 hours) pre-amplification specifically designed to overcome both sensitivity limitations and primer-induced bias in conventional RT-qPCR [1] [3].

The STALARD workflow employs a gene-specific primer (GSP) tailored to the 5'-end of the target RNA (with thymine replacing uracil) and a GSP-tailed oligo(dT) primer for reverse transcription. Following cDNA synthesis, limited-cycle PCR (<12 cycles) is performed using only the GSP, which anneals to both ends of the cDNA, specifically amplifying the target transcript without requiring a separate reverse primer [3]. This approach minimizes amplification bias while significantly enhancing detection sensitivity for low-abundance isoforms.
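To give a feel for what a limited number of pre-amplification cycles can do, the sketch below applies the textbook idealization that each PCR cycle multiplies the target by (1 + E), where E is the per-cycle efficiency. This is generic PCR arithmetic, not a measurement from [3]. It assumes constant efficiency, ignores purification losses and dilution, and assumes the downstream qPCR itself runs at 100% efficiency. The function names are hypothetical.

```python
import math

def preamp_fold(cycles, efficiency=1.0):
    """Idealized fold amplification after a number of cycles at constant efficiency."""
    return (1.0 + efficiency) ** cycles

def expected_cq_shift(cycles, efficiency=1.0):
    """Idealized reduction in downstream Cq, i.e. log2 of the fold amplification."""
    return cycles * math.log2(1.0 + efficiency)

for e in (1.0, 0.9):
    print(e, preamp_fold(10, e), expected_cq_shift(10, e))
```

Under these assumptions, a transcript that would otherwise sit near the unreliable Cq range moves several cycles earlier. Real gains depend on the primer, template, and clean-up steps.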

When applied to Arabidopsis thaliana, STALARD successfully amplified the low-abundance VIN3 transcript to reliably quantifiable levels and detected known splicing changes in FLM, MAF2, EIN4, and ATX2 isoforms during vernalization, including cases where conventional RT-qPCR failed [3]. The method also enabled consistent quantification of the extremely low-abundance antisense transcript COOLAIR and revealed novel COOLAIR polyadenylation sites when combined with nanopore sequencing [3].

Capture Sequencing and RNAscope

CaptureSeq represents a targeted RNA sequencing approach that uses hybridization-based enrichment to improve detection of low-abundance transcripts. This method employs custom capture probes to enrich for specific transcripts of interest prior to sequencing, significantly enhancing sensitivity compared to standard RNA-seq [8]. A recent application designed 565,878 capture probes for 49,372 human lncRNA genes, enabling detection of a more diverse repertoire of lncRNAs with better reproducibility and higher coverage across various sample types including formalin-fixed paraffin-embedded (FFPE) tissue and biofluids [9].

RNAscope represents an alternative non-PCR-based approach that utilizes a series of amplification steps to detect low-abundance RNAs with improved signal-to-noise ratio. This multiplexed RNA-FISH method is particularly valuable for investigating the regulation of low-abundance lncRNAs in situ and is suitable for high-throughput screening in 96-well plate formats [10]. The technique provides spatial context for RNA localization, which is critical for understanding lncRNA function, as their subcellular localization often determines their mechanistic roles [5].

Advanced Statistical Methods for RNA-seq Data

Statistical advances in processing RNA-seq data have provided alternative approaches for analyzing low-count transcripts without arbitrary filtering. Methods such as DESeq2 and edgeR robust employ sophisticated statistical frameworks to address the high variability inherent in low-count transcripts [2].

DESeq2 utilizes a generalized linear model based on the negative binomial distribution and implements information sharing across transcripts to moderate transcript-specific dispersion estimates. Crucially, it applies shrinkage to logarithmic fold change (LFC) estimates in a manner inversely proportional to the amount of information available for a transcript, preventing overinterpretation of variable estimates from low-count genes [2].
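The idea of shrinking fold changes more strongly when less information is available can be illustrated with a deliberately simplified normal-prior calculation. This is a toy model, not DESeq2's implementation: the function name, the zero-centered prior, and the default prior width are assumptions chosen only to show the direction of the effect.

```python
def shrink_lfc(raw_lfc, std_error, prior_sd=1.0):
    """Posterior-mean shrinkage of a log fold change under a zero-centered normal prior.

    A large standard error (little information, as for low-count transcripts)
    pulls the estimate strongly toward zero; a small one leaves it almost unchanged.
    """
    prior_var = prior_sd ** 2
    weight = prior_var / (prior_var + std_error ** 2)
    return weight * raw_lfc

# Same raw estimate, different amounts of information.
for se in (0.1, 1.0, 3.0):
    print(se, shrink_lfc(2.0, se))
```

The same raw estimate of 2.0 is largely retained when the standard error is small and is heavily damped when it is large, which is the behavior described above for low-count genes.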

edgeR robust employs a similar negative binomial framework but incorporates differential weighting of observations that deviate from the model fit, thereby dampening the effect of extreme expression values on parameter estimates. This approach requires careful specification of the degrees of freedom parameter that controls the amount of shrinkage, which has non-trivial impacts on inference [2].
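Down-weighting of poorly fitting observations can be sketched with a generic Huber-style weight function. This is an illustration of the general technique rather than edgeR's code: the tuning constant, the function names, and the use of a simple weighted mean are assumptions.

```python
def huber_weight(residual, k=1.345):
    """Weight of 1 for small standardized residuals, k/|r| for large ones."""
    r = abs(residual)
    return 1.0 if r <= k else k / r

def weighted_mean(values, weights):
    """Mean in which each observation contributes in proportion to its weight."""
    return sum(v * w for v, w in zip(values, weights)) / sum(weights)

# Hypothetical standardized residuals for three samples; the third is extreme.
residuals = [0.2, -1.0, 6.0]
weights = [huber_weight(r) for r in residuals]
print(weights)
print(weighted_mean([10.0, 9.0, 40.0], weights))
```

The extreme observation still contributes, but with a reduced weight, so a single aberrant sample has less influence on the fitted parameters.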

Table 2: Performance Comparison of Statistical Methods for Low-Count RNA-seq Transcripts

| Method | Key Features | Performance on Low-Count Transcripts |
|---|---|---|
| DESeq2 | Shrinks LFC estimates toward zero; shares information across genes [2] | Greater precision and accuracy; proper type 1 error control |
| edgeR robust | Down-weights observations deviating from model fit [2] | Greater power; proper type 1 error control when properly specified |
| Data Filtering | Removes transcripts below arbitrary expression thresholds [2] | Excludes 60% of transcripts; may remove biologically relevant signals |

Plasmode-based validation studies have demonstrated that both methods properly control family-wise type 1 error rates for low-count transcripts, with DESeq2 showing greater precision and accuracy, while edgeR robust exhibits greater power for differential expression detection [2]. These approaches enable researchers to retain biologically relevant low-count transcripts that would typically be excluded by standard filtering practices at arbitrary expression thresholds.

Experimental Protocols and Workflows

STALARD Protocol for Low-Abundance Isoform Detection

The STALARD protocol provides a detailed methodology for targeted amplification of low-abundance transcripts [3]:

Primer Design:

- Design a gene-specific primer (GSP) matching the 5'-end sequence of the target RNA (substituting T for U)

- Parameters: Tm = 62°C, GC content = 40-60%, avoid hairpin or self-dimer structures

- Prepare GSP-tailed oligo(dT)24VN primer (GSoligo(dT)) where V = A, G, or C and N = any base
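The primer design steps above can be sketched as follows. The 20-nt primer length, the example sequence, and the function names are hypothetical. Tm estimation and hairpin or self-dimer checks are not implemented here and would need a dedicated primer design tool.

```python
def rna_to_dna(seq):
    """Write an RNA sequence as DNA by substituting T for U."""
    return seq.upper().replace("U", "T")

def gc_fraction(seq):
    """Fraction of G and C bases in a sequence."""
    seq = seq.upper()
    return (seq.count("G") + seq.count("C")) / len(seq)

def design_stalard_primers(rna_5prime, gsp_len=20, dt_len=24):
    """Build a GSP and a GSP-tailed oligo(dT)24VN primer from the RNA 5' end.

    gsp_len is an assumed value; the protocol specifies Tm and GC content, not length.
    V (A, G, or C) and N (any base) are left as IUPAC codes for oligo ordering.
    """
    gsp = rna_to_dna(rna_5prime[:gsp_len])
    gs_oligo_dt = gsp + "T" * dt_len + "VN"
    gc_ok = 0.40 <= gc_fraction(gsp) <= 0.60
    return {"GSP": gsp, "GSoligo(dT)": gs_oligo_dt, "gc_ok": gc_ok}

# Hypothetical 5'-end sequence, for illustration only.
print(design_stalard_primers("AUGGCUAGCUAGGCUAACGUUAGC"))
```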

cDNA Synthesis:

- Use 1 µg of total RNA and 1 µL of 50 µM GSoligo(dT) primer

- Perform first-strand cDNA synthesis using HiScript IV 1st Strand cDNA Synthesis Kit

- The resulting cDNA carries the GSP sequence at its 5' end

Targeted Pre-amplification:

- Perform PCR using 1 µL of 10 µM GSP and SeqAmp DNA Polymerase in a 50 µL reaction

- Thermal cycling: 95°C for 1 min; 9-18 cycles of 98°C for 10 s, 62°C for 30 s, 68°C for 1 min/kb; final extension at 72°C for 10 min

Purification and Analysis:

- Purify PCR products using AMPure XP beads at 1.0:0.7 product:beads ratio

- Elute in RNase-free water for subsequent qPCR or sequencing analysis
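Two quantities in the steps above scale with the experiment: the extension time (1 min/kb) and the bead volume (1.0:0.7 product:beads). A small helper, with hypothetical function names and the assumption that the whole 50 µL reaction is purified, makes the arithmetic explicit.

```python
def extension_seconds(amplicon_bp, seconds_per_kb=60):
    """Extension time at 1 min/kb for an amplicon of the given length."""
    return amplicon_bp / 1000 * seconds_per_kb

def bead_volume_ul(product_ul, product_to_beads=(1.0, 0.7)):
    """Bead volume for a given product volume at a product:beads ratio."""
    product_part, bead_part = product_to_beads
    return product_ul * bead_part / product_part

# Hypothetical 1.5 kb target and a full 50 uL reaction.
print(extension_seconds(1500), "s extension")
print(bead_volume_ul(50), "uL beads")
```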

This protocol has been successfully applied to quantify splicing changes in response to environmental stimuli such as vernalization in Arabidopsis thaliana, demonstrating its utility for capturing biologically relevant expression changes in low-abundance isoforms [3].

RNA-FISH Protocol for lncRNA Localization

RNAscope provides a robust method for multiplexed detection of low-abundance long noncoding RNAs in cultured cells [10]:

Sample Preparation:

- Culture cells on appropriate chambered slides or coverslips

- Fix cells with 4% paraformaldehyde

- Permeabilize cells to allow probe access

Hybridization:

- Design target-specific probes that hybridize to the RNA of interest

- Perform sequential hybridization and amplification steps to enhance signal-to-noise ratio

- Incubate with label probes for detection

Detection and Imaging:

- Detect signals using fluorescence microscopy

- For multiplexing, use different fluorophores for distinct RNA targets

- Image using high-content imaging systems such as Operetta for 96-well plate formats

This method is particularly valuable for studying the subcellular localization of lncRNAs, which provides critical insights into their function, as localization directly impacts interaction partners and regulatory mechanisms [10] [5].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagent Solutions for Low-Abundance RNA Detection

| Reagent/Kit | Function | Application Examples |
|---|---|---|
| GSP-tailed oligo(dT) primers | Target-specific reverse transcription with adapter sequence | STALARD method for selective amplification [3] |
| HiScript IV 1st Strand cDNA Synthesis Kit | High-efficiency cDNA synthesis with high sensitivity | STALARD first-strand synthesis [3] |
| SeqAmp DNA Polymerase | High-fidelity PCR amplification | Targeted pre-amplification in STALARD [3] |
| AMPure XP beads | PCR product purification and size selection | Post-amplification clean-up [3] |
| RNAscope probes | Target-specific hybridization for RNA-FISH | Multiplexed detection of low-abundance lncRNAs [10] |
| Custom capture probes | Hybridization-based enrichment for targeted RNA-seq | CaptureSeq for sensitive lncRNA detection [9] |

Subcellular Localization and Functional Implications

The subcellular localization of low-abundance transcripts, particularly lncRNAs, is a critical determinant of their function. Research has revealed that lncRNAs exhibit specific localization patterns that define their mechanistic roles [5]. Nuclear-enriched lncRNAs frequently function in transcriptional regulation through chromatin modification or as nuclear organization scaffolds, while cytoplasmic lncRNAs often participate in post-transcriptional processes including mRNA stability, translation regulation, and signaling pathways [5].

Techniques such as RNA-FISH have been instrumental in mapping these localization patterns, revealing that lncRNAs are often enriched in specific subcellular compartments including nuclear speckles, paraspeckles, P-bodies, and stress granules [5]. These phase-separated bodies represent specialized environments where lncRNAs nucleate functional complexes through interactions with RNAs, proteins, and DNA elements [5].

The functional significance of localization is exemplified by lncRNAs such as COOLAIR in Arabidopsis thaliana, which plays a role in epigenetic silencing of the FLC locus during vernalization [3]. The detection and accurate quantification of such extremely low-abundance transcripts has been challenging with conventional methods, leading to inconsistencies in reported expression patterns that newer targeted approaches are now resolving [3].

Method Selection Guidelines and Future Perspectives

Choosing the Appropriate Detection Method

Selection of optimal detection strategies for low-abundance transcripts depends on research goals, sample characteristics, and available resources [7]:

RNA-seq excels in discovery-phase research where comprehensive transcriptome characterization is needed. Transcriptome-wide RNA-seq enables discovery of novel transcripts, splice variants, and non-coding RNAs, while targeted RNA-seq panels provide deeper coverage of predefined gene sets at lower cost [8] [7].

NanoString nCounter offers advantages for degraded or FFPE-preserved samples where amplification-based methods may fail. Its direct digital counting without reverse transcription or PCR minimizes bias, and the simple workflow delivers results rapidly with minimal bioinformatics requirements [7].

qPCR remains the gold standard for targeted validation of small gene sets, offering exceptional sensitivity, speed, and precision for hypothesis-driven studies [7].

STALARD and CaptureSeq provide intermediate solutions that bridge the gap between targeted and discovery-based approaches, offering enhanced sensitivity for specific transcript classes while maintaining more accessible workflow requirements than comprehensive RNA-seq [1] [3] [9].

Emerging Trends and Future Directions

The field of low-abundance transcript research is rapidly evolving with several promising developments. Single-cell omics technologies are revealing unprecedented heterogeneity in lncRNA expression and function, enabling the construction of single-cell gene regulatory networks (scGRNs) that incorporate non-coding RNAs [6]. The integration of long-read sequencing with targeted enrichment methods is improving isoform-level characterization, as demonstrated by STALARD combined with nanopore sequencing revealing previously unannotated polyadenylation sites [3]. Additionally, spatial transcriptomics approaches are advancing our understanding of how subcellular localization impacts lncRNA function in different cellular contexts [5] [6].

As these technologies mature, they promise to illuminate the complex roles of low-abundance transcripts in development, disease, and cellular regulation, ultimately enabling researchers to fully characterize these elusive but biologically critical molecules.

Rare transcripts, including low-abundance isoforms and non-coding RNAs, represent a critical yet under-explored layer of biological regulation. While traditionally overlooked due to technical limitations in detection, these molecules exert disproportionate functional influence on developmental processes and disease pathogenesis. Advances in RNA sequencing technologies, particularly long-read platforms and sophisticated bioinformatics tools, are now enabling researchers to systematically identify and characterize these rare transcriptional events. This technical guide synthesizes current methodologies for rare transcript detection, validation, and functional interpretation, providing researchers with a comprehensive framework for investigating the full transcriptional complexity of biological systems. The emerging paradigm suggests that rare transcripts often serve key regulatory functions, with important implications for understanding disease mechanisms and developing targeted therapeutic interventions.

The transcriptional output of eukaryotic genomes is remarkably complex, encompassing not only abundant messenger RNAs but also a diverse array of rare transcripts that often escape conventional detection methods. These rare transcripts include low-abundance alternative isoforms, tissue-specific transcripts, non-coding RNAs, and transcripts from poorly annotated genes. Their scarcity belies their significant biological impact, as they frequently play outsized roles in critical processes such as cellular differentiation, immune response, and disease progression.

The study of rare transcripts presents distinct technical challenges. Traditional short-read RNA sequencing approaches often struggle to detect transcripts expressed at low levels, particularly when they share exonic sequences with more abundant isoforms. Furthermore, standard bioinformatics pipelines frequently filter out rarely observed transcripts as potential artifacts. However, as we will demonstrate, emerging methodologies are overcoming these limitations, revealing a previously hidden layer of transcriptional regulation with profound implications for basic biology and clinical applications.

Technical Approaches for Rare Transcript Detection

Sequencing Platform Considerations

The choice of sequencing platform significantly impacts the ability to detect and accurately characterize rare transcripts. Each technology offers distinct advantages and limitations for rare transcript research, as summarized in Table 4.

Table 4: Sequencing Platform Comparison for Rare Transcript Detection

| Platform Type | Key Advantages | Limitations | Ideal Applications |
|---|---|---|---|
| Short-read (Illumina) | High throughput, low error rate, low cost per base | Limited ability to resolve full-length isoforms, mapping ambiguity for repetitive regions | Quantifying known transcripts, splicing analysis in well-annotated regions |
| Long-read (PacBio) | Full-length transcript sequencing, no assembly required | Higher error rate, lower throughput, higher input requirements | Discovery of novel isoforms, complex splicing patterns, fusion genes |
| Long-read (Oxford Nanopore) | Real-time sequencing, direct RNA detection, long read lengths | Higher error rate, throughput limitations | Detection of RNA modifications, extremely long transcripts |
| Single-cell RNA-seq | Resolution of cellular heterogeneity, identification of rare cell populations | Low sequencing depth per cell, high cost | Identifying rare cell types, cell-to-cell variation in transcript expression |

Recent systematic assessments of long-read RNA-seq methods demonstrate that libraries with longer, more accurate sequences produce more accurate transcript identifications than those with increased read depth alone [11]. However, greater read depth remains important for accurate quantification of detected transcripts. For de novo transcript detection in genomes lacking high-quality references, the integration of additional orthogonal data and replicate samples is strongly recommended [11].

Experimental Design and Quality Control

Robust experimental design is paramount for successful rare transcript detection. Key considerations include:

Biological Replicates: Biological replicates are essential for distinguishing true rare transcripts from technical artifacts. The number of replicates has a greater impact on detection power than sequencing depth [12]. Three to six biological replicates per condition are a recommended minimum, with additional replicates providing greater power to detect statistically significant rare expression events.

RNA Quality and Integrity: RNA quality directly impacts transcript detection capability. While traditional mRNA sequencing requires high-quality RNA (RIN > 7), newer total RNA approaches with ribosomal depletion can successfully sequence degraded samples (RIN > 3.5) [13] [14]. This is particularly valuable for clinical samples where RNA integrity may be compromised.

Library Preparation Strategy: The choice between poly-A enrichment and ribosomal depletion significantly affects rare transcript detection. Poly-A selection captures only polyadenylated transcripts, while ribosomal depletion preserves non-polyadenylated RNA species, providing a more comprehensive view of the transcriptome [13] [14]. Stranded library protocols are preferred as they preserve transcript orientation information, which is crucial for identifying antisense transcripts and overlapping genes [13].

Spike-in Controls: Artificial spike-in controls, such as SIRVs, are valuable tools for quality control in rare transcript studies. They enable measurement of assay performance, including dynamic range, sensitivity, and reproducibility, providing an internal standard for normalizing data and assessing technical variability [15].
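One common way to use spike-ins of known input amount is to compare expected and observed abundance on a log scale. The sketch below fits a least-squares line to log2 values and reports the lowest input level that was detected. The function names, the detection threshold, and the toy numbers are assumptions, not values taken from any spike-in kit.

```python
import math

def spikein_fit(expected, observed):
    """Least-squares fit of log2(observed) on log2(expected) for detected spike-ins.

    Returns (slope, intercept, r_squared). A slope near 1 and a high r_squared
    suggest a roughly linear response across the tested range.
    """
    pairs = [(math.log2(e), math.log2(o)) for e, o in zip(expected, observed) if o > 0]
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in pairs)
    syy = sum((y - mean_y) ** 2 for _, y in pairs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept, sxy ** 2 / (sxx * syy)

def lowest_detected(expected, observed, min_reads=1):
    """Smallest expected input with at least min_reads observed, or None."""
    detected = [e for e, o in zip(expected, observed) if o >= min_reads]
    return min(detected) if detected else None

# Toy data: the lowest-input spike-in was not detected.
expected = [1, 2, 4, 8, 16]
observed = [0, 20, 40, 80, 160]
print(spikein_fit(expected, observed), lowest_detected(expected, observed))
```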

Bioinformatics and Computational Approaches

Specialized computational methods are required to distinguish true rare transcripts from sequencing artifacts and background noise.

Expression-Aware Annotation: The "proportion expressed across transcripts" (pext) metric quantifies isoform expression for variants using large transcriptome datasets like GTEx [16]. This approach helps differentiate functional exons from non-functional ones, with rare variants in lowly-expressed exons showing significantly different effect sizes compared to those in highly expressed exons.
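The core idea behind an expression-proportion metric can be shown in a few lines: for a given variant annotation, sum the expression of the transcripts that carry it and divide by the gene's total transcript expression. This is a simplified single-tissue sketch. The published metric involves additional per-tissue handling and aggregation, and the function name, input layout, and TPM values here are hypothetical.

```python
def pext(transcript_tpm, transcripts_with_annotation):
    """Share of a gene's expression coming from transcripts that carry an annotation.

    transcript_tpm: dict mapping transcript ID -> expression (e.g. TPM) in one tissue.
    transcripts_with_annotation: set of transcript IDs on which the variant has
    the annotation of interest (for example, predicted loss of function).
    """
    total = sum(transcript_tpm.values())
    if total == 0:
        return None  # gene not expressed in this tissue, proportion undefined
    carrying = sum(tpm for tx, tpm in transcript_tpm.items()
                   if tx in transcripts_with_annotation)
    return carrying / total

# The variant only affects a minor isoform, so the proportion is low.
print(pext({"tx1": 90.0, "tx2": 10.0}, {"tx2"}))
```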

Single-Cell Transcript Analysis Tools: Tools like InfoScan enable comprehensive analysis of full-length single-cell RNA sequencing data, facilitating identification of unannotated transcripts and rare cell populations [17]. In glioblastoma research, InfoScan identified a rare "neoplastic-stemness" subpopulation with cancer stem cell-like features that would be missed by conventional analysis.

De Novo Transcript Detection: In genomes lacking high-quality references, reference-free approaches can reconstruct transcripts from sequencing data alone. These methods benefit from longer read lengths and higher accuracy, though performance varies substantially between tools [11].

Quantitative Analysis of Rare Transcript Detection

The performance of different methodological approaches can be quantitatively assessed across multiple dimensions. Table 5 summarizes key metrics for evaluating rare transcript detection methodologies.

Table 5: Performance Metrics for Rare Transcript Detection Methods

| Methodological Approach | Sensitivity | Specificity | Diagnostic/Discovery Utility | Key Supporting Evidence |
|---|---|---|---|---|
| Blood RNA-seq for rare diseases | 70.6% of known rare disease genes expressed in blood | Filtering reduced candidate genes to <1% of initial outliers | 7.5% diagnostic rate, plus 16.7% with improved candidate resolution [18] | Integration of expression, splicing, and allele-specific expression signals |
| pext metric for variant interpretation | Filters 22.8% of falsely annotated pLoF variants | Removes <4% of high-confidence pathogenic variants [16] | Improved identification of pathogenic variants in haploinsufficient genes | Analysis of 11,706 GTEx tissue samples |
| Long-read vs short-read sequencing | Higher sensitivity for full-length isoforms | Moderate agreement among bioinformatics tools [11] | Superior for de novo transcript discovery | LRGASP consortium evaluation of multiple platforms |
| Single-cell RNA-seq | Identifies rare cell populations (e.g., neoplastic-stemness cells) | Requires validation for low-abundance transcripts | Reveals cellular heterogeneity in cancer [17] | Application in glioblastoma identifying rare subpopulations |

Statistical considerations for rare transcript analysis include:

Multiple Testing Correction: Traditional false discovery rate controls may be overly stringent for rare transcript detection. Bayesian approaches that incorporate prior knowledge about transcript characteristics can improve detection power.

Expression Thresholds: Setting appropriate expression thresholds is crucial. Overly stringent thresholds eliminate true rare transcripts, while lenient thresholds increase false positives. The pext metric provides a principled approach by focusing on the proportion of transcriptional output affected by a variant [16].

Power Analysis: Pilot studies are valuable for determining sample size requirements for rare transcript detection. The extreme skewness of transcript abundance distributions means that substantially larger sample sizes are often needed to detect rare events with statistical confidence [15].

Experimental Protocols for Rare Transcript Validation

Protocol: RNA-seq for Rare Disease Gene Identification

This protocol, adapted from Frésard et al. (2019), outlines an approach for identifying rare disease genes using blood RNA-seq [18]:

Sample Collection and RNA Extraction:

- Collect whole blood in RNA-stabilizing reagents (e.g., PAXgene).

- Extract total RNA using standardized protocols.

- Assess RNA quality using RIN, with values >7 preferred.

Library Preparation and Sequencing:

- Perform ribosomal RNA depletion rather than poly-A selection to capture non-polyadenylated transcripts.

- Use stranded library protocols to preserve transcript orientation.

- Sequence to a depth of 30-50 million reads per sample.

Data Processing:

- Align reads to the reference genome using splice-aware aligners.

- Quantify gene and transcript expression levels.

- Identify outlier expression and splicing events by comparing to large control cohorts (N=1,594 recommended).
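Outlier calling against a control cohort can be sketched as a per-gene Z-score. The cutoff of 2, the function names, and the toy values are illustrative assumptions. Expression values are assumed to be normalized and log-transformed beforehand, which this sketch does not do.

```python
from statistics import mean, stdev

def expression_z_score(case_value, control_values):
    """Z-score of one case measurement against the control distribution for a gene."""
    mu = mean(control_values)
    sd = stdev(control_values)  # sample standard deviation; needs at least 2 controls
    if sd == 0:
        return None  # no spread among controls, Z-score undefined
    return (case_value - mu) / sd

def is_expression_outlier(case_value, control_values, z_cutoff=2.0):
    """True when the case lies at least z_cutoff standard deviations from controls."""
    z = expression_z_score(case_value, control_values)
    return z is not None and abs(z) >= z_cutoff

controls = [10.0, 12.0, 11.0, 9.0, 13.0]  # hypothetical control values for one gene
print(expression_z_score(3.0, controls), is_expression_outlier(3.0, controls))
```

A larger control cohort gives a more stable estimate of the mean and spread, which is why comparison against many controls is emphasized in the step above.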

Variant Filtering and Prioritization:

- Filter expression outliers based on:

  - LoF intolerance (pLI ≥0.9)

  - Phenotype relevance (HPO term matching)

  - Presence of rare variants nearby (MAF ≤0.01%)

  - Deleterious variant prediction (CADD score ≥10)

- Filter splicing outliers based on:

  - Phenotype relevance (HPO term matching)

  - Presence of deleterious rare variants near splice junctions
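The expression-outlier criteria listed above can be sketched as a single predicate. Several points are assumptions for illustration: the record layout and names are hypothetical, all criteria are combined with a logical AND, and "rare" is taken to mean a minor allele frequency at or below 0.01% (written as the fraction 0.0001).

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class OutlierGene:
    gene: str
    pli: float                            # loss-of-function intolerance score
    hpo_match: bool                       # gene matches the patient's HPO terms
    nearby_variant_maf: Optional[float]   # MAF as a fraction; None if no nearby variant
    nearby_variant_cadd: Optional[float]  # CADD score; None if no nearby variant

def passes_expression_filters(g, pli_min=0.9, maf_max=0.0001, cadd_min=10.0):
    """Keep an expression outlier only if it satisfies every listed criterion."""
    if g.pli < pli_min or not g.hpo_match:
        return False
    if g.nearby_variant_maf is None or g.nearby_variant_cadd is None:
        return False
    return g.nearby_variant_maf <= maf_max and g.nearby_variant_cadd >= cadd_min

# Hypothetical candidates, for illustration only.
candidates = [
    OutlierGene("GENE_A", 0.95, True, 0.00005, 22.0),
    OutlierGene("GENE_B", 0.50, True, 0.00005, 22.0),
]
print([g.gene for g in candidates if passes_expression_filters(g)])
```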

Integration and Validation:

- Integrate expression, splicing, and allele-specific expression signals.

- Validate candidate genes through orthogonal methods (e.g., Sanger sequencing, functional assays).

Protocol: Single-Cell Analysis of Rare Cell Populations

This protocol, based on InfoScan methodology, details the identification of rare cell populations using single-cell RNA-seq [17]:

Single-Cell Library Preparation:

- Prepare single-cell suspensions from tissue samples.

- Perform single-cell RNA-seq using full-length transcript protocols.

- Include unique molecular identifiers (UMIs) to correct for amplification biases.
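How UMIs correct for amplification bias can be shown with a minimal counting sketch: reads that share a cell barcode, gene, and UMI are treated as copies of one original molecule. The input layout and function name are assumptions, and UMI sequencing-error correction, which production pipelines usually apply, is omitted.

```python
from collections import defaultdict

def umi_counts(reads):
    """Count distinct UMIs per (cell barcode, gene).

    reads: iterable of (cell_barcode, gene, umi) tuples (hypothetical layout).
    PCR duplicates share all three values and are therefore counted once.
    """
    seen = defaultdict(set)
    for cell, gene, umi in reads:
        seen[(cell, gene)].add(umi)
    return {key: len(umis) for key, umis in seen.items()}

reads = [
    ("cell1", "GENE_X", "AAA"),
    ("cell1", "GENE_X", "AAA"),  # PCR duplicate of the read above
    ("cell1", "GENE_X", "AAT"),
    ("cell2", "GENE_X", "AAA"),
]
print(umi_counts(reads))
```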

Data Processing and Transcript Identification:

- Process raw sequencing data to generate count matrices.

- Perform quality control to remove low-quality cells and doublets.

- Identify unannotated transcripts and isoforms.

Cell Clustering and Rare Population Identification:

- Perform dimensionality reduction and clustering.

- Identify rare clusters based on distinct expression profiles.

- Characterize marker genes for each population.

Functional Analysis:

- Perform pathway enrichment analysis on rare population markers.

- Investigate cell-cell communication patterns.

- Validate findings using orthogonal methods (e.g., immunohistochemistry, flow cytometry).

Visualization of Analytical Workflows

The following figures summarize key workflows and relationships in rare transcript analysis.

Rare Transcript Analysis Workflow

Figure 1: Comprehensive workflow for rare transcript analysis, encompassing experimental and computational steps.

Rare Transcript Filtering Strategy

Figure 2: Multi-step filtering strategy for identifying high-confidence rare transcripts from initial candidates.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful rare transcript research requires specialized reagents and materials. Table 6 details key solutions and their applications.

Table 6: Essential Research Reagents for Rare Transcript Studies

| Reagent/Material | Function | Application Notes | Key References |
|---|---|---|---|
| RNA-stabilizing reagents | Preserve RNA integrity during sample collection and storage | Critical for clinical samples; PAXgene recommended for blood | [13] |
| Ribosomal depletion kits | Remove abundant ribosomal RNA to enhance detection of rare transcripts | Preferred over poly-A selection for comprehensive transcriptome coverage | [13] [14] |
| Stranded library prep kits | Preserve transcript orientation information | Essential for identifying antisense transcripts and overlapping genes | [13] |
| Spike-in controls | Quality control and normalization standards | Enable technical variability assessment; SIRVs recommended | [15] |
| Unique Molecular Identifiers | Correct for amplification biases | Crucial for accurate quantification in single-cell studies | [17] [14] |
| Long-read sequencing kits | Generate full-length transcript sequences | PacBio or Oxford Nanopore kits for isoform resolution | [11] |

The systematic study of rare transcripts represents a frontier in molecular biology with significant implications for understanding development and disease. Methodological advances in sequencing technologies, experimental design, and computational analysis are increasingly enabling researchers to detect and characterize these elusive molecules. The evidence demonstrates that rare transcripts frequently play critical roles in biological regulation, from guiding developmental processes to contributing to disease pathogenesis when dysregulated.

Future progress in this field will likely come from several directions. The integration of multi-omics datasets will provide crucial context for interpreting the functional significance of rare transcripts. Improvements in long-read sequencing accuracy and throughput will enhance detection capabilities while reducing costs. The development of more sophisticated computational methods that incorporate biological priors will improve discrimination between functional rare transcripts and transcriptional noise. Finally, the creation of comprehensive tissue and cell-type-specific transcriptome atlases will provide essential reference data for distinguishing truly rare transcripts from context-specific expression.

As these methodological advances mature, rare transcript analysis will increasingly transition from a specialized research area to an integral component of comprehensive transcriptional studies. This integration will deepen our understanding of biological complexity and provide new avenues for therapeutic intervention in human disease.

The detection and accurate quantification of low-abundance RNA transcripts represent a fundamental challenge in modern biology with profound implications for understanding cellular function, disease mechanisms, and therapeutic development. This technical guide examines three interrelated, core obstacles that critically define the boundaries of current research: tissue-specific expression patterns, pervasive transcriptional noise, and fundamental technological detection limits. Within the context of detecting low-abundance RNA transcripts, these factors conspire to obscure genuine biological signals. Tissue-specific expression dictates that critical regulatory genes, including transcription factors, are often expressed at low levels and in a confined subset of cells, making them difficult to capture in heterogeneous tissue samples [19] [20]. Furthermore, the transcriptome is not a static entity but is subject to intrinsic stochastic fluctuations, leading to transcriptional noise that can be misinterpreted as biological signal or, conversely, mask true cell-to-cell differences [21] [22]. Finally, technical limitations inherent to RNA sequencing protocols, from reverse transcription inefficiencies to the statistical sampling of sequencing itself, impose a hard ceiling on our ability to detect and quantify the rarest transcripts [23] [24]. This whitepaper provides an in-depth analysis of these obstacles, summarizes key quantitative data, details relevant experimental methodologies, and visualizes the core concepts and workflows for the research community.

Tissue-Specific Expression: A Spatial Challenge

Classification and Patterns

Global classification of human proteins and their corresponding mRNAs with regard to spatial expression patterns across organs and tissues is essential for interpreting transcriptomic data. A foundational study using quantitative transcriptomics (RNA-Seq) across a representative set of all major human organs and tissues led to a systematic classification of all human protein-coding genes. The research established eight distinct categories based on fragments per kilobase of exon model per million mapped reads (FPKM) levels in 27 tissues, with a detection limit cutoff set at 1 FPKM [19].

Table 1: Gene Classification Based on Tissue-Specific Expression Patterns [19]

| Classification Category | Definition |
|---|---|
| Not Detected | < 1 FPKM in all 27 tissues |
| Tissue Specific | ≥ 50-fold higher FPKM level in one tissue vs. all others |
| Tissue Enriched | ≥ 5-fold higher FPKM level in one tissue vs. all others |
| Group Enriched | ≥ 5-fold higher average FPKM level in a group of 2-7 tissues vs. all others |
| Mixed (Low) | Detected in 1-26 tissues and at least one tissue < 10 FPKM |
| Mixed (High) | Detected in 1-26 tissues and all detected tissues > 10 FPKM |
| Expressed in All (Low) | Detected in all 27 tissues and at least one tissue < 10 FPKM |
| Expressed in All (High) | Detected in all 27 tissues and all tissues > 10 FPKM |

This work, integrated into the Human Protein Atlas, demonstrated that a significant portion of the genome exhibits restricted expression patterns. This has direct consequences for detecting low-abundance transcripts, as many are not ubiquitously expressed but are instead concentrated in specific cell types, making them easy to miss in bulk tissue analyses or whole transcriptome studies that lack the necessary spatial or cellular resolution [19].
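
To make the rules in Table 1 concrete, the sketch below assigns one category to a gene from its per-tissue FPKM values. It is an illustrative reading of the table, not the published implementation: the order in which rules are tested, the comparison of a group against the highest remaining tissue, and the treatment of a value of exactly 10 FPKM as "High" are all assumptions, and `classify_gene` is a hypothetical name.

```python
DETECTION_LIMIT = 1.0  # FPKM detection cutoff from Table 1
HIGH_LEVEL = 10.0      # FPKM boundary between "Low" and "High"

def classify_gene(fpkm_by_tissue):
    """Assign one Table 1 category from a gene's per-tissue FPKM values."""
    values = sorted(fpkm_by_tissue, reverse=True)
    n_tissues = len(values)
    detected = [v for v in values if v >= DETECTION_LIMIT]
    if not detected:
        return "Not Detected"

    # "One tissue vs. all others": compare the top tissue to the runner-up.
    top, runner_up = values[0], values[1]
    if top >= 50 * runner_up:
        return "Tissue Specific"
    if top >= 5 * runner_up:
        return "Tissue Enriched"

    # Group enriched: the mean of the k highest tissues (k = 2..7) is at
    # least 5-fold above the highest remaining tissue (assumed reading).
    for k in range(2, 8):
        if k < n_tissues and sum(values[:k]) / k >= 5 * values[k]:
            return "Group Enriched"

    breadth = "Expressed in All" if len(detected) == n_tissues else "Mixed"
    level = "Low" if min(detected) < HIGH_LEVEL else "High"
    return f"{breadth} ({level})"

# Toy profiles across 27 tissues.
print(classify_gene([100.0] + [1.0] * 26))
print(classify_gene([20.0] * 3 + [2.0] * 24))
print(classify_gene([20.0] * 26 + [2.0]))
```

Because the fold-change rules are tested first, a gene detected everywhere can still be labeled tissue enriched, which seems consistent with the table but should be checked against the original classification if exact reproduction matters.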

Experimental Protocol: Tissue-Specific RNA-Seq

The following methodology outlines the key steps for generating the data used in the aforementioned tissue-specific classification [19]:

- Sample Acquisition and Quality Control: Tissue samples are collected and embedded in Optimal Cutting Temperature (O.C.T.) compound. A hematoxylin-eosin (HE) stained section is prepared and examined by a pathologist to ensure proper tissue morphology.

- RNA Extraction: Three 10 μm sections are cut and collected for RNA extraction. Total RNA is extracted using a kit-based method (e.g., RNeasy Mini Kit).

- RNA Quality Assessment: Extracted RNA is analyzed using an automated electrophoresis system (e.g., Experion or Bioanalyzer). Only high-quality RNA samples with an RNA Integrity Number (RIN) ≥ 7.5 are used for subsequent library preparation.

- Library Preparation and Sequencing: mRNA sequencing is performed on a high-throughput platform (e.g., Illumina HiSeq) using the standard RNA-seq protocol with a read length of 2 x 100 bases. Samples are multiplexed, targeting an average of 18 million mappable read pairs per sample.

- Bioinformatic Processing:

  - Quality Control: Raw reads are trimmed for low-quality ends.

  - Alignment: Processed reads are mapped to the human genome (e.g., GRCh37) using a splice-aware aligner (e.g., Tophat).

  - Quantification: Gene expression levels are calculated as FPKM values using software (e.g., Cufflinks), which corrects for transcript length and total mapped reads.

  - Tissue-Specificity Classification: For each tissue, the average FPKM value across sample replicates is used. Each gene is then classified into one of the eight categories based on the predefined FPKM fold-change rules and detection limits.
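
The quantification step can be summarized by the textbook FPKM formula, shown here as a minimal sketch. It only illustrates the two corrections mentioned above (transcript length and total mapped reads); it is not how Cufflinks estimates abundance internally, and the function names and example numbers are assumptions.

```python
def fpkm(fragments, length_bp, total_mapped_fragments):
    """Fragments per kilobase of exon model per million mapped fragments."""
    if length_bp <= 0 or total_mapped_fragments <= 0:
        raise ValueError("length and library size must be positive")
    # 1e9 = 1e3 (per kilobase) * 1e6 (per million mapped fragments)
    return fragments * 1e9 / (length_bp * total_mapped_fragments)

def tissue_fpkm(replicate_fpkms):
    """Average FPKM across the sample replicates of one tissue."""
    return sum(replicate_fpkms) / len(replicate_fpkms)

# Hypothetical gene: 36 fragments on a 2,000 bp exon model in a library
# of 18 million mapped read pairs (the average depth targeted above).
example = fpkm(fragments=36, length_bp=2_000, total_mapped_fragments=18_000_000)
print(example)
```

Under these assumed numbers the gene sits right at the 1 FPKM detection cutoff, which shows how few fragments separate "detected" from "not detected" at this depth.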

Transcriptional Noise: A Stochastic Challenge

Quantifying and Interpreting Noise

Transcriptional noise refers to the stochastic fluctuations in gene expression that create cell-to-cell variability within an isogenic population. This noise is a significant confounding factor in detecting low-abundance transcripts, as it can be difficult to distinguish a genuine, consistently low signal from random transcriptional bursts. A critical challenge is that different single-cell RNA sequencing (scRNA-seq) algorithms systematically underestimate the fold change in transcriptional noise compared to the gold-standard method, single-molecule RNA fluorescence in situ hybridization (smFISH) [22] [25].

Research utilizing a small-molecule noise enhancer (5′-iodo-2′-deoxyuridine, IdU) demonstrated that although various scRNA-seq analysis algorithms (SCTransform, scran, Linnorm, BASiCS, SCnorm) all detected genome-wide noise amplification, they consistently underestimated its magnitude. smFISH validation confirmed that IdU amplifies noise in a "globally penetrant" manner, increasing variability without altering mean expression levels, for the vast majority of genes [22]. This underestimation by scRNA-seq has critical implications for interpreting data on low-abundance transcripts, where noise can represent a substantial portion of the measured signal.

Furthermore, the presence of "noisy transcripts" (products of erroneous transcription from intergenic regions, erroneous splicing, and intron retention) has been shown to impair computational methods. Including this biological noise leads to systematic errors in expression measurement, including more false-positive genes and transcripts and an underestimation of true transcript abundance [21].

Table 2: Impact of Transcriptional Noise on RNA-seq Analysis Tools [21]

| Analysis Tool | False Positive Transcripts (without noise) | False Positive Transcripts (with noise) | Increase | Median Abundance of FPs (with noise) |
|---|---|---|---|---|
| StringTie2 | 18,844 (FPR=7%) | 23,494 (FPR=8%) | ~25% | 0.14 TPM |
| Salmon | 21,546 (FPR=8%) | 36,677 (FPR=13%) | ~70% | 0.85 TPM |
| kallisto | 34,316 (FPR=12%) | >51,000 (FPR=18%) | ~50% | 0.39 TPM |

It is also important to note that the role of transcriptional noise in biological processes like aging is complex and may not be a universal hallmark. Systematic analysis of multiple aging scRNA-seq datasets using specialized toolkits like Decibel shows large variability between tissues, suggesting that increased transcriptional noise is not a consistent feature of aged tissues and may be overshadowed by other factors like changes in cell type composition [26].

Experimental Protocol: Quantifying Noise with scRNA-seq and smFISH

The following combined protocol is used to quantify and validate transcriptional noise [22]:

- Cell Culture and Perturbation: Culture isogenic cells (e.g., mouse embryonic stem cells or human Jurkat T lymphocytes). Treat with a noise-enhancing molecule like IdU or a DMSO control.

- Single-Cell RNA Sequencing:

  - Single-Cell Isolation: Isolate individual cells using a microfluidic or droplet-based system.

  - Library Preparation: Generate barcoded cDNA libraries from the single cells. Sequence the pooled library on an appropriate platform.

- Computational Noise Quantification: Analyze the raw scRNA-seq data using multiple normalization and noise quantification algorithms (e.g., SCTransform, scran, BASiCS, a "raw" method normalized only by sequencing depth). Key metrics include:

  - Coefficient of Variation (CV): σ/μ (standard deviation / mean).

  - Fano Factor: σ²/μ (variance / mean), which equals 1 for a Poisson process at any mean expression level and is therefore not inherently dependent on the mean.

- Validation by smFISH (Gold Standard):

  - Probe Design: Design fluorescently labeled oligonucleotide probes against a panel of target genes representing a range of expression levels and functions.

  - Hybridization: Fix cells and hybridize the fluorescent probes to the target mRNAs.

  - Imaging and Quantification: Acquire high-resolution images using fluorescence microscopy. Identify and count individual mRNA molecules as discrete spots within each cell. Calculate the mean, variance, and Fano factor for each gene across the cell population.
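
The two noise metrics in this protocol can be computed directly from per-cell molecule counts, whether these come from smFISH spot counting or UMI counts. The sketch below uses made-up counts for a single gene and the population variance; the function name `noise_metrics` and the choice of population rather than sample variance are assumptions.

```python
def noise_metrics(counts):
    """Return mean, variance, CV and Fano factor for per-cell counts of one gene."""
    n = len(counts)
    mu = sum(counts) / n
    if mu == 0:
        raise ValueError("mean is zero; CV and Fano factor are undefined")
    var = sum((x - mu) ** 2 for x in counts) / n  # population variance
    return {
        "mean": mu,
        "variance": var,
        "cv": var ** 0.5 / mu,  # sigma / mu
        "fano": var / mu,       # sigma^2 / mu
    }

# Hypothetical per-cell mRNA counts for one gene in two conditions.
control = noise_metrics([8, 10, 12, 10, 9, 11])
treated = noise_metrics([2, 18, 10, 4, 16, 10])
print(control)
print(treated)
```

In this toy example both conditions share the same mean while the treated cells show a larger Fano factor, which is the pattern described above for a perturbation that raises variability without altering mean expression.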

Detection Limits: A Technological Challenge

Methodological Limitations and Artifacts

The journey from a rare RNA transcript in a cell to a quantified signal in a dataset is fraught with technical hurdles that fundamentally limit detection. A primary challenge is the inherent inefficiency of reverse transcription (RT), the critical first step in most RNA-seq protocols. Modified nucleotides in RNA can cause the reverse transcriptase to stall, misincorporate a base, or "jump," creating a characteristic "RT-signature" [23]. For many important RNA modifications, these signatures are weak or non-existent, making the modifications effectively "RT-silent" and thus invisible to standard sequencing. The background of natural RT-stops and misincorporations creates significant noise, against which the signal of a rare transcript or modification must be detected, leading to a poor signal-to-noise ratio, especially for substoichiometric modifications [23].

In single-cell RNA-seq, the problem is exacerbated by "gene dropout," where genes that are truly expressed fail to be detected. Dropout stems from the minuscule amount of starting RNA in a single cell and the low efficiency of mRNA capture. It is particularly pronounced for low-abundance transcripts, a category that includes many key regulatory genes such as transcription factors [20]. Whole-transcriptome approaches spread a finite number of sequencing reads across all ~20,000 genes, which leaves shallow coverage for any individual gene and makes these approaches prone to missing low-abundance signals [20] [24].
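
A simple way to see why dropout concentrates on low-abundance genes is to treat mRNA capture as an independent trial per molecule. This is a deliberately simplified binomial model, and the 10% capture efficiency used below is an assumed value for illustration, not a figure from the cited studies.

```python
def dropout_probability(n_molecules, capture_efficiency):
    """Probability that none of a gene's mRNA molecules in a cell is captured.

    Assumes each molecule is captured independently with the same
    probability (a simplification of real library preparation).
    """
    if not 0.0 <= capture_efficiency <= 1.0:
        raise ValueError("capture_efficiency must be between 0 and 1")
    return (1.0 - capture_efficiency) ** n_molecules

# Compare a rare transcript with a moderately expressed one.
for copies in (2, 10, 50):
    p = dropout_probability(copies, capture_efficiency=0.10)
    print(f"{copies:>3} copies per cell -> dropout probability {p:.3f}")
```

Under this model a gene present at a couple of copies per cell is missed in most cells, whereas a gene at tens of copies is almost always captured at least once.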

Furthermore, the choice of tissue itself is a critical consideration. Tissues that are difficult to preserve (e.g., brain) or are processed using certain methods (e.g., Formalin-Fixed Paraffin-Embedded or FFPE samples) suffer from RNA degradation and modifications, leading to fragmented transcripts and biased gene expression quantification [24].

Experimental Protocol: Targeted RNA Expression Profiling

To overcome the limitations of whole transcriptome sequencing for detecting specific low-abundance transcripts, targeted gene expression profiling is often employed [20]. The protocol focuses sequencing resources on a pre-defined gene set.

- Panel Design: A panel of target genes (from dozens to several thousand) is selected based on the research question (e.g., a specific signaling pathway, candidate biomarkers).

- Library Preparation: The initial steps for single-cell isolation and cDNA synthesis may be similar to whole transcriptome methods. However, instead of sequencing all cDNA, a targeted amplification step is incorporated using probes designed for the specific gene panel.

- Sequencing and Analysis: All sequencing reads are channeled towards the target genes. This results in a much higher sequencing depth per gene for the same total number of reads.

- Advantages:

  - Superior Sensitivity: Dramatically reduces the "gene dropout" rate for target genes, allowing reliable quantification of low-abundance transcripts.

  - Cost-Effectiveness: Requires far fewer sequencing reads per cell, enabling scaling to hundreds or thousands of samples.

  - Streamlined Bioinformatics: Analysis is simplified with data from a few hundred genes instead of the entire transcriptome.
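
The depth argument behind targeted profiling is simple arithmetic, sketched below. The read budget of 20,000 reads per cell and the 500-gene panel are assumed numbers, and spreading reads evenly across genes is an idealization, since real libraries are dominated by highly expressed genes.

```python
def mean_reads_per_gene(reads_per_cell, n_genes):
    """Average reads available per gene if reads were spread evenly."""
    return reads_per_cell / n_genes

reads_per_cell = 20_000  # assumed sequencing budget per cell

whole_transcriptome = mean_reads_per_gene(reads_per_cell, 20_000)  # ~20,000 genes
targeted_panel = mean_reads_per_gene(reads_per_cell, 500)          # assumed panel size

fold_gain = targeted_panel / whole_transcriptome
print(whole_transcriptome, targeted_panel, fold_gain)
```

The same logic runs in reverse for cost: a panel can reach a given per-gene depth with a fraction of the reads that whole transcriptome sequencing would need.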

Table 3: Comparison of scRNA-seq Methodologies for Detecting Low-Abundance Transcripts [20]

| Feature | Whole Transcriptome Sequencing | Targeted Gene Expression Profiling |
|---|---|---|
| Goal | Unbiased, discovery-oriented | Focused, hypothesis-driven |
| Sensitivity | Lower for low-abundance transcripts due to shallow coverage | Higher for target genes due to deep coverage |
| Quantitative Accuracy | Limited for rare transcripts by gene dropout | Superior for the pre-defined gene panel |
| Best For | De novo cell type identification, discovering novel disease pathways | Validating targets, interrogating specific pathways, clinical biomarker assays |
| Cost per Cell | Higher | Lower |
| Computational Complexity | High | Low to Moderate |

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Key Reagents and Materials for Overcoming Obstacles in RNA Detection

| Reagent/Material | Function | Context |
|---|---|---|
| RNeasy Mini Kit (Qiagen) | Extraction of high-quality total RNA from tissue and cell samples. | Standard protocol for bulk RNA-seq; critical for ensuring high RIN values [19]. |
| ERCC Spike-In Controls | Synthetic RNA controls added to samples to estimate technical variation. | Allows for decomposition of total variance into biological and technical components in scRNA-seq, crucial for noise quantification [26]. |
| IdU (5′-Iodo-2′-deoxyuridine) | A small-molecule noise enhancer. | Used as an experimental perturbation to orthogonally amplify transcriptional noise without altering mean expression, enabling noise studies [22] [25]. |
| SMART-seq v4 Reagent Kit | For generating high-quality, full-length cDNA from single cells. | A common choice for whole transcriptome scRNA-seq protocols [20]. |
| Chromium Single Cell Gene Expression Solution (10x Genomics) | A droplet-based system for parallel barcoding of thousands of single cells. | Enables large-scale whole transcriptome scRNA-seq studies [20]. |
| Custom Targeted Gene Expression Panel | A set of probes designed to enrich for a specific set of genes of interest. | Used in targeted scRNA-seq to focus sequencing on a pre-defined gene set, increasing sensitivity for low-abundance targets [20]. |
| smFISH Probe Sets | Fluorescently labeled oligonucleotide probes designed to bind specific mRNA sequences. | The gold standard for absolute mRNA quantification and validation of transcriptional noise in single cells [22]. |
| CMCT (Carbodiimide) | Chemical that forms alkaline-resistant adducts with pseudouridine (Ψ). | Converts an RT-silent RNA modification into a detectable RT-stop, enabling mapping of Ψ [23]. |
| Decibel (Python Toolkit) | A computational toolkit implementing multiple methods for quantifying transcriptional noise from scRNA-seq data. | Standardizes the analysis of age-related or disease-related transcriptional noise across datasets [26]. |
| StringTie2, Salmon, kallisto | Computational tools for transcript assembly and abundance estimation from RNA-seq data. | Essential for quantifying gene expression; their performance is differentially affected by transcriptional noise [21]. |

The transcriptome represents a vastly complex landscape where low-abundance transcripts play disproportionately critical roles in cellular regulation, disease mechanisms, and therapeutic development. These rare RNA molecules—including non-coding RNAs, alternatively spliced isoforms, and regulatory RNAs—often function at the very helm of gene regulatory networks despite their scarce numbers. Historically, technical limitations have obscured this "dark matter" of the transcriptome, but recent technological revolutions are now bringing these elusive molecules into clear view [14]. The comprehensive cataloging of these transcripts is not merely an academic exercise; it represents a fundamental requirement for advancing our understanding of cellular heterogeneity, precision medicine, and the development of novel RNA-based therapeutics [27].

The detection and accurate quantification of low-abundance transcripts present formidable technical challenges that conventional RNA sequencing approaches frequently fail to overcome. Sensitivity limitations inherent in standard protocols, combined with amplification biases and overwhelming signal from abundant housekeeping RNAs, have created critical blind spots in transcriptome analysis [28]. Furthermore, the limited input material available from rare cell populations and single-cell analyses compounds these issues, demanding innovative approaches specifically designed to enhance detection capabilities for rare transcript species [3]. This technical whitepaper examines the current state-of-the-art methodologies for uncovering and characterizing this hidden dimension of the transcriptome, providing researchers with a comprehensive framework for advancing discovery in this rapidly evolving field.

Technical Challenges in Low-Abundance Transcript Detection

The journey to comprehensively catalog low-abundance transcripts is fraught with technical hurdles that must be systematically addressed through experimental design and analytical refinement.

Fundamental Detection Barriers

- Signal-to-Noise Ratio: In standard RNA-Seq workflows, ribosomal RNA (rRNA) constitutes approximately 80% of cellular RNA, meaning the vast majority of sequencing resources are consumed without generating informative data about non-ribosomal transcripts. This creates an inherent sensitivity limitation for detecting rare transcripts [29].

- Amplification Bias: PCR amplification, an essential step in library preparation, introduces substantial bias as amplification efficiency varies significantly between transcripts. This variability disproportionately affects low-abundance transcripts, whose representation may be either artificially suppressed or exaggerated through stochastic effects [3].

- Sample Quality Degradation: RNA integrity directly impacts detection capability, particularly for longer transcripts. The RNA Integrity Number (RIN) serves as a critical quality metric, with values greater than 7 generally required for high-quality sequencing. However, challenging sample types like blood often yield compromised RNA, further reducing sensitivity for low-abundance targets [29].
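
The signal-to-noise point about rRNA can be made tangible with a back-of-the-envelope calculation. The sketch below assumes that reads are drawn in proportion to RNA mass and that depletion leaves 5% rRNA in the library; both are illustrative assumptions rather than values from the cited work.

```python
def informative_reads(total_reads, rrna_fraction):
    """Reads expected to come from non-ribosomal RNA."""
    if not 0.0 <= rrna_fraction <= 1.0:
        raise ValueError("rrna_fraction must be between 0 and 1")
    return total_reads * (1.0 - rrna_fraction)

total = 50_000_000  # reads per sample

undepleted = informative_reads(total, rrna_fraction=0.80)  # ~80% rRNA, as stated above
depleted = informative_reads(total, rrna_fraction=0.05)    # assumed residual rRNA

print(f"undepleted: {undepleted:,.0f} informative reads")
print(f"depleted:   {depleted:,.0f} informative reads")
print(f"gain:       {depleted / undepleted:.2f}x")
```

Under these assumptions depletion yields several-fold more informative reads from the same run, which is the practical reason rRNA removal comes before any attempt to detect rare transcripts.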

Methodological Limitations

- Primer Efficiency Artifacts: Conventional isoform-specific qPCR requires distinct primer pairs that introduce amplification bias due to differences in primer efficiency. This presents a particular challenge for low-abundance transcripts where small absolute differences in detection threshold can dramatically alter biological interpretation [3].

- Throughput-Accuracy Tradeoffs: Different single-cell RNA-seq protocols present inherent tradeoffs. Full-length methods (Smart-Seq2, MATQ-Seq) offer superior sensitivity for low-abundance genes and better isoform characterization, while 3'-end counting methods (Drop-Seq, inDrop) enable higher throughput but may miss rare transcripts [27].

- Quantification Inaccuracy: According to MIQE guidelines, Cq values above 30-35 in RT-qPCR are considered unreliable due to poor reproducibility, creating a fundamental detection limit for many low-abundance transcripts using conventional approaches [3].

Table 1: Key Challenges in Low-Abundance Transcript Detection

| Challenge Category | Specific Limitation | Impact on Sensitivity |
|---|---|---|
| Sample Composition | rRNA dominance (80% of total RNA) | Reduces sequencing depth for non-rRNA targets |
| Amplification Effects | PCR stochasticity and bias | Distorts true abundance relationships |
| Technical Thresholds | RT-qPCR Cq > 30-35 limit | Precludes reliable quantification of rare transcripts |
| Protocol Selection | Throughput vs. sensitivity tradeoffs | Full-length methods more sensitive but lower throughput |

Advanced Methodologies for Enhanced Detection

Depletion and Enrichment Strategies

Strategic removal of abundant RNA species and targeted enrichment of low-abundance transcripts represent powerful approaches for enhancing detection sensitivity.

- rRNA Depletion Methods: Both magnetic bead-based precipitation and RNase H-mediated degradation methods effectively reduce ribosomal RNA content, dramatically improving signal-to-noise ratio for non-ribosomal transcripts. Bead-based methods typically offer greater enrichment but with higher variability, while RNase H approaches provide more modest but reproducible enrichment [29].

- Globin Depletion: In blood-derived samples, globin transcripts are a dominant, confounding species, much as rRNA is in total RNA. Depleting globin mRNA significantly enhances detection of low-abundance transcripts in hematological samples, although it precludes analysis of globin gene regulation itself [14] [29].

- Probe-Based Capture Enrichment: Targeted enrichment using custom-designed probes complementary to low-abundance transcripts of interest facilitates more efficient sequencing and significantly enhanced detection of RNA modification events like A-to-I editing. This approach directly addresses the fundamental limitation that sequencing depth for a given transcript correlates directly with expression level [28].

Library Preparation Innovations

- Unique Molecular Identifiers (UMIs): Incorporation of UMIs during cDNA synthesis enables accurate quantification by correcting for PCR amplification bias, allowing distinction between biological variation and technical artifacts. This approach is particularly valuable for quantifying low-abundance transcripts where amplification bias represents a major confounding factor [14] [27].

- Stranded Library Protocols: Strand-aware library preparation preserves transcript orientation information, which is critical for identifying antisense transcripts and non-coding RNAs that often exhibit low abundance. The use of dUTP incorporation during second-strand synthesis followed by uracil-DNA-glycosylase treatment effectively generates stranded libraries without significantly compromising sensitivity [29].

- Single-Cell RNA-Seq Adaptations: Droplet-based single-cell technologies (Drop-Seq, inDrop) combined with UMIs enable transcriptome analysis at individual cell resolution, inherently enhancing detection of low-abundance transcripts that may be specific to rare cell subpopulations [27].

Table 2: Comparison of Advanced Detection Methodologies

| Methodology | Mechanism | Advantages | Limitations |
|---|---|---|---|
| rRNA Depletion | Removal of ribosomal RNA | Increases sequencing depth for mRNA/lncRNA | Potential off-target effects; variable efficiency |
| Probe-Based Capture | Hybridization and enrichment of targets | Enables focused sequencing on transcripts of interest | Requires prior knowledge of target sequences |
| UMI Incorporation | Molecular barcoding of original molecules | Corrects for PCR amplification bias | Adds complexity and cost to library preparation |
| Long-Read Sequencing | Full-length transcript sequencing | Resolves isoform complexity without assembly | Higher error rates than short-read technologies |

The STALARD Method for Targeted Amplification

The STALARD (Selective Target Amplification for Low-Abundance RNA Detection) method represents a specialized approach designed specifically to overcome sensitivity limitations for known low-abundance transcripts. This rapid (<2 hour) targeted two-step RT-PCR method uses standard laboratory reagents to selectively amplify polyadenylated transcripts sharing a known 5'-end sequence [3].

The experimental workflow proceeds as follows:

- Primer Design: A gene-specific primer (GSP) is designed to match the 5'-end sequence of the target RNA (with thymine replacing uracil), with optimal Tm of 62°C and GC content of 40-60%.

- Reverse Transcription: First-strand cDNA synthesis is performed using an oligo(dT) primer tailed at its 5'-end with the GSP sequence.

- Targeted Amplification: Limited-cycle PCR (9-18 cycles) is performed using only the GSP, which anneals to both ends of the cDNA, specifically amplifying the target transcript without requiring a separate reverse primer.

- Quantification: Amplified products are purified and quantified using either qPCR or nanopore sequencing [3].
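
The primer design and reverse transcription steps above can be sketched as a few string operations. Everything named here is illustrative: the example 5'-end sequence is made up, the oligo(dT) length of 18 is an assumption, and the melting temperature uses a common rule-of-thumb formula rather than whatever calculator the STALARD authors used, so real designs should be checked with a nearest-neighbor tool.

```python
def rna_to_gsp(rna_5prime):
    """Gene-specific primer matching the RNA 5' end, with T replacing U."""
    return rna_5prime.upper().replace("U", "T")

def gc_content(seq):
    """Fraction of G and C bases in a DNA sequence."""
    seq = seq.upper()
    return (seq.count("G") + seq.count("C")) / len(seq)

def approx_tm(seq):
    """Rough Tm estimate in degrees C: 64.9 + 41 * (G + C - 16.4) / N.

    A basic approximation for oligos longer than about 13 nt; it ignores
    salt and primer concentration.
    """
    seq = seq.upper()
    gc = seq.count("G") + seq.count("C")
    return 64.9 + 41.0 * (gc - 16.4) / len(seq)

def tailed_oligo_dt(gsp, n_t=18):
    """RT primer: 5'-GSP followed by oligo(dT)-3' (dT length is an assumption)."""
    return gsp + "T" * n_t

# Hypothetical 5'-end sequence of a target RNA (not a real transcript).
gsp = rna_to_gsp("GGAUCCAUGGCUAGCUUGACC")
report = {
    "gsp": gsp,
    "gc_ok": 0.40 <= gc_content(gsp) <= 0.60,  # 40-60% GC window from the text
    "tm_estimate": round(approx_tm(gsp), 1),   # compare against the 62 degree C optimum
    "rt_primer": tailed_oligo_dt(gsp),
}
print(report)
```

Because the same GSP primes both ends of the cDNA in the amplification step, a candidate that misses the Tm or GC window would normally be lengthened or shifted along the known 5' end before ordering.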

When applied to Arabidopsis thaliana, STALARD successfully amplified the low-abundance VIN3 transcript to reliably quantifiable levels and enabled consistent quantification of the extremely low-abundance antisense transcript COOLAIR, resolving inconsistencies reported in previous studies [3].

STALARD Method Workflow

Experimental Design Considerations for Optimal Detection

Strategic Planning Framework

- Define Clear Biological Questions: Prior to initiating any RNA-Seq study, researchers must precisely define their biological questions, as this dictates all subsequent experimental design choices. A clearly articulated hypothesis helps design appropriate statistical analysis strategies and determines the required sequencing depth, replication, and analytical approaches [29].

- Select Appropriate RNA Biotype Targeting: Different RNA species require specialized approaches. mRNA sequencing typically employs poly-A selection, while non-coding RNAs often require ribosomal depletion. Small RNAs need specialized size selection, and non-polyadenylated transcripts demand specific ribosomal depletion protocols [29].

- Implement Robust Quality Control: RNA quality must be rigorously assessed through RIN measurement, 260/280 and 260/230 ratios, and visual inspection of electropherograms. High-quality RNA shows distinct 28S and 18S rRNA peaks in a 2:1 ratio, which is particularly critical for detecting low-abundance transcripts where degradation artifacts can completely obscure true signals [29].

Platform and Protocol Selection

The Long-read RNA-Seq Genome Annotation Assessment Project (LRGASP) consortium conducted a comprehensive evaluation of long-read approaches for transcriptome analysis, generating over 427 million long-read sequences from complementary DNA and direct RNA datasets [11]. Their findings provide critical guidance for platform selection:

- Sequence Length vs. Depth Tradeoff: Libraries with longer, more accurate sequences produce more accurate transcripts than those with increased read depth, whereas greater read depth improves quantification accuracy. This distinction helps researchers optimize resources based on their primary objective—isoform discovery versus expression quantification [11].

- Reference-Based Advantages: In well-annotated genomes, tools based on reference sequences demonstrate the best performance for transcript identification, though reference-free approaches remain valuable for discovering novel transcripts in less-characterized systems [11].

- Orthogonal Validation: Incorporating additional orthogonal data and replicate samples is strongly advised when aiming to detect rare and novel transcripts, as technical artifacts can mimic biological signals, particularly for low-abundance targets [11].

Table 3: Technical Recommendations for Experimental Design

| Experimental Factor | Recommendation | Rationale |
|---|---|---|
| Sequencing Depth | 50-100 million reads per sample | Enhances statistical power for low-abundance detection |
| Replication | Minimum 3 biological replicates | Enables robust differential expression analysis |
| RNA Quality | RIN >7, distinct 28S/18S peaks | Preserves full-length transcript integrity |
| rRNA Depletion | RNase H method for consistency | More reproducible than bead-based approaches |
| Library Type | Stranded protocols | Preserves orientation for non-coding RNA detection |
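
The sequencing depth recommendation can be related to detection probability with a simple Poisson sampling model. The sketch below treats TPM as the fraction of reads belonging to a transcript (ignoring transcript length, mappability, and library bias), and the 0.1 TPM target and 10-read threshold are assumptions chosen for illustration.

```python
import math

def expected_reads(depth, tpm):
    """Expected reads for a transcript, treating TPM as parts per million of reads."""
    return depth * tpm / 1e6

def prob_at_least(k, lam):
    """P(X >= k) for a Poisson-distributed read count with mean lam."""
    cdf_below_k = sum(math.exp(-lam) * lam ** i / math.factorial(i) for i in range(k))
    return 1.0 - cdf_below_k

def required_depth(min_expected_reads, tpm):
    """Depth at which the expected read count reaches min_expected_reads."""
    return min_expected_reads * 1e6 / tpm

tpm = 0.1       # assumed abundance of a rare transcript
min_reads = 10  # assumed threshold for usable quantification

for depth in (50_000_000, 100_000_000):
    lam = expected_reads(depth, tpm)
    p = prob_at_least(min_reads, lam)
    print(f"{depth:,} reads: expected {lam:.1f}, P(>= {min_reads} reads) = {p:.2f}")
```

Even this optimistic model suggests that the lower end of the recommended range leaves very rare transcripts with only a handful of reads, which is one reason replication and orthogonal validation are advised alongside depth.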

Computational and Analytical Approaches

Bioinformatics Strategies for Enhanced Sensitivity

The computational analysis of RNA-seq data requires specialized approaches to accurately identify and quantify low-abundance transcripts amidst background noise and technical artifacts.

- Unique Molecular Identifier (UMI) Processing: Dedicated computational methods are required to correctly process UMI-tagged reads, including accurate UMI extraction, error correction for sequencing errors in the UMI sequence, and deduplication to distinguish biological duplicates from PCR artifacts. These steps are particularly critical for low-abundance transcripts where few original molecules are present [14] [27].

- Single-Cell Data Analysis: Specialized computational tools are essential for addressing the high dimensionality, sparsity, and noise characteristic of single-cell RNA-seq data. These include imputation algorithms for missing values, batch effect correction methods that preserve biological variation, and dimensionality reduction techniques that can identify rare cell populations based on low-abundance marker transcripts [27].

- Long-Read Transcriptome Assembly: For novel transcript discovery, long-read sequencing technologies enable full-length transcript sequencing without assembly, but still require sophisticated algorithms for accurate identification of splice variants, transcription start and end sites, and differentiation between real transcripts and technical artifacts [11].
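
The UMI processing step described above (extraction, error correction, deduplication) can be illustrated with a minimal sketch that counts unique molecules per gene. The greedy merge used here, which folds any UMI within one mismatch of a more abundant UMI into it, is a simplification chosen for brevity and is not the algorithm of any particular tool; the function names, gene labels, and reads are hypothetical.

```python
from collections import Counter, defaultdict

def hamming(a, b):
    """Number of mismatching positions between two equal-length strings."""
    return sum(x != y for x, y in zip(a, b))

def count_molecules(reads):
    """Collapse (gene, umi) reads into unique-molecule counts per gene.

    UMIs within one mismatch of an already kept, more abundant UMI are
    treated as sequencing errors and merged (simplified greedy rule).
    """
    per_gene = defaultdict(Counter)
    for gene, umi in reads:
        per_gene[gene][umi] += 1

    molecules = {}
    for gene, umi_counts in per_gene.items():
        kept = []
        # Visit UMIs from most to least abundant; ties broken alphabetically.
        for umi, _ in sorted(umi_counts.items(), key=lambda kv: (-kv[1], kv[0])):
            is_error = any(
                len(umi) == len(k) and hamming(umi, k) <= 1 for k in kept
            )
            if not is_error:
                kept.append(umi)
        molecules[gene] = len(kept)
    return molecules

# Hypothetical reads: GENE_A has two real molecules plus one erroneous UMI.
reads = (
    [("GENE_A", "AACG")] * 5
    + [("GENE_A", "AACT")]      # one mismatch from AACG, likely an error
    + [("GENE_A", "GGTA")] * 2
    + [("GENE_B", "CCCC")] * 3  # PCR duplicates of a single molecule
)
print(count_molecules(reads))
```

For a low-abundance transcript the difference matters: eight reads for GENE_A collapse to two molecules here, and an uncorrected UMI error would have inflated that count by half.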

Visualization Principles for Complex Data

Effective visualization of transcriptome data requires adherence to established design principles that maximize information transfer while minimizing distortion.

- Maximize Data-Ink Ratio: A concept introduced by Edward Tufte, the data-ink ratio emphasizes maximizing the proportion of ink (or pixels) dedicated to presenting actual data rather than non-informative elements. Removing chartjunk such as unnecessary gridlines, backgrounds, and 3D effects dramatically improves clarity [30] [31].

- Direct Labeling and Meaningful Baselines: Label elements directly to avoid indirect look-up through legends, and ensure axes start at meaningful baselines (bar charts should typically start at zero) to prevent visual distortion of quantitative relationships [30].

- Color Selection for Scientific Communication: Choose color palettes appropriate to data type: qualitative palettes for categorical data, sequential palettes for ordered numeric data, and diverging palettes for data that diverges from a central value. Critically, ensure color choices remain distinguishable for readers with color vision deficiencies, which affect approximately 8% of men worldwide [30] [32].

[Figure: Computational Analysis Workflow]

Research Reagent Solutions for Low-Abundance Transcript Detection

Table 4: Essential Research Reagents and Their Applications

| Reagent Category | Specific Examples | Function in Low-Abundance Detection |
|---|---|---|
| Depletion Reagents | rRNA depletion kits (RNase H-based), Globin depletion probes | Remove abundant RNA species to increase sequencing depth for rare transcripts |
| Library Preparation Kits | Stranded cDNA synthesis kits, UMI-containing adapters | Preserve strand information and enable amplification bias correction |
| Enzymes | SeqAmp DNA polymerase, HiScript IV reverse transcriptase | Ensure high efficiency in cDNA synthesis and targeted amplification |
| Target Capture Reagents | Custom DNA probes, GSoligo(dT) primers | Specifically enrich for transcripts of interest to enhance detection |
| Quality Assessment Tools | Bioanalyzer RNA kits, AMPure XP beads | Assess RNA integrity and purify amplification products |

The comprehensive cataloging of novel low-abundance transcripts represents both a formidable technical challenge and a tremendous opportunity for advancing biological understanding and therapeutic development. As the methodologies detailed in this whitepaper demonstrate, successful detection requires an integrated approach combining strategic sample preparation, specialized enrichment protocols, sophisticated computational analysis, and appropriate visualization techniques. The field is rapidly evolving toward multi-omics integration, where total RNA sequencing data become considerably more informative when analyzed alongside genomic variants, epigenetic modifications, protein expression patterns, and metabolic profiles [14].

Looking ahead, several emerging trends promise to further enhance our ability to explore the hidden dimensions of the transcriptome. Long-read sequencing technologies continue to improve in accuracy and throughput, enabling more comprehensive isoform characterization without assembly artifacts [11]. Spatial transcriptomics approaches are beginning to map low-abundance transcripts within their tissue context, revealing microenvironment-specific expression patterns that bulk sequencing approaches inevitably miss. Microfluidics and single-cell technologies are advancing toward true single-molecule sensitivity, potentially eliminating the final barriers to detecting even the rarest transcriptional events [27]. As these technologies mature and converge, we anticipate a new era of transcriptome analysis where the complete regulatory landscape becomes visible, unlocking unprecedented opportunities for understanding disease mechanisms and developing targeted interventions.

Cutting-Edge Technologies for Sensitive Detection and Quantification

The advent of ultra-deep RNA sequencing, which pushes sequencing depths to approximately one billion reads, represents a paradigm shift in transcriptomic research and clinical diagnostics. This approach addresses a fundamental limitation of standard RNA-seq protocols, which typically operate at 50-150 million reads and frequently fail to detect low-abundance transcripts and rare splicing events critical for accurate biological interpretation and clinical diagnosis [33] [34]. The core thesis of this whitepaper is that by dramatically increasing sequencing depth, researchers can achieve unprecedented sensitivity to uncover molecular features previously obscured by technical limitations, thereby advancing both fundamental research and precision medicine.

In Mendelian disorder diagnostics, for example, variants of uncertain significance (VUSs) often affect gene expression and splicing in ways that remain cryptic at conventional sequencing depths [33]. Research from Baylor College of Medicine demonstrates that pathogenic splicing abnormalities undetectable at 50 million reads become readily apparent at 200 million reads and are further elucidated at 1 billion reads [34] [35]. This whitepaper provides a comprehensive technical examination of ultra-deep RNA sequencing methodologies, their experimental parameters, and their transformative applications for researchers and drug development professionals focused on detecting the most elusive elements of the transcriptome.

Quantitative Benefits of Ultra-Deep Sequencing

Depth-Dependent Gains in Detection Sensitivity

The relationship between sequencing depth and transcript detection follows a predictable trajectory. Standard-depth sequencing (∼50 million reads) already captures the majority of highly expressed transcripts, so additional depth yields diminishing returns for high-abundance genes while delivering the largest gains for low-abundance targets [33]. At approximately 1 billion reads, experiments achieve near-saturation for gene-level detection, although isoform-level coverage continues to benefit from even deeper sequencing [36].

Table 1: Impact of Sequencing Depth on Transcript Detection Sensitivity

| Sequencing Depth | Gene Detection Capability | Splicing Event Detection | Clinical Utility for VUS |
|---|---|---|---|
| 50 million reads (Standard) | Saturated for high-expression genes | Limited to common splicing events | Pathogenic abnormalities often missed |
| 200 million reads (High) | Improved low-expression gene detection | Enhanced rare splicing discovery | Emerging detection of pathogenic signals |
| 1 billion reads (Ultra-deep) | Near-saturation for most genes | Comprehensive splicing landscape | Clear resolution of previously cryptic VUS |

The diagnostic implications of these depth-dependent sensitivity gains are profound. In two clinical cases described by Zhao et al., pathogenic splicing abnormalities were completely undetectable at 50 million reads but emerged clearly at 200 million reads and became even more pronounced at 1 billion reads [33] [36]. This demonstrates that for critical applications in genetic diagnostics and biomarker discovery, ultra-deep sequencing can reveal pathogenic mechanisms that would otherwise remain undetected.
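A simple Poisson sampling model gives some intuition for why a signal can be invisible at 50 million reads yet clear at 1 billion. The sketch below is an idealized illustration, not an analysis from the cited studies: the transcript fraction (`1e-8` of all sequenced reads) and the five-read detection threshold are assumptions chosen for the example, and real data are typically more variable than a Poisson model implies.

```python
import math


def detection_probability(depth, fraction, min_reads=5):
    """Probability of observing at least min_reads reads from one feature.

    Assumes the read count is Poisson-distributed with mean depth * fraction,
    where fraction is the share of all sequenced reads that derive from the
    feature (an idealized, assumption-laden model).
    """
    lam = depth * fraction
    # P(X < min_reads) = sum over k < min_reads of exp(-lam) * lam^k / k!
    p_below = sum(
        math.exp(-lam) * lam**k / math.factorial(k) for k in range(min_reads)
    )
    return 1.0 - p_below


# Hypothetical rare junction contributing 1 in 100 million reads.
for depth in (50_000_000, 200_000_000, 1_000_000_000):
    p = detection_probability(depth, fraction=1e-8)
    print(f"{depth:>13,d} reads -> P(>=5 supporting reads) = {p:.4f}")
```

Under these assumptions the probability rises from roughly 0.0002 at 50 million reads to about 0.05 at 200 million and about 0.97 at 1 billion, mirroring the qualitative pattern in Table 1.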

The MRSD-deep Resource for Experimental Design

To guide researchers in selecting appropriate sequencing depths for their specific applications, the Baylor team developed MRSD-deep, a resource that estimates the Minimum Required Sequencing Depth to achieve desired coverage thresholds [33] [35]. This tool provides both gene- and junction-level guidelines, enabling laboratories to optimize their sequencing investments based on their specific targets.

For genes with low expression but high clinical relevance, such as those expressed at minimal levels in clinically accessible tissues like blood, MRSD-deep can calculate the depth necessary to achieve sufficient coverage for confident variant interpretation [34]. This is particularly valuable for neurodevelopmental and neurological disorder genes that may be weakly expressed in readily available tissues but require comprehensive characterization for accurate diagnosis.
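The idea of a minimum required depth can be sketched with a back-of-the-envelope extrapolation from pilot data. This is not the MRSD-deep algorithm, whose internals are not described here; it is a hypothetical linear scaling that assumes supporting reads grow in proportion to total depth. The function names, junction labels, and the 20-read target are all illustrative assumptions.

```python
import math


def required_depth(pilot_depth, pilot_reads, target_reads):
    """Extrapolate the total depth needed to reach target_reads on one feature.

    Assumes reads on the feature scale linearly with total depth, which
    ignores duplicate saturation and sample-to-sample variability.
    """
    if pilot_reads <= 0:
        raise ValueError("feature not observed in pilot; cannot extrapolate")
    return math.ceil(pilot_depth * target_reads / pilot_reads)


def plan_depth(pilot_depth, pilot_counts, target_reads):
    """Return the depth that satisfies the most demanding feature."""
    return max(
        required_depth(pilot_depth, n, target_reads)
        for n in pilot_counts.values()
    )


# Hypothetical pilot run: 50 million reads, supporting reads per junction.
pilot = {"junction_1": 40, "junction_2": 8, "junction_3": 2}
print(plan_depth(50_000_000, pilot, target_reads=20))  # prints 500000000
```

The weakest feature dominates the plan: a junction seen only twice in the pilot pushes the requirement to 500 million reads, which is why per-gene and per-junction guidance is more useful than a single global depth recommendation.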

Experimental Framework for Ultra-Deep RNA Sequencing

Core Methodology and Platform Selection

The foundational study validating ultra-deep RNA sequencing utilized the Ultima Genomics platform to achieve depths of up to ∼1 billion unique reads across four clinically accessible tissues: blood, fibroblast, lymphoblastoid cell lines (LCLs), and induced pluripotent stem cells (iPSCs) [33] [36]. The experimental workflow encompasses several critical phases:

- Sample Preparation and Quality Control: RNA extraction followed by rigorous quality assessment, including RNA Integrity Number (RIN) evaluation. For FFPE samples, the DV200 score (percentage of RNA fragments >200 nucleotides) serves as a critical quality metric [37].

- Library Construction: Either rRNA depletion or poly-A selection. The Baylor team used rRNA removal approaches to capture both coding and non-coding RNA species [34] [38].

- Ultra-Deep Sequencing: Implementation on the Ultima platform with quality control measures including PhiX spike-in controls (typically at 5%) to monitor sequencing performance [37].

- Bioinformatic Processing: A comprehensive pipeline including quality control, alignment, and quantification, as detailed in the Bioinformatics Processing Pipeline section below.
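The DV200 metric mentioned in the quality control phase is a simple ratio, and a short sketch makes the definition concrete. The input format here, a list of `(fragment_size_nt, signal)` pairs exported from an electropherogram, is a hypothetical simplification; instrument software may restrict the size range or apply baseline correction before computing the value.

```python
def dv200(trace):
    """Percentage of total signal from RNA fragments longer than 200 nt.

    trace: iterable of (fragment_size_nt, signal) pairs (hypothetical
    export format; real instrument output will differ).
    """
    points = list(trace)
    total = sum(signal for _size, signal in points)
    if total <= 0:
        raise ValueError("trace contains no signal")
    above = sum(signal for size, signal in points if size > 200)
    return 100.0 * above / total


# Toy trace: 70 of 100 signal units come from fragments above 200 nt.
toy_trace = [(100, 10.0), (150, 20.0), (300, 40.0), (1000, 30.0)]
print(dv200(toy_trace))  # prints 70.0
```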

The Scientist's Toolkit: Essential Research Reagents and Platforms

Successful implementation of ultra-deep RNA sequencing requires careful selection of reagents, platforms, and computational tools. The following table catalogs essential components validated in recent studies.

Table 2: Essential Research Reagents and Platforms for Ultra-Deep RNA Sequencing

| Category | Specific Product/Platform | Function & Application |
|---|---|---|
| Sequencing Platform | Ultima Genomics | Enables cost-effective sequencing up to 1 billion reads [33] |
| Library Prep Kit | Stranded Total RNA Prep with Ribo-Zero Plus (Illumina) | rRNA depletion for comprehensive transcriptome capture [37] |
| RNA Quality Control | Tapestation High Sensitivity RNA Assay (Agilent) | Assesses RNA integrity for sequencing suitability [37] |
| RNA Quantification | Qubit HS RNA Assay (Thermo Fisher) | Accurately measures RNA concentration [37] |
| Alignment Software | HISAT2, STAR | Splice-aware alignment to reference genome [39] [40] |
| Quantification Tool | featureCounts, RSEM | Generates gene and isoform expression counts [39] [37] |
| Splicing Analysis | MRSD-deep | Determines minimum sequencing depth for specific targets [33] [35] |

Bioinformatics Processing Pipeline

The computational workflow for ultra-deep RNA sequencing data builds upon standard RNA-seq pipelines but requires enhanced processing capabilities to manage the substantial data volumes. A representative pipeline integrates the following components:

- Quality Control and Trimming: FastQC for quality assessment and Trimmomatic for adapter removal and quality trimming [40].

- Alignment: HISAT2 or STAR with splice-aware alignment to GRCh38 reference genome [39] [40].

- Quantification: featureCounts (from Subread package) or RSEM for generating raw count matrices [39] [37].

- Normalization: FPKM/RPKM or TPM for cross-sample comparisons, with caution regarding limitations for quantitative comparisons across different sample types [39].

- Specialized Applications: For allele-specific expression analysis, the ASET pipeline provides an end-to-end solution for quantification and visualization [41].
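The normalization step in the list above can be made concrete with a short sketch of the standard FPKM and TPM formulas applied to a raw count matrix for one sample. Gene names, counts, and lengths are invented for illustration, and gene length is used directly rather than an effective length, which is a simplifying assumption.

```python
def fpkm(counts, lengths):
    """Fragments per kilobase of transcript per million mapped fragments."""
    total = sum(counts.values())
    if total <= 0:
        raise ValueError("no mapped fragments")
    # count / (length in kb) / (total in millions) = count * 1e9 / (length * total)
    return {g: counts[g] * 1e9 / (lengths[g] * total) for g in counts}


def tpm(counts, lengths):
    """Transcripts per million: length-normalize first, then scale to 1e6."""
    rates = {g: counts[g] / lengths[g] for g in counts}
    scale = sum(rates.values())
    if scale <= 0:
        raise ValueError("no mapped fragments")
    return {g: rate * 1e6 / scale for g, rate in rates.items()}


# Hypothetical single-sample counts and gene lengths (in nucleotides).
counts = {"GENE_A": 1000, "GENE_B": 1000, "GENE_C": 10}
lengths = {"GENE_A": 1000, "GENE_B": 2000, "GENE_C": 500}

for gene, value in tpm(counts, lengths).items():
    print(f"{gene}: TPM = {value:.1f}")
```

Because TPM values always sum to one million within a sample, they describe relative composition rather than absolute abundance. That property is one reason for the caution noted above: a large shift in a few highly expressed genes changes the TPM of every other gene, including low-abundance ones, even if their true expression is unchanged.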

The NCBI also offers precomputed RNA-seq count data for human studies, generated through a standardized pipeline that aligns reads to GRCh38 using HISAT2 and quantifies expression with featureCounts [39]. However, researchers should note that these counts may not match publication results if different processing approaches were used originally.

Diagnostic Applications: Resolving Variants of Uncertain Significance

Clinical Workflow for Mendelian Disorders

Ultra-deep RNA sequencing demonstrates particular utility in resolving VUSs in Mendelian disorders, where it illuminates the functional consequences of non-coding and splice-region variants. The clinical application follows a structured pathway:

Expanding Tissue Utility for Diagnostic Applications

A significant advantage of ultra-deep sequencing is its ability to expand the diagnostic utility of clinically accessible tissues. Genes causing developmental and neurological disorders may not be strongly expressed in blood and skin cells, which are commonly used for clinical testing [34]. As Dr. Pengfei Liu of Baylor College of Medicine notes, "If you can sequence blood samples to extremely high depths, you can capture those genes traditionally thought to be tissue specific" [34].

This capability is further enhanced through the development of expanded splicing-variation references built from deep RNA-seq data. By applying ultra-deep sequencing to fibroblasts, the Baylor team created a comprehensive resource that successfully identifies low-abundance splicing events missed by standard-depth data [33] [36]. This resource enables more accurate interpretation of splicing anomalies in patient samples compared against a more comprehensive baseline of natural splicing variation.

Future Directions and Implementation Considerations

Clinical Translation and Validation

The transition of ultra-deep RNA sequencing from research to clinical applications requires careful validation and standardization. The Baylor team is pursuing clinical validation for ultra-deep RNA-seq and planning for a clinical test based on their findings [34]. This process involves establishing standardized protocols, depth requirements for specific clinical applications, and rigorous quality control metrics.