Decoding Cell Fate: A Comprehensive Guide to Single-Cell RNA Sequencing Developmental Trajectory Analysis

Abstract

This article provides a comprehensive overview of how single-cell RNA sequencing (scRNA-seq) is revolutionizing our understanding of developmental trajectories and cellular potency. Aimed at researchers, scientists, and drug development professionals, it covers foundational concepts, from defining cell potency states (totipotent to differentiated) to the principles of trajectory inference. It delves into cutting-edge computational methods, including deep learning frameworks like CytoTRACE 2, and details diverse applications in fields like regenerative medicine, oncology, and toxicology. The content also addresses critical technical and analytical challenges—such as batch effects, dropout events, and data interpretation—offering best practices for robust analysis. Finally, it benchmarks current tools and validation strategies, synthesizing how these advancements are accelerating drug discovery and enabling a more precise mapping of cellular life cycles.

Mapping Cellular Lifecycles: Core Concepts in Developmental Potency and Trajectory Analysis

Within the framework of single-cell RNA sequencing (scRNA-seq) developmental trajectory research, delineating the precise hierarchy of cell potency is paramount. Cell potency describes the capacity of a single cell to differentiate into other cell types, forming a continuum from the totipotent zygote, capable of generating an entire organism, to terminally differentiated cells with specialized functions [1]. Understanding this hierarchy is fundamental to developmental biology, regenerative medicine, and oncology.

Modern scRNA-seq technologies have revolutionized this field by allowing researchers to move beyond population-level averages and capture gene expression profiles at the single-cell level. This enables the dissection of cellular heterogeneity within seemingly uniform populations and the reconstruction of developmental trajectories as cells transition from potent to restricted states [2] [3]. Computational methods like CytoTRACE 2 have been developed specifically to predict absolute developmental potential from scRNA-seq data, providing a quantitative framework for ordering cells along the potency continuum [1]. This Application Note details the core concepts of cell potency and provides standardized protocols for its investigation using scRNA-seq, framed within the context of developmental trajectory research.

The Potency Spectrum: Definitions and Key Transitions

The hierarchy of cell potency is traditionally categorized into distinct stages based on the range of cell types a cell can produce. The table below summarizes the defining characteristics of each stage.

Table 1: The Hierarchy of Cell Potency

| Potency Stage | Developmental Potential | Key Molecular Hallmarks (Examples) | Representative In Vivo Cell Type |
|---|---|---|---|
| Totipotent | Can generate all embryonic and extra-embryonic (placental) tissues, forming a complete organism. | POU5F1 (OCT4), NANOG (low/transient), specific isoforms. | Zygote, early blastomere. |
| Pluripotent | Can generate all cell types of the three embryonic germ layers (ectoderm, mesoderm, endoderm). | POU5F1 (OCT4), NANOG, SOX2 [1]. | Inner Cell Mass (ICM) of the blastocyst, Embryonic Stem Cells (ESCs). |
| Multipotent | Can generate multiple cell types within a specific lineage or organ system. | Lineage-specific transcription factors (e.g., SOX10 for neural crest); Cholesterol metabolism genes (e.g., Fads1, Fads2) [1]. | Hematopoietic Stem Cells (HSCs), Neural Crest Cells (NCCs) [4]. |
| Oligopotent | Can differentiate into a few, closely related cell types. | Further restricted expression of lineage-specific factors. | Myeloid or Lymphoid Progenitors. |
| Unipotent | Can produce only one cell type, but possesses self-renewal capability. | Terminal lineage markers begin to emerge. | Satellite cells in muscle. |
| Differentiated | Fully specialized, functional cell with no further developmental potential. | High expression of tissue-specific functional genes (e.g., hemoglobin in erythrocytes). | Neuron, adipocyte, osteocyte. |

A critical application of scRNA-seq in potency research is trajectory inference. Trajectory inference algorithms use the high-dimensional gene expression data from single cells to reconstruct their progression through biological processes such as differentiation, ordering cells from least to most differentiated and modeling the branching points where cell fates diverge [3].

Computational Analysis of Potency from scRNA-Seq Data

A primary method for assessing cell potency from scRNA-seq data is the computational tool CytoTRACE 2. This is an interpretable deep learning framework designed to predict the absolute developmental potential of individual cells.

Protocol: Predicting Developmental Potential with CytoTRACE 2

Principle: CytoTRACE 2 uses a deep learning model trained on an extensive atlas of human and mouse scRNA-seq datasets with experimentally validated potency levels. It employs a Gene Set Binary Network (GSBN) architecture to identify highly discriminative gene sets that define each potency category, providing both a discrete potency category prediction and a continuous potency score ranging from 1 (totipotent) to 0 (differentiated) [1].
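To make the relationship between the continuous score and the discrete categories concrete, the sketch below bins a score in [0, 1] into the six potency stages of Table 1. The equal-width bins and the names `POTENCY_ORDER` and `potency_category` are assumptions made for illustration; they are not the CytoTRACE 2 API, and the tool's own documentation should be consulted for how it actually assigns categories.

```python
# Illustrative only: equal-width bins are an assumption, not stated in this article.
POTENCY_ORDER = [
    "Differentiated", "Unipotent", "Oligopotent",
    "Multipotent", "Pluripotent", "Totipotent",
]


def potency_category(score: float) -> str:
    """Map a continuous potency score in [0, 1] to one of six ordered categories."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("potency score must lie in [0, 1]")
    n_bins = len(POTENCY_ORDER)
    # min() keeps a score of exactly 1.0 inside the top (totipotent) bin.
    index = min(int(score * n_bins), n_bins - 1)
    return POTENCY_ORDER[index]


# Made-up example scores for three hypothetical cells.
example_scores = {"zygote_like": 0.97, "progenitor_like": 0.55, "neuron_like": 0.04}
categories = {name: potency_category(s) for name, s in example_scores.items()}
```

Keeping the continuous score alongside the category is useful in practice, because cells near a bin boundary differ far less than their labels suggest.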

Experimental Workflow:

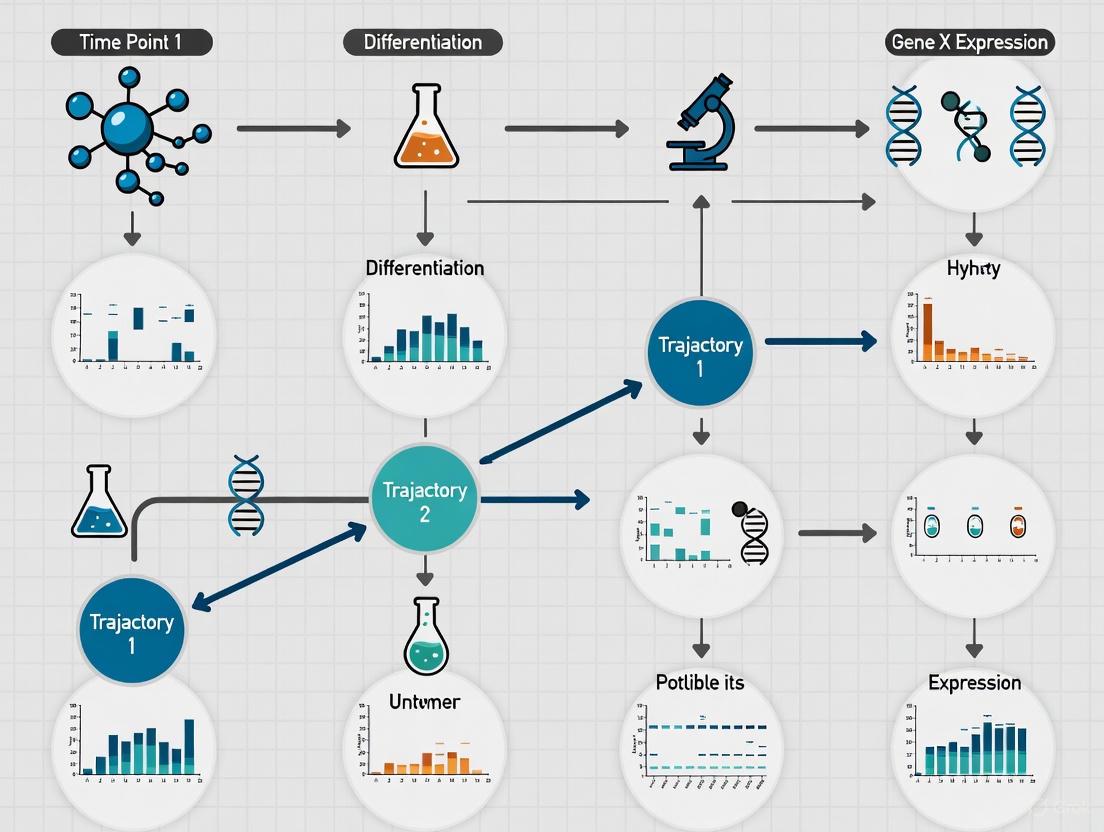

The following diagram illustrates the key stages of a developmental potency analysis using a computational pipeline like CytoTRACE 2.

Diagram 1: Computational Analysis Workflow for Cell Potency

Step-by-Step Procedure:

- Input Data Preparation: Begin with a cell-by-gene count matrix, which is the standard output from processing raw scRNA-seq data (e.g., from Cell Ranger for 10x Genomics data). The data can be in the form of a Seurat (R) or AnnData (Python) object [5].

- Quality Control (QC) and Normalization:

  - Perform rigorous QC to remove low-quality cells. Common metrics include:

    - Excluding cells with an abnormally high number of detected genes (potential doublets).

    - Excluding cells with a high percentage of mitochondrial reads (indicative of cell stress or damage).

  - Normalize the data to account for differences in sequencing depth between cells using methods like SCTransform (Seurat) or log-normalization [5] [2].

- Dimensionality Reduction and Clustering:

  - Perform linear dimensionality reduction using Principal Component Analysis (PCA).

  - Cluster cells based on their gene expression profiles using graph-based methods (e.g., Louvain, Leiden algorithm) to identify putative cell populations [5].

  - Visualize the clusters in two dimensions using non-linear methods like UMAP or t-SNE (see Diagram 1).

- Running CytoTRACE 2:

  - Install CytoTRACE 2 according to the official documentation (https://cytotrace2.stanford.edu).

  - Input the normalized count matrix into the CytoTRACE 2 algorithm. The model will output a potency score for every cell and can assign a broad potency category (e.g., pluripotent, multipotent) [1].

- Downstream Analysis and Interpretation:

  - Visualization: Overlay the continuous CytoTRACE 2 scores onto the UMAP/t-SNE plot to visualize how developmental potential is distributed across clusters.

  - Trajectory Inference: Use the potency scores to guide or validate trajectory inference with pseudotime analysis tools (e.g., Monocle 3, PAGA). Cells with higher potency scores should be positioned at the start of inferred differentiation trajectories [1] [3].

  - Marker Gene Discovery: Leverage the interpretability of the GSBN to extract the top genes associated with each potency state (e.g., multipotency) for further biological validation [1].
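The expectation that high-potency cells sit at the start of a trajectory can be checked numerically. The sketch below computes a Spearman rank correlation between per-cell potency scores and pseudotime values; a strongly negative value is consistent with potency falling as pseudotime advances. The input lists are made-up numbers, and the helper names `average_ranks` and `spearman` are hypothetical; in a real analysis both vectors would come from the tools named above.

```python
from statistics import mean


def average_ranks(values):
    """Return 1-based ranks, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1  # average of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman correlation: Pearson correlation computed on the ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = mean(rx), mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / (var_x * var_y) ** 0.5


# Toy values for six hypothetical cells (not real data).
potency = [0.92, 0.85, 0.60, 0.41, 0.20, 0.05]
pseudotime = [0.0, 0.1, 0.35, 0.5, 0.8, 1.0]
rho = spearman(potency, pseudotime)  # perfectly inverse ordering in this toy case
```

A weak or positive correlation would suggest that the chosen root, or the trajectory itself, deserves a second look before any biological interpretation.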

The Scientist's Toolkit: Key Reagents and Tools for scRNA-seq Potency Analysis

Table 2: Essential Research Reagent Solutions for scRNA-seq Developmental Trajectory Research

| Item/Category | Function/Purpose | Specific Examples |
|---|---|---|
| Single-Cell Isolation | To physically separate individual cells for sequencing. | FACS (Fluorescence-Activated Cell Sorting) [2], Microfluidics (e.g., 10x Genomics Controller) [5] [2]. |
| scRNA-seq Library Kit | To generate sequencing libraries from single cells. | 10x Genomics Chromium Single Cell 3' Kit (3'-end counting, high-throughput) [2], Smart-seq2 (Full-length transcript, in-depth coverage) [2]. |
| Unique Molecular Identifiers (UMIs) | Molecular barcodes that label individual mRNA transcripts to enable accurate quantification and reduce amplification bias [5]. | Incorporated in droplet-based protocols like 10x Genomics and inDrops. |
| Bioinformatics Software | For computational analysis of scRNA-seq data. | Seurat (R package) [5], Scanpy (Python package) [5], Cell Ranger (10x Genomics pipeline) [5]. |
| Trajectory Inference Tools | To computationally order cells along a differentiation trajectory. | CytoTRACE 2 (Potency prediction) [1], Monocle 3 (Pseudotime analysis) [3], PAGA (Mapping fate decisions). |

Experimental Validation of Developmental Potential

Computational predictions of potency require functional validation. A key model system for studying multipotency in vertebrates is the neural crest cell (NCC).

Protocol: Functional Validation of Neural Crest Cell Multipotency

Principle: NCCs are a highly multipotent embryonic cell population that gives rise to diverse derivatives, including neurons, glia, melanocytes, and craniofacial cartilage and bone [6] [7] [4]. This protocol outlines how to isolate and differentiate NCCs to validate their multipotent potential, a process that can be monitored using scRNA-seq.

Experimental Workflow:

The pathway from pluripotent stem cells to differentiated neural crest derivatives involves precise signaling and transcriptional changes, as shown in the following diagram.

Diagram 2: Key Pathway in Trunk Neural Crest Formation

Step-by-Step Procedure:

In Vitro Differentiation of Human Pluripotent Stem Cells (hPSCs) to NCCs:

- Principle: Guide hPSCs towards a neural crest fate by recapitulating developmental signals.

- Method:

  - Culture hPSCs in defined media.

  - To generate trunk NCCs, first induce neuromesodermal progenitors (NMPs) by activating WNT and FGF signaling pathways. These TBXT (Brachyury)-positive NMPs are the precursors to trunk NCCs [7].

  - Subsequent inhibition of BMP signaling and continued WNT activation can promote the specification of NCCs from the neural plate border [7].

  - Monitor the emergence of NCCs by assessing the expression of key markers like SOX10, TFAP2B, and P75NTR via flow cytometry or immunocytochemistry.

Multilineage Differentiation Assay:

- Principle: Functionally test the multipotency of the derived NCCs by exposing them to conditions that promote differentiation into specific lineages.

- Method:

  - Adipogenic Differentiation: Culture NCCs in media containing insulin, dexamethasone, and indomethacin. Confirm differentiation with Oil Red O staining of lipid droplets.

  - Chondrogenic Differentiation: Pellet culture in media containing TGF-β3. Confirm differentiation with Alcian Blue staining of sulfated glycosaminoglycans.

  - Osteogenic Differentiation: Culture in media containing ascorbic acid, β-glycerophosphate, and dexamethasone. Confirm differentiation with Alizarin Red S staining of calcium deposits [4].

  - Neuronal Differentiation: Culture in media containing BDNF, GDNF, and NGF. Confirm by immunostaining for neuronal markers like β-III-Tubulin (TUJ1).

scRNA-seq for Validation and Characterization:

- Principle: Use scRNA-seq to molecularly characterize the hPSC-derived NCC population and their differentiation products.

- Method:

  - Perform scRNA-seq on the derived NCCs and cells from each differentiation assay.

  - Analysis:

    - Confirm that the putative NCC cluster expresses high levels of core NCC signature genes (e.g., SOX9, SNAI2, TWIST1) and shows high CytoTRACE 2 scores, indicative of multipotency [1] [4].

    - After applying trajectory inference to the combined dataset, verify that the undifferentiated NCCs are positioned at the start of branching trajectories that lead to the adipogenic, chondrogenic, and osteogenic clusters.

    - Identify the specific gene expression programs activated in each successful differentiation lineage.

The integration of sophisticated scRNA-seq technologies with robust computational frameworks like CytoTRACE 2 provides a powerful strategy for systematically defining the hierarchy of cell potency. The protocols outlined herein, covering computational prediction of developmental potential and experimental validation using model systems like neural crest cells, offer a structured approach for researchers aiming to map differentiation landscapes. This is critical for advancing foundational knowledge in developmental biology and for translating insights into regenerative medicine strategies and therapeutic development for cancer and congenital diseases.

Single-cell RNA sequencing (scRNA-seq) has revolutionized biological research by enabling the transcriptomic profiling of individual cells, revealing unprecedented levels of cellular heterogeneity within seemingly homogeneous populations [8] [9]. While conventional bulk RNA sequencing averages gene expression across thousands to millions of cells, thereby masking cell-to-cell variations, scRNA-seq exposes the remarkable diversity inherent in biological systems [10] [9]. This capability is particularly transformative for developmental biology, where understanding the continuum of cellular transitions is fundamental to deciphering mechanisms of differentiation, lineage commitment, and tissue morphogenesis.

A powerful application of scRNA-seq lies in its ability to reconstruct developmental trajectories from static snapshot data, that is, from samples collected at a single time point [9]. This approach computationally infers dynamic processes from the transcriptomic relationships between individual cells captured in a single experiment. Each cell represents a point along a continuum of biological processes, and by analyzing the transcriptomic similarities and differences among these cells, researchers can reconstruct the sequence of transcriptional changes that define developmental pathways [11] [12]. This methodology has been successfully applied to diverse biological contexts, including embryonic development, cancer progression, immune cell differentiation, and cellular reprogramming, providing insights into the regulatory networks that govern cell fate decisions [10] [9].

Fundamental Principles of Trajectory Inference

The core premise underlying developmental trajectory reconstruction is that the transcriptomic state of a cell reflects its position along a biological continuum. When scRNA-seq is performed on a population of cells undergoing a dynamic process, the resulting data contains cells at various stages of transition. Computational methods can then order these cells along pseudotemporal trajectories based on transcriptomic similarity, effectively reconstructing the progression of gene expression changes without the need for physical time-series experiments [11].

Several key principles enable this reconstruction:

- Transcriptomic continuity: Cells undergoing continuous biological processes form connected manifolds in high-dimensional gene expression space.

- Branching decisions: Developmental bifurcations appear as diverging paths in the reduced-dimensional representation of scRNA-seq data.

- Underlying regulatory networks: The progression along trajectories is driven by coordinated changes in transcription factor networks, which can be inferred from the data [11].

These principles allow researchers to move beyond simple cell type classification to understand the processes by which cells transition between states, identify key decision points in differentiation pathways, and discover genes that drive these transitions.

Experimental Workflow for Developmental Trajectory Analysis

The following diagram illustrates the comprehensive workflow for scRNA-seq-based developmental trajectory reconstruction, from sample preparation through biological interpretation:

Sample Preparation and Single-Cell Isolation

The initial critical step involves creating high-quality single-cell suspensions from developing tissues or experimental models. The choice of dissociation method significantly impacts data quality, as enzymatic or mechanical stress can induce artificial transcriptional responses that confound biological interpretation [8]. For tissues particularly sensitive to dissociation artifacts (e.g., neuronal tissues), single-nucleus RNA sequencing (snRNA-seq) provides a valuable alternative, as nuclei are more resilient to isolation procedures [8] [12]. Experimental evidence indicates that maintaining tissues at 4°C during dissociation, rather than 37°C, minimizes stress-induced transcriptional changes [8].

Multiple approaches exist for single-cell isolation, each with distinct advantages:

- Droplet-based systems (10x Genomics, ddSEQ, InDrop) use microfluidics to encapsulate individual cells in oil droplets with barcoded beads, enabling high-throughput processing of thousands to millions of cells [10] [8].

- Microwell platforms (Seq-Well) employ nanoliter-scale wells to isolate cells, offering a portable and cost-effective alternative [12].

- Laser capture microdissection allows precise isolation of specific cells based on spatial location, though with lower throughput [8].

- Fluorescence-activated cell sorting (FACS) enables isolation based on specific surface markers or fluorescent reporters, facilitating targeted analysis of predefined populations [8].

Library Preparation and Sequencing

Following cell isolation, the scRNA-seq library preparation process captures and amplifies the transcriptome from each individual cell. Most high-throughput methods employ poly(T)-primed reverse transcription to selectively convert polyadenylated mRNA molecules into cDNA [10] [12]. A critical innovation for accurate quantification is the incorporation of Unique Molecular Identifiers (UMIs), short random nucleotide sequences that tag individual mRNA molecules during reverse transcription [8] [12]. UMIs enable distinction between biological transcript abundance and technical amplification bias, as all cDNA molecules derived from the same original mRNA molecule share the same UMI [12].
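The effect of UMIs on quantification can be illustrated with a few lines of code. The sketch below counts distinct UMIs per (cell, gene) pair, so that PCR duplicates of one molecule collapse to a single count. The reads, barcodes, and the function names `umi_counts` and `read_counts` are invented for illustration, and the sketch assumes error-free UMIs; production pipelines also correct sequencing errors within UMIs, which is not shown here.

```python
from collections import Counter, defaultdict

# Each aligned read is (cell barcode, gene, UMI). Toy, made-up values.
reads = [
    ("CELL_A", "SOX10", "AACGT"),
    ("CELL_A", "SOX10", "AACGT"),   # PCR duplicate of the read above
    ("CELL_A", "SOX10", "TTGCA"),   # a second original molecule
    ("CELL_A", "TWIST1", "GGATC"),
    ("CELL_B", "SOX10", "AACGT"),   # same UMI in another cell: counted separately
    ("CELL_B", "SOX10", "AACGT"),
]


def read_counts(reads):
    """Naive quantification: every read counts, so amplification inflates values."""
    return Counter((cell, gene) for cell, gene, _ in reads)


def umi_counts(reads):
    """UMI-aware quantification: distinct UMIs per (cell, gene) pair."""
    umis = defaultdict(set)
    for cell, gene, umi in reads:
        umis[(cell, gene)].add(umi)
    return {key: len(seen) for key, seen in umis.items()}


by_reads = read_counts(reads)
by_umis = umi_counts(reads)
```

In this toy input, SOX10 in CELL_A has three reads but only two distinct UMIs, which is the molecule count that downstream analysis should use.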

Two primary amplification strategies are employed:

- PCR-based amplification (used in Smart-seq2, Fluidigm C1, 10x Genomics) provides high sensitivity for gene detection [8] [12].

- In vitro transcription (IVT)-based amplification (used in CEL-Seq, MARS-Seq) offers linear amplification but may introduce 3' coverage biases [12].

For developmental trajectory studies, full-length transcript methods (e.g., Smart-seq2) can be advantageous for detecting isoform switches during differentiation, while 3' end counting methods (e.g., 10x Genomics) typically offer higher cell throughput for comprehensive population sampling [12].

Computational Analysis and Trajectory Inference

The computational workflow for trajectory inference begins with quality control to remove low-quality cells, doublets, and background noise [13] [12]. Data normalization (e.g., using Seurat's LogNormalize function with a scale factor of 10,000) accounts for varying sequencing depths between cells [13]. Dimensionality reduction techniques like PCA are then applied, followed by non-linear methods such as UMAP (Uniform Manifold Approximation and Projection) for visualization [11] [13].
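As a rough illustration of what log-normalization with a scale factor of 10,000 does, the sketch below divides each count by the cell's total, multiplies by the scale factor, and applies a natural-log transform of one plus the value. It is a plain-Python restatement of that formula for a single cell, not a call into Seurat; the function name `log_normalize` and the toy counts are assumptions made for the example.

```python
import math


def log_normalize(counts, scale_factor=10_000):
    """Return log1p(count / total * scale_factor) for each gene in one cell."""
    total = sum(counts)
    if total == 0:
        return [0.0 for _ in counts]  # an empty cell stays all-zero
    return [math.log1p(c * scale_factor / total) for c in counts]


# Two hypothetical cells with identical composition but 10x different depth.
cell_shallow = [1, 0, 3, 6]      # 10 counts in total
cell_deep = [10, 0, 30, 60]      # 100 counts in total

norm_shallow = log_normalize(cell_shallow)
norm_deep = log_normalize(cell_deep)
```

After normalization the two cells have the same profile, which is the point of the step: differences in sequencing depth should not be mistaken for differences in expression.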

The trajectory inference process itself employs specialized algorithms that reconstruct the underlying developmental continuum:

- Pseudotime analysis orders cells along a trajectory based on transcriptomic similarity, assigning each cell a "pseudotime" value representing its inferred position in the developmental process [11].

- Branching analysis identifies points where developmental pathways diverge, revealing fate decisions and alternative differentiation routes [11].

- Gene dynamics analysis examines how expression of key regulators changes along pseudotime, identifying potential drivers of developmental transitions [11].
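Gene dynamics analysis can be sketched in its simplest form as averaging a gene's expression within successive pseudotime windows. The example below uses equal-width bins and made-up values for two hypothetical genes; the function name `binned_trend` is an assumption, and dedicated tools typically fit smooth curves rather than bin means.

```python
from statistics import mean


def binned_trend(pseudotime, expression, n_bins=3):
    """Mean expression in equal-width pseudotime bins (None for empty bins)."""
    lo, hi = min(pseudotime), max(pseudotime)
    if hi == lo:
        return [mean(expression)]  # no spread in pseudotime: a single bin
    width = (hi - lo) / n_bins
    bins = [[] for _ in range(n_bins)]
    for t, x in zip(pseudotime, expression):
        # min() places the cell with the largest pseudotime in the last bin.
        idx = min(int((t - lo) / width), n_bins - 1)
        bins[idx].append(x)
    return [mean(b) if b else None for b in bins]


# Toy data for nine hypothetical cells (not real measurements).
pseudotime = [0.0, 0.1, 0.2, 0.4, 0.5, 0.6, 0.8, 0.9, 1.0]
progenitor_gene = [9, 8, 8, 5, 4, 4, 1, 0, 1]   # switched off over pseudotime
lineage_gene = [0, 1, 0, 3, 4, 5, 8, 9, 9]      # switched on over pseudotime

progenitor_trend = binned_trend(pseudotime, progenitor_gene)
lineage_trend = binned_trend(pseudotime, lineage_gene)
```

Genes whose trends change sharply around a branch point are natural candidates for the driver analysis described above.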

Table 1: Key Experimental Parameters for scRNA-seq in Developmental Studies

| Parameter | Considerations for Developmental Studies | Impact on Data Quality |
|---|---|---|
| Cell Viability | >80% recommended to minimize stress responses | Low viability increases apoptotic signatures |
| Cells Captured | Hundreds to thousands depending on heterogeneity | Insufficient cells may miss rare intermediates |
| Sequencing Depth | 20,000-100,000 reads/cell for complex differentiations | Low depth reduces gene detection sensitivity |
| Gene Detection | 1,000-5,000 genes/cell for trajectory analysis | Low gene count limits resolution of transitions |
| Mitochondrial RNA | <10-25% recommended [13] | High percentage indicates stressed/dying cells |
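The parameters in the table translate into simple per-cell filters. The sketch below applies a gene-count window and a mitochondrial-percentage ceiling to a handful of invented cells. The thresholds (`min_genes=1000`, `max_genes=6000`, `max_pct_mito=10.0`), the `CellQC` class, and `passes_qc` are illustrative assumptions; suitable cutoffs depend on tissue, protocol, and the distribution observed in each dataset.

```python
from dataclasses import dataclass


@dataclass
class CellQC:
    barcode: str
    n_genes: int        # genes with at least one count
    total_counts: int   # all counts in the cell
    mito_counts: int    # counts assigned to mitochondrial genes

    @property
    def pct_mito(self) -> float:
        if self.total_counts == 0:
            return 0.0
        return 100.0 * self.mito_counts / self.total_counts


def passes_qc(cell, min_genes=1000, max_genes=6000, max_pct_mito=10.0):
    """Illustrative thresholds only; tune them per tissue and protocol."""
    return min_genes <= cell.n_genes <= max_genes and cell.pct_mito <= max_pct_mito


# Four hypothetical cells with made-up metrics.
cells = [
    CellQC("healthy", 2500, 8000, 400),              # 5% mitochondrial
    CellQC("low_complexity", 300, 700, 20),          # too few genes detected
    CellQC("possible_doublet", 9000, 60000, 1800),   # unusually many genes
    CellQC("stressed", 2000, 5000, 1500),            # 30% mitochondrial
]
kept = [c.barcode for c in cells if passes_qc(c)]
```

Because rare transitional states can resemble low-quality cells, it is worth inspecting what a filter removes before committing to strict cutoffs.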

Research Reagent Solutions and Computational Tools

Successful developmental trajectory reconstruction requires both wet-lab reagents and computational resources. The table below outlines essential components of the single-cell analysis toolkit:

Table 2: Essential Research Reagents and Computational Tools for scRNA-seq Trajectory Analysis

| Category | Specific Examples | Function and Application |
|---|---|---|
| Cell Isolation | 10x Genomics Chromium, Fluidigm C1, Dolomite Bio μEncapsulator | High-throughput single-cell capture with cell barcoding [10] [8] |
| Library Prep Kits | SMARTer Ultra Low Input RNA, Clontech SMARTer, Illumina Nextera | cDNA synthesis, amplification, and library construction from single cells [10] |
| UMI Reagents | Custom UMI-barcoded primers, Commercial UMI kits (10x, Bio-Rad) | Accurate mRNA molecule counting and elimination of PCR amplification bias [8] [12] |
| Sequencing Platforms | Illumina NovaSeq, NextSeq, HiSeq | High-throughput sequencing of barcoded cDNA libraries [12] |
| Analysis Pipelines | Seurat [13], Monocle, SCANPY, Asc-Seurat [12] | Data preprocessing, normalization, clustering, and trajectory inference |
| Visualization Tools | dittoSeq [14], ArchR, ggplot2, Plotly | Creation of publication-quality visualizations of developmental trajectories |

Case Study: Arabidopsis Callus Formation

A recent study exemplifies the power of scRNA-seq for reconstructing developmental trajectories in plant systems. Researchers investigated callus formation in Arabidopsis, a process where plant cells dedifferentiate and acquire totipotency [11]. Using scRNA-seq at key developmental stages (initiation, proliferation, and greening), they generated transcriptomic profiles of individual callus cells and performed UMAP-based clustering to identify distinct cell populations [11].

Pseudotime analysis revealed the developmental trajectory from differentiated cells to fully formed callus, identifying distinct transcription factor networks operative at different stages [11]. The study further demonstrated environmental regulation of this process, showing that low oxygen and salinity promoted callus formation while light inhibited it (though light remained essential for the greening phase) [11]. This comprehensive analysis illustrates how static snapshot data can be transformed into dynamic developmental maps through appropriate computational methods.

The following diagram illustrates the key computational steps in trajectory inference following scRNA-seq data generation:

Quality Assessment and Technical Validation

Rigorous quality control is essential throughout the experimental and computational pipeline. At the wet-lab stage, cell viability should be assessed before library preparation, with mitochondrial RNA percentage serving as a key metric during computational preprocessing [13]. For developmental studies, it is particularly important to ensure adequate representation of transitional states, as rare intermediate cell types may be lost during aggressive quality filtering.

Technical validation of inferred trajectories should include:

- Comparison with known markers: Expression patterns of established lineage markers should align with pseudotime ordering.

- Robustness testing: Trajectory stability should be assessed through bootstrap resampling or downsampling.

- Functional validation: Key predictions from trajectory analysis (e.g., regulator genes) should be tested experimentally using genetic perturbations [11].

When properly executed, scRNA-seq-based trajectory reconstruction provides unparalleled insights into developmental processes, enabling researchers to move beyond static classifications to dynamic models of cell fate decisions with significant implications for developmental biology, regenerative medicine, and disease modeling.

Single-cell RNA sequencing (scRNA-seq) has revolutionized our ability to study biological processes at unprecedented resolution, capturing the transcriptomic states of individual cells within complex tissues. A particularly powerful application lies in developmental trajectory analysis, which computationally reconstructs the continuum of cellular states during processes like differentiation, immune activation, or disease progression. By ordering cells along a pseudotime axis based on transcriptional similarity, this approach maps dynamic transitions that would be impossible to observe in bulk analyses where cellular heterogeneity is averaged [15]. This pseudotime metric quantifies the relative progression of individual cells through a biological process, serving as a proxy for their developmental "age" even when actual temporal data is unavailable [15] [16].

The reconstruction of gene regulatory networks (GRNs)—the directed graphs representing regulatory relationships between transcription factors (TFs) and their target genes—is fundamental to understanding the molecular control of these dynamic processes. Transcription factors are prime regulators of cell fate specification, but their typically low expression levels make them particularly vulnerable to the dropout problem in scRNA-seq, where technical limitations result in false zero measurements [17]. Fortunately, trajectory analysis provides a powerful framework to overcome this challenge. By modeling expression changes along developmental continua, it enables more accurate inference of causal regulatory relationships than static snapshots of cellular populations [18] [19]. The integration of these two approaches—trajectory analysis and GRN reconstruction—creates a synergistic pipeline for identifying key TFs that drive cell fate decisions, offering profound insights for developmental biology, disease modeling, and therapeutic development.

Methodological Approaches for Integrated Trajectory and Regulatory Analysis

Trajectory Inference Algorithms

Multiple computational approaches exist for inferring trajectories from scRNA-seq data, each with distinct strengths and methodological considerations. The TSCAN algorithm employs a cluster-based minimum spanning tree (MST) approach, first grouping cells into clusters, computing cluster centroids, and then forming the most parsimonious tree structure that connects these centroids. Cells are then projected onto the nearest edge of the MST, and pseudotime is calculated as the distance along this tree from a user-defined root node [15]. This approach offers computational efficiency and robustness to noise by operating on clusters rather than individual cells, though its trajectory complexity is limited by the granularity of the clustering [15].
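The cluster-based MST idea can be sketched in plain Python: compute cluster centroids, connect them with a minimum spanning tree, then project each cell onto a tree edge and measure distance from the root. This is a simplified illustration in the spirit of that description, not the TSCAN implementation. It assumes cells are already embedded in a low-dimensional space, that cluster labels are given, that at least two clusters exist, and that a cell is only projected onto edges touching its own cluster. All coordinates and names are made up.

```python
import math


def centroid(points):
    return tuple(sum(p[d] for p in points) / len(points) for d in range(len(points[0])))


def minimum_spanning_tree(centroids):
    """Prim's algorithm over cluster centroids; returns {cluster: set(neighbours)}."""
    names = list(centroids)
    in_tree = {names[0]}
    adjacency = {name: set() for name in names}
    while len(in_tree) < len(names):
        u, v = min(
            ((a, b) for a in in_tree for b in names if b not in in_tree),
            key=lambda e: math.dist(centroids[e[0]], centroids[e[1]]),
        )
        adjacency[u].add(v)
        adjacency[v].add(u)
        in_tree.add(v)
    return adjacency


def node_pseudotime(adjacency, centroids, root):
    """Distance along tree edges from the root centroid to every other centroid."""
    pt, stack = {root: 0.0}, [root]
    while stack:
        u = stack.pop()
        for v in adjacency[u]:
            if v not in pt:
                pt[v] = pt[u] + math.dist(centroids[u], centroids[v])
                stack.append(v)
    return pt


def cell_pseudotime(cell, label, adjacency, centroids, node_pt):
    """Project a cell onto edges touching its cluster; keep the closest projection."""
    best = None
    for nb in adjacency[label]:
        # Orient the edge so that it starts at the end nearer the root.
        start, end = (label, nb) if node_pt[label] <= node_pt[nb] else (nb, label)
        s, e = centroids[start], centroids[end]
        edge = [ei - si for si, ei in zip(s, e)]
        length = math.sqrt(sum(x * x for x in edge))
        t = sum((ci - si) * x for ci, si, x in zip(cell, s, edge)) / length**2
        t = max(0.0, min(1.0, t))  # clamp the projection to the edge segment
        proj = tuple(si + t * x for si, x in zip(s, edge))
        candidate = (math.dist(cell, proj), node_pt[start] + t * length)
        if best is None or candidate < best:
            best = candidate
    return best[1]


# Toy 2-D embedding: cell name -> (coordinates, cluster label). Not real data.
cells = {
    "c1": ((0.0, 1.0), "stem"), "c2": ((1.0, -1.0), "stem"),
    "c3": ((4.0, 1.0), "progenitor"), "c4": ((5.0, -1.0), "progenitor"),
    "c5": ((9.0, 1.0), "mature"), "c6": ((10.0, -1.0), "mature"),
}
labels = {lab for _, lab in cells.values()}
centroids = {lab: centroid([xy for xy, l in cells.values() if l == lab]) for lab in labels}
tree = minimum_spanning_tree(centroids)
node_pt = node_pseudotime(tree, centroids, root="stem")  # user-defined root
pseudotime = {
    name: cell_pseudotime(xy, lab, tree, centroids, node_pt)
    for name, (xy, lab) in cells.items()
}
ordering = sorted(pseudotime, key=pseudotime.get)
```

Because the tree is built on centroids rather than cells, the result is stable against noisy individual cells, but the achievable trajectory detail is capped by the number of clusters, as noted above.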

An alternative approach implemented in Monocle3 uses machine learning to reverse-engineer the sequence of transcriptional changes that generated the observed distribution of cells. After selecting root cells as starting points, the algorithm performs dimension reduction (typically PCA followed by UMAP) and graphs the trajectory that best explains the data, allowing for complex branching events and loops [20]. The slingshot package takes yet another approach, fitting principal curves—non-linear generalizations of PCA that bend through the high-dimensional cloud of cells—to define the trajectory backbone [15]. Each method makes different assumptions about the underlying biology, with choice depending on whether expected trajectories are simple linear progressions, branching events, or more complex cyclic processes.

Gene Regulatory Network Inference from Trajectory Data

Once pseudotime is established, several computational approaches can reconstruct GRNs that explain the observed transcriptional dynamics. The Inferelator framework uses regression with regularization to infer regulatory relationships between TFs and target genes based on expression patterns along trajectories [21]. This approach benefits from incorporating prior information and can explicitly estimate transcription factor activities, which may not directly correlate with mRNA levels due to post-translational regulation [21].

SCENIC (Single-Cell Regulatory Network Inference and Clustering) is another widely used method that combines GRN inference with cis-regulatory analysis. It first identifies potential TF-target relationships based on co-expression along the trajectory, then uses motif enrichment analysis to prune false positives, retaining only targets with supported regulatory mechanisms [18] [22]. This two-step process yields networks with stronger biological support and higher confidence in the predicted regulatory interactions.
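The logic of the two-step process can be shown with set operations: a co-expression step proposes candidate targets per TF, and a motif step keeps only the candidates with cis-regulatory support. The sketch below is a conceptual illustration with placeholder TF and gene names. It does not use the SCENIC software or its data formats, and `prune_by_motif` is a hypothetical helper.

```python
# Step 1 output (placeholder data): targets co-expressed with each TF.
coexpression_targets = {
    "TF_A": {"gene_1", "gene_2", "gene_3", "gene_4"},
    "TF_B": {"gene_2", "gene_5"},
    "TF_C": {"gene_6"},
}

# Step 2 input (placeholder data): genes whose regulatory regions carry the TF's motif.
motif_supported = {
    "TF_A": {"gene_1", "gene_3", "gene_7"},
    "TF_B": {"gene_5"},
    # TF_C has no motif evidence at all in this toy example.
}


def prune_by_motif(candidates, motif_hits, min_targets=1):
    """Keep co-expressed targets that also have motif support; drop empty regulons."""
    regulons = {}
    for tf, targets in candidates.items():
        supported = targets & motif_hits.get(tf, set())
        if len(supported) >= min_targets:
            regulons[tf] = supported
    return regulons


regulons = prune_by_motif(coexpression_targets, motif_supported)
```

In this toy case `gene_2` and `gene_4` are removed from the TF_A regulon because co-expression alone does not establish direct regulation, and TF_C is dropped entirely.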

For capturing the dynamic nature of regulation along trajectories, scPADGRN implements a novel approach specifically designed for time-series scRNA-seq data. It combines a preconditioned alternating direction method of multipliers (PADMM) with cell clustering to reconstruct dynamic GRNs, optimizing for network precision, sparsity, and continuity across pseudotemporal stages [19]. This method can identify how regulatory relationships change throughout differentiation, revealing stage-specific regulators that might be missed in static network analyses.

Table 1: Comparison of GRN Reconstruction Methods Compatible with Trajectory Analysis

| Method | Underlying Algorithm | Key Features | Trajectory Requirement |
|---|---|---|---|
| Inferelator [21] | Regression with regularization | Incorporates prior information; estimates TF activity | Not required but beneficial |
| SCENIC [18] [22] | Co-expression + motif enrichment | Validates networks with cis-regulatory evidence | Not required but beneficial |
| scPADGRN [19] | PADMM + clustering | Optimized for time-series data; models network dynamics | Specifically designed for trajectories |
| GRN from barcoded genotypes [21] | Multitask learning | Combines genetic perturbations with environmental diversity | Not required |

Experimental Enhancements for Transcription Factor Detection

Standard scRNA-seq protocols often miss critical biological information due to limited sensitivity for lowly expressed transcripts like transcription factors. scCapture-seq addresses this limitation through targeted sequencing of approximately 1,000 TFs using oligonucleotide probes to enrich for these transcripts before sequencing [17]. This approach results in a remarkable 36-fold enrichment for targeted TFs, with the percentage of reads mapping to TFs increasing from 2.2% pre-capture to 78.3% post-capture [17]. The method increases the number of detected TFs per cell by more than fourfold, dramatically improving the resolution of subsequent regulatory network analyses and enabling identification of key developmental regulators that would otherwise remain hidden [17].
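The reported fold enrichment follows directly from the two read fractions quoted above. The short calculation below reproduces it; the variable names are chosen for this example only.

```python
# Percentages of reads mapping to targeted TFs, as quoted in the text [17].
pct_tf_reads_pre_capture = 2.2
pct_tf_reads_post_capture = 78.3

# Fold enrichment is the ratio of the post-capture to the pre-capture fraction.
fold_enrichment = pct_tf_reads_post_capture / pct_tf_reads_pre_capture
rounded_fold = round(fold_enrichment)  # about 35.6, i.e. roughly 36-fold
```

Put differently, before capture only about one read in 45 informed TF expression, whereas after capture roughly four reads in five do.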

Another experimental strategy combines genetic perturbations with scRNA-seq profiling. Perturb-seq introduces diverse genetic perturbations (typically using CRISPR/Cas9) in a pooled format, then assesses the transcriptomic consequences in thousands of individual cells [21]. When applied along differentiation trajectories, this approach can directly test regulatory hypotheses by examining how TF perturbation alters progression through pseudotime and branch point decisions, providing causal validation for inferred regulatory relationships.
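One simple way to ask whether a TF perturbation alters progression through pseudotime is to compare the pseudotime values of perturbed and control cells. The sketch below uses a two-sided permutation test on the difference in means; the pseudotime values are hypothetical toy numbers, and the test itself is an illustrative choice rather than the analysis used in the cited Perturb-seq work.

```python
import random
from statistics import mean


def pseudotime_shift(control, perturbed, n_perm=2000, seed=0):
    """Return (observed mean difference, two-sided permutation p-value)."""
    rng = random.Random(seed)
    observed = mean(perturbed) - mean(control)
    pooled = list(control) + list(perturbed)
    n_ctrl = len(control)
    extreme = 0
    for _ in range(n_perm):
        # Randomly reassign cells to the two groups, keeping group sizes fixed
        rng.shuffle(pooled)
        diff = mean(pooled[n_ctrl:]) - mean(pooled[:n_ctrl])
        if abs(diff) >= abs(observed):
            extreme += 1
    # Add-one correction keeps the estimated p-value strictly positive
    return observed, (extreme + 1) / (n_perm + 1)


# Hypothetical pseudotime values (scaled 0-1) for cells carrying a
# non-targeting guide versus a guide against a TF of interest
control = [0.62, 0.70, 0.75, 0.81, 0.66, 0.78, 0.73, 0.69]
knockout = [0.31, 0.28, 0.40, 0.35, 0.44, 0.30, 0.38, 0.33]

shift, p_value = pseudotime_shift(control, knockout)
print(f"mean pseudotime shift = {shift:.3f}, permutation p = {p_value:.4f}")
```

A negative shift here would suggest that perturbed cells stall earlier along the trajectory; in a real dataset, cells should also be compared within the same branch and batch.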

Application Examples in Developmental Systems

Neurodevelopment: Revealing Divergent Differentiation Pathways

When applied to iPSC-derived neuronal cultures, integrated trajectory and regulatory analysis revealed unexpected developmental divergences that traditional scRNA-seq had missed. In a study comparing neuronal differentiation across two laboratories using the same protocol, standard clustering identified four populations interpreted as neurons and glial cells [17]. However, after implementing scCapture-seq to enhance TF detection, trajectory analysis based on 731 TFs (a 25% increase over pre-capture) revealed three distinct groups: two neuronal trajectories (N1 and N2) and a glial-like population [17].

Pseudotime analysis demonstrated that the N1 population, derived primarily from one laboratory, expressed TFs including NEUROD2, TBR1, NEUROG2, and EOMES, consistent with excitatory cortical neuron identity. In contrast, the N2 population from the other laboratory expressed MEIS1, SP9, DLX2, and DLX6, signifying an inhibitory interneuron fate [17]. Furthermore, the presumed "glial" cluster was re-identified as radial glial or neural progenitor cells based on expression of HES1, HES5, PAX6, and NR2E1 [17]. This refined analysis revealed that laboratory-specific variations resulted in fundamentally different neuronal differentiation trajectories rather than merely different ratios of neurons to glia as originally interpreted.

The enhanced TF data further enabled reconstruction of more accurate gene regulatory networks, revealing a role for retinoic acid signaling in the developmental divergence between these neuronal lineages [17]. This case study demonstrates how targeted TF detection combined with trajectory analysis can uncover critical biological variation that would otherwise be lost in technical noise and interpretation limitations.

Cardiogenesis: Identifying Key Regulators of Heart Development

In a comprehensive study of iPSC-derived cardiomyocyte differentiation, researchers collected 32,365 cells across four timepoints (days 0, 2, 4, and 10) to reconstruct the cardiac developmental trajectory [23]. Pseudotime analysis revealed a clear branching point at day 2, where cells committed to either cardiac progenitors or alternative fates [23]. By combining this trajectory with differential expression and SCENIC analysis, they identified several candidate TFs driving cardiomyocyte specification, including CREG and NR2F2 [23].

The study successfully identified distinct clusters corresponding to developmental stages: pluripotent stem cells (clusters 1-4), primitive streak mesoderm (cluster 5), cardiac progenitors (cluster 0), cardiomyocytes (cluster 6), and smooth muscle cells (cluster 8) [23]. Through trajectory-based GRN analysis, they further identified key TFs including GATA4, ISL1, NKX2-5, TBX5, and MEF2C as central regulators of the cardiomyocyte lineage commitment [23]. Gene Set Enrichment Analysis of the cardiomyocyte cluster revealed enrichment for cardiac-specific pathways including "dilated cardiomyopathy," "hypertrophic cardiomyopathy," "cardiac muscle contraction," and "adrenergic signaling in cardiomyocytes," validating the functional identity of the cells along the reconstructed trajectory [23].

Table 2: Key Transcription Factors Identified Through Trajectory Analysis in Model Systems

| Biological System | Key Identified Transcription Factors | Functional Role | Reference |
|---|---|---|---|
| iPSC to Neuronal Cultures | BHLHE22, NFIX, ZBTB20, NHLH1 (differential between labs) | Distinguish excitatory vs. inhibitory neuronal fates | [17] |
| iPSC to Cardiomyocytes | CREG, NR2F2, GATA4, ISL1, NKX2-5, TBX5 | Cardiomyocyte lineage commitment and differentiation | [23] |
| Mouse ES to Primitive Endoderm | Bhlhe40, Msx2, Foxa2, Dnmt3l | Primitive endoderm specification | [19] |
| Embryonic Fibroblast to Myocytes | Scx, Fos, Tcf12 | Myocyte differentiation | [19] |
| Human ES to Definitive Endoderm | Sox5, Meis2, Hoxb3, Tcf7l1, Plagl1 | Definitive endoderm formation | [19] |
| Sepsis Immune Response | CREB5 | Monocyte differentiation and immune response | [22] |

Detailed Protocols for Integrated Trajectory and Regulatory Analysis

Protocol 1: scCapture-seq for Enhanced TF Detection Followed by Trajectory Analysis

This protocol describes how to implement targeted transcription factor sequencing to enhance trajectory and regulatory network analysis, based on the method described in [17].

Research Reagent Solutions and Essential Materials

- Oligonucleotide probes: Designed against ~1000 transcription factors

- Single-cell libraries: Prepared using standard scRNA-seq protocols (10x Genomics, inDrop, or DROP-seq)

- Hybridization and capture reagents: Including hybridization buffer, streptavidin beads, and wash buffers

- Sequencing reagents: Appropriate for the sequencing platform being used

- Computational tools: scCapture-seq processing pipeline, Seurat, Monocle3 or TSCAN

Experimental Workflow

- Library Preparation: Prepare single-cell RNA-seq libraries according to standard protocols for your platform of choice.

- Target Enrichment: Hybridize libraries with biotinylated oligonucleotide probes targeting approximately 1,000 transcription factors.

- Capture and Amplification: Capture probe-bound fragments using streptavidin beads, wash stringently, and amplify captured DNA.

- Sequencing: Sequence enriched libraries on an appropriate high-throughput sequencing platform.

- Quality Control: Assess capture efficiency by comparing the percentage of reads mapping to TFs pre- and post-capture (expect ~78% post-capture vs. ~2% pre-capture).

- Trajectory Inference: Perform trajectory analysis using Monocle3 or TSCAN, leveraging the enhanced TF detection.

- GRN Reconstruction: Apply SCENIC or Inferelator to identify regulators driving trajectory paths.

Figure 1: scCapture-seq Workflow for Enhanced TF Detection and Analysis

Protocol 2: Trajectory-Informed GRN Reconstruction with Monocle3 and SCENIC

This protocol outlines an integrated computational pipeline for reconstructing developmental trajectories and inferring the underlying gene regulatory networks.

Research Reagent Solutions and Essential Materials

- Single-cell expression matrix: Raw count data from any scRNA-seq platform

- Cell metadata: Including experimental conditions, batch information, and initial clustering labels

- Computational tools: Monocle3 or TSCAN for trajectory inference, SCENIC for GRN reconstruction

- Reference databases: TF-motif annotations (e.g., CIS-BP, JASPAR)

- High-performance computing resources: Sufficient memory and processing power for large datasets

Experimental Workflow

- Data Preprocessing: Filter cells based on quality metrics (mitochondrial percentage, feature counts) and normalize using log-normalization or size-factor normalization.

- Dimension Reduction: Perform principal component analysis (PCA) on highly variable genes, typically selecting the top 50 principal components for datasets with >5,000 cells.

- Trajectory Inference:

  - Use Monocle3 to learn the trajectory graph, specifying root cells based on biological knowledge (e.g., earliest time point, most pluripotent state).

  - Adjust parameters including UMAP minimum distance (controls cluster tightness) and number of neighbors (balances local vs. global structure).

  - Allow for branching events and loops if biologically justified.

- Pseudotime Calculation: Assign each cell a pseudotime value representing its progression along the trajectory from the root.

- GRN Inference with SCENIC:

  - Identify potential TF-target relationships based on co-expression patterns along the pseudotime gradient.

  - Prune targets without enrichment of the TF's binding motif in their regulatory regions.

  - Calculate regulon activity for each cell using AUCell.

- Regulon Dynamics Analysis: Model how regulon activity changes along pseudotime to identify TFs with stage-specific activity.

- Validation: Compare identified TFs with known biology and use functional assays to validate key predictions.
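The regulon dynamics step in the workflow above can be sketched in a few lines. The example assumes that per-cell regulon activity scores (such as AUCell output) and pseudotime values have already been computed elsewhere; it then averages activity within equal-width pseudotime bins and reports the bin where each regulon peaks. The regulon names and all numbers are hypothetical, and real analyses typically fit smooth curves rather than coarse bins.

```python
from statistics import mean


def bin_activity(pseudotime, activity, n_bins=4):
    """Average per-cell activity within equal-width pseudotime bins."""
    lo, hi = min(pseudotime), max(pseudotime)
    width = (hi - lo) / n_bins
    if width == 0:
        width = 1.0  # all cells share one pseudotime value; everything lands in bin 0
    bins = [[] for _ in range(n_bins)]
    for t, a in zip(pseudotime, activity):
        idx = min(int((t - lo) / width), n_bins - 1)  # clamp the maximum into the last bin
        bins[idx].append(a)
    return [mean(b) if b else None for b in bins]


def peak_bin(profile):
    """Index of the bin with the highest mean activity, ignoring empty bins."""
    filled = [(value, i) for i, value in enumerate(profile) if value is not None]
    return max(filled)[1]


# Hypothetical pseudotime and regulon activity scores for eight cells
pseudotime = [0.0, 0.1, 0.3, 0.4, 0.55, 0.7, 0.8, 1.0]
regulons = {
    "early_TF": [0.9, 0.8, 0.5, 0.4, 0.2, 0.1, 0.1, 0.0],
    "mid_TF": [0.1, 0.2, 0.7, 0.8, 0.4, 0.3, 0.1, 0.1],
    "late_TF": [0.0, 0.1, 0.1, 0.2, 0.5, 0.6, 0.8, 0.9],
}

peaks = {name: peak_bin(bin_activity(pseudotime, scores)) for name, scores in regulons.items()}
print(peaks)  # {'early_TF': 0, 'mid_TF': 1, 'late_TF': 3}
```

Regulons whose peak falls in an early, intermediate, or late bin are candidates for stage-specific regulators and can be prioritized for the validation step.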

Figure 2: Computational Pipeline for Trajectory-Informed GRN Analysis

Advanced Applications and Future Perspectives

The integration of trajectory analysis with regulatory network inference continues to evolve with emerging technologies and computational approaches. Spatial transcriptomics adds another dimension to these analyses, allowing researchers to contextualize developmental trajectories within tissue architecture. Methods like Palo (spatially aware color palette optimization) enhance visualization of complex trajectory data, ensuring neighboring cell states in both physical and transcriptional space are visually distinct for better interpretation [24].

For disease modeling, these approaches are increasingly applied to identify pathogenic regulatory programs. In sepsis research, trajectory analysis of immune cells identified CREB5 as a key regulator of monocyte differentiation during the immune response [22]. Such findings highlight the potential for identifying therapeutic targets by comparing regulatory networks along disease trajectories versus normal development.

Looking forward, several technological advances promise to enhance trajectory-based regulatory network analysis. Multi-omic single-cell technologies that simultaneously measure gene expression, chromatin accessibility, and protein abundance provide more direct evidence of regulatory relationships [18]. Machine learning approaches are being increasingly incorporated to handle the complexity of these datasets and improve prediction accuracy [22]. The development of dynamic network metrics like DGIE (Differentiation Genes' Interaction Enrichment) allows quantitative assessment of how regulatory interactions change along trajectories, providing insights into the temporal dynamics of gene regulation during fate decisions [19].

As these methods mature and become more accessible, integrated trajectory and regulatory analysis will continue to reveal the fundamental principles governing cell fate decisions in development, disease, and regeneration, ultimately accelerating the discovery of novel therapeutic strategies that target key regulatory nodes in pathological processes.

Plant cellular totipotency, the ability of a single cell to regenerate an entire plant, is fundamentally demonstrated through callus formation and subsequent organogenesis. This process involves the dedifferentiation of somatic cells into a pluripotent callus state, followed by redifferentiation into organized tissues and organs. Within the context of modern developmental biology, single-cell RNA sequencing (scRNA-seq) provides an unprecedented resolution to dissect the transcriptional dynamics and cellular heterogeneity underlying these phenomena. This case study integrates detailed experimental protocols for callus induction and regeneration with a scRNA-seq framework to trace the developmental trajectories of individual cells, offering researchers a comprehensive toolkit for investigating plant cell fate transitions.

Application Notes: Key Insights from Callus Regeneration Studies

Recent studies across diverse plant species have refined our understanding of the factors governing efficient callus formation and regeneration, while also highlighting the critical importance of genetic stability.

Table 1: Summary of Optimized Callus Induction and Regeneration Protocols Across Species

| Plant Species | Explant Source | Optimal Callus Induction Medium | Optimal Regeneration Medium | Key Outcomes | Genetic Stability Assessment |
|---|---|---|---|---|---|
| Gladiolus (Gladiolus grandiflorus) [25] | Basal part of elongated mother corm sprout | MS + 2 mg/L 2,4-D + 2 mg/L NAA + 1 mg/L BAP | MS + 2 mg/L BAP + 2 mg/L Kin + 0.25 mg/L NAA | 95.55% shoot regeneration; 39.44 shoots/explant | Flow cytometry, ISSR markers confirmed stability |
| Stevia (Stevia rebaudiana) [26] | Leaf | MS + NAA + 2,4-D (optimal concentrations vary) | MS + NAA + BAP | 89.20% callus induction; 87.77% shoot induction | RAPD and ISSR markers showed no polymorphism |
| Japonica Rice (Oryza sativa L.) [27] | Isolated microspores | IM2/IM3 + 1 mg/L 2,4-D + ≤1.5 mg/L KT | 1/2 MS + 2 mg/L 6-BA + 0.5 mg/L NAA + 30 g/L sucrose | 61–211 green plantlets/100 mg calli | (Protocol focused on doubled haploid production) |
| Chili Pepper (Capsicum annuum L.) [28] | Cotyledon, Hypocotyl | Medium with 1 mg/L IAA or 1 nM CaREF1 peptide | Medium with 5 mg/L AgNO₃ & 1 nM CaREF1 | Regeneration ratio increased from 27.2% to 55.0% with CaREF1 | Histological analysis showed improved cellular organization |
| Paramignya trimera [29] | Leaf | WPM + 2.0 mg/L NAA + 0.2 mg/L BAP (in dark) | WPM + 4.0 mg/L BAP + 500 mg/L malt extract + 30 g/L sucrose | 100% callus formation; somatic embryogenesis | SCoT markers confirmed genetic fidelity |

Critical Insights for Experimental Design

- Genotype Dependency: The regeneration capacity is highly genotype-specific. Studies in sorghum [30] and chili pepper [28] emphasize the need to screen multiple genotypes to identify responsive lines, as genetic background significantly influences callus induction and regeneration efficiency.

- Explant Selection: The developmental state and type of explant are critical. For instance, in japonica rice, microspores from yellow-green florets at the mid- to late-uninucleate stage were optimal [27], while in Paramignya trimera, one-year-old leaf explants showed superior performance [29].

- Controlling Oxidative Browning: The accumulation of phenolic compounds is a major constraint. In gladiolus, adding 150 mg/L ascorbic acid, 100 mg/L citric acid, and 500 mg/L activated charcoal to the maintenance medium reduced phenolic accumulation by 80% [25]. Similarly, sorghum studies note callus browning as a key challenge [30].

- Developmental Cues from scRNA-seq: scRNA-seq in Arabidopsis callus has revealed that the process is regulated by distinct transcription factor networks and is influenced by environmental factors; low oxygen and salinity promoted callus formation, while light inhibited it (though it remained essential for subsequent greening) [11].

Experimental Protocols

This protocol, based on the gladiolus regeneration system [25], is designed for high-efficiency regeneration with stable genetic fidelity.

1. Plant Material and Sterilization

- Material: Use mother corm sprouts (3–3.5 cm diameter) from cultivars like 'Rose Supreme', 'Amsterdam', or 'Advance Red'.

- Sterilization:

  - Remove surface scales and cut sprouts into 1 cm² explants.

  - Wash under running tap water with mild detergent for 30 min.

  - Apply heat treatment at 45°C in a water bath for 45 min.

  - Surface disinfect with 70% (v/v) ethanol for 1 min, followed by 1.5% (w/v) sodium hypochlorite for 10 min.

  - Rinse thoroughly (4x) with sterile distilled water in a laminar flow cabinet.

2. Callus Induction

- Culture Conditions: Maintain at 25 ± 1°C in the dark.

- Basal Medium: Murashige and Skoog (MS) salts and vitamins.

- Plant Growth Regulators (PGRs): Supplement with 2 mg/L 2,4-D, 2 mg/L NAA, and 1 mg/L BAP.

- Observations: Callus should initiate from the base of explants within 3 weeks.
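Preparing the induction medium comes down to a dilution calculation for each growth regulator. The sketch below computes how much stock solution to add for the concentrations listed above; the 1 mg/mL stock concentration and the 0.5 L batch size are assumptions for illustration and should be replaced with the values used in your laboratory.

```python
def stock_volume_ml(target_mg_per_l: float, medium_volume_l: float, stock_mg_per_ml: float) -> float:
    """Volume of stock (mL) that brings the medium to the target concentration."""
    return target_mg_per_l * medium_volume_l / stock_mg_per_ml


# Target concentrations for callus induction, from the protocol (mg/L)
induction_pgrs = {"2,4-D": 2.0, "NAA": 2.0, "BAP": 1.0}

STOCK_MG_PER_ML = 1.0  # assumed stock concentration for every regulator
MEDIUM_VOLUME_L = 0.5  # assumed batch size

volumes = {
    name: stock_volume_ml(conc, MEDIUM_VOLUME_L, STOCK_MG_PER_ML)
    for name, conc in induction_pgrs.items()
}
for name, vol in volumes.items():
    print(f"{name}: add {vol:.2f} mL of stock to {MEDIUM_VOLUME_L} L of MS medium")
```

With these assumptions, 0.5 L of medium needs 1.00 mL each of the 2,4-D and NAA stocks and 0.50 mL of the BAP stock.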

3. Long-term Callus Maintenance

- Medium: MS medium with 0.5 mg/L 2,4-D.

- Additives for Phenolic Control: Include 150 mg/L ascorbic acid, 100 mg/L citric acid, and 500 mg/L activated charcoal.

- Subculturing: Transfer callus to fresh medium every 4-6 weeks.

4. Shoot Regeneration

- Culture Conditions: 16-h light/8-h dark photoperiod, 25 ± 1°C.

- Medium: Transfer maintained callus to MS medium containing 2 mg/L BAP, 2 mg/L Kin, and 0.25 mg/L NAA.

- Observations: Shoot primordia should appear within 2-4 weeks.

5. Rooting and Cormel Formation

- Rooting: Transfer elongated shoots (>3 cm) to MS medium without PGRs or with a low auxin concentration.

- Cormel Induction: For gladiolus, use MS medium with 9% sucrose and 2 mg/L IAA to induce cormels at the base of plantlets.

6. Acclimatization

- Transfer well-rooted plantlets to greenhouse conditions. A survival rate of 100% was achieved in gladiolus [25].

Protocol: Integration with Single-Cell RNA Sequencing

This ancillary protocol outlines steps for profiling callus development using scRNA-seq.

1. Experimental Design and Sampling

- Time Points: Collect samples at key developmental stages: explant (T0), callus initiation (T1, 3-7 days), proliferating callus (T2, 2-3 weeks), and early organogenesis (T3, 1-2 weeks on regeneration medium) [11].

- Replicates: Include at least three biological replicates per time point.

2. Single-Cell Suspension Preparation

- Enzyme Solution: Prepare a solution containing 1.5% Cellulase R-10, 0.4% Macerozyme R-10, 0.1% Pectolyase Y-23, 20 mM KCl, 20 mM MES (pH 5.7), and 10 mM CaCl₂.

- Digestion: Finely chop 0.5 g of callus tissue, incubate in 10 mL enzyme solution for 3-6 hours at 25°C with gentle shaking (40 rpm).

- Filtration and Washing: Pass the digest through a 40 μm cell strainer. Centrifuge the filtrate at 500 x g for 5 min and resuspend the pellet in a wash solution (10 mM MES, 5 mM CaCl₂, 0.5 M Mannitol, pH 5.7).

- Viability and Counting: Assess viability using Fluorescein Diacetate (FDA) staining [27] and count cells with a hemocytometer. Aim for >85% viability and a concentration of 800-1,200 cells/μL.
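The viability and concentration targets above can be checked directly from hemocytometer counts. The sketch assumes a standard Neubauer-type chamber, in which each large square (1 mm × 1 mm × 0.1 mm) holds 0.1 μL, so cells/μL equals the mean count per square times the dilution factor times 10. The counts are hypothetical.

```python
def cells_per_ul(counts_per_square, dilution_factor=1.0):
    """Concentration in cells/uL from counts in 0.1 uL hemocytometer squares."""
    mean_count = sum(counts_per_square) / len(counts_per_square)
    return mean_count * dilution_factor * 10.0  # 1 / 0.1 uL per square


def viability(live_total, dead_total):
    """Fraction of counted cells that are alive."""
    return live_total / (live_total + dead_total)


# Hypothetical counts from four large squares (FDA-positive = live)
live_counts = [92, 88, 95, 85]
dead_counts = [8, 10, 6, 12]

live_conc = cells_per_ul(live_counts)
viab = viability(sum(live_counts), sum(dead_counts))

# Targets stated in the protocol: >85% viability, 800-1,200 cells/uL
ready_to_load = viab > 0.85 and 800 <= live_conc <= 1200
print(f"{live_conc:.0f} live cells/uL, viability {viab:.1%}, ready: {ready_to_load}")
```

If the concentration falls outside the target range, dilute with wash solution or re-pellet and resuspend in a smaller volume before loading.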

3. Library Preparation and Sequencing

- Platform: Use a commercial high-throughput platform (e.g., 10x Genomics Chromium).

- Target: Aim for 5,000-10,000 cells per sample.

- Sequencing Depth: Target a minimum of 50,000 reads per cell.
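These targets translate into a sequencing budget that is worth estimating before ordering runs. The sketch below multiplies out the design described in this protocol (four time points, three replicates, 5,000-10,000 cells per sample, 50,000 reads per cell); it ignores practical losses such as failed cells or uneven pooling, so treat the result as a lower bound.

```python
def total_reads(n_samples: int, cells_per_sample: int, reads_per_cell: int) -> int:
    """Total reads needed to reach the target depth for every cell."""
    return n_samples * cells_per_sample * reads_per_cell


TIME_POINTS = 4        # T0-T3 in the sampling design
REPLICATES = 3         # biological replicates per time point
READS_PER_CELL = 50_000

n_samples = TIME_POINTS * REPLICATES
low = total_reads(n_samples, 5_000, READS_PER_CELL)
high = total_reads(n_samples, 10_000, READS_PER_CELL)

print(f"{n_samples} samples: {low / 1e9:.1f}-{high / 1e9:.1f} billion reads")
# 12 samples: 3.0-6.0 billion reads
```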

4. Computational Analysis

- Data Processing: Use Cell Ranger or similar tools for alignment, barcode assignment, and UMI counting.

- Dimensionality Reduction and Clustering: Perform PCA, followed by graph-based clustering embedded in UMAP for visualization [11].

- Trajectory Inference: Reconstruct developmental pathways using pseudotime analysis tools (e.g., Monocle3, PAGA) to model the continuum from somatic cell to callus to regenerated organ [11] [31].

- Differential Expression and Network Analysis: Identify stage-specific marker genes and reconstruct gene regulatory networks using WGCNA or similar approaches [31].

Visualization of Developmental Pathways and Workflows

The following diagrams illustrate the core concepts and experimental workflows.

Diagram Title: Cellular Reprogramming Pathway in Plant Regeneration

Diagram Title: Integrated Experimental Workflow for Regeneration and scRNA-seq Analysis

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Research Reagent Solutions for Callus Regeneration and scRNA-seq

| Category | Item | Function / Application | Example Usage / Note |
|---|---|---|---|
| Basal Media | Murashige and Skoog (MS) Medium | Provides essential macro/micronutrients, vitamins | Standard for many species; full- or half-strength [25] [26] [27] |
| | Woody Plant Medium (WPM) | Lower salt concentration, suitable for woody plants | Used for Paramignya trimera [29] |
| Auxins | 2,4-Dichlorophenoxyacetic acid (2,4-D) | Potent synthetic auxin; induces cell division & callus formation | Critical for callus induction in gladiolus (2 mg/L) [25], rice (1 mg/L) [27] |
| | Naphthalene Acetic Acid (NAA) | Stable synthetic auxin; promotes callus formation & rooting | Used in combination with 2,4-D in gladiolus [25] |
| | Indole-3-acetic Acid (IAA) | Natural auxin; used for root induction | Effective for stevia rooting [26] |
| Cytokinins | 6-Benzylaminopurine (BAP) | Promotes cell division and shoot organogenesis | Key for shoot regeneration in gladiolus (2 mg/L) and stevia [25] [26] |
| | Kinetin (Kin) | Stimulates shoot formation and growth | Used in combination with BAP in gladiolus [25] |
| | Thidiazuron (TDZ) | Potent cytokinin-like regulator; induces organogenesis | Used for shoot organogenesis in P. trimera [29] |
| Additives | Activated Charcoal (AC) | Absorbs pigments, toxins, and excess PGRs | Reduced phenolic browning in gladiolus (500 mg/L) [25] |
| | Ascorbic & Citric Acid | Antioxidants that reduce phenolic oxidation | 150 mg/L & 100 mg/L in gladiolus callus maintenance [25] |
| | Malt Extract | Contains undefined growth factors; promotes embryogenesis | Enhanced somatic embryogenesis in P. trimera (500 mg/L) [29] |
| scRNA-seq | Enzyme Cocktail (Cellulase, Macerozyme) | Digests cell wall to release protoplasts | Essential for generating single-cell suspensions from callus |
| | Fluorescein Diacetate (FDA) | Stains live cells; assesses viability pre-sequencing | Used in rice microspore culture [27] |
| | 10x Genomics Chromium | Platform for high-throughput single-cell partitioning | Industry standard for droplet-based scRNA-seq |
| Genetic Stability | ISSR Markers | Multi-locus dominant markers; assesses somaclonal variation | Confirmed genetic fidelity in gladiolus and stevia [25] [26] |
| | RAPD Markers | Random amplification; screens for DNA-level polymorphisms | Used alongside ISSR in stevia [26] |
| | Flow Cytometry | Rapid ploidy analysis | Verified gladiolus regenerants were diploid [25] |

Single-cell RNA sequencing (scRNA-seq) has revolutionized the biological sciences by enabling the investigation of transcriptional profiles at the resolution of the fundamental unit of life—the cell. Unlike traditional bulk RNA sequencing, which averages gene expression across thousands to millions of cells, scRNA-seq captures the heterogeneity and stochasticity inherent in biological systems, revealing cellular diversity that would otherwise be obscured [9] [10]. This technological advancement is pivotal for dissecting the complex molecular networks that govern normal development and the mechanisms that underlie disease pathogenesis. By tracing developmental trajectories and identifying rare cell populations, scRNA-seq provides an unprecedented window into the dynamic processes that shape tissue formation, homeostasis, and dysfunction [10] [32]. The ability to study the transcriptome of individual cells has transformed our understanding of cellular fate decisions, lineage commitment, and the regulatory circuits that drive these processes, offering profound insights for both basic research and clinical applications [23].

ScRNA-seq Methodologies and Experimental Design

The foundation of any scRNA-seq study lies in selecting an appropriate protocol, as each method offers distinct advantages and limitations depending on the biological question. The core steps involve single-cell isolation, cell lysis, reverse transcription, cDNA amplification, and library preparation [10] [32]. Protocols can be broadly categorized by their transcript coverage: full-length methods (e.g., Smart-Seq2, MATQ-Seq) capture nearly complete transcripts, enabling isoform usage analysis and detection of RNA editing, while 3'- or 5'-end counting methods (e.g., Drop-Seq, inDrop, 10x Chromium) focus on digital gene expression counting, often with higher throughput and lower cost per cell [33] [32]. A critical innovation in protocol design is the incorporation of Unique Molecular Identifiers (UMIs), which tag individual mRNA molecules to correct for amplification bias and allow for absolute transcript quantification [10]. More recently, total RNA sequencing protocols like VASA-seq have been developed to detect both polyadenylated and non-polyadenylated transcripts, including long non-coding RNAs (lncRNAs) and short non-coding RNAs (sncRNAs), thereby providing a more comprehensive view of the cellular transcriptome [33].
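The way UMIs correct for amplification bias can be illustrated with a minimal counting sketch: reads that share the same cell barcode, gene, and UMI are treated as PCR copies of one original molecule and counted once. The barcodes and UMIs below are shortened, hypothetical sequences, and the sketch omits the sequencing-error correction of UMIs that production pipelines usually perform.

```python
from collections import defaultdict


def count_umis(reads):
    """Collapse reads sharing (cell barcode, gene, UMI) and count molecules per cell and gene."""
    seen = set()
    counts = defaultdict(int)
    for cell, gene, umi in reads:
        key = (cell, gene, umi)
        if key not in seen:  # the first read of a molecule counts; PCR duplicates do not
            seen.add(key)
            counts[(cell, gene)] += 1
    return dict(counts)


# Hypothetical aligned reads: (cell barcode, gene, UMI)
reads = [
    ("AAAC", "GATA4", "TTGA"),
    ("AAAC", "GATA4", "TTGA"),   # PCR duplicate
    ("AAAC", "GATA4", "TTGA"),   # PCR duplicate
    ("AAAC", "GATA4", "CCAT"),   # second GATA4 molecule in the same cell
    ("AAAC", "NKX2-5", "GGTA"),
    ("TTTG", "GATA4", "TTGA"),   # same UMI, different cell: a separate molecule
]

umi_counts = count_umis(reads)
print(umi_counts)
# {('AAAC', 'GATA4'): 2, ('AAAC', 'NKX2-5'): 1, ('TTTG', 'GATA4'): 1}
```

Six reads collapse to four molecules, so a gene that amplified efficiently is not mistaken for one that was highly expressed.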

Experimental Workflow and Platform Selection

The following diagram outlines a generalized scRNA-seq workflow, from sample preparation to data analysis:

Table 1: Common scRNA-seq Platforms and Their Characteristics

| Platform/Protocol | Cell Isolation Strategy | Transcript Coverage | UMIs | Throughput | Primary Application |
|---|---|---|---|---|---|
| 10x Genomics Chromium | Droplet-based | 3'-end | Yes | High (10,000s of cells) | Cell atlas, heterogeneity |
| Smart-Seq2 | FACS/Microfluidics | Full-length | No | Low (96-384 cells) | Isoform analysis, SNP detection |
| VASA-seq | Droplet-based/Plate | Total RNA | Yes | High (10,000s of cells) | Non-coding RNA, splicing analysis |
| Fluidigm C1 | Microfluidics IFC | Full-length | No | Medium (800 cells) | High sensitivity, predefined cell types |
| SPLiT-Seq | Combinatorial Indexing | 3'-end | Yes | Very High (1,000,000s of cells) | Fixed tissue, large sample numbers |

The choice of platform has direct implications on data output. For instance, droplet-based methods like 10x Chromium and VASA-seq excel in profiling tens of thousands of cells, making them ideal for discovering novel cell types within complex tissues [33] [10]. In contrast, full-length, plate-based methods like Smart-Seq2 offer superior sensitivity for detecting low-abundance transcripts and are better suited for detailed analysis of alternative splicing [32]. A key consideration during sample preparation is the isolation of viable single cells. While fluorescence-activated cell sorting (FACS) provides high specificity, microfluidic devices (e.g., Fluidigm C1) offer integrated capture and processing. For difficult-to-dissociate tissues or frozen samples, single-nucleus RNA-seq (snRNA-seq) provides a viable alternative, minimizing dissociation-induced stress responses [10] [32].

Application Notes: Unraveling Development and Disease

Mapping Developmental Trajectories

scRNA-seq is uniquely powerful for reconstructing developmental lineages and understanding the process of cellular differentiation. By applying pseudotime analysis, computational tools can order individual cells along a continuum of differentiation, inferring a developmental trajectory based on transcriptional similarity [11] [23]. This approach reveals the sequence of gene expression changes that occur as a progenitor cell transitions into a fully differentiated state.
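As a rough illustration of how cells can be ordered along a continuum, the sketch below treats pseudotime as the shortest-path distance from a chosen root cell through a graph that connects transcriptionally similar cells. This is a simplification of what trajectory tools do internally; the graph, its edge weights (standing in for expression distances), and the node names are all hypothetical.

```python
import heapq


def pseudotime_from_root(graph, root):
    """Shortest-path distance from the root to every reachable node (Dijkstra)."""
    dist = {root: 0.0}
    heap = [(0.0, root)]
    while heap:
        d, node = heapq.heappop(heap)
        if d > dist.get(node, float("inf")):
            continue  # stale heap entry
        for neighbor, weight in graph.get(node, []):
            nd = d + weight
            if nd < dist.get(neighbor, float("inf")):
                dist[neighbor] = nd
                heapq.heappush(heap, (nd, neighbor))
    return dist


def undirected(edges):
    """Build an adjacency list from (node_a, node_b, weight) tuples."""
    graph = {}
    for a, b, w in edges:
        graph.setdefault(a, []).append((b, w))
        graph.setdefault(b, []).append((a, w))
    return graph


# Hypothetical similarity graph with one branch point
edges = [
    ("progenitor", "intermediate_1", 1.0),
    ("intermediate_1", "intermediate_2", 1.0),
    ("intermediate_2", "fate_A", 1.5),   # branch A
    ("intermediate_2", "fate_B", 2.0),   # branch B
]

pseudotime = pseudotime_from_root(undirected(edges), root="progenitor")
ordering = sorted(pseudotime, key=pseudotime.get)
print(ordering)
# ['progenitor', 'intermediate_1', 'intermediate_2', 'fate_A', 'fate_B']
```

Because both fates are reached through the same intermediate state, they share early pseudotime values and diverge only after the branch point, which is why the choice of root cell strongly shapes the inferred ordering.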

A prominent example is the differentiation of human induced pluripotent stem cells (iPSCs) into cardiomyocytes. A study profiling 32,365 cells across four time points (days 0, 2, 4, and 10) successfully reconstructed this cardiac differentiation trajectory [23]. The analysis began with iPSCs expressing pluripotency markers (NANOG, POU5F1), progressed through a primitive streak/mesoderm state (marked by T, MIXL1), and gave rise to cardiac progenitors (expressing NKX2-5, ISL1, TBX5), before finally maturing into definitive cardiomyocytes. The cardiomyocyte cluster was identified by the high expression of sarcomeric genes (MYL7, TNNT2, TTN) and enrichment of pathways like "Cardiac muscle contraction" and "Adrenergic signaling in cardiomyocytes" [23]. This detailed mapping provides a reference for understanding normal heart development and offers a model to study congenital heart diseases.

Similarly, in plant biology, scRNA-seq has been used to investigate the regenerative potential of Arabidopsis callus cells. The study tracked cells through key developmental stages—initiation, proliferation, and greening—revealing distinct transcription factor networks and highlighting the role of environmental factors like oxygen and light in regulating this process [11].

Deciphering Disease Mechanisms

In disease contexts, particularly cancer, scRNA-seq has been instrumental in characterizing intra-tumoral heterogeneity and understanding the cellular hierarchy and plasticity that drive disease progression and therapy resistance. In glioblastoma, a highly aggressive brain tumor, scRNA-seq of patient-derived cells has helped move beyond a bulk-tumor view to identify distinct transcriptional signatures of rare cell subpopulations, including cancer stem cells that may be responsible for tumor recurrence [34].

The technology also enables the study of the tumor microenvironment (TME) at a single-cell level, dissecting the complex interactions between malignant cells and non-malignant cells such as immune infiltrates and stromal cells. This can reveal mechanisms of immune evasion and identify potential targets for immunotherapy [32]. The ability to uncover novel and rare cell types, along with their specific gene regulatory networks, positions scRNA-seq as a cornerstone technology for biomarker discovery and the advancement of personalized medicine [9] [32].

Detailed Experimental Protocol

This section provides a generalized but detailed protocol for a droplet-based scRNA-seq experiment, suitable for profiling complex tissues.

Sample Preparation and Single-Cell Suspension

- Tissue Dissociation: Mechanically dissect the tissue of interest and mince it into small fragments (~1-2 mm³). Use a combination of enzymatic digestion (e.g., collagenase, trypsin) tailored to the tissue type and gentle trituration to dissociate the fragments into a single-cell suspension. Perform all steps on ice or at 4°C whenever possible to preserve RNA integrity.

- Quality Control and Viability Assessment: Pass the cell suspension through a flow cytometry-compatible filter (e.g., 40 μm nylon mesh) to remove clumps and debris. Determine cell viability and concentration using a hemocytometer with Trypan Blue staining or an automated cell counter. Critical: A viability of >90% is strongly recommended to minimize background noise from apoptotic cells.

- Cell Sorting (Optional): If targeting a rare population, use FACS to sort cells based on specific surface markers or fluorescent reporters. Resuspend the final cell preparation at an optimized concentration (e.g., 1,000 cells/μL) in a cold, protein-rich buffer like PBS with 1% BSA to maintain cell viability.

Single-Cell Partitioning and Library Preparation

- Single-Cell Barcoding: Load the single-cell suspension, along with barcoded beads and partitioning oil, into a commercial droplet-based system (e.g., 10x Genomics Chromium). The system will co-encapsulate individual cells with single barcoded beads in nanoliter-scale droplets. Each bead is coated with oligonucleotides containing a cell barcode (unique to each droplet), a UMI, and a poly(dT) sequence.

- In-Droplet Reverse Transcription: Within each droplet, cells are lysed, and the poly(dT) primers on the beads capture polyadenylated mRNA. Reverse transcription then generates cDNA molecules tagged with the cell barcode and UMI.

- Library Construction: Break the droplets and pool the barcoded cDNA. Synthesize the second strand and amplify the cDNA via PCR. During this step, sample indexes and sequencing adapters (e.g., P5 and P7 for Illumina platforms) are added to create the final sequencing library.

- Library QC and Sequencing: Quality-check the library using a Bioanalyzer or TapeStation to confirm the expected size distribution and quantify the library using qPCR. Pool libraries if multiplexing and sequence on an appropriate Illumina platform (e.g., NovaSeq) with a read depth of 20,000-50,000 reads per cell as a starting guideline.

Key Reagent Solutions

Table 2: Essential Research Reagents for scRNA-seq

| Reagent / Material | Function | Example |
|---|---|---|
| Cell Suspension Buffer | Maintains cell viability and prevents clumping during loading. | PBS + 0.04% BSA or 1% FBS |
| Barcoded Beads | Uniquely labels all mRNA from a single cell with a shared barcode and UMIs. | 10x Genomics Gel Beads |
| Partitioning Oil | Creates stable, single-cell-containing droplets for parallel processing. | 10x Genomics Partitioning Oil |
| Reverse Transcriptase Enzyme | Synthesizes stable cDNA from captured mRNA templates. | Maxima H- Reverse Transcriptase |
| Library Amplification Kit | Amplifies barcoded cDNA and adds sequencing adapters. | KAPA HiFi HotStart ReadyMix |
| Dual Index Kit | Adds unique sample indexes to allow for multiplexing of libraries. | 10x Genomics Dual Index Kit |

Data Analysis and Computational Tools

Core Analytical Workflow

The analytical workflow for scRNA-seq data involves several standardized steps, typically executed using R or Python-based environments. The following diagram illustrates the key stages of data processing and interpretation:

- Quality Control and Filtering: Sequence demultiplexing and alignment to a reference genome are performed using tools like Cell Ranger (10x Genomics) or STARsolo. The initial quality control step involves filtering out low-quality cells, which are typically characterized by a low number of detected genes or a high proportion of mitochondrial reads (indicating apoptosis or cellular stress), as well as cells with an unusually high UMI count (potentially indicating doublets) [10] [23]. A common threshold is to remove cells where mitochondrial gene counts exceed 10% [23].

- Normalization, Integration, and Clustering: Data normalization (e.g., with SCTransform) corrects for technical variation such as sequencing depth. If multiple samples are involved, integration tools (e.g., Harmony, Seurat's CCA) are used to remove batch effects. Principal component analysis (PCA) is performed on the highly variable genes, followed by graph-based clustering on the leading principal components to group transcriptionally similar cells; UMAP or t-SNE is then used to visualize the result in two dimensions [23].

- Cluster Annotation and Trajectory Inference: Cell clusters are annotated into biological cell types by finding differentially expressed genes (DEGs) for each cluster and comparing them to known marker genes from databases. To understand dynamic processes, pseudotime analysis is conducted using tools like Monocle or PAGA. This places cells along a reconstructed trajectory, modeling the continuous process of development or transition between states [11] [23].

- Advanced Analysis: Further analysis can include SCENIC for inferring gene regulatory networks, CellChat for analyzing cell-cell communication, and AUCell for scoring gene set activity [23].
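The quality control step of this workflow can be sketched on a toy count matrix. This is a minimal illustration, not the Cell Ranger or Seurat implementation. The 10% mitochondrial threshold comes from the text above, while `min_genes=200`, `max_umis=800`, the `mt-` gene-name prefix, and the simulated counts are assumptions chosen for the example.

```python
import numpy as np

def qc_keep_mask(counts, gene_names, min_genes=200, max_mito_frac=0.10,
                 max_umis=800):
    """Boolean mask of cells (rows) that pass the three QC filters."""
    is_mito = np.array([g.lower().startswith("mt-") for g in gene_names])
    genes_detected = (counts > 0).sum(axis=1)          # complexity per cell
    total_umis = counts.sum(axis=1)                    # library size per cell
    mito_frac = counts[:, is_mito].sum(axis=1) / np.maximum(total_umis, 1)
    return ((genes_detected >= min_genes)
            & (mito_frac <= max_mito_frac)
            & (total_umis <= max_umis))

# Toy data: 200 cells x 500 genes, the first 20 genes are "mitochondrial"
rng = np.random.default_rng(0)
n_cells, n_genes = 200, 500
gene_names = [f"mt-G{i}" if i < 20 else f"G{i}" for i in range(n_genes)]
counts = rng.poisson(1.0, size=(n_cells, n_genes))
counts[0:10, :20] *= 30     # stressed cells: inflated mitochondrial counts
counts[10:15, :] *= 2       # doublet-like cells: roughly doubled UMI totals
counts[15:20, 100:] = 0     # low-complexity cells: few detected genes

keep = qc_keep_mask(counts, gene_names)
filtered = counts[keep]
print(f"kept {keep.sum()} of {n_cells} cells")
```

In practice the gene and UMI cutoffs are set per dataset, usually after inspecting the distributions, and dedicated doublet detectors are preferred over a fixed UMI ceiling.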

Visualization and Accessibility

Effective visualization is critical for interpreting scRNA-seq data. Tools like dittoSeq provide color-blind-friendly and publication-ready visualizations for dimensionality reduction plots, heatmaps, and expression plots, seamlessly integrating with popular analysis frameworks like Seurat and SingleCellExperiment [14]. For spatial transcriptomics data, which adds locational context to gene expression, tools like Spaco offer space-aware colorization methods to better visualize categorical data within tissue topologies [35].

Single-cell RNA sequencing has fundamentally altered our approach to investigating biological systems, providing a high-resolution lens to examine the cellular heterogeneity that underpins both normal development and disease. By enabling the deconstruction of tissues into their constituent cell types, tracing lineage trajectories, and revealing the molecular signatures of rare but critical populations, scRNA-seq delivers on the promise of the cell theory. The ongoing refinement of protocols, such as the development of total RNA-seq and multi-omic assays, coupled with increasingly sophisticated and user-friendly computational tools, ensures that this technology will remain at the forefront of biomedical research. As we continue to build comprehensive atlases of human tissues in health and disease, the biological significance uncovered by scRNA-seq will be instrumental in driving forward the fields of regenerative medicine, drug discovery, and personalized therapeutics.

From Data to Discovery: Computational Methods and Real-World Applications

Single-cell RNA sequencing (scRNA-seq) has revolutionized our ability to study developmental processes, cellular heterogeneity, and disease progression at unprecedented resolution. A fundamental challenge in analyzing these datasets is reconstructing dynamic biological processes from static snapshots of gene expression. This has given rise to several core computational techniques for trajectory inference, including pseudotime analysis, RNA velocity, and related methods that collectively enable researchers to infer temporal dynamics from cross-sectional data [36]. These methods allow scientists to order cells along developmental trajectories, predict future cell states, and uncover the molecular drivers of cellular fate decisions.

In developmental biology and drug discovery, understanding the trajectory of cell differentiation is crucial for identifying key transition points and potential therapeutic targets. Pseudotime analysis provides a quantitative measure of progress through a biological process, while RNA velocity infers the immediate future state of cells based on splicing kinetics [37]. When integrated, these approaches form a powerful framework for reconstructing developmental lineages and understanding the dynamics of gene regulation.

Theoretical Foundations

Pseudotime Analysis

Pseudotime analysis is a computational approach that orders individual cells along a trajectory representing a biological process such as differentiation, development, or disease progression. The core assumption is that transcriptional similarity between cells reflects their temporal progression through a dynamic process. Pseudotime is defined as a "quantitative measure of progress through a biological process" and is inferred from the transcriptomic profiles of cells captured at a single time point [38].

The mathematical foundation of pseudotime construction typically begins with dimensionality reduction, projecting high-dimensional scRNA-seq data onto a lower-dimensional space that preserves the intrinsic structure of the data. This is justified by the observation that dynamical processes progress on a low-dimensional manifold [39]. Following this projection, cells are ordered based on one of several principles:

- Cluster-based approaches: Cells are first clustered using algorithms such as k-means, Leiden, or hierarchical clustering, then connections between clusters are identified and ordered [39].

- Graph-based approaches: Connections between cells are established in the reduced dimensional space, defining a graph from which trajectories are extracted [39].

- Manifold-learning approaches: Methods like Slingshot use principal curves or graphs to estimate underlying trajectories [39].

- Probabilistic frameworks: Methods like Palantir model trajectories as Markov chains and assign pseudotime based on transition probabilities between cell states [39].
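As a concrete instance of the graph-based principle, the sketch below reduces a toy expression matrix with PCA, builds a k-nearest-neighbor graph, and defines pseudotime as the shortest-path distance from a chosen root cell. It is a simplified illustration under stated assumptions (simulated data, `k=15`, root at cell 0) and does not reproduce any specific published method.

```python
import heapq
import numpy as np

def pca(X, n_components):
    """Project centered data onto its leading principal components."""
    Xc = X - X.mean(axis=0)
    U, S, _ = np.linalg.svd(Xc, full_matrices=False)
    return U[:, :n_components] * S[:n_components]

def knn_graph(Z, k):
    """Symmetric kNN graph as a list of {neighbor: distance} dicts."""
    d = np.linalg.norm(Z[:, None, :] - Z[None, :, :], axis=2)
    neighbors = np.argsort(d, axis=1)[:, 1:k + 1]   # column 0 is the cell itself
    adj = [dict() for _ in range(len(Z))]
    for i, nbrs in enumerate(neighbors):
        for j in nbrs:
            adj[i][int(j)] = float(d[i, j])
            adj[int(j)][i] = float(d[i, j])
    return adj

def geodesic_pseudotime(adj, root):
    """Dijkstra shortest-path distance from the root, scaled to [0, 1]."""
    dist = np.full(len(adj), np.inf)
    dist[root] = 0.0
    heap = [(0.0, root)]
    while heap:
        d_u, u = heapq.heappop(heap)
        if d_u > dist[u]:
            continue                      # stale heap entry
        for v, w in adj[u].items():
            if d_u + w < dist[v]:
                dist[v] = d_u + w
                heapq.heappush(heap, (dist[v], v))
    return dist / dist[np.isfinite(dist)].max()

# Toy data: cells sampled along a smooth curve in gene space plus noise
rng = np.random.default_rng(1)
n_cells, n_genes = 300, 50
true_time = np.linspace(0.0, 1.0, n_cells)
w1, w2 = rng.normal(size=(2, n_genes))
X = np.outer(true_time, w1) + np.outer(np.sin(np.pi * true_time), w2)
X += rng.normal(scale=0.01, size=X.shape)

Z = pca(X, n_components=5)
adj = knn_graph(Z, k=15)
pseudotime = geodesic_pseudotime(adj, root=0)
print("correlation with true time:", np.corrcoef(pseudotime, true_time)[0, 1])
```

The root must be supplied from biological knowledge. The graph alone defines an ordering but not its direction.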

A significant challenge in pseudotime analysis is that transcriptional similarity does not always imply developmental relationships. For example, primitive endoderm and definitive endoderm in early mammalian development have similar expression profiles but emerge from different precursors at different developmental stages [36]. This highlights the importance of incorporating additional information, such as RNA velocity or time-series data, to improve trajectory inference.

RNA Velocity

RNA velocity exploits the dynamics of RNA splicing to predict the immediate future state of individual cells. The method distinguishes between unspliced (nascent) and spliced (mature) mRNAs, which are captured simultaneously in standard scRNA-seq protocols [37]. The core concept is that the ratio of unspliced to spliced mRNAs for each gene provides information about the rate and direction of gene expression changes.

The kinetics of RNA velocity are described by a system of linear differential equations:

$$\frac{du(t)}{dt} = \alpha(t) - \beta\,u(t), \qquad \frac{ds(t)}{dt} = \beta\,u(t) - \gamma\,s(t)$$

where u(t) and s(t) represent the abundance of unspliced and spliced mRNAs at time t, respectively, α(t) is the transcription rate, β is the splicing rate, and γ is the degradation rate [40].

RNA velocity vectors are high-dimensional predictors that estimate the future state of individual cells on a timescale of hours. When projected onto low-dimensional embeddings, these vectors reveal the directional flow of cells, enabling the inference of developmental trajectories and fate decisions [37]. However, the method relies on the assumption that splicing rates are constant and gene-specific, which may not hold true in all biological contexts [36].
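To make the splicing kinetics concrete, the sketch below integrates the standard two-equation model (unspliced mRNA produced at rate α and spliced at rate β; spliced mRNA degraded at rate γ) for a single gene with forward Euler steps. The rate values, the constant α, and the step size are illustrative assumptions. The velocity is taken as the time derivative of spliced mRNA, βu − γs.

```python
import numpy as np

def simulate_splicing(alpha, beta, gamma, t_end=20.0, dt=0.01, u0=0.0, s0=0.0):
    """Forward-Euler integration of du/dt = alpha - beta*u, ds/dt = beta*u - gamma*s."""
    n_steps = int(round(t_end / dt))
    u = np.empty(n_steps + 1)
    s = np.empty(n_steps + 1)
    u[0], s[0] = u0, s0
    for k in range(n_steps):
        du = alpha - beta * u[k]          # transcription minus splicing
        ds = beta * u[k] - gamma * s[k]   # splicing minus degradation
        u[k + 1] = u[k] + dt * du
        s[k + 1] = s[k] + dt * ds
    return u, s

# Assumed rates for one gene that is switched on at t = 0
alpha, beta, gamma = 2.0, 1.0, 0.5
u, s = simulate_splicing(alpha, beta, gamma)
velocity = beta * u - gamma * s           # ds/dt along the simulated path

print("final u, s:", u[-1], s[-1])
print("steady state u*, s*:", alpha / beta, alpha / gamma)
```

During induction the velocity is positive because unspliced mRNA accumulates before spliced mRNA catches up. At steady state (u = α/β, s = α/γ) it returns to zero, which is why the unspliced-to-spliced ratio carries directional information.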

Integrated Trajectory Inference Methods

More recent methods integrate gene expression data with RNA velocity to overcome limitations of individual approaches. CellPath, for example, is a trajectory inference method that leverages both single-cell gene expression and RNA velocity information to infer high-resolution trajectories without constraints on topology [41]. This integration allows CellPath to automatically detect trajectory direction and identify multiple parallel trajectories that might represent distinct biological processes within the same dataset.

Another innovative approach is UniTVelo, which introduces a unified latent time across the transcriptome when inferring gene expression dynamics and RNA velocity [40]. This temporal unification aggregates dynamic information across all genes to reinforce the temporal ordering of cells, addressing challenges posed by genes with weak kinetics or complex branching patterns.

Computational Protocols

Protocol 1: Standard Pseudotime Analysis with Monocle3

This protocol outlines the steps for performing pseudotime analysis using the Monocle3 package on a multi-sample scRNA-seq dataset of mouse mammary gland development [42].

Step 1: Data Preprocessing and Integration

- Download count matrices, barcode information, and feature annotations from GEO repositories (e.g., GSE164017 and GSE103275).

- Perform quality control to remove low-quality cells and doublets using tools like Scrublet.

- Normalize data using standard scRNA-seq normalization approaches.

- Integrate multiple samples using Seurat's anchor-based integration, Harmony, or MNN methods to correct for batch effects.

Step 2: Dimension Reduction and Clustering

- Reduce dimensionality using principal component analysis (PCA).

- Perform clustering using graph-based clustering algorithms (e.g., Leiden clustering).

- Visualize cells in two dimensions using UMAP or t-SNE.

Step 3: Trajectory Inference with Monocle3

- Create a cell_data_set (CDS) object from the integrated and normalized data.

- Preprocess the data with the `preprocess_cds()` function, which performs normalization and PCA.

- Reduce dimensions further using UMAP or t-SNE via `reduce_dimension()`.

- Cluster cells using `cluster_cells()`.

- Learn the trajectory graph with `learn_graph()`.

- Order cells along the trajectory using `order_cells()`, specifying a root node based on biological knowledge (e.g., progenitor cell population).

Step 4: Downstream Analysis

- Identify genes that vary along pseudotime using differential expression tests.

- Perform gene set enrichment analysis to identify biological processes associated with pseudotime.

- Visualize results by plotting cells in reduced dimension space colored by pseudotime values.
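The first downstream step, finding genes that vary along pseudotime, can be illustrated with a rank correlation between each gene and the pseudotime values. This is a deliberately simple stand-in for the differential expression tests mentioned above, not Monocle3's own procedure. The simulated expression matrix and the function name `spearman_with_pseudotime` are assumptions for the example.

```python
import numpy as np

def spearman_with_pseudotime(expr, pseudotime):
    """Spearman correlation of every gene (column) with pseudotime.

    Ranks are computed with a double argsort, which is adequate for
    continuous values without ties.
    """
    r_expr = np.argsort(np.argsort(expr, axis=0), axis=0).astype(float)
    r_time = np.argsort(np.argsort(pseudotime)).astype(float)
    r_expr -= r_expr.mean(axis=0)
    r_time -= r_time.mean()
    num = r_time @ r_expr
    den = np.sqrt((r_time ** 2).sum() * (r_expr ** 2).sum(axis=0))
    return num / den

# Toy data: 300 cells x 20 genes of normalized expression
rng = np.random.default_rng(2)
n_cells, n_genes = 300, 20
pseudotime = rng.uniform(0.0, 1.0, size=n_cells)
expr = rng.normal(size=(n_cells, n_genes))
expr[:, 0] += 10.0 * pseudotime    # gene 0 rises along the trajectory
expr[:, 1] -= 10.0 * pseudotime    # gene 1 falls along the trajectory

rho = spearman_with_pseudotime(expr, pseudotime)
ranked = np.argsort(-np.abs(rho))  # strongest association first
print("top genes:", ranked[:2], "rho:", rho[ranked[:2]])
```

A monotone correlation misses genes that peak mid-trajectory. Spline-based or graph-autocorrelation tests are better suited to those patterns.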

Table 1: Key Software Packages for Pseudotime Analysis

| Package | Methodology | Trajectory Types | Reference |
|---|---|---|---|
| Monocle3 | Single-rooted directed acyclic graph | Complex graphs | [38] |
| Slingshot | Principal curves | Linear, branching | [38] |
| Palantir | Markov chains | Branching | [39] |
| DPT | Diffusion maps | Linear, branching | [39] |

Protocol 2: RNA Velocity Analysis with velocyto.py and scVelo

This protocol describes how to estimate and analyze RNA velocity using the velocyto.py and scVelo packages [43].

Step 1: Data Generation and Annotation

- Align sequencing reads to a reference genome using STAR or HISAT2.

- Annotate spliced and unspliced reads using the `velocyto run` command with appropriate annotation files.

- Generate count matrices for spliced and unspliced mRNAs.

Step 2: Data Preprocessing

- Filter genes based on minimum expression thresholds.

- Normalize counts using size factors or depth scaling.

- Select highly variable genes for downstream analysis.

- Perform dimensionality reduction using PCA.
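The four preprocessing steps can be written out on a single toy count matrix. This is a minimal sketch of the generic operations, not the scVelo or velocyto.py implementation. The thresholds (`min_cells=3`, `n_top_genes=50`, `n_pcs=10`), the depth target of 10,000, and the variance-based gene selection are assumptions for the example.

```python
import numpy as np

def preprocess(counts, min_cells=3, target_sum=1e4, n_top_genes=50, n_pcs=10):
    """Filter genes, depth-normalize, pick variable genes, and run PCA."""
    # 1. Keep genes detected in at least `min_cells` cells
    keep = (counts > 0).sum(axis=0) >= min_cells
    X = counts[:, keep].astype(float)
    # 2. Scale every cell to the same total count, then log-transform
    depth = np.maximum(X.sum(axis=1, keepdims=True), 1.0)
    X = np.log1p(X / depth * target_sum)
    # 3. Select the genes with the highest variance across cells
    hvg = np.argsort(X.var(axis=0))[::-1][:n_top_genes]
    X_hvg = X[:, hvg]
    # 4. PCA via SVD of the centered matrix
    Xc = X_hvg - X_hvg.mean(axis=0)
    U, S, _ = np.linalg.svd(Xc, full_matrices=False)
    pcs = U[:, :n_pcs] * S[:n_pcs]
    return pcs, np.flatnonzero(keep)[hvg]   # PCs and original gene indices

# Toy counts: 150 cells x 300 genes, the first 10 genes never detected
rng = np.random.default_rng(3)
counts = rng.poisson(2.0, size=(150, 300))
counts[:, :10] = 0

pcs, hvg_idx = preprocess(counts)
print(pcs.shape, hvg_idx[:5])
```

For velocity analysis the same gene filter and size factors would typically be applied to both the spliced and unspliced matrices so that their ratio stays interpretable.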

Step 3: Velocity Estimation

- For velocyto.py: Use the steady-state model to estimate RNA velocity.

- For scVelo: Choose between the deterministic (steady-state), stochastic, or dynamical model for velocity estimation; the dynamical model additionally yields a gene-shared latent time.

- Compute velocity vectors for each cell based on the phase portraits of spliced vs. unspliced counts.
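The steady-state idea behind this step can be sketched for a single gene: fit a line through the origin of the spliced-versus-unspliced phase portrait using the cells at its extremes, and take each cell's velocity as its vertical offset from that line. This is an illustration in the spirit of the steady-state model, not the velocyto.py code. It assumes the splicing rate is set to 1 (so the fitted slope is γ in units of β), and the simulated cells and the `extreme_frac` parameter are hypothetical.

```python
import numpy as np

def fit_gamma(u, s, extreme_frac=0.05):
    """Least-squares slope through the origin, fitted on extreme cells only."""
    score = u + s
    lo, hi = np.quantile(score, [extreme_frac, 1.0 - extreme_frac])
    mask = (score <= lo) | (score >= hi)   # presumed off / steady-state cells
    return float(u[mask] @ s[mask] / (s[mask] @ s[mask]))

def steady_state_velocity(u, s, extreme_frac=0.05):
    """Velocity as the residual from the steady-state line u = gamma * s."""
    gamma = fit_gamma(u, s, extreme_frac)
    return u - gamma * s, gamma

# Toy gene with alpha=4, beta=1, gamma=0.4 (steady state at u=4, s=10)
rng = np.random.default_rng(4)
alpha, beta, gamma_true = 4.0, 1.0, 0.4
t = rng.uniform(0.2, 3.0, size=100)        # cells caught during induction
u_ind = alpha / beta * (1 - np.exp(-beta * t))
s_ind = (alpha / gamma_true * (1 - np.exp(-gamma_true * t))
         + alpha / (gamma_true - beta) * (np.exp(-gamma_true * t) - np.exp(-beta * t)))
u = np.concatenate([np.abs(rng.normal(0, 0.05, 100)),    # off cells
                    u_ind + rng.normal(0, 0.05, 100),    # induced cells
                    4.0 + rng.normal(0, 0.1, 100)])      # steady-state cells
s = np.concatenate([np.abs(rng.normal(0, 0.05, 100)),
                    s_ind + rng.normal(0, 0.05, 100),
                    10.0 + rng.normal(0, 0.2, 100)])

velocity, gamma_hat = steady_state_velocity(u, s)
print("estimated gamma:", gamma_hat)
```

Cells above the fitted line (excess unspliced mRNA) receive positive velocity and are interpreted as up-regulating the gene; cells below it are down-regulating.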

Step 4: Visualization and Interpretation

- Project velocity vectors onto existing embeddings (e.g., UMAP, t-SNE).

- Visualize as streamlines or arrows indicating the direction and magnitude of cellular dynamics.

- Identify terminal states and branching points in the trajectory.
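Projecting velocities onto an embedding is commonly done by asking, for each cell, which neighbors lie in the direction its velocity points and then averaging the corresponding displacements in the embedding. The sketch below follows that general idea with a cosine-similarity kernel. The kernel width `sigma`, the neighbor count `k`, and the toy data are assumptions, and the code is not the scVelo implementation.

```python
import numpy as np

def project_velocity(X, V, E, k=10, sigma=0.1):
    """Project per-cell velocities V (gene space) onto embedding E.

    X: cells x genes expression, V: cells x genes velocity,
    E: cells x 2 embedding coordinates.
    """
    d = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)
    nbrs = np.argsort(d, axis=1)[:, 1:k + 1]       # skip the cell itself
    V_emb = np.zeros_like(E, dtype=float)
    for i in range(X.shape[0]):
        dX = X[nbrs[i]] - X[i]                     # displacement to neighbors
        cos = dX @ V[i] / (np.linalg.norm(dX, axis=1) * np.linalg.norm(V[i]) + 1e-12)
        p = np.exp(cos / sigma)
        p /= p.sum()                               # transition probabilities
        dE = E[nbrs[i]] - E[i]
        dE = dE / (np.linalg.norm(dE, axis=1, keepdims=True) + 1e-12)
        # Expected displacement minus the uniform-neighbor baseline
        V_emb[i] = p @ dE - dE.mean(axis=0)
    return V_emb

# Toy data: cells along a line in gene space, all moving "forward"
rng = np.random.default_rng(5)
n_cells, n_genes = 100, 20
t = np.linspace(0.0, 1.0, n_cells)
w = rng.normal(size=n_genes)
X = np.outer(t, w) + rng.normal(scale=0.001, size=(n_cells, n_genes))
V = np.tile(w, (n_cells, 1))                       # velocity along +t
E = np.column_stack([t, rng.normal(scale=0.001, size=n_cells)])

V_emb = project_velocity(X, V, E)
print("mean projected x-velocity:", V_emb[10:90, 0].mean())
```

Because the projected arrows depend on which neighbors exist in the data, they are best read as a qualitative summary of direction rather than as exact magnitudes.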

Protocol 3: Integrated Analysis with CellPath

CellPath is a method that integrates single-cell gene expression and RNA velocity information to infer high-resolution trajectories [41]. This protocol outlines its application.

Step 1: Input Data Preparation

- Obtain scRNA-seq count matrix.

- Calculate RNA velocity matrix using upstream methods (scVelo or velocyto).

- Construct meta-cells by grouping small clusters of cells to reduce noise.
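Meta-cell construction amounts to clustering cells into many small groups and averaging expression and velocity within each group. The sketch below illustrates that operation with a basic k-means. The clustering method, the number of meta-cells, and the helper names `kmeans` and `build_meta_cells` are assumptions for illustration and are not taken from the CellPath code.

```python
import numpy as np

def kmeans(X, n_clusters, n_iter=20, seed=0):
    """Plain Lloyd's k-means; empty clusters keep their previous center."""
    rng = np.random.default_rng(seed)
    centers = X[rng.choice(len(X), n_clusters, replace=False)]
    for _ in range(n_iter):
        d = np.linalg.norm(X[:, None, :] - centers[None, :, :], axis=2)
        labels = d.argmin(axis=1)
        for c in range(n_clusters):
            if np.any(labels == c):
                centers[c] = X[labels == c].mean(axis=0)
    return labels

def build_meta_cells(X, V, labels):
    """Average expression X and velocity V within each cluster."""
    ids = np.unique(labels)
    X_meta = np.vstack([X[labels == c].mean(axis=0) for c in ids])
    V_meta = np.vstack([V[labels == c].mean(axis=0) for c in ids])
    sizes = np.array([(labels == c).sum() for c in ids])
    return ids, X_meta, V_meta, sizes

# Toy inputs: 400 cells x 30 genes of expression and velocity
rng = np.random.default_rng(6)
X = rng.normal(size=(400, 30))
V = rng.normal(scale=0.1, size=(400, 30))

labels = kmeans(X, n_clusters=40)
ids, X_meta, V_meta, sizes = build_meta_cells(X, V, labels)
print(f"{len(ids)} meta-cells, median size {int(np.median(sizes))}")
```

Averaging over a handful of similar cells reduces dropout noise in both matrices, at the cost of resolution; the cluster size controls that trade-off.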

Step 2: Graph Construction and Path Finding

- Construct k-nearest neighbor (kNN) graphs on meta-cells.

- Apply path finding algorithms to identify possible trajectories.

- Use a greedy algorithm to select major trajectories representing key biological processes.

Step 3: Cell-level Trajectory Assignment

- Map trajectories back to individual cells from meta-cells.