Navigating Batch Effects: A Comprehensive Guide to Integrating Embryo Multi-Omics Datasets

Abstract

Integrating multiple embryo datasets from diverse studies and platforms is crucial for unlocking large-scale biological insights into early development. However, this integration is severely challenged by batch effects—technical variations that can obscure true biological signals and lead to irreproducible findings. This article provides a comprehensive guide for researchers and scientists, covering the foundational principles of batch effects in embryo studies, a practical overview of state-of-the-art correction methodologies, strategies for troubleshooting and optimization to prevent overcorrection, and a rigorous framework for validating and comparing correction performance using reference benchmarks. By synthesizing the latest computational advances and consortium-driven standards, this guide aims to empower robust and reliable data integration in developmental biology.

Understanding the Challenge: Why Batch Effects Complicate Embryo Research

Batch effects are technical sources of variation that are irrelevant to the biological questions under investigation but can systematically distort omics data analysis [1]. These non-biological variations arise from differences in experimental conditions, reagent lots, personnel, sequencing platforms, or processing times [1] [2]. In the context of embryo research, where integrating multiple datasets is essential for building comprehensive developmental atlases, batch effects present particularly formidable challenges [3]. The presence of batch effects can obscure true biological signals, lead to incorrect conclusions about developmental pathways, and ultimately compromise the reproducibility of scientific findings [1].

The profound impact of batch effects extends beyond mere technical nuisance—they represent a critical factor in the broader reproducibility crisis affecting scientific research [1]. A survey conducted by Nature found that 90% of researchers believe there is a reproducibility crisis, with over half considering it significant [1]. Batch effects from reagent variability and experimental bias have been identified as paramount factors contributing to this problem, sometimes resulting in retracted papers and discredited research findings [1]. In one notable example, the sensitivity of a fluorescent serotonin biosensor was found to be highly dependent on the reagent batch, specifically the batch of fetal bovine serum (FBS), leading to retraction of a high-profile publication when key results could not be reproduced with different reagent lots [1].

In embryonic development research, the integration of multiple single-cell RNA-sequencing datasets has become standard practice for constructing comprehensive reference atlases [3]. However, this integration process is particularly vulnerable to batch effects, which can confound the identification of true cell states and developmental trajectories. As researchers increasingly rely on stem cell-based embryo models to study early human development, the need for effective batch effect correction becomes paramount for proper validation and benchmarking against in vivo counterparts [3].

Fundamental Mechanisms

At its core, the batch effect problem stems from the basic assumptions of data representation in omics technologies [1]. In quantitative omics profiling, the absolute instrument readout or intensity (I)—whether represented as FPKM, FOT, peak area, or other measures—serves as a surrogate for the actual concentration or abundance (C) of an analyte in a sample. This relationship relies on the assumption that under any experimental conditions, there exists a linear and fixed relationship (f) between I and C, expressed as I = f(C). However, in practice, fluctuations in this relationship due to diverse experimental factors make I inherently inconsistent across different batches, leading to inevitable batch effects in omics data [1].
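A minimal sketch of this idea follows. It assumes a linear f whose gain and offset differ between two batches; the numbers are invented purely for illustration and do not come from any real instrument.

```python
# Illustrative only: abundances, gains and offsets are made-up numbers.
true_abundance = [1.0, 2.0, 4.0, 8.0]  # C: the same four samples profiled in both batches


def readout(c, gain, offset):
    """Instrument readout I = f(C), with a linear f whose terms depend on the batch."""
    return gain * c + offset


batch_a = [readout(c, gain=1.0, offset=0.0) for c in true_abundance]
batch_b = [readout(c, gain=1.5, offset=2.0) for c in true_abundance]

# Same C, different I: the per-sample gap is purely technical.
shift = [b - a for a, b in zip(batch_a, batch_b)]

print(batch_a)  # [1.0, 2.0, 4.0, 8.0]
print(batch_b)  # [3.5, 5.0, 8.0, 14.0]
print(shift)    # [2.5, 3.0, 4.0, 6.0]
```

Because the gap grows with abundance, a single constant offset would not remove it, which is one reason correction methods differ in the form of f they assume.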

Batch effects can emerge at virtually every step of a high-throughput study, though some sources are specific to particular omics types while others are more universal [1]:

Flawed or confounded study design: This occurs when samples are not collected randomly or when selection is based on specific characteristics like age, gender, or clinical outcome, creating systematic biases [1].

Protocol procedures: Variations in sample preparation, such as different centrifugal forces during plasma separation or differences in time and temperature prior to centrifugation, can cause significant changes in mRNA, proteins, and metabolites [1].

Sample storage conditions: Differences in storage temperature, duration, and freeze-thaw cycles introduce technical variations that can mask biological signals [1].

Reagent lots: Changes in reagent batches, particularly enzymes or kits used in library preparation, can introduce substantial technical variations [1].

In single-cell technologies such as scRNA-seq, batch effects are particularly pronounced due to lower RNA input, higher dropout rates, and a greater proportion of zero counts compared to bulk RNA-seq [1]. The complex nature of single-cell data, with its inherent cell-to-cell variations, makes these datasets especially vulnerable to batch effects [1] [4].

Special Considerations for Embryo Research

Embryonic development studies present unique challenges for batch effect management. The construction of comprehensive human embryo reference tools requires integration of multiple datasets spanning different developmental stages, often collected across different laboratories using varying protocols [3]. In one effort to create an integrated human embryogenesis transcriptome reference, researchers collected six published datasets covering stages from zygote to gastrula, employing fast mutual nearest neighbor (fastMNN) methods to mitigate batch effects while preserving biological signals [3]. Such integration efforts are crucial for establishing universal references for benchmarking human embryo models, but are highly susceptible to batch effects that can distort the representation of developmental trajectories [3].

Evaluating Batch Effect Correction Methods: Key Metrics and Methodologies

Performance Evaluation Frameworks

The assessment of batch effect correction (BEC) methods requires multiple complementary approaches to evaluate both technical effectiveness and biological preservation. RBET (Reference-informed Batch Effect Testing) has emerged as a robust statistical framework that leverages reference gene expression patterns to evaluate BEC performance with sensitivity to overcorrection [5]. This method utilizes housekeeping genes with stable expression patterns across cell types as internal controls to distinguish successful integration from overcorrection that erases biological variation [5].

Other established metrics include:

- kBET (k-nearest neighbor batch effect test): Measures batch mixing at the local level of every cell's neighborhood [5] [2].

- LISI (Local Inverse Simpson's Index): Evaluates batch diversity in local neighborhoods, with higher values indicating better mixing [5].

- ASW (Average Silhouette Width): Quantifies cluster quality and separation, with values closer to 1 indicating well-defined clusters [6].

- NMI (Normalized Mutual Information): Assesses biological preservation by comparing clusters to ground-truth annotations [4].
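To make the local-mixing idea behind LISI concrete, the sketch below computes an inverse Simpson's index over the batch labels of each cell's nearest neighbours. It is a simplification, not the published implementation: neighbours are weighted equally (the original LISI uses perplexity-based distance weights), and the function name `simple_lisi` and the toy coordinates are assumptions made for illustration.

```python
import math


def simple_lisi(points, batches, k=3):
    """Per-cell inverse Simpson's index of batch labels among the k nearest neighbours.

    Simplification: every neighbour counts equally and the cell itself is excluded.
    """
    scores = []
    for i, p in enumerate(points):
        # Brute-force distances to every other cell; ties are broken by index.
        dists = sorted((math.dist(p, q), j) for j, q in enumerate(points) if j != i)
        neighbours = [batches[j] for _, j in dists[:k]]
        # Inverse Simpson: 1 / sum of squared label proportions.
        props = [neighbours.count(b) / k for b in set(neighbours)]
        scores.append(1.0 / sum(pr * pr for pr in props))
    return scores


labels = ["A", "B", "A", "B", "A", "B", "A", "B"]

# Interleaved batches along one axis: every neighbourhood contains both labels.
mixed_points = [(float(x),) for x in range(8)]
mixed_scores = simple_lisi(mixed_points, labels)

# Two well-separated batches: every neighbourhood contains a single label.
separated_points = [(0.0,), (100.0,), (1.0,), (101.0,), (2.0,), (102.0,), (3.0,), (103.0,)]
separated_scores = simple_lisi(separated_points, labels)

print(mixed_scores)      # each value is about 1.8
print(separated_scores)  # each value is 1.0
```

A score of 1 means a neighbourhood contains one batch only; the upper bound is the number of batches.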

Experimental Designs for Method Validation

Rigorous evaluation of BEC methods requires carefully designed experiments that test performance under different scenarios:

Balanced vs. Confounded Designs: In balanced scenarios, samples across biological groups are evenly distributed across batches, while in confounded scenarios, biological groups are completely aligned with batch groups, creating challenging conditions for BEC methods [7].

Reference Material-Based Designs: The Quartet Project has pioneered the use of multiomics reference materials from matched cell lines to objectively assess BEC performance. This approach enables precise evaluation by providing ground truth measurements across batches and platforms [7].

Table 1: Key Metrics for Evaluating Batch Effect Correction Methods

| Metric | Measurement Focus | Optimal Value | Strengths | Limitations |
|---|---|---|---|---|
| RBET [5] | Batch effect on reference genes | Lower values indicate better correction | Sensitive to overcorrection; uses biologically meaningful signals | Requires validated reference genes |
| kBET [5] [2] | Local batch mixing | Lower values indicate better mixing | Comprehensive local assessment | Can lose discrimination with large batch effects |
| LISI [5] | Batch diversity in neighborhoods | Higher values indicate better mixing | Local assessment of integration | May favor overcorrection in some cases |
| ASW [6] | Cluster quality and separation | Closer to 1 indicates better clusters | Simple interpretation | Global measure may miss local issues |
| NMI [4] | Biological preservation against ground truth | Higher values indicate better preservation | Direct measure of biological fidelity | Requires accurate ground truth labels |

Comparative Analysis of Batch Effect Correction Methods

Algorithmic Approaches and Their Mechanisms

Batch effect correction methods can be broadly categorized into several algorithmic families, each with distinct mechanisms and applications:

1. Latent Space Merging Methods

- Seurat: Uses mutual nearest neighbors (MNNs) to find shared cell states between batches and calculate nonlinear projections to reduce inter-batch distances [8].

- Harmony: Employs cross-dataset fuzzy clustering to iteratively merge clusters of cells predicted to be in similar states [8] [7].

- fastMNN: Applies mutual nearest neighbors for batch correction in large-scale integration tasks, as demonstrated in human embryo reference construction [3].

2. Generative Models

- scVI: A variational autoencoder-based approach that parametrizes the distribution of observed counts using deep neural networks conditioned on latent variables and batch labels [8].

- CODAL: Extends variational autoencoder framework with mutual information regularization to explicitly disentangle technical and biological effects [8].

- sysVI: A conditional variational autoencoder method employing VampPrior and cycle-consistency constraints to improve integration across systems with substantial batch effects [4].

3. Ratio-Based Methods

- Ratio-based Scaling: Transforms expression values relative to concurrently profiled reference materials, particularly effective when batch effects are completely confounded with biological factors [7].

4. Tree-Based Integration

- BERT (Batch-Effect Reduction Trees): Decomposes integration tasks into binary trees of batch-effect correction steps, efficiently handling incomplete omic profiles [6].
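The mutual-nearest-neighbour idea that underlies the Seurat and fastMNN entries above can be sketched in a few lines. This is a toy illustration under stated assumptions, not either tool's implementation: the real methods work in a reduced-dimension space and apply locally weighted correction vectors, whereas the sketch only finds the pairs and averages their offset. The function names and coordinates are hypothetical.

```python
import math


def nearest(query, reference, k):
    """Indices of the k reference cells closest to the query cell."""
    order = sorted(range(len(reference)), key=lambda j: math.dist(query, reference[j]))
    return set(order[:k])


def mutual_nearest_pairs(batch1, batch2, k=1):
    """Pairs (i, j) where cell i of batch1 and cell j of batch2 select each other."""
    nn_1to2 = [nearest(c, batch2, k) for c in batch1]
    nn_2to1 = [nearest(c, batch1, k) for c in batch2]
    return [
        (i, j)
        for i in range(len(batch1))
        for j in sorted(nn_1to2[i])
        if i in nn_2to1[j]
    ]


# Batch 2 is batch 1 shifted by (1, 1); the cell at (20, 20) has no counterpart.
batch1 = [(0.0, 0.0), (5.0, 5.0), (20.0, 20.0)]
batch2 = [(1.0, 1.0), (6.0, 6.0)]

pairs = mutual_nearest_pairs(batch1, batch2)

# Average offset across the pairs: a crude global estimate of the batch effect.
shift = tuple(
    sum(batch2[j][d] - batch1[i][d] for i, j in pairs) / len(pairs) for d in range(2)
)

print(pairs)  # [(0, 0), (1, 1)]
print(shift)  # (1.0, 1.0)
```

The unpaired cell shows why the mutual requirement matters: a population present in only one batch is not forced onto an unrelated population in the other.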

Performance Comparison Across Scenarios

Recent comprehensive evaluations have revealed significant differences in method performance under various experimental conditions:

Table 2: Performance Comparison of Batch Effect Correction Methods Across Omics Types

| Method | Algorithm Type | Balanced Scenarios | Confounded Scenarios | Single-Cell Data | Multi-Omics Integration | Key Limitations |
|---|---|---|---|---|---|---|
| ComBat [7] | Empirical Bayes | Good performance | Struggles with complete confounding | Moderate performance with adaptations | Limited capabilities | Assumes balanced design; may over-correct |
| Harmony [7] | Latent space merging | Excellent performance | Moderate performance | Originally designed for single-cell | Limited capabilities | Requires substantial cell type overlap |
| Ratio-Based [7] | Reference scaling | Good performance | Best performance in completely confounded cases | Works across technologies | Excellent capabilities | Requires reference materials |
| scVI [8] | Generative model | Good performance | Moderate performance | Excellent with large datasets | Growing capabilities | Computational intensity |
| CODAL [8] | Disentangling VAE | Good performance | Good performance with confounded cell states | Excellent for perturbation datasets | Specialized for multi-batch | Complex implementation |
| BERT [6] | Tree-based integration | Excellent performance | Good performance with references | Handles various data types | Broad capabilities | Newer method with less validation |

In a comprehensive assessment of seven BEC algorithms using multiomics reference materials, the ratio-based method demonstrated superior performance in confounded scenarios where biological factors and batch factors were completely aligned [7]. This approach, which scales absolute feature values of study samples relative to concurrently profiled reference materials, proved particularly effective when batch effects were strongly confounded with biological factors of interest [7].

For single-cell embryo studies, methods like sysVI that specifically address substantial batch effects across biological systems have shown promise. sysVI's combination of VampPrior and cycle-consistency constraints enables better integration across challenging domains like cross-species comparisons, organoid-tissue integrations, and different sequencing protocols [4].

Experimental Protocols for Batch Effect Correction

Reference-Based Correction Protocol

The ratio-based method identified as particularly effective for confounded scenarios follows a systematic protocol [7]:

Reference Material Selection: Identify and characterize appropriate reference materials (e.g., Quartet Project reference materials from matched cell lines) that can be profiled concurrently with study samples.

Concurrent Profiling: In each batch, process both study samples and reference materials using identical experimental conditions and protocols.

Ratio Calculation: For each feature (gene, protein, metabolite) in each study sample, calculate ratio values using the expression data of reference samples as denominators:

Ratio_sample = Expression_sample / Expression_reference

Data Integration: Combine ratio-scaled values across batches for downstream analysis.

Validation: Assess integration quality using known biological truths and technical metrics to ensure preservation of biological signals while removing technical variations.
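The ratio calculation and integration steps above can be sketched as follows. This is a minimal illustration, assuming one reference sample per batch stored under the hypothetical key "REF", strictly positive reference values, and invented expression numbers; it is not the Quartet Project's code.

```python
def ratio_scale(batch, reference_id):
    """Divide each study sample's features by the same batch's reference profile."""
    ref = batch[reference_id]
    return {
        sample: {feature: value / ref[feature] for feature, value in profile.items()}
        for sample, profile in batch.items()
        if sample != reference_id  # the reference itself is not a study sample
    }


# Batch 2 reads everything three times higher than batch 1 (a purely technical gain).
batch_1 = {
    "REF": {"geneA": 10.0, "geneB": 4.0},
    "embryo_1": {"geneA": 20.0, "geneB": 2.0},
}
batch_2 = {
    "REF": {"geneA": 30.0, "geneB": 12.0},
    "embryo_2": {"geneA": 60.0, "geneB": 6.0},
}

# Integration: once on the ratio scale, samples from both batches are comparable.
integrated = {**ratio_scale(batch_1, "REF"), **ratio_scale(batch_2, "REF")}

print(integrated["embryo_1"])  # {'geneA': 2.0, 'geneB': 0.5}
print(integrated["embryo_2"])  # {'geneA': 2.0, 'geneB': 0.5}
```

The threefold gain cancels because it affects the study sample and the reference alike. A real pipeline would also need a policy for features where the reference value is zero or missing, which this sketch does not handle.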

Computational Integration Protocol

For computational methods like Seurat, Harmony, or scVI, a standardized workflow ensures reproducible results:

Data Preprocessing: Normalize counts within each batch using standard methods (e.g., SCTransform for Seurat, library size normalization for scVI).

Feature Selection: Identify highly variable genes or features that drive biological variation while minimizing technical noise.

Method Application: Apply the chosen batch correction method with parameters appropriate to the dataset, such as the batch covariate and the number of dimensions or latent variables.

Downstream Analysis: Perform clustering, visualization, and differential expression on integrated data.

Quality Assessment: Evaluate integration success using multiple metrics (RBET, kBET, LISI) and biological validation [5].
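The shape of this workflow can be sketched without any external library. The example below deliberately swaps in the simplest possible correction, per-batch mean centering, as a stand-in for Seurat, Harmony, or scVI; it is not how those tools work, and it assumes every batch has the same biological composition. Function names, batch names, and counts are hypothetical.

```python
import math


def normalize(counts, scale=1e4):
    """Preprocessing: library-size normalisation followed by log1p, for one cell."""
    total = sum(counts)
    return [math.log1p(c / total * scale) for c in counts]


def center_batch(cells):
    """Stand-in correction: subtract the per-gene batch mean from every cell."""
    n_genes = len(cells[0])
    means = [sum(cell[g] for cell in cells) / len(cells) for g in range(n_genes)]
    return [[cell[g] - means[g] for g in range(n_genes)] for cell in cells]


def integrate(batches):
    """batches maps a batch name to a list of raw count vectors (one per cell)."""
    corrected = []
    for name, cells in batches.items():
        normalized = [normalize(c) for c in cells]
        for cell in center_batch(normalized):
            corrected.append((name, cell))
    return corrected


# Toy input: two cells per batch, three genes; lab_b was sequenced more deeply.
example_batches = {
    "lab_a": [[10, 0, 5], [2, 8, 5]],
    "lab_b": [[100, 10, 40], [30, 90, 30]],
}
corrected = integrate(example_batches)

for name, cell in corrected:
    print(name, [round(v, 3) for v in cell])
```

Clustering, visualization, and the quality metrics would then run on `corrected`. Feature selection is omitted here because the toy data has only three genes.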

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagents and Resources for Batch Effect Management

| Resource Type | Specific Examples | Function in Batch Effect Management | Application Context |
|---|---|---|---|
| Reference Materials | Quartet Project multiomics reference materials [7] | Provides ground truth for ratio-based correction and method validation | Multi-batch multiomics studies |
| Housekeeping Genes | Tissue-specific validated reference genes [5] | Serves as internal controls for evaluating batch correction success | Single-cell RNA-seq integration |
| Standardized Kits | Consistent reagent lots across batches | Minimizes technical variation from different reagent batches | All experimental workflows |
| BatchQC Software | BatchQC R/Bioconductor package [9] | Interactive diagnostics and visualization of batch effects | Pre- and post-correction quality control |
| Pluto Bio Platform | Pluto Bio multiomics platform [10] | Web-based batch correction without coding expertise | Multiomics data harmonization |

Visualization and Interpretation

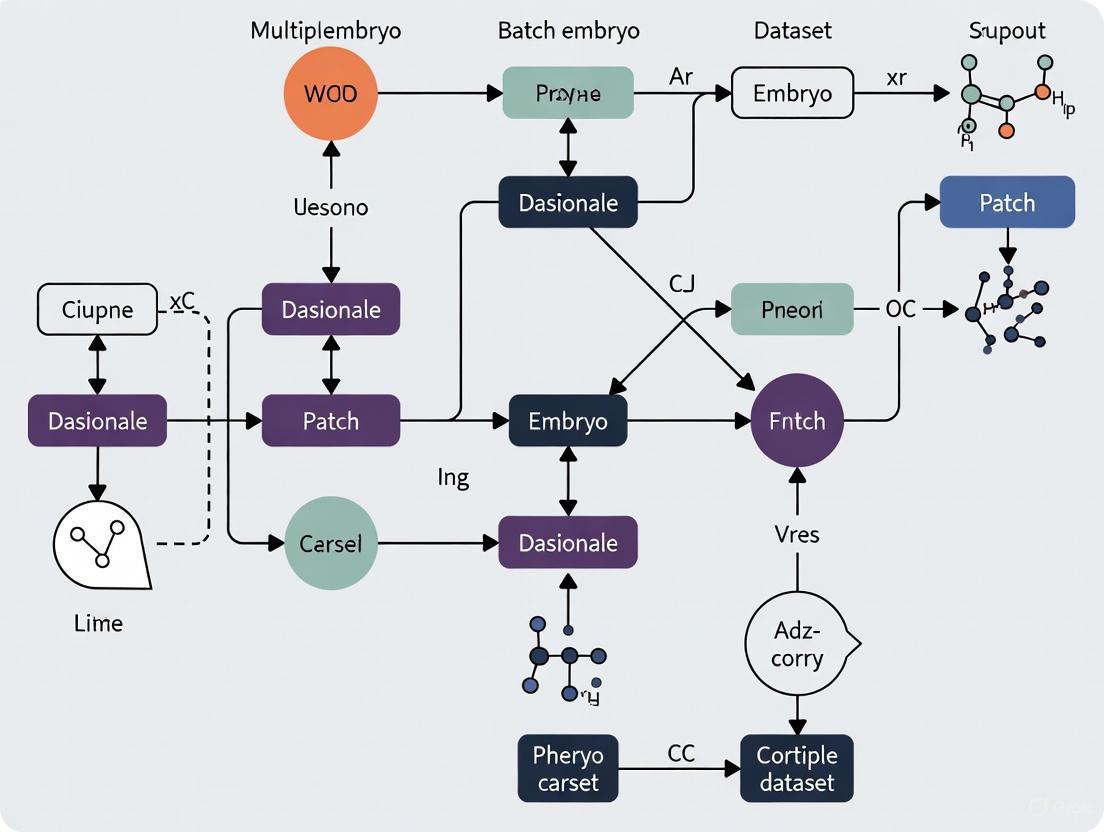

Batch Effect Management Workflow and Risks: This diagram illustrates the complete workflow from experimental design to biological interpretation, highlighting critical decision points and potential risks from improper batch effect correction.

Batch effects remain a formidable challenge in omics research, particularly in integrating multiple embryo datasets where technical variations can easily obscure delicate biological signals of developmental processes. The comparative analysis presented in this guide reveals that method performance is highly context-dependent, with no single approach universally superior across all scenarios.

The ratio-based method emerges as particularly valuable for confounded experimental designs where biological factors align completely with batch factors—a common scenario in multi-center embryo studies [7]. Meanwhile, advanced computational methods like sysVI [4] and CODAL [8] offer powerful approaches for disentangling technical and biological variations in complex single-cell embryo atlases.

As embryo research progresses toward increasingly ambitious integration of diverse datasets—spanning different species, developmental stages, and experimental platforms—effective batch effect management will become even more critical. The development of standardized reference materials [7], robust evaluation metrics [5], and computationally efficient methods [6] represents promising directions for addressing batch effects in the era of large-scale, multiomics developmental biology.

The key to success lies in matching correction strategies to specific experimental scenarios, rigorous validation using multiple complementary metrics, and maintaining awareness that both under-correction and over-correction can lead to misleading biological interpretations. By adopting the systematic approaches outlined in this guide, researchers can navigate the complex landscape of batch effects to extract meaningful biological insights from integrated embryo datasets.

In developmental biology, where the precise orchestration of gene expression dictates fundamental processes, batch effects present a formidable challenge to research reproducibility. These technical variations, unrelated to the biological questions under investigation, are notoriously common in omics data and may result in misleading outcomes if uncorrected—or hinder authentic discovery if over-corrected [1]. The profound negative impact of batch effects extends beyond mere data noise, acting as a paramount factor contributing to irreproducibility that can result in retracted articles, invalidated research findings, and significant economic losses [1]. This problem is particularly acute in developmental studies, where researchers increasingly rely on integrating multiple embryo datasets to uncover the subtle molecular patterns governing development.

The reproducibility crisis in science is well-documented, with a Nature survey finding that more than 70% of researchers had tried and failed to reproduce another scientist's experiments, and more than half had failed to reproduce their own [11]. While multiple factors contribute to this problem, batch effects from reagent variability and experimental bias represent significant, often preventable sources of irreproducibility that can compromise the integrity of developmental research [11].

Understanding Batch Effects in Developmental Contexts

Batch effects are technical variations introduced into high-throughput data by changes in experimental conditions over time, by combining data from different labs or machines, or by using different analysis pipelines [1]. In developmental studies specifically, these unwanted variations can emerge at virtually every stage of investigation:

- Sample collection and preparation: Differences in embryonic staging, dissection techniques, or preservation methods

- Reagent variability: Changes in reagent lots, enzyme activities, or solution compositions

- Instrumentation differences: Variations between sequencing platforms, operators, or maintenance cycles

- Environmental factors: Fluctuations in temperature, humidity, or other laboratory conditions

The fundamental cause of batch effects can be partially attributed to the basic assumptions of data representation in omics data, where instrument readout or intensity is often used as a surrogate for analyte concentration or abundance [1]. In practice, the relationship between these elements fluctuates due to differences in diverse experimental factors, making measurements inherently inconsistent across different batches [1].

The Special Case of Developmental Systems

Developmental studies present particular challenges for batch effect management. The precise coordination of molecular events during development leads to highly reproducible macroscopic structural outcomes, with these reproducible patterns emerging at the molecular level during the earliest stages of development [12]. When batch effects interfere with the detection of these subtle patterns, they can fundamentally distort our understanding of developmental processes.

In Drosophila embryo research, for instance, the reproducibility of the Bicoid protein gradient is crucial for proper anterior-posterior patterning, with studies showing that both maternal mRNA counts and the resulting protein gradient are reproducible to within approximately 10% between embryos [12]. This level of precision is essential for accurate positional information encoding in development, and batch effects that exceed this variation threshold could completely obscure fundamental biological relationships.

Quantifying the Impact: Batch Effects and Irreproducibility

Consequences for Data Interpretation

The impact of batch effects on developmental research can be profound and multifaceted:

- Masking of biological signals: Batch effects can introduce noise that dilutes authentic biological signals, reducing statistical power to detect real developmental phenomena [1]

- Erroneous conclusions: When batch effects correlate with biological outcomes, they can lead to incorrect conclusions, as demonstrated by a clinical trial where a change in RNA-extraction solution resulted in incorrect classification outcomes for 162 patients, 28 of whom received incorrect or unnecessary chemotherapy regimens [1]

- Cross-species misinterpretation: In one notable example, reported cross-species differences between human and mouse were initially attributed to biological factors but were later shown to be driven primarily by batch effects related to different data generation timepoints [1]

Economic and Scientific Costs

The financial implications of irreproducibility are staggering. A 2015 meta-analysis estimated that $28 billion per year is spent on preclinical research that is not reproducible [11]. Looking at avoidable waste in biomedical research more broadly, it is estimated that as much as 85% of expenditure may be wasted due to factors that similarly contribute to non-reproducible research [11].

Beyond financial costs, irreproducibility caused by batch effects can lead to rejected papers, discredited research findings, and ultimately, an erosion of public trust in scientific research [1]. Many high-profile articles have been retracted due to batch-effect-driven irreproducibility of key results, including a study on a fluorescent serotonin biosensor whose sensitivity was later found to be highly dependent on reagent batch, particularly the batch of fetal bovine serum [1].

Comparative Evaluation of Batch Effect Correction Strategies

Multiple computational strategies have been developed to address batch effects in biological data. The table below summarizes the primary approaches relevant to developmental studies:

Table 1: Batch Effect Correction Algorithms (BECAs) for Developmental Studies

| Method Category | Representative Algorithms | Key Principles | Advantages | Limitations |
|---|---|---|---|---|
| Linear Models | ComBat [7], removeBatchEffect() [13] | Linear regression to adjust for batch covariates | Statistical efficiency, well-established | Assumes composition invariance, additive effects |
| Ratio-Based Methods | Ratio-G [7] | Scaling relative to reference materials | Effective in confounded designs, practical | Requires reference materials, may not capture non-linearities |
| Mutual Nearest Neighbors | MNN Correct [13] | Identifies mutual nearest neighbors across batches | No need for identical population composition | Performance depends on population overlap |
| Dimensionality Reduction | Harmony [7], PCA | Iterative clustering and correction in reduced space | Handles large datasets, effective integration | May remove subtle biological variation |
| cVAE-Based Methods | sysVI [14] | Conditional variational autoencoders with cycle consistency | Handles substantial batch effects, preserves biology | Computational complexity, parameter sensitivity |

Performance Comparison in Controlled Assessments

Comprehensive evaluations of batch effect correction methods have been conducted using multiomics reference materials from the Quartet Project, which provides well-characterized reference materials from matched cell lines enabling objective assessment of BECA performance [7]. These assessments typically evaluate methods based on multiple performance metrics:

- Signal-to-noise ratio (SNR): Quantifying the ability to separate distinct biological groups after integration

- Relative correlation (RC): Measuring agreement with reference datasets in terms of fold changes

- Classification accuracy: Assessing the ability to correctly cluster cross-batch samples by their biological origin

- Biological preservation: Evaluating how well biological signals are maintained after correction
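Two of these metrics lend themselves to a short sketch. The definitions below are simplified assumptions rather than the Quartet Project's exact formulas: the signal-to-noise ratio is taken as the ratio of mean squared distance between groups to that within groups, in decibels and on raw coordinates instead of principal components, and relative correlation is taken as a Pearson correlation between observed and reference log fold changes. All sample values are invented.

```python
import math
from itertools import combinations


def snr_db(samples, groups):
    """10 * log10(mean between-group squared distance / mean within-group squared distance)."""
    within, between = [], []
    for i, j in combinations(range(len(samples)), 2):
        d2 = math.dist(samples[i], samples[j]) ** 2
        (within if groups[i] == groups[j] else between).append(d2)
    return 10 * math.log10((sum(between) / len(between)) / (sum(within) / len(within)))


def relative_correlation(log_fc, reference_log_fc):
    """Pearson correlation between observed and reference log fold changes."""
    n = len(log_fc)
    mx = sum(log_fc) / n
    my = sum(reference_log_fc) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(log_fc, reference_log_fc))
    sx = math.sqrt(sum((x - mx) ** 2 for x in log_fc))
    sy = math.sqrt(sum((y - my) ** 2 for y in reference_log_fc))
    return cov / (sx * sy)


# Two tight biological groups that sit far apart give a high SNR.
samples = [(0.0, 0.0), (0.0, 1.0), (10.0, 0.0), (10.0, 1.0)]
groups = ["stage_1", "stage_1", "stage_2", "stage_2"]
snr = snr_db(samples, groups)

# Observed fold changes that are a scaled copy of the reference correlate perfectly.
rc = relative_correlation([1.0, -2.0, 0.5], [2.0, -4.0, 1.0])

print(round(snr, 2))  # 20.02
print(round(rc, 6))   # 1.0
```

If a batch effect pulled samples of the same group apart, the within-group distances would grow and the SNR would fall, which is what makes it useful for comparing data before and after correction.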

Table 2: Performance Comparison of BECAs Across Omics Types (Based on Quartet Project Assessment)

| Method | Transcriptomics Performance | Proteomics Performance | Metabolomics Performance | Recommended Scenario |
|---|---|---|---|---|
| Ratio-Based | High [7] | High [7] | High [7] | Confounded designs, all omics types |
| Harmony | Moderate to High [7] | Moderate [7] | Moderate [7] | Balanced batch-group designs |
| ComBat | Moderate [7] | Moderate [7] | Moderate [7] | Balanced designs with known covariates |
| RUVs | Variable [7] | Variable [7] | Variable [7] | When control genes are available |
| BMC | Low to Moderate [7] | Low to Moderate [7] | Low to Moderate [7] | Minimal batch effects, balanced designs |

The ratio-based method consistently demonstrates superior performance, particularly in confounded scenarios where biological factors and batch factors are completely aligned, a common situation in longitudinal developmental studies [7]. This approach works by scaling absolute feature values of study samples relative to those of concurrently profiled reference materials. Because technical variation within a batch affects study and reference samples alike, the ratio removes it while maintaining the biological relationships between samples.

Experimental Protocols for Batch Effect Evaluation

Reference Material-Based Assessment

The most robust approach for evaluating batch effects utilizes well-characterized reference materials. The Quartet Project protocol exemplifies this strategy [7]:

- Reference Material Design: Establish multiomics reference materials from matched sources (e.g., B-lymphoblastoid cell lines from a monozygotic twin family)

- Cross-Batch Profiling: Distribute reference materials to multiple labs for generating data across different platforms, protocols, and timepoints

- Data Integration: Combine datasets from multiple batches while maintaining reference sample data

- Performance Metrics Calculation: Evaluate batch effect correction using quantitative metrics including SNR, RC, and classification accuracy

- Method Recommendation: Identify optimal correction methods based on comprehensive assessment

This protocol can be adapted for developmental studies by creating or identifying appropriate developmental reference materials (e.g., pooled embryo extracts at specific developmental stages) that are included in every experimental batch.

The BatchEval Pipeline for Systematic Evaluation

For researchers without access to specialized reference materials, the BatchEval Pipeline provides a comprehensive workflow for evaluating batch effects in integrated datasets [15]:

Figure: BatchEval Pipeline Workflow for Systematic Batch Effect Assessment

The BatchEval Pipeline generates a comprehensive report that includes [15]:

- Statistical evaluation: Using Kruskal-Wallis H-test to evaluate variation in gene expression across tissue sections, Kolmogorov-Smirnov Test to assess distributional differences, and Cramer's V correlation coefficient to quantify batch-condition confounding

- Biological preservation metrics: Employing a non-linear neural network classifier to estimate data mixing across multiple tissue sections, with low prediction accuracy indicating well-mixed integrated data

- Visualization panels: Providing PCA, t-SNE, and other visualizations to assess integration quality

- Method recommendation: Identifying the most suitable batch effect removal method for the specific dataset characteristics
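The batch-condition confounding check in the list above can be illustrated with a plain implementation of Cramér's V. This is a sketch of the statistic itself, not BatchEval's code; it applies no bias correction and assumes each label list contains at least two distinct categories. The label values are hypothetical.

```python
import math
from collections import Counter


def cramers_v(batches, conditions):
    """Cramér's V between two categorical label lists (0 = independent, 1 = fully confounded)."""
    n = len(batches)
    joint = Counter(zip(batches, conditions))
    batch_totals = Counter(batches)
    condition_totals = Counter(conditions)
    # Pearson chi-square statistic over the batch-by-condition contingency table.
    chi2 = 0.0
    for b, nb in batch_totals.items():
        for c, nc in condition_totals.items():
            expected = nb * nc / n
            chi2 += (joint[(b, c)] - expected) ** 2 / expected
    k = min(len(batch_totals), len(condition_totals)) - 1
    return math.sqrt(chi2 / (n * k))


batches = ["batch_1", "batch_1", "batch_2", "batch_2"]

# Balanced design: each condition appears once in every batch.
balanced = cramers_v(batches, ["early", "late", "early", "late"])

# Confounded design: each condition is confined to a single batch.
confounded = cramers_v(batches, ["early", "early", "late", "late"])

print(balanced)    # 0.0
print(confounded)  # 1.0
```

A value near 1 warns that batch and biology cannot be separated by computational correction alone, which is the scenario where reference materials become most valuable.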

Special Considerations for Single-Cell Developmental Data

Enhanced Challenges in Single-Cell Approaches

Single-cell RNA sequencing technologies have revolutionized developmental biology by enabling the resolution of gene expression heterogeneity in individual cells. However, these approaches suffer higher technical variation than bulk RNA-seq, owing to lower RNA input, higher dropout rates, a higher proportion of zero counts and low-abundance transcripts, and cell-to-cell variation [1]. These factors make batch effects more severe in single-cell data than in bulk data [1].

Large single-cell RNA sequencing projects in developmental biology usually need to generate data across multiple batches due to logistical constraints [13]. The processing of different batches is often subject to uncontrollable differences (e.g., changes in operator, differences in reagent quality), resulting in systematic differences in the observed expression in cells from different batches [13].

Advanced Integration Strategies for Substantial Batch Effects

Recent methodological advances have addressed the challenges of substantial batch effects in single-cell data, particularly relevant for developmental studies comparing different systems (e.g., different species, organoids vs. primary tissue). The sysVI approach, based on conditional variational autoencoders (cVAE) with VampPrior and cycle-consistency constraints, has shown particular promise for integrating datasets with substantial batch effects while preserving biological signals [14].

Table 3: Performance of cVAE-Based Integration Methods for Substantial Batch Effects

| Method | Batch Correction Strength | Biological Preservation | Key Advantages | Notable Limitations |
|---|---|---|---|---|
| Standard cVAE | Moderate [14] | High [14] | Established methodology, good general performance | Struggles with substantial batch effects |
| KL-Regularized cVAE | High [14] | Low to Moderate [14] | Increased integration strength | Removes biological and batch variation indiscriminately |
| Adversarial cVAE | High [14] | Low to Moderate [14] | Active batch distribution alignment | Prone to mixing unrelated cell types |
| sysVI (VAMP + CYC) | High [14] | High [14] | Preserves biology while integrating substantially | Computational complexity, parameter sensitivity |

Research Reagent Solutions for Batch Effect Mitigation

Essential Materials for Reproducible Developmental Studies

Successful management of batch effects in developmental research requires both computational approaches and careful experimental design with appropriate research reagents. The following table outlines key solutions:

Table 4: Essential Research Reagents and Resources for Batch Effect Management

| Resource Type | Specific Examples | Function in Batch Effect Control | Implementation Considerations |

|---|---|---|---|

| Reference Materials | Quartet Project RMs [7], Drosophila embryo pools | Enable ratio-based correction, quality tracking | Must be biologically relevant, well-characterized |

| Authenticated Cell Lines | Low-passage reference cells [11] | Reduce biological variation from cell state changes | Regular authentication, contamination monitoring |

| Standardized Reagents | Consistent enzyme lots, defined media formulations | Minimize technical variation from component changes | Bulk purchasing, rigorous quality control |

| Nucleic Acid Isolation Kits | Consistent RNA extraction systems | Reduce technical bias in nucleic acid recovery | Avoid protocol changes mid-study |

| Batch Tracking Systems | Laboratory information management systems (LIMS) | Enable documentation and modeling of batch variables | Comprehensive sample metadata capture |

Strategic Implementation of Reference Materials

The most effective strategy for managing batch effects in developmental studies involves the systematic implementation of reference materials. The Quartet Project approach demonstrates how to deploy these resources [7]:

- Concurrent Profiling: Always include reference materials in each experimental batch alongside study samples

- Ratio-Based Transformation: Convert absolute expression values to ratios relative to reference measurements

- Quality Monitoring: Use reference material data to track technical performance across batches

- Cross-Batch Calibration: Employ reference measurements to align data across different platforms and protocols

For developmental studies specifically, researchers can create custom reference materials by pooling embryos or tissues from the relevant model system at specific developmental stages, then including these pools in every batch of sample processing.
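The ratio-based transformation listed above can be sketched as follows. This is a simplified illustration, assuming one reference sample per batch, a log2 ratio, and a pseudocount of 1; the function name, the `ref_pool` sample id, and the expression values are hypothetical toy inputs, not Quartet Project code or data.

```python
import math

def ratio_transform(batch_expr, reference_id, pseudocount=1.0):
    """Express each study sample as log2 ratios to the reference profiled in the same batch.

    batch_expr: dict of sample id -> dict of gene -> expression value
    """
    reference = batch_expr[reference_id]
    ratios = {}
    for sample_id, genes in batch_expr.items():
        if sample_id == reference_id:
            continue  # the reference itself is only used as the denominator
        ratios[sample_id] = {
            gene: math.log2((value + pseudocount) / (reference[gene] + pseudocount))
            for gene, value in genes.items()
        }
    return ratios

# Toy values: batch B measures everything at about twice the scale of batch A
batch_a = {"ref_pool": {"Pou5f1": 99.0, "Gata6": 49.0},
           "embryo_1": {"Pou5f1": 199.0, "Gata6": 49.0}}
batch_b = {"ref_pool": {"Pou5f1": 199.0, "Gata6": 99.0},
           "embryo_2": {"Pou5f1": 399.0, "Gata6": 99.0}}

ratios_a = ratio_transform(batch_a, "ref_pool")
ratios_b = ratio_transform(batch_b, "ref_pool")
```

In this toy case the two embryos differ twofold in absolute values, yet their ratios to the concurrently profiled reference agree, because the batch-wide scale factor cancels in the ratio.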

Visualizing Batch Effect Assessment Workflows

Figure: Comprehensive Batch Effect Management Workflow for Developmental Studies

Batch effects represent a fundamental challenge to reproducibility in developmental studies, where subtle molecular patterns dictate critical biological outcomes. The evidence presented demonstrates that proactive batch effect management through appropriate experimental design and computational correction is essential for generating reliable, reproducible research findings.

The comparative assessment of correction methods reveals that ratio-based approaches using reference materials consistently outperform other methods, particularly in the confounded batch-group scenarios common in developmental research [7]. For single-cell developmental studies, emerging methods like sysVI show promise for handling substantial batch effects while preserving biological signals [14].

By implementing the rigorous assessment workflows, strategic reagent solutions, and method selection guidelines outlined in this review, developmental biologists can significantly enhance the reproducibility of their findings, ensuring that the profound insights gained from embryo research reflect biological reality rather than technical artifacts.

The integration of single-cell RNA-sequencing (scRNA-seq) datasets from embryo studies has become a fundamental approach for uncovering new insights into developmental biology. However, this integration is frequently complicated by technical variations, or batch effects, that are unrelated to the biological questions of interest. These batch effects arise from multiple sources, including different reagents, sequencing platforms, and confounded study designs, which can introduce unwanted technical variation that obscures true biological signals and potentially leads to misleading scientific conclusions [16]. In the specific context of embryo research, where samples are often scarce and experimental conditions vary substantially across laboratories, these challenges are particularly pronounced. The emergence of large-scale embryo atlases and the increasing use of stem cell-based embryo models have further highlighted the critical need for robust batch effect correction methods [3]. This guide objectively compares current approaches for identifying and mitigating these technical artifacts, providing embryo researchers with practical frameworks for ensuring the reliability and reproducibility of their integrative analyses.

Reagents and Sample Preparation

Variability in reagents and sample preparation protocols represents a major source of batch effects in embryo datasets. These technical variations can be introduced at multiple stages, including sample collection, preparation, and storage [16]. In embryo studies, differences in reagent batches—such as different lots of fetal bovine serum (FBS) used in culture media—have been shown to significantly impact experimental outcomes, sometimes to such a degree that key results become irreproducible when reagent batches are changed [16]. This is particularly problematic in embryo research where consistent culture conditions are essential for normal development. Additional variations can arise from differences in RNA-extraction solutions, enzyme lots for single-cell library preparation, and other critical reagents that may introduce systematic biases between experiments conducted at different times or in different laboratories.

Sequencing Platforms and Profiling Technologies

The rapid evolution of single-cell technologies has led to a diversity of profiling platforms, each with its own technical characteristics that can introduce substantial batch effects. Embryo datasets may be generated using different scRNA-seq protocols (e.g., SMART-seq, 10X Genomics), single-nuclei RNA-seq (snRNA-seq), or even emerging technologies like single-cell Hi-C [4] [17]. Each of these technologies exhibits distinct technical variations, including differences in sensitivity, precision, dropout rates, and coverage [16]. When data from multiple technologies are integrated, these platform-specific biases can create substantial challenges. For example, snRNA-seq data often show systematic differences from scRNA-seq data because RNA capture differs between whole cells and isolated nuclei [4]. Similarly, integrating data across different species (e.g., mouse and human embryo studies) introduces additional technical and biological variations that can confound analysis [4].

Confounded Study Designs

Confounded study designs represent a particularly insidious source of batch effects in embryo research. Confounding occurs when technical factors are systematically correlated with biological variables of interest [16]. For instance, if all control embryo samples are processed in one batch while experimental conditions are processed in another batch, it becomes impossible to distinguish true biological effects from technical artifacts. In longitudinal embryo studies, sample processing time is often confounded with developmental time, making it difficult to determine whether observed transcriptional changes reflect genuine developmental progression or batch effects [16]. Additionally, the common practice of combining publicly available embryo datasets from different studies almost guarantees confounded designs, as biological conditions of interest are typically correlated with laboratory-specific processing protocols. These confounded designs are particularly problematic because they can create the appearance of biologically meaningful patterns that are actually driven by technical artifacts.
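Before integration, it can help to check sample metadata for this pattern. The following is a minimal sketch, assuming each sample is described by a `(batch, condition)` pair; the function names and labels are hypothetical, and the check only flags the extreme case in which no batch contains more than one condition.

```python
from collections import defaultdict

def conditions_per_batch(samples):
    """Cross-tabulate metadata: which conditions were processed in each batch."""
    table = defaultdict(set)
    for batch, condition in samples:
        table[batch].add(condition)
    return dict(table)

def is_fully_confounded(samples):
    """True when there are several conditions but every batch holds only one of them."""
    table = conditions_per_batch(samples)
    n_conditions = len({condition for _, condition in samples})
    return n_conditions > 1 and all(len(found) == 1 for found in table.values())

# All controls in one batch, all knockouts in another: effects cannot be separated
confounded = [("batch1", "control"), ("batch1", "control"), ("batch2", "knockout")]
# Every batch contains both conditions
balanced = [("batch1", "control"), ("batch1", "knockout"),
            ("batch2", "control"), ("batch2", "knockout")]

print(is_fully_confounded(confounded), is_fully_confounded(balanced))
```

Partially confounded designs, where conditions are unevenly spread across batches, would pass this check and still need inspection of the full cross-tabulation.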

Comparison of Integration and Batch Correction Methods

Performance Metrics and Evaluation Framework

The evaluation of batch effect correction methods typically employs multiple complementary metrics that assess both the removal of technical artifacts and the preservation of biological signals. For batch correction effectiveness, commonly used metrics include batch Average Silhouette Width (bASW), which measures batch separation; graph integration local inverse Simpson's Index (iLISI), which evaluates batch mixing in local neighborhoods; and Graph Connectivity (GC), which assesses whether cells of the same type from different batches form connected subgraphs [18]. For biological conservation, standard metrics include cell type Average Silhouette Width (dASW), dataset local inverse Simpson's Index (dLISI), and Inverse Ligand-receptor Loss (ILL) for spatial data [18]. In embryo-specific contexts, additional evaluations may assess the preservation of known developmental trajectories and lineage relationships [19] [3].
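To illustrate what a LISI-style score measures, the sketch below computes an unweighted inverse Simpson's index over each cell's k nearest neighbors. This is a simplification and an assumption on our part: the published LISI weights neighbors with a perplexity-based kernel, which is omitted here, and the function names and toy coordinates are hypothetical.

```python
import math
from collections import Counter

def inverse_simpson(labels):
    """Effective number of distinct labels: 1 / sum of squared label proportions."""
    counts = Counter(labels)
    total = len(labels)
    return 1.0 / sum((count / total) ** 2 for count in counts.values())

def knn_lisi(embedding, labels, k):
    """Per-cell inverse Simpson's index of the labels among the k nearest neighbors."""
    scores = []
    for i, point in enumerate(embedding):
        neighbors = sorted(
            (math.dist(point, other), j)
            for j, other in enumerate(embedding) if j != i
        )
        scores.append(inverse_simpson([labels[j] for _, j in neighbors[:k]]))
    return scores

# Two batches interleaved on a unit square: each neighborhood contains both batches
mixed = knn_lisi([(0, 0), (0, 1), (1, 0), (1, 1)], ["A", "B", "A", "B"], k=2)
# Two batches far apart: each neighborhood contains a single batch
separated = knn_lisi(
    [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10)],
    ["A", "A", "A", "B", "B", "B"], k=2,
)
```

With batch labels, values near the number of batches indicate good mixing (the iLISI use case). With cell-type labels, values near 1 indicate that cell types remain separated.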

Method Comparison and Performance

Table 1: Comparison of Batch Effect Correction Methods for Embryo Datasets

| Method | Approach | Strengths | Limitations | Reported Performance (Key Metrics) |

|---|---|---|---|---|

| sysVI (VAMP + CYC) | Conditional VAE with VampPrior and cycle-consistency | Effective for substantial batch effects; preserves biological signals; suitable for cross-species integration | Complex implementation; requires substantial computational resources | Improved batch correction while retaining biological signals for downstream interpretation [4] |

| BERT | Tree-based using ComBat/limma | Handles incomplete omic data; efficient for large datasets; considers covariates | May not capture complex non-linear batch effects | Retains all numeric values; 11× runtime improvement; 2× ASW improvement in some scenarios [6] |

| COSICC | Statistical framework with sampling bias correction | Specifically designed for embryo perturbation studies; corrects compositional bias | Limited to comparative perturbation analyses | Effective for chimera studies; identifies developmental delays and lineage effects [19] |

| HarmonizR | Matrix dissection with ComBat/limma | Handles arbitrarily incomplete data; established performance | High data loss with increased missing values; does not address design imbalance | Up to 88% data loss for blocking of 4 batches with 50% missing values [6] |

| fastMNN | Mutual nearest neighbors | Fast integration; preserves biological variation | May not handle strongly confounded designs | Used successfully in human embryo reference integration from zygote to gastrula [3] |

Table 2: Performance Comparison Across Integration Challenges in Embryo Studies

| Integration Scenario | Top Performing Methods | Key Considerations | Biological Preservation Challenges |

|---|---|---|---|

| Cross-species (e.g., mouse-human) | sysVI, fastMNN | Account for evolutionary divergence; align orthologous genes | Risk of over-correction of genuine biological differences between species [4] |

| Multi-technology (e.g., scRNA-seq vs. snRNA-seq) | sysVI, BERT | Address systematic sensitivity differences | Potential loss of cell type-specific signals [4] |

| Organoid-Tissue | sysVI, COSICC | Distinguish in vitro artifacts from genuine biology | Preserving subtle but biologically meaningful differences [4] |

| Perturbation Studies (e.g., knockout chimeras) | COSICC, sysVI | Account for sampling bias; reference-based normalization | Distinguishing true developmental effects from technical confounders [19] |

| Spatial Transcriptomics | GraphST-PASTE, MENDER, STAIG | Integrate spatial and expression information | Balancing spatial context preservation with batch effect removal [18] |
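The "align orthologous genes" consideration in the cross-species row can be sketched as a preprocessing step that restricts both datasets to shared one-to-one orthologs. The mapping below is a small hand-written toy table and the expression values are made up; a real analysis would use a curated ortholog resource, and the function name is hypothetical.

```python
def align_to_orthologs(mouse_expr, human_expr, mouse_to_human):
    """Restrict both profiles to shared orthologs, keyed by the human gene symbol.

    mouse_expr / human_expr: dict of gene symbol -> expression value
    mouse_to_human: dict of mouse symbol -> human symbol (assumed one-to-one)
    """
    mouse_aligned, human_aligned = {}, {}
    for mouse_gene, human_gene in mouse_to_human.items():
        # Keep a gene only if it was measured in both species
        if mouse_gene in mouse_expr and human_gene in human_expr:
            mouse_aligned[human_gene] = mouse_expr[mouse_gene]
            human_aligned[human_gene] = human_expr[human_gene]
    return mouse_aligned, human_aligned

toy_orthologs = {"Pou5f1": "POU5F1", "Sox2": "SOX2", "Nanog": "NANOG"}
mouse_cell = {"Pou5f1": 12.0, "Sox2": 7.0, "Xist": 3.0}    # toy values
human_cell = {"POU5F1": 9.0, "SOX2": 4.0, "NANOG": 6.0}    # toy values

mouse_aligned, human_aligned = align_to_orthologs(mouse_cell, human_cell, toy_orthologs)
```

After this step both profiles share the same feature space, which integration methods generally require. Genes without a measured ortholog in both species are dropped, so some species-specific signal is lost by construction.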

Experimental Protocols for Benchmarking Batch Effect Correction

Standardized Workflow for Method Evaluation

When benchmarking batch effect correction methods for embryo datasets, researchers should follow a standardized workflow to ensure fair and interpretable comparisons. The following protocol outlines key steps for rigorous evaluation:

Dataset Selection and Preprocessing: Curate multiple embryo datasets with known batch effects and established biological ground truth. These should include datasets with varying degrees of technical and biological complexity, such as cross-species comparisons, different sequencing technologies, or confounded designs [4]. Perform uniform preprocessing including quality control, normalization, and feature selection using consistent parameters across all datasets.

Method Application: Apply each batch correction method to the integrated datasets using recommended parameters and implementations. For methods requiring parameter tuning (e.g., KL regularization strength in cVAE-based approaches), perform systematic sweeps to evaluate sensitivity [4].

Metric Computation: Calculate both batch correction and biological preservation metrics using established implementations. For embryo-specific evaluations, include assessment of developmental trajectory preservation using tools like Slingshot [3] and lineage abundance consistency using approaches like COSICCDAgroup [19].

Downstream Analysis: Evaluate the impact of batch correction on downstream analyses relevant to embryo research, including differential expression testing, cell type identification, and trajectory inference [18] [19].

Visual Inspection: Complement quantitative metrics with visualization techniques such as UMAP or t-SNE to assess overall integration quality and identify potential artifacts [3].
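Once metrics are computed, methods are usually compared on a combined score. The sketch below assumes each method has already been summarized as one batch-correction score and one biological-preservation score, both scaled to [0, 1]; the weighting, the function name, and the numbers are placeholders chosen for illustration, not benchmark results.

```python
def rank_methods(scores, bio_weight=0.6):
    """Rank methods by a weighted mean of biological and batch scores.

    scores: dict of method name -> (batch_score, bio_score), each in [0, 1]
    bio_weight: assumed weight on biological preservation
    """
    overall = {
        name: bio_weight * bio + (1.0 - bio_weight) * batch
        for name, (batch, bio) in scores.items()
    }
    return sorted(overall.items(), key=lambda item: item[1], reverse=True)

# Placeholder numbers: method_a mixes batches aggressively but loses biology
placeholder_scores = {
    "method_a": (0.9, 0.3),
    "method_b": (0.7, 0.8),
}
ranking = rank_methods(placeholder_scores)
```

Reporting the two component scores alongside the combined score is advisable, since a single number can hide an overcorrecting method that scores well on batch mixing alone.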

Case Study: Human Embryo Reference Integration

The creation of a comprehensive human embryo reference dataset from zygote to gastrula stage provides an illustrative case study for batch effect correction in embryo research [3]. This effort used fastMNN correction to integrate six published datasets generated with different scRNA-seq protocols. The protocol included:

Standardized Reprocessing: Raw data from all studies was uniformly processed using the same genome reference (GRCh38) and annotation pipeline to minimize batch effects introduced during alignment and quantification [3].

Iterative Integration: fastMNN was applied to correct batch effects while preserving developmental continuity across datasets from different laboratories and protocols.

Validation: The integrated reference was validated through multiple approaches including: (1) confirmation of known developmental markers across the continuum, (2) SCENIC analysis to verify transcription factor activities, and (3) Slingshot trajectory inference to ensure biologically plausible developmental paths [3].

Functionality Assessment: The utility of the integrated reference was demonstrated by projecting new embryo models onto the reference space and assessing fidelity to in vivo counterparts, highlighting the risk of misannotation when proper references are not used [3].
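The core idea behind mutual-nearest-neighbor correction can be sketched by finding cell pairs that are each other's nearest neighbors across two batches. This is only the pair-identification step, written with hypothetical function names and toy coordinates; it is not the fastMNN implementation, which additionally derives correction vectors from such pairs.

```python
import math

def nearest_neighbors(queries, targets, k):
    """For each query point, the set of indices of its k nearest target points."""
    result = []
    for query in queries:
        order = sorted(range(len(targets)), key=lambda j: math.dist(query, targets[j]))
        result.append(set(order[:k]))
    return result

def mutual_nearest_pairs(batch_a, batch_b, k=1):
    """Pairs (i, j) where cell i of batch A and cell j of batch B pick each other."""
    a_to_b = nearest_neighbors(batch_a, batch_b, k)
    b_to_a = nearest_neighbors(batch_b, batch_a, k)
    return [(i, j) for i in range(len(batch_a)) for j in a_to_b[i] if i in b_to_a[j]]

# Batch B contains a third population with no counterpart in batch A
batch_a = [(0.0, 0.0), (5.0, 5.0)]
batch_b = [(0.5, 0.2), (5.4, 5.1), (20.0, 20.0)]
pairs = mutual_nearest_pairs(batch_a, batch_b)
print(pairs)
```

The batch-specific population at (20, 20) forms no mutual pair, which is why this family of methods tolerates cell types that are present in only one dataset.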

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Embryo Dataset Integration

| Reagent/Resource | Function | Considerations for Embryo Studies |

|---|---|---|

| Fetal bovine serum (FBS) | Cell culture supplement for embryo models | Batch-to-batch variability can significantly impact results; lots should be batch-tested and kept consistent [16] |

| scRNA-seq library prep kits | Single-cell RNA library construction | Different protocols (SMART-seq, 10X) introduce systematic biases; consistency crucial for integration [4] |

| Dissociation enzymes | Tissue dissociation for single-cell suspension | Enzyme lots and activity can affect cell viability and transcriptome integrity [16] |

| Spatial transcriptomics slides | Spatial localization of gene expression | Platform-specific biases (10X Visium, MERFISH) require specialized integration approaches [18] |

| Reference datasets | Benchmarking and authentication | Essential for validating embryo models; human embryo reference available from zygote to gastrula [3] |

| Batch effect correction software | Computational integration of datasets | Method choice depends on data type and specific integration challenge [4] [6] |

The integration of embryo datasets across reagents, platforms, and studies remains a significant challenge in developmental biology, but continued methodological advances are providing increasingly robust solutions. No single batch effect correction method universally outperforms others across all embryo data types and integration scenarios [18]. Instead, method selection should be guided by the specific integration challenge—whether cross-species, multi-technology, or confounded design—and validated using multiple complementary metrics that assess both technical artifact removal and biological signal preservation.

Future directions in the field include the development of more sophisticated benchmarks specifically tailored to embryo datasets, improved methods for handling severe data incompleteness [6], and approaches that better preserve subtle but biologically meaningful variations in developmental processes. As single-cell technologies continue to evolve and embryo atlases expand, robust batch effect correction will remain essential for extracting biologically meaningful insights from integrated embryo datasets.

In the field of single-cell RNA sequencing (scRNA-seq) research, particularly in studies integrating multiple embryo datasets, the integrity of any conclusion rests entirely on the quality of the underlying experimental design. The process of batch correction—harmonizing datasets from different studies, protocols, or species—is fraught with challenges where technical artifacts can be mistaken for biological discovery [4]. A balanced experimental design acts as a safeguard, controlling for extraneous variables and ensuring that observed differences in the data are attributable to the biological phenomenon under investigation, such as embryonic developmental stages. In contrast, a confounded design allows these extraneous variables to become entangled with the primary variables of interest, rendering results uninterpretable and potentially misleading [20] [21]. For researchers and drug development professionals building upon integrated atlases of embryonic development, understanding this distinction is not merely academic; it is the critical factor that separates robust, reproducible science from wasted resources. This guide objectively compares the performance of different batch-correction methods and the experimental scenarios that validate them, framing the analysis within the broader thesis of integrating multiple embryo datasets.

Core Concepts: Balance, Confounding, and Batch Effects

Defining the Scenarios

Balanced Scenario: In a balanced experimental design, the different conditions or groups of the primary independent variable are, on average, highly similar to each other with respect to extraneous variables. This is typically achieved through random assignment, a process that uses a random procedure to decide which experimental units (e.g., cells, samples, embryos) are assigned to which condition [21]. This balancing act ensures that any extraneous participant variables—such as genetic background, initial cell viability, or sample quality—are distributed evenly across groups, preventing them from becoming confounding variables.

Confounded Scenario: A confounded scenario arises when the effects of the independent variable cannot be separated from the effects of another, extraneous variable [20]. This occurs when the experimental design fails to control for these extraneous variables across conditions. For example, if all samples from one embryonic stage are processed using a single-nuclei RNA-seq protocol while all samples from another stage are processed using a single-cell RNA-seq protocol, the variable "sequencing protocol" is perfectly confounded with the biological variable "developmental stage." Any observed difference is then ambiguous and cannot be reliably attributed to either factor [4].
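The random assignment described for the balanced scenario can be sketched as a stratified shuffle: samples are shuffled within each biological condition and then dealt across batches in turn. The function name, sample ids, and stage labels are hypothetical, and the fixed seed is only there to make the example reproducible.

```python
import random
from collections import defaultdict

def assign_balanced_batches(samples, n_batches, seed=0):
    """Randomly assign samples to batches so each condition is spread across batches.

    samples: list of (sample_id, condition) pairs
    Returns a dict of sample_id -> batch index.
    """
    rng = random.Random(seed)
    by_condition = defaultdict(list)
    for sample_id, condition in samples:
        by_condition[condition].append(sample_id)

    assignment = {}
    for condition in sorted(by_condition):
        ids = by_condition[condition]
        rng.shuffle(ids)  # random order within the condition
        for position, sample_id in enumerate(ids):
            assignment[sample_id] = position % n_batches  # deal round-robin
    return assignment

samples = [(f"E6.5_{i}", "E6.5") for i in range(4)] + \
          [(f"E7.5_{i}", "E7.5") for i in range(4)]
assignment = assign_balanced_batches(samples, n_batches=2)
```

Each batch then contains two embryos of each stage, so developmental stage is no longer tied to processing batch, in contrast to the confounded example above.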

The Pervasive Challenge of Batch Effects

In the context of integrating multiple embryo datasets, "batch effects" are a quintessential confounder. These are technical variations introduced when datasets are generated at different times, by different labs, using different protocols, or even from different model systems (e.g., mouse vs. human) [4]. The central goal of batch correction algorithms is to disentangle these non-biological technical variations from the true biological signals of embryonic development. The performance of these algorithms, however, is highly dependent on the initial experimental design used to generate the validation data. A method validated on a confounded dataset may appear to perform well while merely reinforcing the confounding, leading to a false sense of security when applied to new, complex embryo atlases.

Experimental Designs for Robust Integration

The evaluation of batch correction methods relies on experimental designs that can create a known ground truth against which these methods can be tested. The three primary designs used in this field are outlined in the table below.

Table 1: Experimental Designs for Evaluating Batch Correction Methods

| Design Type | Key Principle | Advantages | Disadvantages | Common Use in Batch Correction |

|---|---|---|---|---|

| Independent Measures (Between-Groups) [20] [21] | Different participants or biological samples are used in each condition. | Avoids order effects (practice, fatigue). Simple to set up. | Requires more samples/cells. Risk of participant/sample variables confounding results if not properly randomized. | Comparing batches from entirely different biological samples (e.g., different embryos). |

| Repeated Measures (Within-Subjects) [20] [21] | The same participants or biological samples are measured under all conditions. | Maximally controls for extraneous participant/sample variables. Requires fewer samples. | Vulnerable to order effects (e.g., carryover effects from one batch processing to another). | Splitting a single sample across two sequencing protocols or batches to isolate the technical effect. |

| Matched Pairs [20] [21] | Different participants are used, but they are matched in pairs based on key variables (e.g., genetic background, developmental stage). | Reduces the influence of specific, known extraneous variables. Avoids order effects. | Very time-consuming to find matched pairs. Impossible to match on all possible variables. | Matching mouse and human embryonic cells by homologous cell types to enable cross-species integration. |

Workflow for a Balanced Experimental Scenario

Establishing a balanced experimental scenario for benchmarking batch correction methods relies on key control mechanisms such as randomization and counterbalancing.

Comparative Analysis: Batch Correction Method Performance

The performance of batch correction methods varies significantly depending on the experimental scenario. The following table summarizes key findings from benchmarking studies, highlighting how a method's ability to preserve biological signal is contingent on the design.

Table 2: Performance of Batch Correction Methods Across Experimental Scenarios

| Method / Approach | Core Methodology | Performance in Balanced Scenarios | Performance in Confounded Scenarios | Key Limitations |

|---|---|---|---|---|

| Standard cVAE with KL Tuning [4] | Conditional Variational Autoencoder using Kullback-Leibler divergence regularization. | Effective at removing technical variation when biological and technical variables are not confounded. | Poor. Removes biological signal along with batch effect; cannot distinguish between them. | KL regularization is a blunt instrument that compresses information, leading to loss of biologically relevant dimensions. |

| Adversarial Learning (e.g., GLUE) [4] | Adds an adversarial module to force batch indistinguishability in the latent space. | Can achieve strong integration when cell type proportions are similar across batches. | Poor. Prone to incorrectly mixing unrelated cell types that have unbalanced proportions across systems (e.g., acinar and immune cells). | Forces alignment even when biologically unjustified, destroying cell-type-specific signals. |

| sysVI (VAMP + CYC) [4] | cVAE using VampPrior and cycle-consistency constraints. | Maintains high performance, demonstrating robust batch correction and biological preservation. | Good. Outperforms other methods by better preserving biological signals while integrating across substantial batch effects (e.g., cross-species). | The combination of VampPrior (for biological preservation) and cycle-consistency (for batch correction) prevents the loss of critical variation. |

Visualizing the Outcome of Method Failure

A primary risk in confounded scenarios is the over-correction of biological signal by adversarial methods. In this failure mode, cell types with unbalanced proportions across batches are incorrectly merged.

The Scientist's Toolkit: Essential Reagents & Computational Tools

Successful integration of embryo datasets requires both wet-lab reagents and dry-lab computational tools. The following table details key solutions for this field.

Table 3: Research Reagent and Tool Solutions for Embryo Dataset Integration

| Item Name / Category | Function & Purpose | Specific Application in Embryo Research |

|---|---|---|

| Single-Cell/Nuclei RNA-seq Kits | To isolate and barcode individual cells or nuclei for downstream sequencing, generating the primary digital gene expression matrix. | Profiling embryonic tissues where cellular dissociation can be challenging; single-nuclei protocols are often critical for frozen embryo samples. |

| Species-Specific Antibodies | To validate the presence of specific, conserved cell types across different model systems (e.g., mouse, human) via flow cytometry or immunohistochemistry. | Providing orthogonal confirmation for cell type annotations and identities predicted by computational integration methods like sysVI. |

| Batch Correction Software (sysVI) | A conditional VAE-based method employing VampPrior and cycle-consistency to integrate datasets with substantial batch effects [4]. | The method of choice for challenging integrations, such as combining data from human embryos and mouse models or from organoid and primary tissue systems. |

| cVAE-Based Models (e.g., scvi-tools) | A flexible framework for scRNA-seq data analysis, including batch correction, that is scalable to large atlas projects [4]. | Standard integration of datasets with moderate batch effects, often used as a baseline in benchmarking studies and large-scale atlas construction. |

| Adversarial Models (e.g., GLUE) | Integration methods that use an adversarial component to make batch origin indistinguishable in the latent space [4]. | Can be effective for integrating datasets with very similar cell type compositions, but use with caution in confounded scenarios with unique cell populations. |

The critical distinction between balanced and confounded experimental scenarios is the bedrock upon which reliable single-cell science is built. As the field moves toward ever-larger embryonic cell atlases that combine data from diverse species, protocols, and laboratories [4], the temptation to apply powerful batch correction algorithms to confounded data will grow. This analysis demonstrates that the performance of any method, from standard cVAE to advanced frameworks like sysVI, is inextricably linked to the experimental design of the data it processes. A balanced design, achieved through careful randomization and the use of repeated or matched-pairs measures where possible, provides the only trustworthy ground truth for benchmarking. For researchers and drug developers, the imperative is clear: invest in rigorous experimental design upfront. The integrity of your biological insights into embryonic development—and the success of downstream applications in drug discovery—depends on it.

The integration of multiple single-cell and spatial transcriptomics datasets is a foundational step in modern developmental biology, enabling the study of embryonic processes at unprecedented resolution. However, this integration is complicated by batch effects—technical variations introduced when samples are processed in different experiments, sequencing runs, or technological platforms. These effects can confound true biological variation, such as the subtle transcriptional changes that delineate embryonic cell lineages and developmental stages. The challenge is particularly acute in embryo transcriptomics, where the preservation of delicate spatial patterning and temporal dynamics is paramount. This guide objectively compares the performance of current computational batch correction methods, providing a structured overview of their operational principles, experimental validation, and applicability to embryonic studies to inform researchers and drug development professionals.

Method Comparison: Performance and Operational Characteristics

Table 1: Key Characteristics of Featured Batch Correction Methods

| Method Name | Core Algorithm | Designed for Spatial Data? | Corrects Gene Counts? | Key Advantage for Embryo Studies |

|---|---|---|---|---|

| sysVI [14] | Conditional Variational Autoencoder (cVAE) with VampPrior & cycle-consistency | No | No (Embedding) | Integrates across substantial biological systems (e.g., species); preserves biological signal. |

| Crescendo [22] | Generalized Linear Mixed Model | Yes | Yes | Enables direct visualization of gene spatial patterns across batches; imputes lowly-expressed genes. |

| Tacos [23] | Community-enhanced Graph Contrastive Learning | Yes | No (Embedding) | Effective for data with different spatial resolutions; preserves spatial structures. |

| SpaCross [24] | Cross-masked Graph Autoencoder & Adaptive Spatial-Semantic Graph | Yes | No (Embedding) | Balances local spatial continuity with global semantic consistency for multi-slice integration. |

| Harmony [25] [26] | Soft k-means & linear correction within PCA clusters | No | No (Embedding) | Well-calibrated, introduces minimal artifacts; robust in standard single-cell integration. |

| RBET [5] | Reference-informed Evaluation (uses Housekeeping Genes) | Evaluation Metric | N/A | Sensitive to overcorrection; uses stable gene patterns to assess integration quality. |

Table 2: Comparative Performance on Key Metrics

| Method | Batch Correction (iLISI/bLISI) | Biological Preservation (cLISI/NMI) | Overcorrection Sensitivity | Scalability to Large Atlases |

|---|---|---|---|---|

| Standard cVAE (e.g., scVI) | Struggles with substantial effects [14] | Good for similar samples [14] | Low (KL regularization removes biological signal) [14] | High [14] |

| Adversarial Methods (e.g., GLUE) | High | Low (mixes unrelated cell types) [14] | Low | Variable |

| sysVI (VAMP+CYC) | High on cross-system data [14] | High, improves downstream analysis [14] | Medium (mitigated by cycle-consistency) [14] | High [14] |

| Harmony | Good [25] [26] | Good, well-calibrated [25] [26] | Medium | Good |

| Tacos | High (on spatial data) [23] | High (captures linear trajectories) [23] | Information Not Available | Information Not Available |

| SpaCross | High (on multi-slice data) [24] | High (identifies conserved & stage-specific structures) [24] | Information Not Available | Information Not Available |

Operational Workflows and Data Flow

Figure: Batch Correction Workflow Selection. A decision tree for selecting an appropriate batch correction method for embryo transcriptomics data based on data type and analytical goals.

Experimental Protocols and Validation Metrics

Standardized Evaluation Workflow with RBET

The RBET framework provides a robust, reference-informed method for evaluating batch correction success, with particular sensitivity to overcorrection [5].

RBET Evaluation Framework: A workflow for reference-informed evaluation of batch correction performance.

Detailed RBET Protocol [5]:

Reference Gene (RG) Selection: Two strategies can be employed.

- Strategy 1 (Preferred): Curate a list of experimentally validated tissue- or context-specific housekeeping genes (HKGs) from published literature. For embryonic studies, this might include genes involved in fundamental cellular processes known to be stable across developmental stages.

- Strategy 2 (Data-Driven): In the absence of validated HKGs, select genes from the dataset itself that demonstrate stable expression both within and across phenotypically distinct cell clusters. These genes should exhibit low variance and no significant differential expression across batches in the uncorrected data.

Batch Correction Application: Apply the batch correction method(s) to the integrated dataset. The output can be a corrected count matrix or a low-dimensional embedding.

Dimensionality Reduction and Distribution Comparison:

- Project the integrated (and corrected) data into a two-dimensional space using UMAP.

- On this UMAP projection, use Maximum Adjusted Chi-squared (MAC) statistics to compare the underlying distributions of the RGs across different batches. The MAC test is a two-sample distribution comparison designed for high-dimensional data.

RBET Score Calculation and Interpretation: The RBET score is derived from the MAC statistics. A smaller RBET value indicates that the expression patterns of RGs are more consistent across batches, signifying successful batch correction without overcorrection. An increase in the RBET value after aggressive correction can signal that true biological variation is being erased.
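The data-driven reference gene selection described in Strategy 2 can be sketched as follows. This is a minimal illustration and not the RBET implementation: the function name `select_reference_genes`, the stability score (spread of per-cluster means plus spread of per-batch means), and the `min_mean` expression filter are all assumptions made for this example. The MAC statistic itself is not reproduced here.

```python
import numpy as np

def select_reference_genes(expr, clusters, batches, n_genes=50, min_mean=0.1):
    """Rank genes by expression stability across clusters and batches.

    expr     : (cells, genes) array of log-normalized, uncorrected expression
    clusters : per-cell cluster labels
    batches  : per-cell batch labels
    Returns the column indices of the most stable expressed genes.
    """
    expr = np.asarray(expr, dtype=float)
    clusters = np.asarray(clusters)
    batches = np.asarray(batches)

    def spread_of_group_means(labels):
        # Standard deviation, per gene, of the group-wise mean expression.
        means = np.vstack([expr[labels == g].mean(axis=0) for g in np.unique(labels)])
        return means.std(axis=0)

    # Hypothetical stability score: low values mean the gene barely changes
    # between clusters and between batches.
    score = spread_of_group_means(clusters) + spread_of_group_means(batches)

    # Genes that are essentially unexpressed are trivially "stable"; exclude them.
    score[expr.mean(axis=0) < min_mean] = np.inf

    order = np.argsort(score)
    order = order[np.isfinite(score[order])]
    return order[:n_genes]
```

A candidate list produced this way would still need to be checked against known biology before being used as reference genes, since a gene can look stable in one dataset for purely technical reasons.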

Benchmarking Spatial Integration with Tacos

The Tacos method provides a protocol for integrating spatial transcriptomics datasets of varying resolutions, a common challenge when combining embryo data from different platforms [23].

Detailed Tacos Protocol [23]:

Input and Graph Construction: Provide the normalized gene expression matrices and spatial coordinates for all slices. For each slice, construct a spatial graph (k-NN graph) based on the spatial coordinates.

Community-Enhanced Augmentation: Generate two augmented views of each graph to enhance contrastive learning. This involves:

- Communal Attribute Voting: Identifies node features (genes) that are more likely to be masked based on community structure.

- Communal Edge Dropping: Computes probabilities for dropping edges between nodes.

Graph Contrastive Learning Encoding: A graph convolutional network (GCN) encoder extracts spatially aware embeddings from the augmented graph views.

Inter-Slice Alignment via Triplet Loss:

- Identify Mutual Nearest Neighbor (MNN) pairs between spots from different slices based on their embeddings. These are treated as positive pairs.

- Randomly select spots from different slices to form negative pairs.

- Apply a triplet loss function to pull the positive MNN pairs closer together in the latent space and push the negative pairs further apart.

Downstream Analysis: The output is an integrated low-dimensional embedding that can be used for spatial domain identification, denoising, and trajectory inference (e.g., with PAGA).
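The inter-slice alignment step can be illustrated with a small NumPy sketch. It is not the Tacos code: the function names, the brute-force distance computation, the Euclidean metric, and the default margin are assumptions chosen for readability, and a real implementation would operate on GCN embeddings inside a training loop.

```python
import numpy as np

def mutual_nearest_neighbors(emb_a, emb_b, k=1):
    """Return (i, j) pairs where spot i in slice A and spot j in slice B
    are each among the other's k nearest neighbors in embedding space."""
    d = np.linalg.norm(emb_a[:, None, :] - emb_b[None, :, :], axis=2)
    nn_ab = np.argsort(d, axis=1)[:, :k]      # for each A spot, its k nearest B spots
    nn_ba = np.argsort(d, axis=0)[:k, :].T    # for each B spot, its k nearest A spots
    pairs = []
    for i in range(emb_a.shape[0]):
        for j in nn_ab[i]:
            if i in nn_ba[j]:
                pairs.append((i, int(j)))
    return pairs

def triplet_loss(anchor, positive, negative, margin=1.0):
    """Mean hinge loss that is zero once each negative is at least
    `margin` farther from its anchor than the positive is."""
    d_pos = np.linalg.norm(anchor - positive, axis=1)
    d_neg = np.linalg.norm(anchor - negative, axis=1)
    return float(np.maximum(d_pos - d_neg + margin, 0.0).mean())

def alignment_loss(emb_a, emb_b, k=1, margin=1.0, seed=0):
    """Combine MNN positives with randomly drawn negatives from the other slice."""
    pairs = mutual_nearest_neighbors(emb_a, emb_b, k)
    if not pairs:
        return 0.0
    rng = np.random.default_rng(seed)
    a_idx = np.array([i for i, _ in pairs])
    p_idx = np.array([j for _, j in pairs])
    # Random negatives; this simple draw may occasionally pick the positive itself.
    n_idx = rng.integers(0, emb_b.shape[0], size=len(pairs))
    return triplet_loss(emb_a[a_idx], emb_b[p_idx], emb_b[n_idx], margin)
```

The pairwise distance matrix makes this quadratic in the number of spots, so it is only practical for small examples; large slices would need an approximate nearest-neighbor search.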

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

Table 3: Key Research Reagents and Computational Tools

| Item Name | Type (Wet-Lab/Computational) | Primary Function in Embryo Transcriptomics | Key Consideration |
|---|---|---|---|
| Housekeeping Gene Panels | Wet-Lab & Computational | Serve as Reference Genes (RGs) in RBET evaluation; internal controls for stable biological processes [5]. | Must be validated for specific embryonic tissue and developmental stage. |
| Visium Spatial Slides | Wet-Lab Reagent | In situ capture of full-transcriptome data from tissue sections [27]. | FFPE vs. Fresh-Frozen choice trades off RNA integrity for tissue morphology. |
| High-Variability Gene List | Computational Reagent | Input for graph-based methods (e.g., SpaCross, Tacos); focuses analysis on biologically relevant signals [24]. | Gene selection method can impact downstream spatial domain detection. |
| Validated Cell Type Annotations | Computational Reagent | Ground truth for benchmarking biological preservation post-correction (using ARI, NMI) [5]. | Critical for assessing overcorrection in complex embryonic cell types. |
| Iterative Closest Point (ICP) | Computational Algorithm | 3D spatial registration of consecutive tissue slices in frameworks like SpaCross [24]. | Necessary for building 3D atlas from 2D embryonic sections. |

The field of batch correction for single-cell and spatial transcriptomics is rapidly advancing, with newer methods like sysVI, Tacos, and SpaCross offering sophisticated approaches to handle the substantial technical and biological variations encountered in integrating diverse embryonic datasets. The move towards methods that leverage advanced priors (VampPrior), graph structures, and self-supervised learning reflects an increasing awareness of the need to preserve delicate biological signals, such as spatiotemporal patterning in developing embryos. Furthermore, the development of robust evaluation frameworks like RBET, which is sensitive to the critical problem of overcorrection, empowers researchers to make more informed choices about their integration strategies. As spatial technologies continue to evolve towards higher resolution and the generation of large-scale embryonic atlases accelerates, the careful selection and application of well-calibrated, context-aware batch correction methods will be indispensable for deriving accurate biological insights.

The Correction Toolkit: From Classic Algorithms to Next-Generation AI

Batch effects are technical variations in high-throughput omics data that are unrelated to the biological signals of interest. They arise from differences in experimental conditions, such as reagent lots, personnel, laboratory equipment, sequencing platforms, or data generation timelines. When multiple embryo datasets are integrated, batch effects can confound biological interpretation by introducing systematic biases that mask true biological differences or create artificial ones. The consequences include reduced statistical power, skewed analyses, and incorrect conclusions that compromise research reproducibility and reliability. When batch effects are confounded with biological factors of interest, a common scenario in longitudinal studies and multi-center collaborations, distinguishing technical artifacts from genuine biological signals becomes particularly challenging [1] [7].

The challenge is especially pronounced in embryo research, where samples may be collected over extended periods, processed in different laboratories, or analyzed using evolving technologies. Without proper correction, batch effects can lead to irreproducible findings and diminished scientific value. A survey published in Nature found that 90% of respondents believed there is a reproducibility crisis in science, with batch effects identified as a paramount contributing factor [1]. This review comprehensively benchmarks batch effect correction algorithms (BECAs) to guide researchers in selecting appropriate methods for integrating multi-embryo datasets, with a focus on performance characteristics, practical implementation, and experimental design considerations.

Understanding the Nature and Impact of Batch Effects

Batch effects can originate at virtually every stage of an omics experiment, creating complex technical variations that must be addressed before meaningful biological interpretation can occur. During study design, flawed or confounded arrangements—such as non-randomized sample collection or selection based on specific characteristics—can introduce biases that become embedded in the data. The sample preparation and storage phase introduces variability through differences in protocols, centrifugal forces, storage temperatures, duration, and freeze-thaw cycles, all of which can significantly alter molecular profiles [1].

In the data generation phase, factors such as instrument calibration, reagent lots, operator expertise, and laboratory environmental conditions contribute substantial technical variation. Finally, during data processing, the use of different analysis pipelines, software versions, normalization strategies, and quality control thresholds can introduce computational batch effects. The fundamental cause of batch effects can be partially attributed to the basic assumption in quantitative omics that instrument readout intensity (I) has a fixed relationship with analyte abundance (C), expressed as I = f(C). In practice, the function f fluctuates due to diverse experimental factors, making intensity measurements inherently inconsistent across batches [1].
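A toy simulation makes the fluctuating readout function concrete. The linear form of f, the gain and offset values, and the helper names below are assumptions chosen purely for illustration; real response functions are rarely this simple.

```python
import numpy as np

def readout(abundance, gain, offset):
    """Hypothetical batch-specific response I = f(C) = gain * C + offset."""
    return gain * np.asarray(abundance, dtype=float) + offset

def standardize(x):
    """Center and scale a vector of intensities within one batch."""
    return (x - x.mean()) / x.std()

# Identical true abundances C measured in two batches with different f.
abundance = np.array([1.0, 2.0, 4.0, 8.0])
batch_1 = readout(abundance, gain=1.0, offset=0.0)
batch_2 = readout(abundance, gain=1.5, offset=2.0)

# Raw intensities disagree even though the underlying biology is the same.
raw_agree = np.allclose(batch_1, batch_2)

# Within-batch standardization removes a linear batch-specific f here,
# but only because both batches contain the same samples.
standardized_agree = np.allclose(standardize(batch_1), standardize(batch_2))
```

The last point matters in practice: if the two batches held different developmental stages, the same standardization would also remove the genuine stage difference, which is the confounding problem discussed in this guide.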

Impact on Embryo Research

In embryo research, where subtle molecular signatures often differentiate developmental stages or treatment effects, batch effects can be particularly detrimental. The consequences manifest in several ways. Reduced statistical power occurs when batch-induced variation dilutes biological signals, requiring larger sample sizes to detect genuine effects. False discoveries arise when batch-correlated features are mistakenly identified as biologically significant, while masked biological signals occur when true biological differences are obscured by technical variation [1].

Perhaps most concerning is the confounding of biological and technical factors, especially problematic in longitudinal embryo studies where technical variables may affect outcomes in the same way as developmental timepoints. This makes it difficult or nearly impossible to distinguish whether detected changes are driven by development or by artifacts from batch effects [1]. A clinical example underscoring the seriousness of this issue involved a change in RNA-extraction solution that caused a shift in gene-based risk calculations, leading to incorrect classification outcomes for 162 patients, 28 of whom received incorrect or unnecessary chemotherapy regimens [1].

Comprehensive Benchmarking of BECAs: Methodologies and Metrics

Experimental Design for Benchmarking Studies

Robust benchmarking of BECAs requires carefully designed experiments that can objectively quantify algorithm performance. The Quartet Project has established comprehensive reference materials for multiomics profiling, providing matched DNA, RNA, protein, and metabolite reference materials derived from B-lymphoblastoid cell lines from a monozygotic twin family. These well-characterized materials enable objective assessment of BECA performance by providing ground truth data with known biological relationships [7].

Studies typically evaluate BECAs under two fundamental scenarios: balanced designs, where samples across biological groups are evenly distributed across batches, and confounded designs, where biological factors and batch factors are completely intertwined. The latter represents a more challenging but realistic scenario commonly encountered in practice, especially in embryo research where specific developmental stages might be processed in separate batches [7]. Benchmarking workflows generally involve applying multiple BECAs to datasets with known properties, then evaluating the corrected data using both qualitative visualization and quantitative metrics [28].
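Before choosing a correction method, it helps to check which of these scenarios a dataset falls into. The sketch below cross-tabulates batch against biological group using only the standard library. The function name, the three category labels, and the rule that "confounded" means every batch holds exactly one group are assumptions for this example.

```python
from collections import Counter
from fractions import Fraction

def classify_design(batches, groups):
    """Classify a study design from per-sample batch and group labels.

    Returns "balanced" when every batch has the same group proportions,
    "confounded" when every batch contains exactly one group, and
    "partially confounded" otherwise.
    """
    table = Counter(zip(batches, groups))
    batch_levels = sorted(set(batches))
    group_levels = sorted(set(groups))

    groups_in_batch = {
        b: [g for g in group_levels if table[(b, g)] > 0] for b in batch_levels
    }
    if len(group_levels) > 1 and all(len(gs) == 1 for gs in groups_in_batch.values()):
        return "confounded"

    # Exact fractions avoid floating-point comparisons of proportions.
    proportions = set()
    for b in batch_levels:
        total = sum(table[(b, g)] for g in group_levels)
        proportions.add(tuple(Fraction(table[(b, g)], total) for g in group_levels))
    return "balanced" if len(proportions) == 1 else "partially confounded"
```

For example, two batches that each contain embryos from both stages in equal proportion are classified as balanced, while processing each stage in its own batch is classified as confounded.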

Performance Evaluation Metrics

Multiple metrics have been developed to quantitatively assess the performance of BECAs, each focusing on different aspects of correction quality:

- Signal-to-Noise Ratio (SNR): Quantifies the ability to separate distinct biological groups after data integration [28] [7].

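- Average Silhouette Width (ASW): Measures both batch mixing (ASW Batch) and biological preservation (ASW Label), with raw values ranging from -1 to 1. For ASW Label, higher values indicate better separation of biological groups. For ASW Batch, a raw silhouette near 0 indicates well-mixed batches, so benchmarks commonly rescale it (for example as 1 − |ASW|) so that higher values mean better mixing [6] [29].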

- kBET (k-nearest neighbor batch-effect test): Evaluates batch mixing at a local level by comparing the batch label distribution in nearest neighbors to the expected distribution [29].

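- LISI (Local Inverse Simpson's Index): Measures the effective number of batches or cell types in local neighborhoods. Computed on batch labels (iLISI), higher values indicate better mixing; computed on cell type labels (cLISI), lower values indicate that neighborhoods remain dominated by a single cell type [14] [29].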

- Adjusted Rand Index (ARI): Quantifies the similarity between clustering results and known cell type annotations, evaluating biological structure preservation [29].

- Matthews Correlation Coefficient (MCC): Used in simulated data with known differential expression patterns to evaluate the accuracy of identifying differentially expressed features [28].
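To show what a neighborhood-based mixing score computes, here is a simplified LISI-style calculation. It is a sketch under stated assumptions, not the published LISI implementation: it uses uniform weights over a fixed k nearest neighbors rather than perplexity-based Gaussian weights, excludes each cell from its own neighborhood, and builds a full pairwise distance matrix, so it only suits small examples. The function name `simple_lisi` is hypothetical.

```python
import numpy as np

def simple_lisi(embedding, labels, k=15):
    """Per-cell inverse Simpson's index of `labels` among the k nearest neighbors.

    A value of 1 means every neighbor shares one label; a value equal to the
    number of distinct labels means the labels are evenly represented.
    """
    embedding = np.asarray(embedding, dtype=float)
    labels = np.asarray(labels)

    # Brute-force pairwise Euclidean distances; each cell is excluded from
    # its own neighborhood by setting the diagonal to infinity.
    d = np.linalg.norm(embedding[:, None, :] - embedding[None, :, :], axis=2)
    np.fill_diagonal(d, np.inf)
    neighbors = np.argsort(d, axis=1)[:, :k]

    scores = np.empty(len(labels))
    for i, idx in enumerate(neighbors):
        _, counts = np.unique(labels[idx], return_counts=True)
        p = counts / counts.sum()
        scores[i] = 1.0 / np.sum(p ** 2)
    return scores
```

Passing batch labels gives an iLISI-like score, where higher is better, and passing cell type labels gives a cLISI-like score, where values near 1 are better.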

Key Experimental Protocols in Benchmarking Studies

Benchmarking studies typically follow standardized protocols to ensure fair comparison across methods. For single-cell RNA-seq data, the standard protocol includes quality control, normalization, highly variable gene selection, application of BECAs, and evaluation using the metrics above [29]. The batchelor package in Bioconductor provides a standardized workflow for single-cell data integration, including common feature selection, multi-batch normalization, and mutual nearest neighbors (MNN) correction [30].
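The first three steps of that protocol can be sketched in plain NumPy. This is a deliberately simplified illustration and not the batchelor workflow: the thresholds, the library-size scaling target, and the use of raw variance to rank genes are assumptions, whereas established pipelines typically model the mean-variance trend when selecting highly variable genes.

```python
import numpy as np

def preprocess(counts, min_counts=500, n_top_genes=2000, target_sum=1e4):
    """Quality control, normalization, and variable-gene selection.

    counts : (cells, genes) array of raw counts
    Returns the log-normalized matrix restricted to the selected genes,
    the boolean mask of cells that passed QC, and the selected gene indices.
    """
    counts = np.asarray(counts, dtype=float)

    # Quality control: drop cells with too few total counts.
    keep = counts.sum(axis=1) >= min_counts
    counts = counts[keep]

    # Normalization: scale each cell to the same total, then log-transform.
    size = counts.sum(axis=1, keepdims=True)
    lognorm = np.log1p(counts / size * target_sum)

    # Variable-gene selection: keep the genes with the largest variance.
    top = np.argsort(lognorm.var(axis=0))[::-1][:n_top_genes]
    genes = np.sort(top)
    return lognorm[:, genes], keep, genes
```

The returned matrix would then be passed to the chosen correction method and scored with the metrics listed above.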