Navigating the Noise: Advanced Strategies for Handling Technical Variability in Sparse Embryo RNA-seq Data

Abstract

Technical noise and data sparsity present significant challenges in single-cell RNA sequencing of embryonic samples, where material is precious and cellular diversity is vast. This article provides a comprehensive guide for researchers and drug development professionals, exploring the foundational sources of noise in embryo RNA-seq data, from stochastic transcription to batch effects. We review and compare cutting-edge methodological solutions, including the RECODE platform for dual noise reduction, Compositional Data Analysis (CoDA-hd), and deep learning models like scANVI specifically trained on preimplantation embryos. The content offers a practical workflow for troubleshooting and optimization, covering experimental design, normalization, and clustering. Finally, we present a framework for the rigorous validation and comparative analysis of denoising methods, ensuring biological fidelity is preserved. The goal is to empower scientists to extract robust, reproducible biological insights from their most complex embryonic datasets.

Understanding the Signal and the Static: The Core Challenges of Embryo RNA-seq

The Inherent Sparsity of Single-Cell Embryonic Transcriptomes

Frequently Asked Questions (FAQs)

FAQ 1: What causes the high number of zeros in my embryonic single-cell RNA-seq data? The zeros, or sparsity, in your data arise from two main sources:

- Biological Zeros: The true absence of transcript expression in a specific embryonic cell. Recent studies using nascent RNA sequencing reveal that individual cells, including pluripotent stem cells, transcribe only 0.02%–3.1% of the genome, demonstrating inherently limited genome engagement [1].

- Technical Zeros (Dropouts): Failures in detecting transcripts that are present due to technical limitations. These can occur from inefficiencies in cell lysis, reverse transcription, amplification, or limited sequencing depth. In embryonic cells, technical noise can explain a large fraction of what appears to be stochastic expression [2].

FAQ 2: How can I distinguish a biological zero from a technical dropout? Accurately distinguishing these is challenging but critical. No wet-lab method can definitively confirm a biological zero. Therefore, the primary approach is computational inference:

- Use of External Spike-Ins: Adding RNA spike-in molecules to the cell lysate helps model the technical noise. Since the spike-in concentration is known, any missing data for them is technical, allowing you to estimate the dropout rate for your endogenous genes [2].

- Statistical Modeling: Employ model-based imputation methods (e.g., SAVER, DCA) that use probabilistic models to identify which zeros are likely technical based on expression patterns in similar cells [3].
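The spike-in logic can be illustrated with a minimal sketch. It assumes a spike-in count matrix of shape cells by spike-in species; the function name, the toy capture levels, and the Poisson sampling are illustrative assumptions, not part of the cited method [2].

```python
import numpy as np

def spikein_dropout_rate(spike_counts):
    """Fraction of cells in which each spike-in species went undetected.

    spike_counts: array of shape (n_cells, n_spikeins) holding UMI or read counts.
    """
    spike_counts = np.asarray(spike_counts)
    return (spike_counts == 0).mean(axis=0)

# Toy data (hypothetical): four spike-in species whose expected number of
# captured molecules per cell rises from 0.5 to 32, with Poisson sampling.
rng = np.random.default_rng(0)
expected_molecules = np.array([0.5, 2.0, 8.0, 32.0])
counts = rng.poisson(expected_molecules, size=(500, 4))

rates = spikein_dropout_rate(counts)
for lam, rate in zip(expected_molecules, rates):
    print(f"expected molecules {lam:5.1f} -> observed dropout rate {rate:.3f}")
```

Because every spike-in is truly present in every cell, each zero here is technical by construction. The resulting rate-versus-abundance curve is what a fuller model would apply to endogenous genes of similar abundance.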

FAQ 3: My analysis pipeline struggles with the data size and sparsity. Are there efficient alternatives? Yes. For extremely large and sparse datasets, consider binarizing your data (0 for zero count, 1 for non-zero). This representation scales up to ~50-fold more cells using the same computational resources and has been shown to yield comparable results to count-based data for tasks like:

- Dimensionality reduction and visualization

- Cell type identification

- Data integration [4]

However, caution is advised for analyses that require precise expression magnitude, such as certain differential expression tests.

FAQ 4: Can data imputation methods introduce artifacts into my analysis? Yes. While imputation can recover missing signals, it also risks:

- Introducing Spurious Correlations: Oversmoothing during imputation can artificially inflate gene-gene correlations, leading to false positives in network inference [5].

- Circularity: Relying solely on internal data structure for imputation can artificially amplify signals and mask true biological heterogeneity [3].

It is crucial to validate key findings with alternative methods and use imputation judiciously.

FAQ 5: How do I perform batch correction without losing biological signal in sparse data? Traditional batch correction methods that rely on dimensionality reduction can be confounded by high technical noise. For best practices:

- Use Integrated Tools: Employ methods specifically designed for simultaneous technical noise reduction and batch correction, such as iRECODE, which integrates batch correction within a high-dimensional statistical framework to preserve full-dimensional data [6].

- Benchmark Performance: Use integration metrics like the local inverse Simpson's index (LISI) to quantitatively assess batch mixing and cell-type separation after correction [6].

Troubleshooting Guides

Issue 1: Low Cell Capture Efficiency and High Dropout Rate

Problem: An unusually high percentage of zeros across all genes, suggesting poor transcript capture.

Solution:

- Wet-Lab Protocol:

  - Optimize Cell Lysis: Ensure complete and rapid lysis to release RNA. Inefficient lysis is a major source of irreversible RNA loss [2].

  - Use Unique Molecular Identifiers (UMIs): Incorporate UMIs during library preparation to correct for amplification bias and accurately quantify transcript molecules [2].

  - Microfluidic Platforms: Consider using nano-volume microfluidic platforms, which have reported capture efficiencies of up to 40%, significantly higher than manual protocols [2].

- Computational Protocol:

  - Apply Noise-Reduction Tools: Use algorithms like RECODE or UNCURL that model the technical noise distribution (e.g., Negative Binomial) to denoise the data and compensate for dropouts [6] [7].

  - Quality Control: Filter out cells with an extremely low number of detected genes or a high percentage of mitochondrial reads.
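The quality-control step can be sketched as follows. The function name and both thresholds (`min_genes`, `max_mito_frac`) are hypothetical placeholders; appropriate cut-offs depend on the platform and the embryonic stage and should be chosen from the distribution of your own data.

```python
import numpy as np

def qc_filter(counts, is_mito, min_genes=200, max_mito_frac=0.2):
    """Return a boolean mask of cells that pass two basic QC criteria.

    counts:  (n_cells, n_genes) raw count matrix
    is_mito: boolean array of length n_genes marking mitochondrial genes
    """
    counts = np.asarray(counts)
    is_mito = np.asarray(is_mito, dtype=bool)
    genes_detected = (counts > 0).sum(axis=1)
    total = counts.sum(axis=1)
    mito = counts[:, is_mito].sum(axis=1)
    # Guard against division by zero for completely empty cells.
    mito_frac = np.divide(mito, total,
                          out=np.zeros(len(total), dtype=float),
                          where=total > 0)
    return (genes_detected >= min_genes) & (mito_frac <= max_mito_frac)

# Toy example: 3 cells x 5 genes, last gene is mitochondrial.
toy_counts = np.array([[5, 3, 0, 2, 1],
                       [0, 0, 0, 1, 9],
                       [1, 0, 0, 0, 0]])
toy_mito = np.array([False, False, False, False, True])
keep = qc_filter(toy_counts, toy_mito, min_genes=2, max_mito_frac=0.5)
print(keep)
```

In this toy case only the first cell passes: the second is dominated by mitochondrial counts and the third has a single detected gene.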

Issue 2: Inability to Identify Rare Cell Types in the Early Embryo

Problem: Subtle but biologically critical subpopulations are obscured by data sparsity and technical noise.

Solution:

- Computational Protocol:

  - Comprehensive Denoising: Apply a dual noise-reduction method like iRECODE, which is demonstrated to improve rare-cell-type detection by simultaneously mitigating technical and batch noise [6].

  - Avoid Over-Imputation: Use methods that preserve data sparsity where appropriate. For rare cell types, the presence or absence of a marker (binary signal) can be more informative than an imputed count [4].

  - Leverage Prior Knowledge: Use semi-supervised tools like UNCURL that can incorporate prior information (e.g., marker genes from bulk RNA-seq) to guide the factorization and improve cell state estimation [7].

Issue 3: Gene-Gene Correlation and Network Analysis Yields Unreliable Results

Problem: Gene co-expression networks built from preprocessed data contain many likely false-positive connections.

Solution:

- Computational Protocol:

  - Diagnose Oversmoothing: Check if your normalization or imputation method has inflated the overall correlation coefficients. Compare the distribution of correlations from processed data to that from raw data; a strong shift away from zero may indicate artifact introduction [5].

  - Apply Noise Regularization: After imputation, add a noise-regularization step. This involves adding a small amount of noise scaled to each gene's expression range to penalize oversmoothed data and eliminate correlation artifacts while retaining true biological signals [5].

  - Validate with PPI Databases: Check the enrichment of your top correlated gene pairs in protein-protein interaction databases (e.g., STRING). Low enrichment suggests a high degree of spurious correlation [5].
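The diagnosis and regularization steps can be sketched together. The exact noise distribution used in [5] is not specified here, so uniform noise bounded by a fraction `frac` of each gene's range is an assumption, as are the function names and the toy "oversmoothed" matrix.

```python
import numpy as np

def add_range_scaled_noise(expr, frac=0.05, seed=0):
    """Add uniform noise in [0, frac * range] to every gene (column).

    Assumption: the bound is a fixed fraction of each gene's observed range.
    """
    rng = np.random.default_rng(seed)
    expr = np.asarray(expr, dtype=float)
    gene_range = expr.max(axis=0) - expr.min(axis=0)
    noise = rng.uniform(0.0, 1.0, size=expr.shape) * (frac * gene_range)
    return expr + noise

def median_abs_correlation(expr):
    """Median absolute gene-gene Pearson correlation (off-diagonal only)."""
    corr = np.corrcoef(expr, rowvar=False)
    off_diag = corr[np.triu_indices_from(corr, k=1)]
    return float(np.median(np.abs(off_diag)))

# Caricature of oversmoothing: three genes that are exact linear copies
# of one latent signal, so every pairwise correlation equals 1.
rng = np.random.default_rng(1)
latent = rng.normal(5.0, 1.0, size=300)
smoothed = np.column_stack([latent, 2.0 * latent + 1.0, 0.5 * latent])

before = median_abs_correlation(smoothed)
regularized = add_range_scaled_noise(smoothed, frac=0.2)
after = median_abs_correlation(regularized)
print(f"median |r| before: {before:.3f}, after: {after:.3f}")
```

Comparing `median_abs_correlation` on raw versus processed data is one simple way to run the oversmoothing diagnosis. The value of `frac` is a tuning choice and is set large here only to make the effect visible.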

Key Quantitative Findings in Sparsity Research

Table 1: Quantifying Sparsity and Technical Noise in Single-Cell Transcriptomes

| Metric | Finding | Experimental System | Citation |
|---|---|---|---|
| Genome Usage per Cell | ~0.02% - 3.1% of the genome is transcribed | Mouse embryonic stem cells, splenic lymphocytes | [1] |
| Biological vs. Technical Noise | ~17.8% of stochastic allele-specific expression is biological; the remainder is technical | Mouse embryonic stem cells | [2] |
| Binarized Data Correlation | Point-biserial correlation ≥ 0.93 with normalized counts | Aggregated data from 1.5 million cells across 56 datasets | [4] |
| Capture Efficiency | Up to 40% with microfluidic platforms vs. ~10% with manual protocols | Mouse embryonic stem cells | [2] |

Experimental Protocols for Characterizing Noise

Protocol 1: Decomposing Biological and Technical Noise with Spike-Ins

This protocol is used to quantitatively estimate how much of the variability in your embryonic data is genuine biological noise versus technical artifact [2].

- Wet-Lab Protocol:

  - Spike-In Addition: Add a known quantity of External RNA Controls Consortium (ERCC) spike-in molecules to the lysis buffer of every single cell.

  - Library Preparation: Proceed with your standard single-cell RNA-seq protocol (e.g., using UMIs).

- Computational Protocol:

  - Normalization: Normalize the raw sequenced ERCC transcript counts by the estimated capture efficiency for each cell/batch to remove batch effects.

  - Generative Modeling: Use a probabilistic model (e.g., as in [2]) that uses the observed mean-variance relationship of the spike-ins to model the expected technical noise across the entire dynamic range of expression.

  - Variance Decomposition: For each endogenous gene, subtract the estimated technical variance from the total observed variance to derive the biological variance.
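The variance decomposition can be approximated with a deliberately simplified stand-in for the generative model of [2]. This sketch assumes technical noise follows CV² = a/μ + b, fitted to the spike-ins by ordinary least squares; that functional form, the function names, and the simulated data are all assumptions made for illustration.

```python
import numpy as np

def fit_technical_cv2(spike_counts):
    """Fit CV^2 = a / mean + b to spike-ins by least squares (simplified model)."""
    mu = spike_counts.mean(axis=0)
    cv2 = spike_counts.var(axis=0, ddof=1) / mu**2
    design = np.column_stack([1.0 / mu, np.ones_like(mu)])
    (a, b), *_ = np.linalg.lstsq(design, cv2, rcond=None)
    return a, b

def biological_variance(gene_counts, a, b):
    """Total variance minus the technical variance predicted at each gene's mean."""
    mu = gene_counts.mean(axis=0)
    total_var = gene_counts.var(axis=0, ddof=1)
    technical_var = (a / mu + b) * mu**2
    return np.clip(total_var - technical_var, 0.0, None)

# Simulated data: spike-ins with pure Poisson (technical) noise, one gene with
# only technical noise, and one gene whose underlying rate varies between cells.
rng = np.random.default_rng(1)
spikes = rng.poisson([1.0, 5.0, 20.0, 100.0], size=(2000, 4))
cell_rates = rng.gamma(2.0, 5.0, size=2000)          # mean 10, extra variance 50
genes = np.column_stack([rng.poisson(10.0, size=2000),
                         rng.poisson(cell_rates)])

a, b = fit_technical_cv2(spikes)
bio_var = biological_variance(genes, a, b)
print("technical fit a, b:", round(float(a), 3), round(float(b), 4))
print("estimated biological variance per gene:", np.round(bio_var, 2))
```

The first gene should retain little or no biological variance, while the second should retain most of the variance contributed by its cell-to-cell rate differences.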

Protocol 2: Embracing Sparsity via Data Binarization for Scalable Analysis

This protocol is for when computational resources or data sparsity prevent the use of traditional count-based models [4].

- Computational Protocol:

  - Create Binary Matrix: Transform your count matrix into a binary matrix where any value greater than 0 becomes 1, and zeros remain 0.

  - Dimensionality Reduction: Perform dimensionality reduction on the binary matrix using methods specifically designed for it, such as scBFA, or standard PCA.

  - Downstream Analysis: Use the low-dimensional representation for clustering, visualization, and integration. For differential expression, use binary-based methods like Binary Differential Analysis (BDA) or generate pseudobulk data based on the detection rate (fraction of non-zero cells) per gene.
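A minimal sketch of this protocol using plain NumPy follows. PCA is computed by singular value decomposition as the "standard PCA" option; scBFA and BDA are not reproduced here. The function names and the toy matrix are hypothetical.

```python
import numpy as np

def binarize(counts):
    """1 where a gene was detected in a cell, 0 otherwise."""
    return (np.asarray(counts) > 0).astype(np.uint8)

def pca_embed(binary, n_components=2):
    """PCA scores of the column-centred binary matrix via SVD."""
    X = binary.astype(float)
    X -= X.mean(axis=0)
    U, S, _ = np.linalg.svd(X, full_matrices=False)
    return U[:, :n_components] * S[:n_components]

def detection_rate(binary, groups):
    """Per-group fraction of cells in which each gene is detected (pseudobulk)."""
    groups = np.asarray(groups)
    return {g: binary[groups == g].mean(axis=0) for g in np.unique(groups)}

# Toy example: 4 cells x 3 genes, two groups of cells.
toy_counts = np.array([[0, 3, 1],
                       [2, 0, 0],
                       [0, 5, 0],
                       [1, 0, 0]])
toy_groups = np.array(["a", "b", "a", "b"])

binary = binarize(toy_counts)
embedding = pca_embed(binary, n_components=2)
rates = detection_rate(binary, toy_groups)
print(binary)
print(rates)
```

For real datasets a sparse matrix representation would be preferable to the dense arrays used here, since the memory savings are a main motivation for binarization.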

Research Reagent Solutions

Table 2: Essential Reagents and Tools for Sparse scRNA-seq Research

| Reagent / Tool | Function | Key Feature |
|---|---|---|
| ERCC Spike-In Mix | Models technical noise and enables quantitative variance decomposition. | Known concentrations of exogenous RNA transcripts. |
| Unique Molecular Identifiers (UMIs) | Corrects for amplification bias and provides absolute molecular counts. | Random barcodes that tag individual mRNA molecules. |
| 5-Ethynyl Uridine (5-EU) | Metabolic label for capturing nascent transcription; reduces bias towards stable RNAs. | Allows for very short (e.g., 10-minute) pulse-labeling. |
| RECODE / iRECODE Algorithm | Reduces technical noise and batch effects using high-dimensional statistics. | Preserves full-dimensional data; applicable to multiple omics modalities. |
| UNCURL Framework | Preprocesses data using non-negative matrix factorization (NMF) tailored for scRNA-seq distributions. | Scalable to millions of cells; can incorporate prior knowledge. |

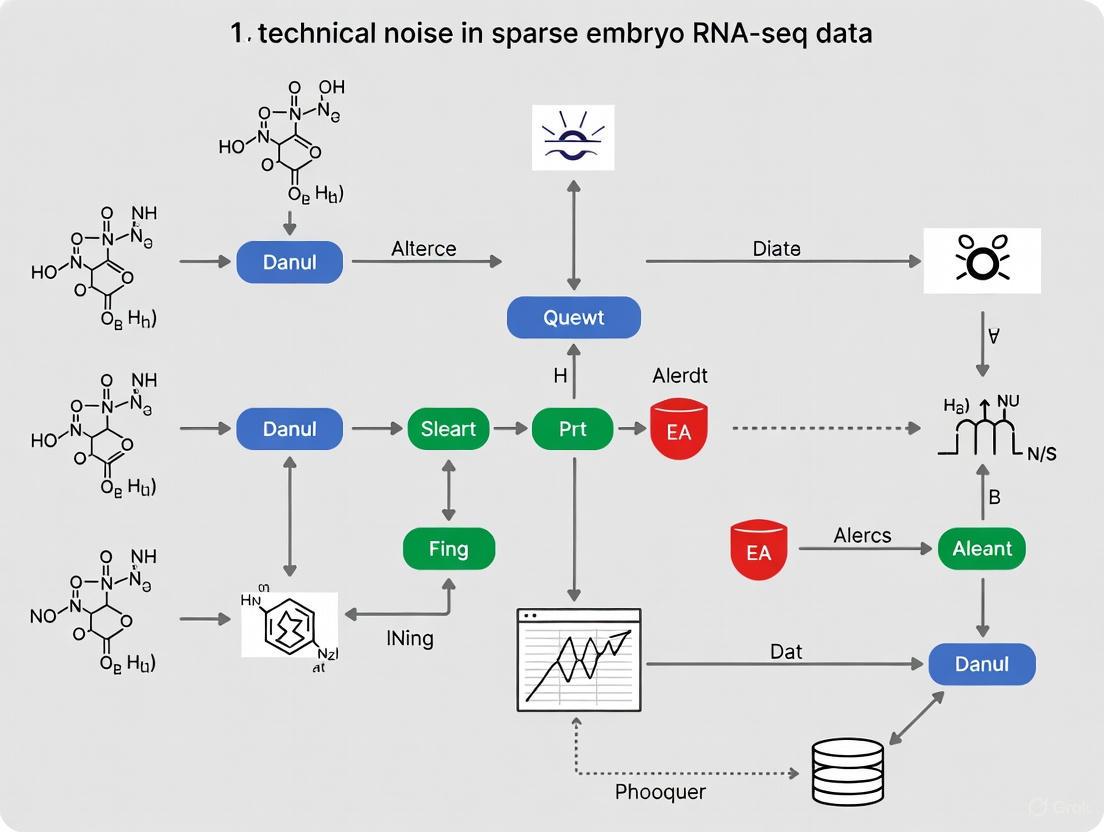

Workflow and Pathway Diagrams

scFLUENT-seq Workflow for Nascent Transcription

Decision Pathway for Addressing Data Sparsity

Frequently Asked Questions (FAQs)

Q1: What are the main types of technical noise in single-cell and low-input RNA-seq experiments? Technical noise in RNA-seq data, particularly from sparse samples like embryos, primarily stems from:

- Dropouts (Zero-inflation): Events where a transcript is expressed in a cell but not detected, resulting in an excess of zero values in the data. This is often due to the stochastic nature of capturing low-abundance mRNAs when starting material is scarce [8] [9] [10].

- Batch Effects: Systematic technical variations introduced when samples are processed in different batches, using different reagents, sequencing platforms, or at different times. These can confound biological signals [11] [12].

- Amplification Bias: Inefficient or biased amplification during library preparation, which can distort the true representation of transcript abundances [10].

- Process Noise: Variability inherent to the wet-lab pipeline, including molecular handling (pipetting, technician differences), sequencing machine variability, and bioinformatics analysis choices [13].

Q2: How do high dropout rates impact the analysis of scRNA-seq data? High dropout rates break the fundamental assumption that similar cells are close to each other in gene expression space. This has two major consequences [8]:

- Reduced Cluster Stability: While cluster homogeneity (cells of the same type grouping together) may remain, the stability of these clusters decreases. This means the same cell might be assigned to different clusters in repeated analyses, making sub-population identification difficult.

- Compromised Local Neighborhoods: Graph-based clustering methods (common in tools like Seurat and Scanpy) rely on identifying dense local neighborhoods of cells. High sparsity makes these neighborhoods less reliable and identifiable.

Q3: Can the dropout events themselves be useful? Yes, an emerging perspective is to "embrace" dropouts. Instead of treating all zeros as missing data, the binary dropout pattern (0 for non-detection, 1 for detection) can be a useful signal. Genes within the same biological pathway often exhibit similar dropout patterns across cell types. Clustering cells based on these binary co-occurrence patterns has been shown to identify cell types as effectively as using quantitative expression of highly variable genes [9] [4].

Q4: What is the difference between normalization and batch effect correction? These are distinct but related preprocessing steps [12]:

- Normalization operates on the raw count matrix and primarily addresses differences in sequencing depth and library size across cells or samples. It does not remove batch effects.

- Batch Effect Correction typically operates on a normalized (and often dimensionally-reduced) dataset. It specifically aims to remove systematic technical variations associated with different experimental batches, allowing data from multiple batches to be combined and analyzed together.
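To make the normalization step concrete, here is a minimal sketch of library-size scaling followed by a log transform. The function name and the target of 10,000 counts per cell are conventional but arbitrary choices, stated here as assumptions. Note that nothing in this step uses batch labels, which is why it cannot remove batch effects.

```python
import numpy as np

def normalize_log1p(counts, target_sum=1e4):
    """Scale each cell to the same total count, then apply log(1 + x)."""
    counts = np.asarray(counts, dtype=float)
    depth = counts.sum(axis=1, keepdims=True)
    depth[depth == 0] = 1.0          # leave completely empty cells as zeros
    return np.log1p(counts / depth * target_sum)

# Two cells with identical composition but a 10-fold difference in depth.
toy_counts = np.array([[1, 1, 2],
                       [10, 10, 20]])
normalized = normalize_log1p(toy_counts)
print(normalized)
```

After normalization the two toy cells become identical, because they differed only in sequencing depth.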

Q5: How can I identify if my dataset has a batch effect? You can use a combination of visual and quantitative methods [12]:

- Visual Inspection: Perform PCA or UMAP/t-SNE visualization. If cells or samples cluster strongly by batch (e.g., sequencing run) rather than by biological condition, a batch effect is likely present.

- Quantitative Metrics: Metrics like the k-nearest neighbor batch effect test (kBET) or Local Inverse Simpson's Index (LISI) can quantitatively measure the degree of batch mixing. An improvement in these scores after correction indicates successful mitigation.
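The idea behind LISI can be sketched with a simplified, unweighted variant: for each cell, take its k nearest neighbours in the embedding and compute the inverse Simpson's index of their batch labels. The published metric uses perplexity-based distance weighting, which is omitted here; the function name, `k`, and the simulated embeddings are assumptions.

```python
import numpy as np

def simple_lisi(embedding, labels, k=15):
    """Unweighted inverse Simpson's index of labels among each cell's k neighbours."""
    X = np.asarray(embedding, dtype=float)
    labels = np.asarray(labels)
    # Dense pairwise squared distances; fine for small examples only.
    d2 = ((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1)
    np.fill_diagonal(d2, np.inf)                 # exclude the cell itself
    neighbours = np.argsort(d2, axis=1)[:, :k]
    categories = np.unique(labels)
    scores = np.empty(len(X))
    for i, idx in enumerate(neighbours):
        p = np.array([(labels[idx] == c).mean() for c in categories])
        scores[i] = 1.0 / np.sum(p ** 2)
    return scores

rng = np.random.default_rng(0)
batch = np.repeat(["run1", "run2"], 100)

# Well-mixed case: both batches drawn from the same distribution.
mixed = rng.normal(0.0, 1.0, size=(200, 2))
# Batch-effect case: the second batch is shifted far away from the first.
separated = mixed.copy()
separated[100:] += 100.0

lisi_mixed = simple_lisi(mixed, batch).mean()
lisi_separated = simple_lisi(separated, batch).mean()
print(f"mean LISI mixed: {lisi_mixed:.2f}, separated: {lisi_separated:.2f}")
```

With two batches, values near 2 indicate good mixing and values near 1 indicate that neighbourhoods contain a single batch. Computing the same score on cell-type labels instead of batch labels gives the complementary check for cell-type separation.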

Q6: What are the signs of overcorrecting my data during batch effect removal? Overcorrection occurs when biological signal is erroneously removed along with technical noise. Key signs include [12]:

- The loss of known, canonical cell-type-specific markers.

- A significant overlap in the marker genes identified for different cell clusters.

- Cluster-specific markers being dominated by universally highly expressed genes (e.g., ribosomal genes).

- A scarcity of differential expression hits in pathways where they are biologically expected.

Troubleshooting Guides

Problem: High Data Sparsity and Dropouts

Symptoms: An extremely high number of zero counts in your count matrix, making it difficult to distinguish cell types or identify differentially expressed genes.

Solutions:

- Leverage Binary Representation: For large, sparse datasets, consider converting your count data to a binary matrix (0 for no expression, 1 for expressed) for analyses like clustering and dimensionality reduction. This approach is computationally efficient and can be as informative as using quantitative counts for cell identity [4].

- Employ Co-occurrence Clustering: Use clustering algorithms specifically designed for binary dropout patterns. These methods identify groups of genes that are consistently detected together across cells, forming meaningful "pathway signatures" for cell type identification [9].

- Apply Informed Filtering: Filter out genes with consistently low counts across all samples, as these are more likely to be technical noise. The threshold can be set by finding the value that maximizes the similarity (e.g., Multiset Jaccard Index) between samples of the same biological condition [14].

Table 1: Impact of Increasing Dropout Rates on scRNA-seq Clustering

| Metric | Impact of Low Dropouts | Impact of High Dropouts |
|---|---|---|
| Cluster Homogeneity | High (cells of same type cluster together) | Remains relatively high [8] |
| Cluster Stability | High (consistent cluster assignments) | Significantly decreases [8] |
| Sub-population Identification | Reliable | Becomes difficult and unreliable [8] |

Problem: Batch Effects in Multi-Batch Experiments

Symptoms: Samples or cells cluster by processing date, sequencing lane, or operator in PCA/UMAP plots, rather than by biological condition or cell type.

Solutions:

- Select an Appropriate Correction Algorithm: Choose a batch effect correction method suited to your data type and size. Popular and effective methods include:

  - Harmony: Uses PCA and iterative clustering to integrate cells across datasets [12].

  - ComBat-ref: A refinement of ComBat-seq that uses a negative binomial model and selects a low-dispersion reference batch for adjustment, improving sensitivity in differential expression analysis [11].

  - Seurat CCA/MNN: Uses canonical correlation analysis and mutual nearest neighbors to find cross-dataset "anchors" for correction [12].

  - Scanorama: Efficiently integrates datasets by finding mutual nearest neighbors in reduced dimensional spaces [12].

- Always Validate Correction: After applying a method, re-inspect your PCA/UMAP plots. Cells from the same cell type but different batches should now mix well. Use quantitative metrics (e.g., kBET, LISI) to confirm improved integration [12].

- Check for Overcorrection: Verify that known biological differences and cell-type-specific markers are retained after correction [12].

Table 2: Comparison of Common Batch Effect Correction Methods

| Method | Underlying Model/Technique | Key Strength | Output |
|---|---|---|---|
| ComBat-ref [11] | Negative Binomial GLM; reference batch | High power for DE analysis; preserves count data | Corrected count matrix |
| Harmony [12] | PCA + Iterative Clustering | Fast, good for large datasets; avoids overcorrection | Integrated embedding |
| Seurat CCA/MNN [12] | Canonical Correlation Analysis + Mutual Nearest Neighbors | Robust for diverse cell types | Integrated embedding or matrix |
| Scanorama [12] | Mutual Nearest Neighbors in PCA space | Efficient for very large datasets | Corrected embedding or matrix |

Experimental Protocols & Methodologies

Protocol 1: Co-occurrence Clustering on Binarized scRNA-seq Data

This protocol identifies cell types based on the pattern of gene dropouts, as described by Qiu [9].

1. Input: Raw UMI count matrix from a sparse scRNA-seq dataset.

2. Binarization: Convert the count matrix to a binary matrix. All non-zero counts are set to 1, representing gene detection.

3. Gene-Gene Graph Construction: For all cells in a cluster, compute a co-occurrence measure (e.g., Jaccard index) for each pair of genes, assessing if they are frequently detected together.

4. Identify Gene Pathways: Use community detection (e.g., the Louvain algorithm) on the gene-gene graph to partition genes into clusters ("pathways") that exhibit significant co-detection.

5. Calculate Pathway Activity: For each cell, calculate the percentage of detected genes within each identified gene pathway. This creates a low-dimensional "pathway activity" representation of the cells.

6. Cell-Cell Graph and Clustering: Build a cell-cell graph using distances in the pathway activity space. Apply community detection to this graph to partition cells into clusters.

7. Iterate: Repeat steps 3-6 hierarchically on each new cell cluster to identify finer sub-populations until no further subdivisions are statistically supported.
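Two of the numerical steps above, the gene-gene co-occurrence measure and the pathway activity score, can be sketched with NumPy. Community detection (e.g., Louvain) is not implemented here; the gene sets passed to `pathway_activity` stand in for its output. Function names and toy data are hypothetical.

```python
import numpy as np

def gene_jaccard(binary):
    """Jaccard index of co-detection for every pair of genes (columns)."""
    B = np.asarray(binary, dtype=float)
    both = B.T @ B                                  # cells where both genes are detected
    detected = B.sum(axis=0)
    either = detected[:, None] + detected[None, :] - both
    with np.errstate(divide="ignore", invalid="ignore"):
        return np.where(either > 0, both / either, 0.0)

def pathway_activity(binary, gene_sets):
    """Fraction of each gene set detected in each cell (cells x pathways)."""
    B = np.asarray(binary, dtype=float)
    return np.column_stack([B[:, genes].mean(axis=1) for genes in gene_sets])

# Toy binary matrix: 4 cells x 3 genes.
toy_binary = np.array([[1, 1, 0],
                       [1, 1, 0],
                       [0, 0, 1],
                       [0, 1, 1]])

jaccard = gene_jaccard(toy_binary)
# Hypothetical result of community detection: genes 0 and 1 form one pathway.
activity = pathway_activity(toy_binary, gene_sets=[[0, 1], [2]])
print(np.round(jaccard, 3))
print(activity)
```

In a full implementation the Jaccard matrix would be thresholded into a graph before community detection, and the activity matrix would feed the cell-cell graph of step 6.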

Protocol 2: Benchmarking scRNA-seq Normalization Methods for Noise Quantification

This protocol outlines steps to evaluate different normalization algorithms for their accuracy in quantifying biological noise, based on Khetan et al. [15].

- Experimental Perturbation: Treat a cell population (e.g., mESCs) with a noise-enhancer molecule like IdU and a control (DMSO).

- scRNA-seq: Perform deep-coverage scRNA-seq on both treated and control cells.

- Multiple Normalizations: Process the raw count data through several common normalization algorithms (e.g., SCTransform, scran, Linnorm, BASiCS, a simple "raw" normalization).

- Noise Metric Calculation: For each gene in each normalized dataset, calculate a noise metric (e.g., squared coefficient of variation, CV²; or Fano Factor σ²/μ).

- Compare Noise Amplification: Assess the percentage of genes showing increased noise (ΔFano > 1 or ΔCV² > 1) under IdU treatment versus control for each method.

- smFISH Validation: Validate the findings for a panel of representative genes using single-molecule RNA FISH (smFISH), the gold standard for absolute mRNA quantification.
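The noise-metric and comparison steps can be sketched as below. "Increased noise" is interpreted here as the treated metric exceeding the control metric for a gene, which is an assumption about how the Δ criterion is applied. The simulated control and treated matrices are hypothetical and share the same mean, mimicking homeostatic noise amplification.

```python
import numpy as np

def noise_metrics(expr):
    """Per-gene squared coefficient of variation and Fano factor."""
    mu = expr.mean(axis=0)
    var = expr.var(axis=0, ddof=1)
    return var / mu**2, var / mu

def fraction_with_increased_noise(control, treated):
    """Share of genes whose CV^2 or Fano factor is higher in treated cells."""
    cv2_c, fano_c = noise_metrics(control)
    cv2_t, fano_t = noise_metrics(treated)
    return {"cv2": float(np.mean(cv2_t > cv2_c)),
            "fano": float(np.mean(fano_t > fano_c))}

rng = np.random.default_rng(0)
n_cells, n_genes = 1000, 50
# Control: Poisson counts with mean 10 (Fano factor close to 1).
control = rng.poisson(10.0, size=(n_cells, n_genes))
# Treated: same mean, but the rate itself varies between cells (overdispersed).
treated = rng.poisson(rng.gamma(5.0, 2.0, size=(n_cells, n_genes)))

result = fraction_with_increased_noise(control, treated)
mean_shift = abs(treated.mean() - control.mean())
print(result, f"mean shift: {mean_shift:.2f}")
```

Running the same function on each normalized version of a dataset gives the per-algorithm percentages that the protocol compares.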

The Scientist's Toolkit: Key Research Reagents & Materials

Table 3: Essential Reagents and Tools for Managing Technical Noise

| Item | Function & Utility |
|---|---|
| UMIs (Unique Molecular Identifiers) | Short random barcodes attached to each mRNA molecule during library prep. They allow precise quantification by correcting for PCR amplification bias, enabling more accurate distinction of technical noise from biological variation [10]. |
| ERCC Spike-in Controls | Synthetic, pre-defined RNA transcripts added to each cell's lysate in known quantities. They are used to trace technical variability, model amplification efficiency, and accurately estimate gene-specific capture rates and dropout probabilities [10]. |
| Noise-Enhancer Molecules (e.g., IdU) | Small molecules that orthogonally amplify transcriptional noise without altering mean expression levels. They serve as a positive control and tool for benchmarking the performance of scRNA-seq pipelines in quantifying transcriptional noise [15]. |
| Validated Batch Effect Correction Software (e.g., Harmony, ComBat-ref) | Computational tools specifically designed to remove non-biological variation from multi-batch datasets. Their use is critical for integrating data from different experiments or platforms reliably [11] [12]. |
| Binary Analysis Algorithms (e.g., scBFA, Co-occurrence Clustering) | Specialized computational methods that analyze binarized (0/1) expression data. They are highly efficient and effective for clustering and visualizing very large, sparse scRNA-seq datasets [9] [4]. |

The Impact of Stochastic Transcription on Data Interpretation

Technical Support Center

Troubleshooting Guides

Guide 1: Addressing High Cell-to-Cell Variability in Embryo RNA-seq Data

Problem: High observed variability in gene expression across cells in a developing embryo. Is this biological noise (genuine stochastic transcription) or technical noise?

Investigation Steps:

- Assess Gene Expression Level: Technical noise disproportionately affects lowly and moderately expressed genes. For genes with average counts below the 20th percentile in your data, a larger fraction of the observed variance is likely technical [2].

- Utilize Spike-In Controls: Use data from external RNA spike-ins (e.g., ERCC controls) added to your lysis buffer. Since these are added at the same quantity to each cell, their variance is purely technical. Fit a generative model to quantify the expected technical noise across the expression dynamic range [2] [16].

- Decompose the Variance: Use a statistical model to decompose the total variance of each endogenous gene into biological and technical components. The technical component is inferred from the spike-in data [2].

- Validate with an Independent Method: If possible, validate findings for a subset of genes using single-molecule RNA FISH (smFISH), which is considered a gold standard for absolute mRNA quantification with high sensitivity [15].

Solution:

- If technical noise explains most of the variance, apply a noise-reduction method. For example, a Gamma Regression Model (GRM) trained on spike-in data can be used to compute de-noised gene expression concentrations from expression estimates (FPKM/TPM), significantly reducing technical noise [16].

- If biological noise appears significant, interpret it cautiously: the generative model in [2] predicted that only about 17.8% of stochastic allelic expression patterns are genuinely biological, with technical effects dominating for lowly and moderately expressed genes [2].

Guide 2: scRNA-seq Analysis Shows Noise Amplification, but You Suspect Algorithmic Bias

Problem: Your analysis of a scRNA-seq dataset, perhaps after a perturbation like IdU treatment, suggests widespread noise amplification. You are concerned that the scRNA-seq analysis pipeline itself may be underestimating or misrepresenting the true biological effect.

Investigation Steps:

- Benchmark Normalization Methods: Different normalization algorithms (e.g., SCTransform, scran, BASiCS) have varying sensitivities and can report different proportions of genes with amplified noise, even on the same dataset [15].

- Compare to a Gold Standard: For a panel of representative genes spanning different expression levels, perform smFISH. Directly compare the fold-change in noise (e.g., Fano factor) measured by smFISH to that reported by the scRNA-seq algorithms [15].

- Check for Homeostatic Changes: A true "noise enhancer" perturbation amplifies noise without systematically altering mean expression levels. Verify that the mean expression for most genes is unchanged across algorithms [15].

Solution:

- Studies show that most scRNA-seq algorithms systematically underestimate the fold change in noise amplification compared to smFISH. If your results show a strong effect with scRNA-seq, the true biological effect might be even larger [15].

- Consider using a model-based approach like Monod, which fits biophysical models of transcription to nascent and mature RNA counts present in many scRNA-seq datasets. This provides a more biophysically interpretable framework that minimizes reliance on opaque normalization techniques [17].

Guide 3: Distinguishing Continuous Cell States from Technical Artifacts in Developmental Trajectories

Problem: When analyzing embryonic development, it is difficult to determine if a continuous spread of cells in a low-dimensional embedding (e.g., UMAP/t-SNE) represents a genuine differentiation trajectory or is an artifact of technical noise and data sparsity.

Investigation Steps:

- Apply Denoising: Apply a denoising method like GRM to your raw count data. Then, re-cluster and re-embed the de-noised data. Genuine biological trajectories should become more distinct and align better with known developmental stages [16].

- Leverage Structured Data: If available, use datasets with temporal or spatial information. These provide constraints that help resolve identifiability problems common in "snapshot" data [18].

- Use Topology-Preserving Maps: Employ analysis tools like PAGA that generate structure-rich topologies of cell types and states. These can more robustly represent continuous trajectories and transitions between discrete types, helping to distinguish true structure from noise [19].

Solution:

- After de-noising, if cells from different embryonic stages (e.g., E14.5, E16.5, E18.5) form distinct clusters that reflect the known developmental hierarchy, the observed continuum is likely biologically real [16].

- Adopt a mechanistic inference approach that fits stochastic models of gene expression to the data, which can explicitly reveal the transcriptional dynamics underlying cell state transitions [18] [17].

Frequently Asked Questions (FAQs)

FAQ 1: What is the most reliable method to quantify technical noise in my scRNA-seq experiment? The most robust method involves using external RNA spike-ins (e.g., ERCC molecules). These are synthetic RNAs added at known, constant concentrations to each cell's lysate. Because their true expression level is known and identical across cells, any variability observed in their measurements is technical noise. This information can be used to build a cell-specific model of technical noise that can be applied to endogenous genes [2] [16].

FAQ 2: My research focuses on stochastic allelic expression in early embryos. How much of what I observe is real? A study applying a rigorous generative model to single-cell data demonstrated that technical noise can explain the majority of observed stochastic allelic expression, particularly for lowly and moderately expressed genes. The model predicted that only about 17.8% of such patterns were attributable to genuine biological noise. It is crucial to model technical noise with spike-ins before making biological conclusions about allelic expression stochasticity [2].

FAQ 3: Why do I get different results for noise amplification when I use different scRNA-seq analysis algorithms? Different normalization and analysis algorithms (SCTransform, scran, BASiCS, etc.) are designed with different statistical assumptions and are sensitive to different aspects of the data. They can disagree on the exact proportion of genes showing significant noise changes. Therefore, it is a best practice to benchmark several algorithms and, where possible, validate key findings with an orthogonal method like smFISH [15].

FAQ 4: What is a "noise-enhancer" molecule and how can I use it in my research? A noise-enhancer molecule, such as 5′-iodo-2′-deoxyuridine (IdU), is a perturbation that orthogonally amplifies transcriptional noise without altering the mean expression level of most genes. This property, known as homeostatic noise amplification, makes it a powerful tool for probing the physiological impacts of pure expression noise across the transcriptome [15].

FAQ 5: How can I move beyond descriptive analysis to understand the mechanism of stochastic transcription? Instead of relying solely on descriptive clustering, consider model-based analysis. Tools like the Monod package allow you to fit biophysical models of stochastic transcription (e.g., the two-state or "telegraph" model) directly to your scRNA-seq data. This allows you to infer mechanistic parameters, such as transcription and switching rates, providing a more quantitative and interpretable understanding of gene regulation [17] [20].

Data Presentation

Table 1: Quantifying Technical vs. Biological Noise in scRNA-seq Data

Table based on an analysis of mouse embryonic stem cells using a generative model and ERCC spike-ins [2].

| Gene Expression Percentile | Average Proportion of Variance Attributable to Biological Variability |
|---|---|
| Lowly expressed (<20th) | 11.9% |
| Highly expressed (>80th) | 55.4% |
| **Specific Case: Stochastic Allelic Expression** | **Proportion Attributable to Biological Noise** |
| All genes (model prediction) | 17.8% |

Table 2: Performance Comparison of scRNA-seq Noise Quantification Algorithms

Summary of algorithm performance in detecting genome-wide noise amplification after IdU treatment in mESCs, as compared to smFISH validation [15].

| Algorithm | Key Principle | % of Genes with Increased Noise (CV²) | Systematic Bias vs. smFISH |

|---|---|---|---|

| SCTransform | Negative binomial model with regularization and variance stabilization | ~88% | Underestimates fold-change |

| scran | Pool-based size factor estimation for normalization | ~82% | Underestimates fold-change |

| BASiCS | Hierarchical Bayesian model to separate technical and biological noise | ~85% | Underestimates fold-change |

| Linnorm | Normalization and variance stabilization using homogeneously expressed genes | ~80% | Underestimates fold-change |

| SCnorm | Quantile regression for gene group-specific normalization | ~73% | Underestimates fold-change |

| smFISH (Gold Standard) | Direct RNA counting via fluorescence microscopy | >90% (for tested genes) | N/A |

Experimental Protocols

Protocol 1: Using ERCC Spike-Ins and a Gamma Regression Model for Noise Reduction

Purpose: To explicitly calculate de-noised gene expression levels from scRNA-seq data, reducing technical noise [16].

Materials:

- scRNA-seq library prepared with added ERCC spike-in mix.

- Software: R and the GRM script (formerly available at http://wanglab.ucsd.edu/star/GRM).

Methodology:

- Data Preparation: Obtain expression values (e.g., FPKM or TPM) for both endogenous genes and ERCC spike-ins for each single cell.

- Log Transformation: Perform log transformation of the data: Let \( x = \log(\mathrm{FPKM}) \) and \( y = \log(\text{known concentration}) \) for each ERCC spike-in.

- Model Fitting: For each cell, fit a Gamma Regression Model (GRM) between the log-transformed FPKM and the log-transformed known concentration of the ERCCs. The model is: \( y \sim \mathrm{Gamma}(y; \mu(x), \phi) \), where \( \mu(x) = \sum_{i=0}^{n} \beta_i x^i \) is a polynomial function.

- Parameter Estimation: Use maximum likelihood estimation to determine the parameters (\( \beta_i \)) and the dispersion (\( \phi \)). The optimal polynomial degree \( n \) is found by empirical search (\( n = 1 \) to \( 4 \)), selecting the model that minimizes the average technical noise of the ERCCs.

- De-noising Expression: For each endogenous gene in the cell, input its \( x_{\mathrm{gene}} = \log(\mathrm{FPKM}) \) into the trained model. The de-noised, true expression level is calculated as \( \hat{y}_{\mathrm{gene}} = E(y_{\mathrm{gene}}) = \mu(x_{\mathrm{gene}}) \).

Validation: Apply hierarchical clustering or PCA to the de-noised data. Successful noise reduction should yield clearer separation of biological groups (e.g., embryonic developmental stages) that align with known biology [16].
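
The methodology above can be sketched in Python as follows. This is not the original GRM script: it is a minimal re-implementation of the idea (a gamma likelihood whose mean is a polynomial in x, fitted by maximum likelihood), written with NumPy and SciPy. The function names, the fixed polynomial degree, the starting values, and the simulated spike-in data are all assumptions made for illustration. The sketch also assumes y is strictly positive, and it omits the search over polynomial degrees.

```python
import numpy as np
from scipy.optimize import minimize
from scipy.stats import gamma

def fit_gamma_polynomial(x, y, degree=1):
    """ML fit of y ~ Gamma(mean=mu(x), dispersion=phi), mu(x) = sum_i beta_i * x^i."""
    X = np.vander(x, degree + 1, increasing=True)   # columns: x^0 ... x^degree
    start = np.linalg.lstsq(X, y, rcond=None)[0]    # least-squares starting point

    def negative_log_likelihood(params):
        beta, phi = params[:-1], np.exp(params[-1])  # phi kept positive via log scale
        mu = X @ beta
        if np.any(mu <= 0):                          # gamma mean must be positive
            return np.inf
        # Gamma with mean mu and dispersion phi: shape = 1/phi, scale = mu * phi
        return -gamma.logpdf(y, a=1.0 / phi, scale=mu * phi).sum()

    result = minimize(negative_log_likelihood, np.append(start, np.log(0.1)),
                      method="Nelder-Mead", options={"maxiter": 5000})
    return result.x[:-1], float(np.exp(result.x[-1]))

def denoise(x_gene, beta):
    """De-noised expression: the fitted mean mu(x_gene)."""
    return np.polynomial.polynomial.polyval(x_gene, beta)

# Simulated spike-ins for one cell (assumption): true mu(x) = 2 + x, phi = 0.01.
rng = np.random.default_rng(0)
x_ercc = rng.uniform(1.0, 10.0, size=60)
mu_true = 2.0 + 1.0 * x_ercc
y_ercc = rng.gamma(shape=1.0 / 0.01, scale=mu_true * 0.01)

beta, phi = fit_gamma_polynomial(x_ercc, y_ercc, degree=1)
denoised = denoise(np.array([2.0, 5.0, 8.0]), beta)   # three endogenous genes
print("beta:", np.round(beta, 2), "phi:", round(phi, 4))
```

In practice the fit would be repeated per cell, and the degree would be chosen as described in the Parameter Estimation step.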

Protocol 2: Fitting a Biophysical Model of Transcription with Monod

Purpose: To infer mechanistic parameters of stochastic transcription from standard scRNA-seq data [17].

Materials:

- scRNA-seq data quantified with a tool that provides nascent (unprocessed) and mature (spliced) RNA counts (e.g., kallisto | bustools).

- Python and the Monod package (available via pip).

Methodology:

- Data Quantification: Pre-process your raw sequencing data to obtain count matrices for nascent and mature RNA for each gene and each cell.

- Model Specification: Monod incorporates a stochastic model of gene expression, such as the two-state model. This model describes genes as switching between inactive and active states, with transcription occurring in bursts from the active state.

- Model Fitting: Provide the nascent and mature RNA count matrices to Monod. The software will fit the model to the data, leveraging the variation in these two modalities.

- Parameter Inference: Monod returns estimated parameters for each gene, which may include:

- Activation rate (\( k_{\mathrm{on}} \)): The rate at which the gene switches from inactive to active.

- Inactivation rate (\( k_{\mathrm{off}} \)): The rate at which the gene switches from active to inactive.

- Transcription rate (\( k_{\mathrm{transcribe}} \)): The rate of RNA production when the gene is active.

- Splicing rate (\( k_{\mathrm{splice}} \)): The rate at which nascent RNA is processed into mature RNA.

- Analysis: Use the inferred parameters to compare transcriptional mechanisms across genes, cell types, or in response to perturbations. This moves beyond simple mean expression to understand the dynamic regulation of genes.
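
To give a feel for what such parameters mean, the sketch below uses a much simpler moment-based estimate rather than the Monod API. In the bursty limit of the two-state model (short, intense active periods), steady-state RNA counts follow a negative binomial distribution whose Fano factor equals 1 plus the mean burst size, and whose mean equals burst size times burst frequency (in units of the degradation rate). The function name and simulated data are assumptions. Unlike the joint nascent/mature fit described above, this shortcut uses a single count matrix and ignores technical noise.

```python
import numpy as np

def burst_moments(counts):
    """Moment estimates of burst size and frequency from a genes x cells matrix.

    Assumes the bursty limit of the two-state model and non-zero gene means.
    """
    mean = counts.mean(axis=1)
    var = counts.var(axis=1, ddof=1)
    fano = var / mean
    burst_size = np.clip(fano - 1.0, 0.0, None)        # Fano = 1 + burst size
    burst_freq = np.divide(mean, burst_size,            # mean = size * frequency
                           out=np.full(mean.shape, np.nan),
                           where=burst_size > 0)
    return burst_size, burst_freq

# Simulated gene (assumption): burst size 4, burst frequency 2.5, 5000 cells.
# NumPy's negative_binomial(n, p) has mean n * (1 - p) / p, so p = 1 / (1 + size).
rng = np.random.default_rng(0)
counts = rng.negative_binomial(2.5, 1.0 / (1.0 + 4.0), size=(1, 5000))

burst_size, burst_freq = burst_moments(counts)
print("burst size:", burst_size.round(2), "burst frequency:", burst_freq.round(2))
```

Genes with a Fano factor at or below 1 get a burst size of zero and an undefined frequency, which is a useful reminder that moment estimates break down where a full likelihood fit would still return an answer.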

Visualization

Diagram 1: Technical Noise Identification Workflow

Diagram 2: Model-Based Analysis Pipeline (Monod)

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Item | Type | Function / Application |

|---|---|---|

| ERCC Spike-In Mix | Research Reagent | A set of synthetic RNA controls at known concentrations used to model and quantify technical noise in scRNA-seq experiments [2] [16]. |

| Unique Molecular Identifiers (UMIs) | Molecular Barcode | Short random nucleotide sequences added to each molecule during library prep to correct for amplification bias and enable absolute molecule counting [2]. |

| IdU (5′-Iodo-2′-deoxyuridine) | Small Molecule Perturbation | A "noise-enhancer" molecule used to orthogonally amplify transcriptional noise across the transcriptome without altering mean expression, useful for studying noise physiology [15]. |

| smFISH Probe Sets | Imaging Reagent | Fluorescently labeled DNA probes used for single-molecule RNA fluorescence in situ hybridization, the gold standard for validating mRNA abundance and localization [15]. |

| Monod Python Package | Computational Tool | A software package for fitting biophysical models of stochastic transcription to scRNA-seq data to infer mechanistic parameters and minimize opaque normalization [17]. |

| BASiCS R Package | Computational Tool | A Bayesian statistical tool that uses spike-in information to decompose the total variability of gene expression into technical and biological components [15]. |

Frequently Asked Questions (FAQs)

FAQ 1: What makes early human embryonic material so scarce for research? The scarcity stems from a combination of ethical regulations and biological reality. A significant gap exists for embryos between approximately week 2 and week 4 of development. Material from early pregnancy terminations (a key source for later stages) is not available this early, and the culture of human embryos beyond day 14 is prohibited in most jurisdictions [21]. Furthermore, research relies on donated embryos from in vitro fertilization (IVF) processes, where embryos of the highest quality are typically prioritized for reproductive purposes, leaving those of lesser quality for research [21].

FAQ 2: What are the major technical sources of noise in single-cell embryo RNA-seq data? Technical noise arises from the entire data generation process. Key sources include:

- Stochastic Dropout: The minute amount of mRNA in a single cell is prone to stochastic loss during cell lysis, reverse transcription, and amplification [2].

- Amplification Bias: The necessary amplification of cDNA can introduce substantial technical noise, especially for lowly expressed genes [2].

- Batch Effects: Systematic non-biological variations are introduced when samples are processed in different batches, sequencing runs, or by different labs [6] [11]. These effects can be on a similar scale as the biological differences of interest, obscuring true results.

FAQ 3: How can I benchmark my embryo model or dataset against a true human embryo? An integrated human embryo scRNA-seq reference dataset is now available. This tool combines data from six published studies, covering development from the zygote to the gastrula stage. You can project your query dataset onto this reference to annotate cell identities and assess fidelity. Using a universal reference is crucial, as benchmarking against irrelevant or incomplete data carries a high risk of misannotation [22].

FAQ 4: Are there methods to correct for batch effects in RNA-seq count data?

Yes, several methods exist. ComBat-seq uses a negative binomial model to adjust batch effects while preserving the integer nature of count data, making it suitable for downstream differential expression analysis [11]. Recent refinements like ComBat-ref build on this by selecting the batch with the smallest dispersion as a reference and adjusting other batches towards it, reportedly improving performance [11].
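
The reference-selection idea can be illustrated with a short Python sketch. It is not the ComBat-ref implementation: it estimates a per-gene negative binomial dispersion by the method of moments (variance = mean + dispersion × mean²), summarizes each batch by the median, and picks the batch with the smallest value. The function names, the use of the median, and the simulated batches are assumptions, and the published method may estimate dispersion differently.

```python
import numpy as np

def median_dispersion(counts):
    """Median method-of-moments NB dispersion for a genes x cells count matrix."""
    mu = counts.mean(axis=1)
    var = counts.var(axis=1, ddof=1)
    expressed = mu > 0
    phi = (var[expressed] - mu[expressed]) / mu[expressed] ** 2
    return float(np.median(np.clip(phi, 0.0, None)))

def pick_reference_batch(batches):
    """Return the name of the batch with the smallest median dispersion."""
    dispersions = {name: median_dispersion(m) for name, m in batches.items()}
    return min(dispersions, key=dispersions.get), dispersions

def simulate_batch(rng, phi, mu=50.0, shape=(200, 100)):
    """Simulated NB counts with mean mu and dispersion phi (illustrative only)."""
    n = 1.0 / phi
    return rng.negative_binomial(n, n / (n + mu), size=shape)

rng = np.random.default_rng(1)
batches = {
    "A": simulate_batch(rng, 0.1),
    "B": simulate_batch(rng, 0.5),
    "C": simulate_batch(rng, 1.0),
}
reference, dispersions = pick_reference_batch(batches)
print("reference batch:", reference)
```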

FAQ 5: How much of the variability in single-cell data is genuine biological noise? This is gene-dependent. One study using a generative statistical model and external RNA spike-ins found that for lowly expressed genes, only about 11.9% of the variance in expression across cells could be attributed to biological variability. In contrast, for highly expressed genes, biological variability accounted for an average of 55.4% of the variance [2]. This highlights that a large fraction of observed variability, particularly for low-abundance transcripts, can be technical in origin.

Troubleshooting Guides

Problem 1: High Technical Noise and Dropouts in scRNA-seq Data

Issue: Your single-cell data from embryonic material is excessively sparse, with many genes not detected in many cells, making biological interpretation difficult.

Solution: Implement a noise reduction strategy that distinguishes technical artifacts from biological signals.

- Step 1: Characterize the Noise. Use external RNA spike-in controls added to the cell lysis buffer. These spike-ins are not subject to biological variation within the cells and thus provide a pure measure of technical noise across the dynamic range of expression [2].

- Step 2: Apply a Dedicated Noise-Reduction Algorithm. Utilize computational tools designed to model and reduce this noise.

- RECODE/iRECODE: This method uses high-dimensional statistics to model technical noise and can simultaneously reduce technical noise and batch effects while preserving the full dimensionality of the data [6].

- Generative Modeling: Models that incorporate cell-specific capture efficiency and amplification noise can decompose total variance into technical and biological components [2].

- Step 3: Validate with Gold-Standard Methods. Whenever possible, validate findings for key genes using an orthogonal method like single-molecule RNA fluorescence in situ hybridization (smFISH), which has high sensitivity and is considered a gold standard for mRNA quantification [15] [2].

Essential Reagents:

- ERCC Spike-In Mix: A defined mix of exogenous RNA transcripts used to model technical noise.

Problem 2: Batch Effects Across Different Experimental Runs

Issue: When integrating data from multiple embryo samples processed in different batches, cells cluster by batch instead of by biological condition or developmental stage.

Solution: Apply a robust batch-effect correction method before any integrative analysis.

- Step 1: Preprocessing. Ensure all datasets are processed through the same alignment and gene quantification pipeline using the same genome reference and annotation to minimize initial technical discrepancies [22].

- Step 2: Select a Correction Method. Choose a method appropriate for RNA-seq count data.

- Using a Reference Batch (ComBat-ref): This method selects the batch with the smallest dispersion as a reference and adjusts all other batches towards it, preserving the count data of the reference batch. It has been shown to maintain high sensitivity in differential expression analysis [11].

- Mutual Nearest Neighbors (MNN): Methods like fastMNN identify pairs of cells across batches that are in a similar biological state and use them to anchor the correction, effectively merging datasets [22].

- Step 3: Evaluate Correction. After correction, check that cells from different batches but similar biological states (e.g., the same cell lineage) mix well in a low-dimensional projection like UMAP, while distinct cell types remain separable [6] [22].

Problem 3: Authenticating Stem Cell-Based Embryo Models

Issue: You have generated a stem cell-based embryo model and need to objectively evaluate its fidelity to in vivo human development.

Solution: Benchmark your model's transcriptome against a comprehensive, integrated reference of real human embryogenesis.

- Step 1: Access the Reference Tool. Utilize the published integrated human embryo reference, which spans the zygote to gastrula stages [22].

- Step 2: Project Your Data. Use the provided prediction tool to project your model's scRNA-seq data onto the reference UMAP.

- Step 3: Analyze Cell Identity and Patterning. Assess the co-localization of your cells with the annotated cell types (e.g., epiblast, hypoblast, trophoblast, primitive streak) in the reference. A high-fidelity model will show cells falling within the appropriate in vivo clusters with similar transcriptional profiles, rather than forming separate, off-target clusters [22].

Quantitative Data in Embryo Research

Table 1: Key Sources of Embryonic Material and Associated Challenges

| Material Source | Developmental Stage Coverage | Key Challenges & Limitations |

|---|---|---|

| Donated IVF Embryos | Pre-implantation (Week 1) | "Lower quality" embryos available for research [21]; significant regulatory and logistical hurdles [21] |

| Biobanked Fetal Tissues | Post-implantation (Weeks 4-20) | Limited supply and sustainable access [21]; static, archived samples [21] |

| Human Embryo Reference Atlas | Zygote to Gastrula (CS7) | Integrated data from 3,304 cells across 6 studies [22]; serves as a computational benchmark, not physical material [22] |

Table 2: Performance Comparison of scRNA-seq Noise Quantification Methods

| Method / Finding | Key Principle | Performance Insight |

|---|---|---|

| Generative Model + Spike-Ins [2] | Decomposes variance using external RNA controls. | For lowly expressed genes, only ~12% of variance is biological; for high-expression genes, it rises to ~55% [2]. |

| Multiple Algorithms (SCTransform, scran, etc.) [15] | Different normalization and modeling approaches. | All algorithms systematically underestimate the true fold-change in biological noise compared to smFISH validation [15]. |

| IdU Perturbation [15] | Uses a small molecule to orthogonally amplify transcriptional noise. | Confirmed that most scRNA-seq algorithms are appropriate for detecting noise changes, validating their use for perturbation studies [15]. |

Experimental Protocols & Workflows

Protocol 1: Creating an Integrated Embryo Transcriptome Reference

This methodology is derived from the creation of a comprehensive human embryo reference tool [22].

- Data Collection: Gather multiple publicly available scRNA-seq datasets from human embryos across desired developmental stages.

- Standardized Reprocessing: Re-process all raw data through a uniform pipeline. This includes:

- Alignment: Map reads to a consistent genome reference (e.g., GRCh38) using a standard aligner like STAR.

- Quantification: Generate gene counts using the same annotation file for all datasets.

- Batch Correction and Integration: Apply an integration algorithm such as fastMNN to correct for technical batch effects between the different studies and embed all cells into a common space.

- Annotation and Validation: Annotate cell lineages based on known marker genes and contrast these annotations with independent human and non-human primate datasets for validation.

- Tool Deployment: Build a user-friendly projection tool (e.g., using UMAP) that allows researchers to map new query datasets onto the reference for annotation.

Diagram Title: Workflow for Creating an Integrated Embryo Reference

Protocol 2: Differentiating Biological from Technical Noise

This protocol outlines the use of spike-in controls to quantify technical noise [2].

- Spike-In Addition: During single-cell library preparation, add a known quantity of external RNA spike-in molecules (e.g., ERCC spike-ins) to the cell lysis buffer of each individual cell.

- Sequencing and Data Generation: Sequence the libraries and obtain count matrices for both endogenous genes and spike-in transcripts.

- Generative Modeling: Fit a probabilistic model that uses the spike-in data to estimate cell-specific parameters, such as capture efficiency and amplification noise, across the dynamic range of expression.

- Variance Decomposition: For each endogenous gene, subtract the estimated technical variance (learned from the spike-ins) from the total observed variance to calculate the biological variance component.
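
The subtraction in the last step can be sketched as follows. This is a deliberately simplified stand-in for the generative model in [2]: it fits a technical trend of the form CV² = a1/mean + a0 to the spike-ins by least squares, then subtracts the implied technical variance from each gene's total variance. The function names, the trend form, and the simulated counts are assumptions. Real data would first need normalization for cell-specific capture efficiency.

```python
import numpy as np

def fit_technical_cv2(spike_counts):
    """Fit CV^2 = a1 / mean + a0 to spike-ins (rows = spike-ins, columns = cells)."""
    mean = spike_counts.mean(axis=1)
    cv2 = spike_counts.var(axis=1, ddof=1) / mean**2
    design = np.column_stack([1.0 / mean, np.ones_like(mean)])
    a1, a0 = np.linalg.lstsq(design, cv2, rcond=None)[0]
    return a1, a0

def biological_fraction(gene_counts, a1, a0):
    """Fraction of each gene's variance left after removing the technical trend."""
    mean = gene_counts.mean(axis=1)
    total_var = gene_counts.var(axis=1, ddof=1)
    technical_var = (a1 / mean + a0) * mean**2
    biological_var = np.clip(total_var - technical_var, 0.0, None)
    return biological_var / total_var

# Simulated data (assumption): spike-ins carry Poisson sampling noise only.
rng = np.random.default_rng(0)
n_cells = 2000
spike_means = np.geomspace(5, 500, 30)
spikes = rng.poisson(spike_means[:, None], size=(30, n_cells))

quiet = rng.poisson(20, size=n_cells)                                  # technical noise only
noisy = rng.poisson(rng.gamma(shape=2.0, scale=10.0, size=n_cells))    # plus biological noise
genes = np.vstack([quiet, noisy])

a1, a0 = fit_technical_cv2(spikes)
fractions = biological_fraction(genes, a1, a0)
print("biological fraction (quiet, noisy):", fractions.round(2))
```

Clipping at zero reflects the fact that a gene whose total variance falls below the technical trend has no detectable biological component under this simple model.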

Diagram Title: Workflow for Quantifying Technical Noise with Spike-ins

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Tools for Embryo Transcriptomics

| Reagent / Tool | Function in Research | Key Consideration |

|---|---|---|

| ERCC Spike-In RNA [2] | Models technical noise and enables variance decomposition in scRNA-seq data. | Must be added to the lysis buffer to control for all technical steps except cell lysis inefficiency. |

| Unique Molecular Identifiers (UMIs) [2] | Tags individual mRNA molecules to correct for amplification bias and count absolute transcript numbers. | Greatly reduces technical noise from PCR amplification. |

| ComBat-ref / ComBat-seq [11] | Computational tool for batch effect correction of RNA-seq count data using a negative binomial model. | Preserves integer count data, making it suitable for downstream DE tools like edgeR and DESeq2. |

| Integrated Human Embryo Reference [22] | A universal transcriptomic roadmap for authenticating stem cell-based embryo models. | Critical for unbiased benchmarking; using an irrelevant reference risks cell type misannotation. |

| Endometrial Cell Co-culture Systems [21] | Provides maternal signaling cues to improve the physiological relevance of in vitro embryo cultures. | Helps recapitulate the implantation environment, a major challenge in embryo model research. |

Frequently Asked Questions (FAQs)

FAQ 1: What is the primary source of technical noise in my embryo RNA-seq data? Technical noise primarily arises from the stochastic dropout of transcripts during sample preparation and amplification biases. In single-cell RNA-seq protocols, the minute amount of mRNA from an individual cell must be amplified, leading to substantial technical noise. Major sources include stochastic RNA loss during cell lysis and reverse transcription, inefficiencies in amplification (PCR or in vitro transcription), and 3'-end bias. These factors contribute to a high number of "dropout" events, where a gene is expressed in the cell but not detected by sequencing [2] [23].

FAQ 2: How can I distinguish a genuine biological signal from technical noise? The most effective strategy is to use a generative statistical model calibrated with external RNA spike-ins. These spike-ins, added in the same quantity to each cell's lysate, allow you to model the expected technical noise across the entire dynamic range of gene expression. By decomposing the total variance of a gene's expression across cells into biological and technical components, you can subtract the technical variance estimated from the spike-ins from the total observed variance to isolate the biological variance [2].

FAQ 3: A large proportion of my data is zeros. Is this a problem? A high number of zeros (sparsity) is characteristic of single-cell RNA-seq data. However, it is crucial to recognize that these zeros are a mixture of true biological absence (the gene was not being transcribed) and technical dropouts (the gene was expressed but not detected). Sparsity increases analytical complexity, storage requirements, and processing time, and it can cause models to overfit or to overlook informative features. Techniques like unique molecular identifiers (UMIs) and careful modeling are essential to handle this sparsity correctly [24] [23] [25].

FAQ 4: My data shows strong batch effects. How did this happen and how can I fix it? Batch effects are a pervasive systematic error in high-throughput data. In scRNA-seq, they occur when cells from different biological groups or conditions are cultured, captured, or sequenced separately. This can be exacerbated by unbalanced experimental designs that are sometimes unavoidable with certain scRNA-seq protocols. To address this, tools like iRECODE have been developed to simultaneously reduce both technical noise (dropouts) and batch effects. iRECODE integrates batch correction within a denoised "essential space" of the data, effectively mitigating batch effects while preserving biological signals and improving computational efficiency [6].

FAQ 5: Are there specific metrics to quantify sparsity and noise in my dataset? Yes, key metrics include:

- Cell-specific Detection Rate: The proportion of genes detected (non-zero) in each cell. High variability in this rate across cells can indicate technical issues.

- Coefficient of Variation (CV): Helps gauge the dispersion of gene expression across cells.

- Variance Decomposition: Using models to attribute the total variance of each gene to technical and biological components.

- Trendline Analysis: Scaling and rank-ordering gene counts across samples within a group can help visualize and quantify dispersion. Genes with highly skewed, non-linear trendlines often indicate high variability that may warrant further investigation [26] [23].
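
The first two metrics in this list are straightforward to compute directly from a count matrix. The sketch below is a minimal NumPy version. The function name and the toy matrix are illustrative assumptions, and the matrix is taken to be genes × cells.

```python
import numpy as np

def sparsity_metrics(counts):
    """Detection rate per cell, overall sparsity, and CV per gene (genes x cells)."""
    detected = counts > 0
    detection_rate = detected.mean(axis=0)       # fraction of genes detected in each cell
    sparsity = 1.0 - detected.mean()             # fraction of zeros in the whole matrix
    mean = counts.mean(axis=1)
    sd = counts.std(axis=1, ddof=1)
    # CV is undefined for genes that are never detected, so leave those as NaN.
    cv = np.divide(sd, mean, out=np.full(mean.shape, np.nan), where=mean > 0)
    return detection_rate, sparsity, cv

# Toy matrix: 3 genes (rows) x 3 cells (columns).
counts = np.array([[0, 0, 0],
                   [2, 0, 4],
                   [4, 4, 4]])
detection_rate, sparsity, cv = sparsity_metrics(counts)
print(detection_rate, round(sparsity, 3), cv)
```

A wide spread in `detection_rate` across cells is the pattern the first bullet warns about, and it is worth plotting before any normalization.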

Troubleshooting Guides

Problem: High Technical Noise Masking Biological Variability

Symptoms:

- An unexpectedly high fraction of stochastic allele-specific expression.

- Poor concordance between your RNA-seq data and validation methods like smFISH, especially for lowly expressed genes.

- Overestimation of biological noise for genes with low to moderate expression.

Solutions:

- Use External RNA Spike-Ins: Spike-in molecules (e.g., from the ERCC) should be added to your cell lysate. They are not subject to biological variation and thus provide a direct measurement of technical noise across the expression dynamic range [2].

- Implement a Generative Noise Model: Employ a statistical model that uses the spike-in data to quantify technical noise. The model should account for:

- Stochastic dropout of transcripts.

- Shot noise (library sampling depth).

- Cell-to-cell differences in capture efficiency [2].

- Apply Advanced Noise-Reduction Tools: Use algorithms like RECODE or its upgraded version, iRECODE, which are based on high-dimensional statistics. RECODE models technical noise from the entire data generation process and reduces it using eigenvalue modification, effectively mitigating the "curse of dimensionality" inherent in single-cell data [6].

Experimental Protocol: Using Spike-Ins to Model Technical Noise

- Reagent: External RNA Control Consortium (ERCC) spike-in mix.

- Procedure: Add a defined quantity of the ERCC spike-in mix to the lysis buffer of each individual cell [2].

- Computational Analysis:

- Normalization: Normalize the raw sequenced spike-in transcripts by the estimated capture efficiency for each batch to remove batch effects.

- Model Fitting: Use a generative model to fit the observed mean-variance relationship of the spike-ins.

- Variance Decomposition: For each endogenous gene, subtract the technical variance (estimated from the spike-ins) from the total observed variance to estimate the biological variance [2].

Problem: Excessive Data Sparsity (Dropout Events)

Symptoms:

- A large fraction of genes in each cell report zero counts.

- The proportion of zeros varies substantially from cell to cell.

- Clustering or dimensionality reduction results are dominated by differences in the number of detected genes rather than biological state.

Solutions:

- Utilize Unique Molecular Identifiers (UMIs): During library preparation, use UMIs to label individual mRNA molecules. This allows for the correction of amplification bias and provides more accurate digital counts of transcript abundance. UMIs do not recover molecules that were never captured, but they ensure that non-zero counts reflect distinct molecules rather than amplification duplicates [2] [27].

- Employ Dimensionality Reduction: Apply techniques like Principal Component Analysis (PCA) to project cells onto a small number of dense components. Downstream steps such as clustering then operate on these components rather than on the sparse gene-level matrix, which can help mitigate the impact of sparsity [24] [25].

- Leverage Noise-Reduction/Imputation Methods: Tools like RECODE are explicitly designed to address the sparsity in single-cell data. By reducing technical noise, they effectively "fill in" dropout events, leading to clearer and more continuous expression patterns without compromising the high-dimensional nature of the data [6].
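
To make the UMI step concrete, the sketch below collapses reads into molecule counts by counting distinct UMIs per cell and gene. The function name and the read tuples are illustrative assumptions. Production pipelines additionally merge UMIs that differ only by sequencing errors, which this sketch does not attempt.

```python
from collections import defaultdict

def umi_counts(reads):
    """Count distinct UMIs per (cell, gene) from (cell_barcode, gene, umi) tuples."""
    molecules = defaultdict(set)
    for cell, gene, umi in reads:
        molecules[(cell, gene)].add(umi)     # duplicate reads of one molecule collapse
    return {key: len(umis) for key, umis in molecules.items()}

# Toy reads: the first molecule was amplified into three reads.
reads = [
    ("cell1", "POU5F1", "AACG"),
    ("cell1", "POU5F1", "AACG"),
    ("cell1", "POU5F1", "AACG"),
    ("cell1", "POU5F1", "TTGA"),
    ("cell2", "POU5F1", "AACG"),
    ("cell1", "NANOG", "CCAT"),
]
counts = umi_counts(reads)
print(counts)
```

Here four reads for POU5F1 in `cell1` become two molecules, which is the amplification-bias correction described in the first bullet.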

Problem: Batch Effects Confounding Biological Results

Symptoms:

- Cells cluster strongly by batch (e.g., date of preparation, sequencing lane) instead of by culture condition or cell type.

- Inability to integrate or compare datasets from different experimental batches.

Solutions:

- Plan a Balanced Design: Whenever possible, process cells from different biological conditions across all batches to avoid confounding.

- Use Integrated Correction Tools: Implement a tool like iRECODE, which performs simultaneous reduction of technical and batch noise. It integrates established batch-correction algorithms (like Harmony, MNN-correct, or Scanorama) within its noise-reduction framework, leading to improved cell-type mixing across batches without the need for prior dimensionality reduction that can lose gene-level information [6].
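
Before choosing a correction tool, it can help to check how badly batch and condition are confounded in the sample sheet. The sketch below lists conditions that were processed in only one batch, a situation in which no algorithm can cleanly separate the two effects. The function name and the example sample sheet are hypothetical.

```python
from collections import defaultdict

def confounded_conditions(samples):
    """Return conditions that appear in fewer than two batches.

    samples: iterable of (batch, condition) pairs, one per sample.
    """
    batches_per_condition = defaultdict(set)
    for batch, condition in samples:
        batches_per_condition[condition].add(batch)
    return sorted(c for c, b in batches_per_condition.items() if len(b) < 2)

# Hypothetical sample sheet: "8-cell" embryos were only processed in batch 2.
samples = [
    ("batch1", "zygote"), ("batch2", "zygote"),
    ("batch1", "morula"), ("batch2", "morula"),
    ("batch2", "8-cell"),
]
flagged = confounded_conditions(samples)
print("conditions confined to one batch:", flagged)
```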

Table 1: Attribution of Stochastic Allelic Expression in Single Cells [2]

| Source of Variation | Percentage Attributable | Notes |

|---|---|---|

| Technical Noise | ~82.2% | Explains the majority of observed stochastic allele-specific expression, particularly for lowly and moderately expressed genes. |

| Biological Noise | ~17.8% | Represents the genuine biological variation in allele-specific expression. |

Table 2: Biological Variance Explained Across Gene Expression Levels [2]

| Gene Expression Level | Average % of Variance Attributable to Biological Variability |

|---|---|

| Lowly Expressed Genes (<20th percentile) | 11.9% |

| Highly Expressed Genes (>80th percentile) | 55.4% |

The Scientist's Toolkit

Table 3: Key Research Reagents and Computational Tools

| Item | Function / Explanation |

|---|---|

| ERCC Spike-Ins | A set of synthetic RNA molecules used to model technical noise. Added in known quantities to cell lysates to calibrate and distinguish technical artifacts from biological signals [2]. |

| Unique Molecular Identifiers (UMIs) | Short random nucleotide sequences that label individual mRNA molecules before amplification. UMIs allow for accurate counting of original transcripts and correction for amplification bias [2] [27]. |

| 4-Thiouridine (4sU) | A nucleoside analog incorporated into newly synthesized RNA during a pulse-labeling period. Enables temporal resolution of transcription, allowing separation of "new" from "pre-existing" RNA in methods like NASC-seq2 [27]. |

| RECODE/iRECODE | A computational platform for technical noise and batch-effect reduction in single-cell data. It is parameter-free, preserves full-dimensional data, and is applicable to transcriptomic, epigenomic, and spatial data [6]. |

| Generative Statistical Model | A probabilistic model that represents the process generating scRNA-seq data, used to decompose total variance into technical and biological components [2]. |

Workflow and Relationship Visualizations

Diagram 1: Workflow for Technical Noise Identification and Reduction

Diagram 2: Relationship Between Data Issues and Solutions

A Toolkit for Denoising: From High-Dimensional Statistics to Deep Learning

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between RECODE and iRECODE?

RECODE is a high-dimensional statistical method specifically designed to reduce technical noise, such as the "dropout" effect where genes expressed in a cell are not detected during sequencing [28]. iRECODE (Integrative RECODE) is an enhanced version that simultaneously reduces both technical and batch noise with high accuracy and low computational cost [29] [28]. Batch noise refers to variations introduced by differences in experimental conditions, reagents, or sequencing equipment across datasets [28].

Q2: On what types of single-cell data can the RECODE platform be applied?

The RECODE platform is highly versatile. It has been successfully applied to:

- Single-cell RNA-sequencing (scRNA-seq), including data from Drop-seq, Smart-Seq, and 10x Genomics protocols [28].

- Single-cell Hi-C (scHi-C), where it reduces sparsity to uncover meaningful chromosomal interactions [29] [28].

- Spatial transcriptomics, clarifying signals and reducing sparsity across various platforms, species, and tissue types [29] [28].

Q3: What are the main advantages of using iRECODE for data integration?

iRECODE achieves superior cell-type mixing across batches while preserving each cell type's unique biological identity [28]. Furthermore, it is computationally efficient, reported to be approximately 10 times more efficient than using a combination of separate technical noise reduction and batch correction methods [28].

Q4: How does RECODE handle the "curse of dimensionality" in single-cell data?

Single-cell data, measuring thousands of genes per cell, creates a high-dimensional space where random technical noise can overwhelm true biological signals [28]. RECODE (Resolution of the Curse of Dimensionality) uses advanced high-dimensional statistics to mitigate this problem, revealing clear gene activation patterns without relying on complex parameters or machine learning [28].

Q5: Why is sparsity a major challenge in scRNA-seq data, and how does RECODE address it?

Sparsity, characterized by a high proportion of zero counts, arises from both biological factors (a gene is truly not expressed) and technical factors (a gene is expressed but not detected) [3]. RECODE tackles this by distinguishing these sources and reducing the technical zeros, thereby reconstructing a less sparse and more biologically accurate data matrix [29] [28].

Troubleshooting Guide

Preprocessing and Data Integration Issues

Problem: Ineffective Batch Correction After Applying iRECODE

- Potential Cause: The batch effect is confounded with strong biological signals, such as major cell type differences between batches.

- Solution: Ensure that the major cell populations are represented in all batches. If not, consider correcting batches within each cell type separately after initial clustering. Use known marker genes to verify that biological differences are preserved after correction [28].

Problem: High Computational Resource Usage with Large Datasets

- Potential Cause: The dataset is extremely large (e.g., >1 million cells), and default parameters are not optimized for speed.

- Solution: Leverage iRECODE's inherent computational efficiency. The underlying algorithm is designed to be scalable and parallelizable. For very large datasets, ensure you are using the latest version, which includes improvements for computational efficiency [7] [29] [28].

Interpretation and Analysis Issues

Problem: Suspected Over-imputation or Introduction of Spurious Signals

- Potential Cause: Circularity in the analysis, where the same data is used for both imputation and downstream analysis, can artificially inflate correlations.

- Solution: Always validate key findings using alternative methods or datasets. Be cautious when interpreting strongly inflated gene-gene correlations post-imputation. Where possible, use external validation from sources like smFISH or bulk RNA-seq [3] [2].

Problem: Poor Identification of Rare Cell Types

- Potential Cause: The noise from abundant cell types is dominating the signal, masking subtle rare cell populations.

- Solution: RECODE and iRECODE are specifically designed to address this. Ensure that the data is not over-corrected. The methods should reduce technical variation while preserving biological heterogeneity, making rare cell types more discernible [28].

Key Experimental Protocols

Workflow for scRNA-seq Noise Reduction with RECODE/iRECODE

The following diagram illustrates the standard workflow for applying the RECODE platform to scRNA-seq data.

Protocol: Validating Biological Noise Estimates with smFISH

Purpose: To validate the biological variance estimated by RECODE using single-molecule fluorescent in situ hybridization (smFISH) as a gold standard [2].

Procedure:

- Apply RECODE: Process your scRNA-seq dataset using RECODE to obtain estimates of biological variance for a set of target genes.

- Perform smFISH: Conduct smFISH on the same cell type or population for the same set of target genes. smFISH provides a direct, quantitative measure of transcript abundance with minimal technical noise.

- Correlate Estimates: Calculate the concordance between the biological noise estimates from RECODE and the observed variance from smFISH data.

- Benchmark: Compare the performance of RECODE against other noise-estimation methods. Studies have shown that RECODE outperforms previous methods, especially for lowly and moderately expressed genes, by not systematically overestimating biological noise [2].
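The "Correlate Estimates" step can be sketched as a rank correlation between per-gene noise values. This is only an illustration under stated assumptions: the Fano factor (variance divided by mean) is used here as one possible noise measure, the `recode_noise` vector stands in for whatever per-gene estimates your pipeline produces, and all numbers are made up. None of the function names come from the RECODE software.

```python
import numpy as np

def rankdata(x):
    """Average ranks (1-based) of a 1-D array, with ties sharing their mean rank."""
    x = np.asarray(x, dtype=float)
    order = np.argsort(x, kind="mergesort")
    ranks = np.empty(len(x), dtype=float)
    ranks[order] = np.arange(1, len(x) + 1)
    for value in np.unique(x):
        tied = x == value
        ranks[tied] = ranks[tied].mean()
    return ranks

def spearman(a, b):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return float(np.corrcoef(rankdata(a), rankdata(b))[0, 1])

def fano_factor(counts):
    """Per-gene variance / mean for a cells x genes matrix (assumes mean > 0)."""
    counts = np.asarray(counts, dtype=float)
    return counts.var(axis=0, ddof=1) / counts.mean(axis=0)

# Hypothetical smFISH transcript counts: 4 cells x 3 target genes
smfish_counts = np.array([[12, 3, 40], [8, 5, 35], [15, 1, 50], [10, 4, 42]])
smfish_noise = fano_factor(smfish_counts)

# Hypothetical per-gene noise estimates from the denoised scRNA-seq data
recode_noise = np.array([0.9, 1.1, 1.0])

print("Spearman concordance:", spearman(recode_noise, smfish_noise))
```

A rank-based measure is used because the two assays differ in capture efficiency, so absolute values are not directly comparable even when the gene ordering agrees.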

Protocol: Integrating Multi-Batch scRNA-seq Data with iRECODE

Purpose: To integrate multiple scRNA-seq datasets generated in different batches to enable a unified analysis without batch-specific artifacts [28].

Procedure:

- Data Collection: Compile all scRNA-seq count matrices from different batches.

- Run iRECODE: Input the multi-batch data into iRECODE. The method will simultaneously model and reduce both technical noise (e.g., dropouts) and batch-specific noise.

- Assess Integration:

  - Visual Inspection: Use UMAP or t-SNE plots to check if cells from different batches but of the same type are mixed together.

  - Quantitative Metrics: Calculate integration metrics such as the Local Inverse Simpson's Index (LISI) to confirm improved mixing scores [28].

- Validate Biology: Ensure that known biological distinctions (e.g., different cell types) are preserved in the integrated output. Check the expression of key marker genes.
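To make the quantitative mixing check concrete, the sketch below computes a simplified, unweighted variant of the inverse Simpson's index over each cell's k nearest neighbours. It is not the published LISI, which weights neighbours with a perplexity-based kernel; the function name, the default `k`, and the toy data are assumptions for illustration. Scores near 1 mean a neighbourhood contains a single batch, and scores near the number of batches mean the batches are evenly mixed.

```python
import numpy as np

def knn_inverse_simpson(embedding, batch_labels, k=30):
    """Per-cell inverse Simpson's index of batch labels among k nearest neighbours."""
    embedding = np.asarray(embedding, dtype=float)
    labels = np.asarray(batch_labels)
    n = embedding.shape[0]
    k = min(k, n - 1)
    # Pairwise squared Euclidean distances (dense; subsample for large datasets)
    sq = (embedding ** 2).sum(axis=1)
    dist = sq[:, None] + sq[None, :] - 2.0 * embedding @ embedding.T
    np.fill_diagonal(dist, np.inf)  # a cell is not its own neighbour
    neighbours = np.argsort(dist, axis=1)[:, :k]
    batches = np.unique(labels)
    scores = np.empty(n)
    for i in range(n):
        neighbour_labels = labels[neighbours[i]]
        props = np.array([(neighbour_labels == b).mean() for b in batches])
        scores[i] = 1.0 / np.sum(props ** 2)
    return scores

# Toy 1-D "embedding": alternating batches (mixed) vs. two separate blocks
mixed = knn_inverse_simpson(np.arange(6).reshape(-1, 1), list("ABABAB"), k=4)
separated = knn_inverse_simpson(
    np.array([[0], [1], [2], [100], [101], [102]]), list("AAABBB"), k=2
)
print("mixed:", mixed)
print("separated:", separated)
```

Computing the same score on cell-type labels instead of batch labels gives a complementary check: after a good integration, batch scores should rise while cell-type scores stay close to 1.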

Research Reagent Solutions

Table 1: Essential reagents and resources for experiments involving RECODE and single-cell RNA-seq.

| Reagent/Resource | Function in Experiment | Key Considerations |

|---|---|---|

| External RNA Controls Consortium (ERCC) spike-ins | Used to model technical noise and capture efficiency. Spike-ins are added to cell lysates in known quantities [2]. | Crucial for validating the technical noise model. Ensure they are added at the correct stage (e.g., to lysis buffer). |

| Unique Molecular Identifiers (UMIs) | Tag individual mRNA molecules to correct for amplification bias and accurately quantify transcript counts [2]. | Now standard in most scRNA-seq protocols. Essential for accurate initial count matrices. |

| Cell Hashing/Optimal Multiplexing | Labels cells from different samples/batches with barcoded antibodies, allowing multiple samples to be pooled for a single run [28]. | Reduces batch effects caused by library preparation. Compatible with iRECODE for downstream batch integration. |

| Viability Stains/Dyes | To select live cells for sequencing, reducing background noise from dead or dying cells. | Improves data quality at the source, which facilitates more effective noise reduction. |

Data Presentation and Analysis

Table 2: Comparative analysis of RECODE and iRECODE features and performance.

| Feature | RECODE | iRECODE |

|---|---|---|

| Primary Function | Technical noise reduction (e.g., dropout) [28]. | Simultaneous technical and batch noise reduction [29] [28]. |

| Core Methodology | High-dimensional statistics to resolve the "curse of dimensionality" [28]. | Enhanced high-dimensional statistical framework [28]. |

| Input Data | Single scRNA-seq, scHi-C, or spatial transcriptomics dataset [29] [28]. | Multiple datasets from different batches or platforms [28]. |

| Computational Efficiency | Highly scalable; ran on 1.3 million cells [7]. | ~10x more efficient than combining separate noise reduction and batch correction tools [28]. |

| Key Output | Denoised expression matrix with reduced sparsity [28]. | Integrated, batch-corrected, and denoised expression matrix [28]. |

| Validation | Improved concordance with smFISH, especially for lowly expressed genes [2]. | Better cell-type mixing across batches (quantified by LISI) while preserving biological identity [28]. |

Applying Compositional Data Analysis (CoDA-hd) to Sparse scRNA-seq Matrices

Frequently Asked Questions (FAQs)

Fundamental Concepts

Q1: What is CoDA-hd and how does it differ from traditional scRNA-seq normalization? CoDA-hd extends the Compositional Data Analysis (CoDA) framework to high-dimensional single-cell RNA-sequencing data. Unlike traditional methods like log-normalization, it explicitly treats gene expression data as relative abundances between components (genes) and transforms them into log-ratios (LRs). This approach provides three intrinsic properties: scale invariance, sub-compositional coherence, and permutation invariance, making it more robust to technical noise and data sparsity [30].

Q2: Why is CoDA-hd particularly suited for sparse embryo RNA-seq data? Embryo RNA-seq data often exhibits high technical noise and dropout rates. CoDA-hd's log-ratio transformations help reduce data skewness and make the data more balanced for downstream analyses. The centered-log-ratio (CLR) transformation specifically provides more distinct and well-separated clusters in dimension reductions and can eliminate suspicious trajectories caused by dropouts, which is crucial for accurately interpreting developmental processes [30].

Practical Implementation

Q3: How does CoDA-hd handle the pervasive zero counts (dropouts) in sparse scRNA-seq matrices? CoDA-hd employs count addition schemes that make log-ratio transformations applicable to high-dimensional sparse data. These schemes add a minimal, consistent value to all counts, so that the logarithm is defined for every entry without significantly distorting the underlying biological signal. This approach is more effective than prior log-normalization or imputation for handling zeros in compositional frameworks [30].

Q4: What are the main log-ratio transformations used in CoDA-hd? The primary transformation is the centered-log-ratio (CLR) transformation. This method centers the log-transformed data, making it compatible with Euclidean space-based downstream analyses like clustering and trajectory inference. CLR has demonstrated advantages in dimension reduction visualization and improving trajectory inference accuracy [30].
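For reference, the standard CLR definition from compositional data analysis can be written as below. Here x is one cell's count vector over D genes after count addition (so every entry is strictly positive) and g(x) is its geometric mean; the symbol names are chosen for illustration and are not taken from the CoDA-hd paper. The second line states two consequences that follow directly from the definition: the CLR values of a cell sum to zero, and multiplying the whole vector by a positive constant (for example, a different sequencing depth) leaves the result unchanged.

```latex
\operatorname{clr}(\mathbf{x})_j
  = \log\frac{x_j}{g(\mathbf{x})}
  = \log x_j - \frac{1}{D}\sum_{i=1}^{D}\log x_i,
\qquad
g(\mathbf{x}) = \Bigl(\prod_{i=1}^{D} x_i\Bigr)^{1/D}

\sum_{j=1}^{D}\operatorname{clr}(\mathbf{x})_j = 0,
\qquad
\operatorname{clr}(c\,\mathbf{x}) = \operatorname{clr}(\mathbf{x}) \quad (c > 0)
```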

Q5: How do I implement CoDA-hd in my analysis workflow? An R package called 'CoDAhd' has been specifically developed for conducting CoDA LR transformations for high-dimensional scRNA-seq data. The package, along with example datasets, is available at: https://github.com/GO3295/CoDAhd [30].

Comparison with Other Methods

Q6: How does CoDA-hd compare to other noise reduction methods like RECODE? While both address technical noise, they use different approaches. CoDA-hd uses a compositional framework with log-ratio transformations, whereas RECODE uses high-dimensional statistics and eigenvalue modification to model technical noise from the entire data generation process. RECODE has recently been upgraded to iRECODE to simultaneously reduce both technical and batch noise while preserving full-dimensional data [6].

Q7: When should I choose CoDA-hd over deep learning imputation methods like DGAN? CoDA-hd is preferable when you want to maintain the compositional nature of the data without extensive imputation. Deep generative autoencoder networks (DGAN) are evolved variational autoencoders designed to robustly impute data dropouts manifested as sparse gene expression matrices. DGAN outperforms baseline methods in downstream functional analysis including cell data visualization, clustering, classification, and differential expression analysis [31].

Troubleshooting Guides

Common Error Scenarios and Solutions

Problem 1: Poor Cluster Separation After CoDA-hd Transformation

Table 1: Troubleshooting Poor Cluster Separation

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| Insufficient count addition | Check distribution of zeros in raw matrix | Increase the pseudocount value incrementally |

| Incompatible downstream analysis | Verify Euclidean space compatibility | Ensure CLR transformation is properly applied |

| High ambient RNA contamination | Examine mitochondrial gene percentages | Apply ambient RNA removal (SoupX, CellBender) pre-processing |

Problem 2: Computational Performance Issues with Large Datasets

Table 2: Performance Optimization Strategies

| Bottleneck | Symptoms | Mitigation Approaches |

|---|---|---|

| Memory constraints | System slowdown or crashes | Process data in batches; use sparse matrix representations |

| Long processing times | Transformations taking hours | Optimize matrix operations; parallelize where possible |

| Storage issues | Large intermediate files | Implement on-the-fly computation; use efficient file formats |

Data Quality Assessment Framework

Pre-CoDA-hd Implementation Checks:

- Data Sparsity Evaluation: Calculate the percentage of zeros in your count matrix. CoDA-hd is specifically designed for sparse data, but extreme sparsity (>95% zeros) may require specialized handling [30].

- Batch Effect Detection: Use PCA to visualize batch effects before applying CoDA-hd. CoDA-hd addresses the compositional nature of the data, not batch structure, so pronounced batch effects may require complementary methods such as Harmony integration [6].

- Mitochondrial Content Assessment: Check the percentage of mitochondrial reads per cell as a quality metric. High values may indicate poor cell quality that could confound results [32].
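The sparsity and mitochondrial checks above can be sketched in a few lines. This is a minimal illustration, assuming a dense cells x genes count matrix and gene symbols in which mitochondrial genes carry an "mt-" prefix (matched case-insensitively, so both mouse "mt-" and human "MT-" symbols are covered); the function names and toy data are hypothetical.

```python
import numpy as np

def sparsity_percent(counts):
    """Percentage of zero entries in the count matrix."""
    counts = np.asarray(counts)
    return 100.0 * np.count_nonzero(counts == 0) / counts.size

def mito_percent(counts, gene_names, prefix="mt-"):
    """Per-cell percentage of counts assigned to mitochondrial genes."""
    counts = np.asarray(counts, dtype=float)
    is_mito = np.array([g.lower().startswith(prefix.lower()) for g in gene_names])
    totals = counts.sum(axis=1)
    mito = counts[:, is_mito].sum(axis=1)
    # Cells with zero total counts are reported as 0% instead of dividing by zero
    return 100.0 * np.divide(mito, totals, out=np.zeros_like(totals), where=totals > 0)

# Toy matrix: 3 cells x 3 genes
genes = ["mt-Co1", "Pou5f1", "Cdx2"]
counts = np.array([[0, 5, 5], [0, 0, 10], [2, 0, 0]])

print(f"zeros: {sparsity_percent(counts):.1f}%")
print("mito % per cell:", mito_percent(counts, genes))
```

For real datasets stored as sparse matrices, the same quantities can be computed from the number of stored non-zero entries without densifying the matrix.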

Post-Transformation Validation Metrics:

- Cluster Separation Index: Measure silhouette scores before and after transformation to quantify improvement.

- Trajectory Reliability: Evaluate whether trajectories align with biological expectations and don't reflect technical artifacts.

- Gene Correlation Preservation: Ensure biological correlations are maintained while technical noise is reduced.
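As one way to quantify cluster separation before and after transformation, the mean silhouette score can be computed directly from an embedding and its cluster labels. The sketch below is a plain NumPy implementation for small inputs, assuming Euclidean distance and at least two clusters; for real datasets an established library implementation on a subsample of cells is likely the better choice.

```python
import numpy as np

def mean_silhouette(X, labels):
    """Mean silhouette score (Euclidean); requires at least two clusters."""
    X = np.asarray(X, dtype=float)
    labels = np.asarray(labels)
    diff = X[:, None, :] - X[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=2))  # dense n x n distance matrix
    clusters = np.unique(labels)
    scores = np.zeros(len(X))
    for i in range(len(X)):
        same = labels == labels[i]
        n_same = same.sum()
        if n_same == 1:
            continue  # singleton clusters score 0 by convention
        a = dist[i, same].sum() / (n_same - 1)  # mean distance within own cluster
        b = min(dist[i, labels == c].mean() for c in clusters if c != labels[i])
        scores[i] = (b - a) / max(a, b)
    return float(scores.mean())

# Toy 1-D embedding with two obvious groups
X = np.array([[0.0], [1.0], [10.0], [11.0]])
good = mean_silhouette(X, [0, 0, 1, 1])  # labels follow the structure
bad = mean_silhouette(X, [0, 1, 0, 1])   # labels cut across the structure
print(good, bad)
```

Running the function on the same cluster labels with embeddings derived before and after the CLR transformation gives the before/after comparison described above.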

Integration with Existing Workflows

Seurat Compatibility: CoDA-hd transformed data can be seamlessly integrated into standard Seurat workflows. The CLR-transformed data functions effectively in standard Euclidean space-based analyses including PCA, UMAP, and clustering algorithms [30].

Scanpy Interoperability: For Python users, the CoDA-hd transformed matrices can be incorporated into AnnData objects and processed through standard Scanpy pipelines for visualization and clustering [33].

Experimental Protocols and Methodologies

Core CoDA-hd Transformation Protocol

Step-by-Step Implementation:

- Input Data Preparation: Start with raw count matrices from embryo RNA-seq experiments. Avoid using pre-normalized data when possible [30].

- Zero Handling: Apply a count addition scheme (pseudocount) so that every entry of the sparse matrix is strictly positive. The specific scheme should be chosen based on data characteristics.

- CLR Transformation: Transform the count-added data using centered-log-ratio transformation to project from simplex to Euclidean space.

- Quality Assessment: Validate transformation using visualization and cluster metrics.

- Downstream Analysis: Proceed with standard dimensionality reduction, clustering, and trajectory inference methods.
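The steps above can be sketched in Python as follows. This is an illustrative approximation and not the CoDAhd R package: the uniform pseudocount of 1 is a placeholder for whichever count addition scheme you choose, the function names are hypothetical, and PCA is computed with a plain SVD for self-containment.

```python
import numpy as np

def clr_transform(counts, pseudocount=1.0):
    """Centered-log-ratio transform of a cells x genes count matrix.

    A uniform pseudocount (an assumed, simple count addition scheme) makes
    every entry positive; each cell's log values are then centered on their
    mean, which equals the log of that cell's geometric mean.
    """
    counts = np.asarray(counts, dtype=float)
    log_counts = np.log(counts + pseudocount)
    return log_counts - log_counts.mean(axis=1, keepdims=True)

def pca_scores(matrix, n_components=2):
    """Principal component scores via SVD of the gene-centered matrix."""
    centered = matrix - matrix.mean(axis=0, keepdims=True)
    u, s, _ = np.linalg.svd(centered, full_matrices=False)
    return u[:, :n_components] * s[:n_components]

# Toy raw counts: 3 cells x 4 genes, with dropouts
raw = np.array([[0, 3, 0, 7], [5, 0, 1, 0], [2, 2, 2, 2]])

clr = clr_transform(raw)          # zero handling + CLR transformation
print(clr.sum(axis=1))            # quality check: each row sums to ~0
embedding = pca_scores(clr, 2)    # Euclidean downstream analysis
print(embedding.shape)
```

Note that adding the pseudocount densifies the matrix, so for large datasets it may be necessary to transform cells in chunks rather than all at once.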

Comparative Evaluation Framework

When benchmarking CoDA-hd against other methods in embryo RNA-seq studies, include these key metrics:

Table 3: Evaluation Metrics for Method Comparison

| Metric Category | Specific Measures | Interpretation |

|---|---|---|

| Cluster Quality | Silhouette width, Davies-Bouldin index | Higher silhouette width and lower Davies-Bouldin index indicate better separation |

| Trajectory Accuracy | Pseudotime consistency, branching accuracy | Alignment with biological expectations |

| Computational Efficiency | Memory usage, processing time | Practical implementation considerations |

| Biological Validation | Marker gene expression, known cell type identification | Confirmation of biological relevance |
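Because the Davies-Bouldin index is less commonly reported than silhouette width, a minimal NumPy sketch of its standard definition may help when interpreting it: for each cluster, take the worst-case ratio of combined within-cluster scatter to centroid distance against any other cluster, then average over clusters. Lower values indicate tighter, better-separated clusters. The function name and toy data are illustrative, and the code assumes at least two clusters with distinct centroids.

```python
import numpy as np

def davies_bouldin(X, labels):
    """Davies-Bouldin index with Euclidean distances (lower is better)."""
    X = np.asarray(X, dtype=float)
    labels = np.asarray(labels)
    clusters = np.unique(labels)
    centroids = np.array([X[labels == c].mean(axis=0) for c in clusters])
    # Scatter: mean distance of each cluster's points to its own centroid
    scatter = np.array([
        np.linalg.norm(X[labels == c] - centroids[i], axis=1).mean()
        for i, c in enumerate(clusters)
    ])
    k = len(clusters)
    worst = np.zeros(k)
    for i in range(k):
        worst[i] = max(
            (scatter[i] + scatter[j]) / np.linalg.norm(centroids[i] - centroids[j])
            for j in range(k) if j != i
        )
    return float(worst.mean())

# Toy 1-D embedding with two obvious groups
X = np.array([[0.0], [1.0], [10.0], [11.0]])
db_good = davies_bouldin(X, [0, 0, 1, 1])  # labels follow the structure
db_bad = davies_bouldin(X, [0, 1, 0, 1])   # labels cut across the structure
print(db_good, db_bad)
```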

Essential Research Reagent Solutions

Table 4: Key Computational Tools for CoDA-hd Implementation

| Tool/Resource | Function | Implementation |

|---|---|---|

| CoDAhd R Package | Core CoDA-hd transformations | R implementation for high-dimensional scRNA-seq |

| Seurat | Downstream analysis and visualization | Compatible with CoDA-hd transformed data |

| Scanpy | Python-based single-cell analysis | Accepts CoDA-hd processed matrices |

| RECODE/iRECODE | Complementary noise reduction | Simultaneous technical and batch noise reduction |

| CellBender | Ambient RNA removal | Pre-processing before CoDA-hd application |

| Harmony | Batch effect correction | Integration with CoDA-hd processed data |

This technical support center provides targeted guidance for researchers employing deep learning models, specifically scANVI and Transformer-based architectures, for cell classification in embryo RNA-seq data. A primary challenge in this domain is handling the inherent technical noise and sparsity of single-cell data, which can obscure subtle biological signals crucial for identifying early developmental cell states. The content herein is framed within a broader thesis on managing these technical complexities to achieve robust, reproducible cell type annotation.

The Scientist's Toolkit: Research Reagent Solutions