NGS vs. Sanger Sequencing for CRISPR Validation: A Strategic Guide for Researchers

Abstract

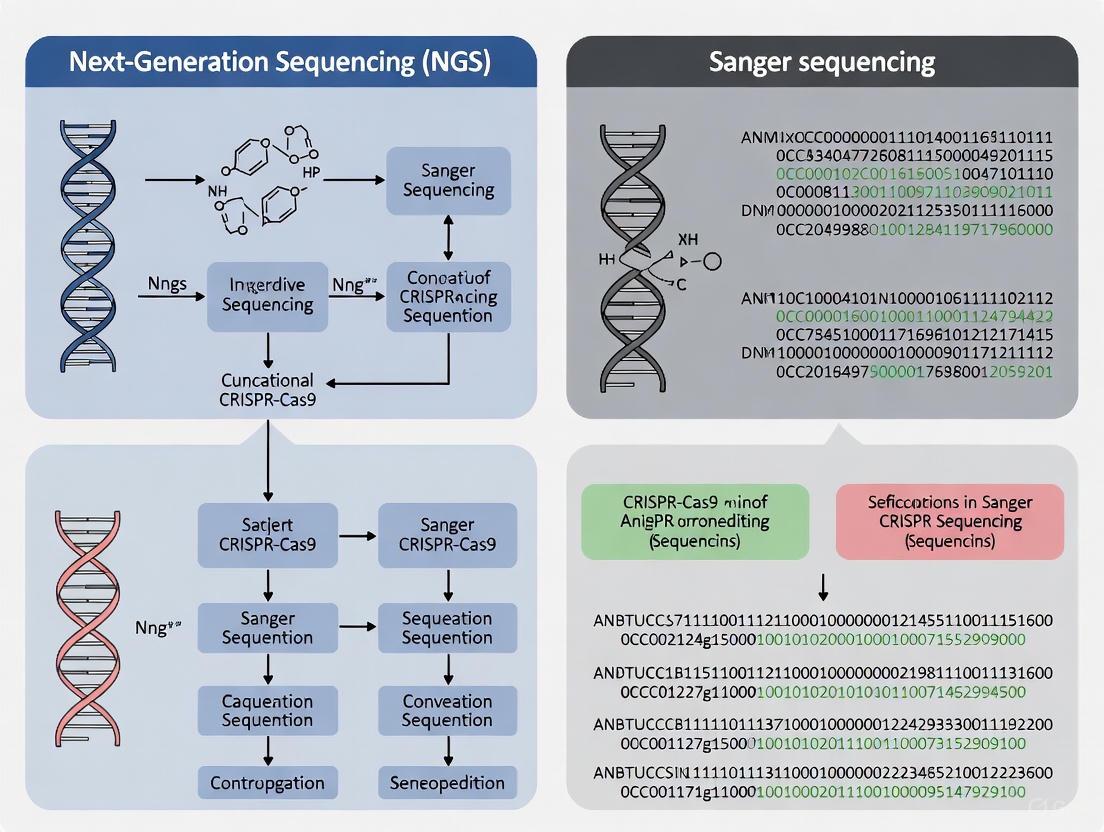

Accurately quantifying CRISPR editing efficiency is critical for successful gene editing in research and therapeutic development. This article provides a comprehensive comparison of Next-Generation Sequencing (NGS) and Sanger sequencing-based methods for validating CRISPR edits. Tailored for researchers and drug development professionals, it covers the foundational principles of each technology, their practical applications, and strategic guidance for method selection. By synthesizing recent benchmarking studies, we outline the superior accuracy and sensitivity of NGS as a gold standard, while also exploring the cost-effective utility of Sanger sequencing combined with sophisticated analysis software like ICE and TIDE for specific experimental contexts.

The Critical Role of Validation in CRISPR Workflows: From Double-Strand Breaks to Quantifiable Data

The advent of CRISPR-Cas9 technology has revolutionized biological research and therapeutic development by providing an efficient, convenient, and programmable system for making precise changes to specific nucleic acid sequences. However, a major concern in its application remains the potential for off-target effects: unintended, unwanted, or even adverse alterations to the genome at sites other than the intended target. These off-target events can lead to misleading experimental results in research and serious adverse outcomes in clinical applications [1]. Accurately quantifying on-target efficiency is equally crucial, because insufficient editing at the target locus can compromise experimental outcomes and therapeutic efficacy.

This guide compares the performance of validation methodologies for verifying CRISPR editing outcomes, focusing primarily on next-generation sequencing (NGS) and Sanger sequencing. We provide supporting experimental data, detailed protocols, and analytical frameworks to help researchers, scientists, and drug development professionals implement a rigorous validation strategy for their genome editing work.

Understanding CRISPR Editing and the Imperative for Validation

The Basics of CRISPR-Cas9 Activity

The CRISPR-Cas9 system functions as a ribonucleoprotein complex composed of a Cas9 nuclease and a single guide RNA (sgRNA). This complex creates site-specific DNA double-strand breaks (DSBs) at genomic positions specified by the sgRNA's complementarity to the DNA, which must be adjacent to a protospacer-adjacent motif (PAM) [1]. The cellular repair of these breaks leads to the desired genomic alterations:

- Non-Homologous End Joining (NHEJ): An error-prone repair mechanism that often introduces small insertions or deletions (indels). When an indel within a gene's coding sequence is not a multiple of three base pairs, it causes a frameshift that typically introduces a premature stop codon, which can trigger nonsense-mediated mRNA decay and effectively silence the gene [1].

- Homology-Directed Repair (HDR): A more precise but less frequent mechanism that uses a donor DNA template to repair the break, enabling specific nucleotide changes or gene insertions [1] [2].

The Dual Challenge: On-Target Efficiency and Off-Target Effects

The primary goals of CRISPR validation are to confirm success at the intended target and to exclude significant activity at unintended sites.

- On-Target Efficiency: Not all transfected cells will exhibit the desired edit. Efficiency must be quantified to determine whether the editing experiment was successful enough to proceed, for instance, to the isolation of clonal cell lines. Low efficiency may necessitate optimizing delivery methods or selecting alternative gRNAs [2].

- Off-Target Effects: These can be sgRNA-dependent, where Cas9 cleaves at genomic sites with sequence similarity to the sgRNA (often tolerating 3-5 mismatches), or sgRNA-independent, which are more challenging to predict and relate to cellular context like chromatin organization [1]. Unchecked, these can confound research results and pose significant safety risks in therapeutic contexts.
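The sgRNA-dependent case can be illustrated with a minimal sketch that counts mismatches between a guide and a candidate genomic site. The `NGG` PAM check assumes SpCas9, the default threshold of 4 mismatches is an arbitrary value inside the 3-5 range quoted above, and the sequences are made up for illustration. Dedicated tools such as Cas-OFFinder handle genome-wide search, bulges, and alternative PAMs, which this sketch does not attempt.

```python
def count_mismatches(guide: str, site: str) -> int:
    """Count positions where the guide and a same-length candidate site differ."""
    if len(guide) != len(site):
        raise ValueError("guide and site must have the same length")
    return sum(1 for g, s in zip(guide.upper(), site.upper()) if g != s)


def has_ngg_pam(pam: str) -> bool:
    """Assumption: SpCas9, which recognises a 3-nt NGG PAM."""
    return len(pam) == 3 and pam[1:].upper() == "GG"


def is_candidate_off_target(guide: str, protospacer: str, pam: str,
                            max_mismatches: int = 4) -> bool:
    """Flag sites that resemble the guide (but are not identical) and carry a PAM."""
    mismatches = count_mismatches(guide, protospacer)
    return has_ngg_pam(pam) and 0 < mismatches <= max_mismatches


# Hypothetical 20-nt guide and a genomic site differing at two positions.
guide = "GACGTTACCGGATCAAGCTA"
site = "GACGTTACCGGATCTAGCTT"
print(count_mismatches(guide, site), is_candidate_off_target(guide, site, "TGG"))
```

A shortlist produced this way only nominates sites; each one still needs to be amplified and sequenced to confirm or exclude editing.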

Methodological Comparison: NGS vs. Sanger-Based Approaches

Choosing the appropriate validation method depends on the experimental needs, including the required sensitivity, throughput, and budget. The table below summarizes the core characteristics of the primary technologies.

Table 1: Comparison of Key CRISPR Validation Methods

| Method | Key Principle | Best For | Advantages | Disadvantages/Limitations |
|---|---|---|---|---|
| Next-Generation Sequencing (NGS) [3] [4] | Massively parallel sequencing of PCR amplicons from the target site(s). | Gold-standard, comprehensive analysis; detecting complex indels and low-frequency events; high-sample throughput. | High sensitivity (can detect edits down to ~1%); quantitative; provides full indel spectrum; enables off-target discovery. | Higher cost and time; complex data analysis requiring bioinformatics support. |
| Sanger Sequencing + Computational Tools (ICE, TIDE, DECODR) [5] [4] [2] | Sanger sequencing of edited bulk PCR products, followed by algorithmic deconvolution of sequence traces. | Rapid, cost-effective assessment of on-target editing efficiency in bulk cell populations. | Low cost; simple workflow; provides efficiency and some indel information. | Lower sensitivity (~15-20% detection limit); less accurate for complex indel mixtures. |
| T7 Endonuclease 1 (T7E1) Assay [4] | Enzyme cleavage of heteroduplex DNA formed by re-annealing wild-type and edited PCR products. | Quick, low-cost preliminary check for the presence of editing. | Very fast and inexpensive; no sequencing required. | Not quantitative; provides no sequence-level information. |
| GeneArt Genomic Cleavage Detection (GCD) [3] | Similar principle to T7E1, using a proprietary enzyme and kit format. | Estimating indel formation efficiency in a pooled population. | Rapid; kit-based standardized protocol. | Less accurate than sequencing-based methods. |

Next-Generation Sequencing (NGS): The Gold Standard

Experimental Protocol for Targeted Amplicon Sequencing:

- DNA Extraction: Isolate genomic DNA from CRISPR-treated and control cells.

- PCR Amplification: Design primers to amplify the genomic region spanning the on-target site (and predicted off-target sites, if applicable). The amplicon size should be compatible with your NGS platform (e.g., 300-500 bp for Illumina MiSeq) [4].

- Library Preparation: Attach platform-specific adapters and sample barcodes to the PCR amplicons to create a sequencing library. This allows multiple samples to be pooled and sequenced in a single run [3].

- Sequencing: Run the pooled library on a benchtop NGS sequencer (e.g., Illumina MiSeq, Ion GeneStudio S5 Series) [6] [3].

- Data Analysis: Process the raw sequencing data through a bioinformatics pipeline:

  - Alignment: Map sequence reads to the reference genome.

  - Variant Calling: Identify insertions, deletions, and substitutions compared to the reference.

  - Quantification: Calculate the percentage of reads containing indels (editing efficiency) and characterize the spectrum of different indel sequences [4].

NGS is considered the gold standard because its high depth of coverage (often thousands of reads per amplicon) allows for the detection of low-frequency editing events and provides a complete, quantitative picture of the editing outcomes in a heterogeneous cell population [4].
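The quantification step can be sketched as follows, assuming reads have already been aligned and each alignment is available as a 0-based reference start plus a SAM-style CIGAR string. The function names, the 10 bp window around the cut site, and the example reads are illustrative assumptions, not part of any particular pipeline.

```python
import re

CIGAR_RE = re.compile(r"(\d+)([MIDNSHP=X])")


def indel_near_cut(ref_start: int, cigar: str, cut_site: int, window: int = 10) -> bool:
    """Return True if the alignment has an insertion or deletion within
    `window` bp of the cut site (all coordinates 0-based, on the reference)."""
    pos = ref_start
    for length_text, op in CIGAR_RE.findall(cigar):
        length = int(length_text)
        if op == "I":
            # An insertion sits between reference positions pos - 1 and pos.
            if abs(pos - cut_site) <= window:
                return True
        elif op == "D":
            # A deletion removes reference positions pos .. pos + length - 1.
            if pos <= cut_site + window and pos + length - 1 >= cut_site - window:
                return True
            pos += length
        elif op in "MN=X":
            pos += length
        # S, H and P do not consume reference bases.
    return False


def editing_efficiency(alignments, cut_site: int, window: int = 10) -> float:
    """Percentage of reads carrying an indel near the cut site."""
    if not alignments:
        return 0.0
    edited = sum(indel_near_cut(start, cigar, cut_site, window)
                 for start, cigar in alignments)
    return 100.0 * edited / len(alignments)


# Hypothetical alignments: (reference start, CIGAR), cut site at position 100.
alignments = [
    (50, "100M"),       # unedited
    (50, "48M2D50M"),   # 2 bp deletion at the cut site
    (50, "50M1I49M"),   # 1 bp insertion at the cut site
    (50, "5M3D92M"),    # deletion far from the cut site, ignored
]
print(editing_efficiency(alignments, cut_site=100))
```

Restricting the count to a window around the cut site is one common way to keep sequencing errors and unrelated polymorphisms from inflating the estimate; the appropriate window size depends on the nuclease and the amplicon.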

Sanger Sequencing with Computational Deconvolution

Sanger sequencing of a bulk PCR product from an edited cell population produces a complex chromatogram with overlapping signals past the cut site. Computational tools deconvolute these traces to estimate editing efficiency.

Experimental Protocol for ICE/TIDE Analysis:

- PCR Amplification: Amplify the target region from both control (wild-type) and edited cell populations. Ensure the amplicon includes at least ~200 base pairs of sequence flanking the edit site on either side [2].

- Sanger Sequencing: Perform Sanger sequencing in the forward and/or reverse direction.

- Data Analysis:

  - For ICE (Inference of CRISPR Edits): Upload the wild-type and edited sample sequence trace files (.ab1) along with the sgRNA sequence to the ICE web tool (Synthego). The software aligns the sequences and calculates an ICE score (indel frequency) and a knockout score (proportion of frameshifting indels) [4].

  - For TIDE (Tracking of Indels by Decomposition): Similarly, upload the wild-type and edited trace files and the sgRNA sequence to the TIDE web tool. It decomposes the sequencing trace data to estimate the relative abundance of major indels and provides a statistical goodness-of-fit (R²) [2].
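To make the idea of trace decomposition concrete, here is a toy sketch rather than the algorithm of any specific tool. It assumes an idealised trace (each base is a clean one-hot signal instead of real .ab1 peak heights), models the mixed trace downstream of the cut as a non-negative combination of shifted wild-type traces, and solves for the weights with a simple projected-gradient solver. The sequence, window, and function names are all hypothetical.

```python
BASES = "ACGT"


def one_hot(seq):
    """Idealised 4-channel trace: 1.0 in the channel of the called base, else 0.0."""
    return [1.0 if base == channel else 0.0 for base in seq for channel in BASES]


def shifted_trace(wild_type, start, length, shift):
    """Trace window as it would look if every molecule carried an indel of size
    `shift` (negative = deletion): read position i shows wild-type base i - shift."""
    begin = start - shift
    return one_hot(wild_type[begin:begin + length])


def nnls(columns, target, iterations=5000):
    """Non-negative least squares by projected gradient descent (toy solver)."""
    n = len(columns)
    gram = [[sum(a * b for a, b in zip(ci, cj)) for cj in columns] for ci in columns]
    atb = [sum(a * b for a, b in zip(ci, target)) for ci in columns]
    step = 1.0 / sum(gram[i][i] for i in range(n))  # 1/trace is a safe step size
    weights = [0.0] * n
    for _ in range(iterations):
        grad = [sum(gram[i][j] * weights[j] for j in range(n)) - atb[i]
                for i in range(n)]
        weights = [max(0.0, w - step * g) for w, g in zip(weights, grad)]
    return weights


def decompose(wild_type, mixed, start, length, max_shift=3):
    """Estimate the fraction of each indel size and the goodness-of-fit (R^2)."""
    shifts = list(range(-max_shift, max_shift + 1))
    columns = [shifted_trace(wild_type, start, length, s) for s in shifts]
    weights = nnls(columns, mixed)
    fitted = [sum(w * col[k] for w, col in zip(weights, columns))
              for k in range(len(mixed))]
    mean = sum(mixed) / len(mixed)
    ss_res = sum((m - f) ** 2 for m, f in zip(mixed, fitted))
    ss_tot = sum((m - mean) ** 2 for m in mixed)
    return dict(zip(shifts, weights)), 1.0 - ss_res / ss_tot


# Hypothetical 60-nt wild-type amplicon; the analysis window starts at position 20.
WILD_TYPE = "ATGGCTAGCTTACGGATCCGTACGATTGCACGTTAGCCATGCAAGTCGGATACCTGAGCT"
START, LENGTH = 20, 30
unedited = shifted_trace(WILD_TYPE, START, LENGTH, 0)
deletion_2bp = shifted_trace(WILD_TYPE, START, LENGTH, -2)
# Simulated bulk population: 60% unedited, 40% carrying a 2 bp deletion.
mixed = [0.6 * u + 0.4 * d for u, d in zip(unedited, deletion_2bp)]

weights, r2 = decompose(WILD_TYPE, mixed, START, LENGTH)
print({shift: round(w, 3) for shift, w in weights.items()}, round(r2, 3))
```

The sketch also shows why these tools struggle with complex mixtures: the model only knows about net shifts of the wild-type sequence, so any edit that is not a simple shift ends up in the residual and lowers the fit.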

Table 2: Performance Comparison of Sanger-Based Computational Tools [5]

| Tool | Reported Strengths | Reported Limitations |
|---|---|---|
| DECODR | Most accurate estimation of indel frequencies for most samples; useful for identifying specific indel sequences. | Performance can vary with indel complexity. |
| ICE | User-friendly interface; results highly comparable to NGS (R² = 0.96); detects large indels. | Estimates can become variable with very complex indel mixtures. |
| TIDE | Effective for simple indels; can predict the identity of single-base insertions. | Struggles with complex edits; requires manual parameter tuning for non-+1 insertions. |
| SeqScreener | Integrated into a commercial vendor's platform. | Performance similar to others, variable with complexity. |

A systematic comparison using artificial sequencing templates with predetermined indels found that while these tools are accurate for simple indels, their estimates diverge when the indel patterns are more complex. Among them, DECODR provided the most accurate estimations for the majority of samples, while TIDE-based TIDER was more effective for analyzing knock-in efficiency [5].
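When benchmarking a Sanger-based tool against NGS in your own hands, agreement is often summarised with a coefficient of determination like the R² values quoted above. The sketch below assumes R² means the squared Pearson correlation between paired estimates, which the cited comparisons may or may not use, and the paired values are invented purely for illustration.

```python
def r_squared(x, y):
    """Squared Pearson correlation between two equally long lists of estimates."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two lists of equal length with at least two values")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("estimates must not be constant")
    return (sxy * sxy) / (sxx * syy)


# Hypothetical indel frequencies (%) for the same five samples.
ngs_estimates = [5.0, 22.0, 48.0, 71.0, 90.0]
sanger_tool_estimates = [8.0, 20.0, 51.0, 66.0, 93.0]
print(round(r_squared(ngs_estimates, sanger_tool_estimates), 3))
```

A high R² shows that the two methods rank samples similarly; it does not rule out a systematic offset, so it is worth inspecting the paired values directly as well.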

Decision Guide for CRISPR Validation Methods

Advanced Considerations: Off-Target Assessment and NGS Validation

Methods for Detecting Off-Target Effects

While in silico prediction tools (e.g., Cas-OFFinder, CCTop) are a useful first step for nominating potential off-target sites based on sequence similarity to the sgRNA, they can miss sites affected by chromatin structure and other cellular factors. Therefore, empirical methods are essential for a comprehensive off-target profile [1].

Table 3: Methods for Experimental Detection of Off-Target Effects [1]

| Method | Category | Key Principle | Advantages | Disadvantages |
|---|---|---|---|---|
| GUIDE-seq [1] | Cell-based | Integrates double-stranded oligodeoxynucleotides (dsODNs) into DSBs in living cells, followed by NGS. | Highly sensitive; low false positive rate; genome-wide. | Limited by dsODN transfection efficiency. |
| CIRCLE-seq [1] | Cell-free | Circularizes sheared genomic DNA, incubates with Cas9-sgRNA RNP, and sequences linearized DNA. | Highly sensitive; works without cells; low background. | Does not account for cellular chromatin context. |
| Digenome-seq [1] | Cell-free | Digests purified genomic DNA with Cas9-sgRNA RNP and performs whole-genome sequencing (WGS). | Highly sensitive; identifies cleavage sites directly. | Expensive; requires high sequencing coverage. |
| SITE-Seq [1] | Biochemical | Uses selective biotinylation and enrichment of fragments after Cas9-sgRNA RNP digestion. | Minimal read depth; no reference genome needed. | Lower sensitivity and validation rate. |
| DISCOVER-Seq [1] | In vivo | Utilizes the DNA repair protein MRE11 as bait to perform ChIP-seq on DSB sites. | High sensitivity and precision in cells. | Can have false positives. |

For most researchers, using Sanger sequencing to screen a shortlist of top in silico-predicted off-target sites is a practical and cost-effective approach, provided the list is manageable [2]. However, for preclinical therapeutic development, a more comprehensive method like GUIDE-seq or CIRCLE-seq is recommended to ensure an unbiased assessment.

The Evolving Role of Sanger Validation for NGS

A critical question in modern genomics is whether Sanger sequencing is still required to validate variants detected by NGS. A large-scale 2021 study of 1109 variants from 825 clinical exomes found 100% concordance for high-quality NGS variants, leading the authors to conclude that Sanger confirmation has limited utility for these variants and adds unnecessary time and cost [7]. This finding is supported by an earlier study from the ClinSeq project, which measured a validation rate of 99.965% for NGS variants and found that a single round of Sanger sequencing was more likely to incorrectly refute a true positive than to correctly identify a false positive [8].

Therefore, the standard of care is shifting. Rather than universally requiring orthogonal Sanger validation, best practice is for laboratories to establish their own quality thresholds (e.g., read depth ≥20-30x, variant frequency ≥20%, high quality scores) for NGS data, beyond which variants can be reported without Sanger confirmation [8] [7]. Sanger sequencing remains crucial for validating low-quality NGS calls, resolving complex regions, or confirming critical findings prior to publication or clinical reporting.
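A laboratory-defined quality gate of this kind can be sketched in a few lines. The depth and variant-frequency defaults below are taken from the example ranges in the text; the quality cutoff of 30 and all names are placeholders that each laboratory would replace with its own validated values.

```python
from dataclasses import dataclass


@dataclass
class VariantCall:
    depth: int        # total reads covering the position
    alt_reads: int    # reads supporting the variant
    quality: float    # variant quality score reported by the caller


def needs_sanger_confirmation(call: VariantCall,
                              min_depth: int = 30,
                              min_vaf: float = 0.20,
                              min_quality: float = 30.0) -> bool:
    """Return True when a call falls below any laboratory-defined threshold.

    The thresholds are illustrative assumptions, not recommended values.
    """
    if call.depth <= 0:
        return True
    variant_frequency = call.alt_reads / call.depth
    return (call.depth < min_depth
            or variant_frequency < min_vaf
            or call.quality < min_quality)


calls = [
    VariantCall(depth=120, alt_reads=55, quality=40.0),  # passes all thresholds
    VariantCall(depth=18, alt_reads=9, quality=40.0),    # too shallow
    VariantCall(depth=200, alt_reads=10, quality=40.0),  # low variant frequency
]
print([needs_sanger_confirmation(c) for c in calls])
```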

Table 4: Key Research Reagent Solutions for CRISPR Validation

| Reagent / Tool | Function | Example Use Case |
|---|---|---|
| High-Fidelity DNA Polymerase [9] | Accurate amplification of the target locus for sequencing. | PCR amplification before Sanger sequencing or NGS library prep. |
| Sanger Sequencing Kit (e.g., BigDye) [8] | Fluorescent dideoxy chain-terminator sequencing. | Generating sequence trace files for ICE, TIDE, or direct analysis. |
| NGS Library Prep Kit (e.g., Illumina, Ion Torrent) [6] [3] | Preparation of PCR amplicons for massively parallel sequencing. | Creating barcoded libraries for targeted amplicon sequencing on NGS platforms. |
| GeneArt Genomic Cleavage Detection Kit [3] | Enzyme-based detection of indel formation in pooled cells. | Rapid, non-sequencing estimation of editing efficiency. |
| CRISPR gRNA Controls (e.g., TrueGuide Synthetic gRNA) [3] | Validated positive and negative control gRNAs. | Optimizing transfection and editing protocols; experimental controls. |
| In Silico Prediction Tools (e.g., Cas-OFFinder, CRISPOR) [1] [2] | Computational nomination of potential off-target sites. | Generating a list of genomic loci for targeted off-target assessment. |

CRISPR Validation Experimental Workflow

Validating the outcomes of CRISPR genome editing is a fundamental and non-negotiable step in responsible research and therapeutic development. The choice between NGS and Sanger-based approaches is not a matter of which is universally superior, but which is most appropriate for the specific experimental context.

- NGS provides the most comprehensive and sensitive data for both on-target and off-target analysis and is indispensable for rigorous preclinical validation and clonal characterization.

- Sanger sequencing, especially when coupled with modern computational tools like ICE or DECODR, offers a powerful, cost-effective, and accessible means to quantify on-target efficiency in bulk populations.

The evidence demonstrates that for high-quality NGS data, routine orthogonal Sanger validation is becoming unnecessary. Instead, the field is moving toward validation through robust, quality-controlled NGS workflows alone. By strategically applying these tools and adhering to rigorous experimental protocols, researchers can confidently advance their CRISPR-based projects, ensuring that their results are reliable, reproducible, and safe for translation into future therapies.

The advent of CRISPR-Cas9 technology has revolutionized biological research, enabling precise modifications to the genome with unprecedented ease. This powerful gene-editing tool functions by introducing targeted double-strand breaks (DSBs) in DNA, which the cell's innate repair machinery then resolves. The two primary pathways for repairing these breaks—non-homologous end joining (NHEJ) and homology-directed repair (HDR)—are fundamental to the editing process. However, their interplay and competition often lead to a complex mixture of editing outcomes within a single sample or even a single organism, a phenomenon known as genetic mosaicism [10] [11]. For researchers aiming to create precise genetic models or develop therapeutic interventions, this mosaicism presents a significant challenge. Accurate characterization of these diverse edits is therefore critical, and the choice of validation method—ranging from Sanger sequencing-based tools to more comprehensive next-generation sequencing (NGS)—profoundly impacts the interpretation of experimental results [5] [12]. This guide explores the biological basis of editing outcomes and provides a comparative analysis of the methods used to detect them.

The Core DNA Repair Pathways in CRISPR Editing

When the CRISPR-Cas9 system, composed of a Cas nuclease and a guide RNA (gRNA), introduces a DSB, the cell activates several competing repair pathways. The outcome depends on factors such as the cell type, cell cycle stage, and the presence of an exogenous repair template [13].

Figure 1: Key DNA Repair Pathways Activated by CRISPR-Cas9. DSB: Double-Strand Break. NHEJ is the most active but error-prone pathway, while HDR requires a donor template for precision. Alternative pathways like MMEJ and SSA contribute to complex indel patterns [11] [13].

Non-Homologous End Joining (NHEJ)

NHEJ is the dominant and most error-prone DSB repair pathway in somatic cells. It functions throughout the cell cycle by directly ligating the broken DNA ends together. This process often results in small insertions or deletions (indels) at the junction site [13]. In the context of CRISPR editing, these indels can disrupt the coding sequence of a gene, leading to frameshifts and premature stop codons, effectively creating a gene knockout. While efficient for disrupting gene function, the randomness of NHEJ makes it unsuitable for applications requiring precise sequence changes.

Homology-Directed Repair (HDR)

HDR is a more precise, albeit less efficient, pathway that uses a homologous DNA template—such as a sister chromatid or an exogenously supplied donor DNA—to accurately repair the break. This allows for specific genetic alterations, including gene knock-ins (e.g., inserting a fluorescent protein tag) or the correction of pathogenic point mutations [14] [13]. A major challenge is that HDR is primarily active in the late S and G2 phases of the cell cycle and is often outcompeted by the more active NHEJ pathway, leading to low efficiencies of precise editing.

Alternative Repair Pathways: MMEJ and SSA

Beyond NHEJ and HDR, alternative pathways significantly contribute to the mosaic of edits.

- Microhomology-Mediated End Joining (MMEJ): This pathway leverages short homologous sequences (5-25 base pairs) flanking the break to facilitate repair, typically resulting in deletions [11].

- Single-Strand Annealing (SSA): SSA requires longer homologous repeats and is particularly relevant in CRISPR knock-in experiments, where it can lead to imprecise donor integration and a specific faulty pattern known as "asymmetric HDR" [11].

The simultaneous activity of these pathways means that a CRISPR-edited sample is rarely a uniform population. Instead, it becomes a complex mixture of unedited cells, NHEJ-mediated indels, HDR-mediated precise edits, and other repair outcomes.
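As a rough illustration of how MMEJ outcomes can be anticipated from sequence alone, the sketch below looks for short sequences that occur on both sides of a cut site and reports the deletion that annealing them would produce. The minimum length of 5 bp follows the range given above; the 30 bp flank, the function name, and the example sequence are assumptions made for illustration.

```python
def find_microhomologies(seq: str, cut: int, min_len: int = 5, flank: int = 30):
    """List k-mers upstream of the cut that recur downstream of it.

    Each hit is (kmer, upstream_start, downstream_start, deletion_size), where
    deletion_size is the length removed if the two copies anneal and one copy
    plus the intervening sequence is lost.
    """
    offset = max(0, cut - flank)
    left = seq[offset:cut]
    right = seq[cut:cut + flank]
    hits = []
    for i in range(len(left) - min_len + 1):
        kmer = left[i:i + min_len]
        j = right.find(kmer)
        if j != -1:
            upstream = offset + i
            downstream = cut + j
            hits.append((kmer, upstream, downstream, downstream - upstream))
    return hits


# Hypothetical locus: "TAGCATGC" occurs on both sides of the cut (position 19).
locus = "TTGACCGTAGCATGCAAGT" + "CCTAGCATGCTTAGG"
for kmer, upstream, downstream, size in find_microhomologies(locus, cut=19):
    print(kmer, upstream, downstream, size)
```

Overlapping k-mers from one longer microhomology all point to the same deletion, so in practice the hits would be merged by predicted deletion size before being compared with the observed indel spectrum.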

The Critical Challenge of Genetic Mosaicism

Genetic mosaicism occurs when a single edited organism or cell population contains multiple different genotypes [10]. This is a common outcome in CRISPR experiments because the Cas nuclease can remain active through several cell divisions after the initial editing event. Consequently, each cell may be edited differently, leading to a patchwork of genetic variants.

The implications of mosaicism are significant. It can confound the interpretation of phenotypic results in basic research and poses a substantial risk in therapeutic contexts, where unintended edits could persist through generations [10]. A recent study using amplification-free long-read sequencing (PureTarget) characterized CRISPR edits in zebrafish and found that individual founder fish carried 7 to 18 distinct on-target variants, with some large deletions (e.g., a 1,053 bp deletion) being inherited by the next generation [10]. This underscores that mosaicism is not limited to small indels but can include large, complex structural variations that are difficult to detect with standard methods.

Validating the Mosaic: NGS vs. Sanger-Based Tools

Given the complexity of repair outcomes, selecting an appropriate validation method is paramount. The following section compares the gold standard, NGS, with popular Sanger sequencing-based computational tools.

Next-Generation Sequencing (NGS): The Comprehensive Picture

NGS, particularly amplicon sequencing, is widely regarded as the gold standard for CRISPR validation. It involves high-throughput sequencing of PCR amplicons spanning the target site, providing a deep, quantitative view of all editing events in a sample [15].

- Unbiased Detection: NGS can identify and quantify the entire spectrum of indels resulting from NHEJ, as well as precise HDR events [15].

- High Sensitivity: It can detect rare variants and low-frequency alleles with high accuracy, often down to <1% allele frequency [16].

- Complete Spectrum Analysis: Unlike other methods, NGS reliably characterizes complex outcomes, including large deletions, complex rearrangements, and imprecise knock-in events [10] [11].

- Off-Target Analysis: With techniques like GUIDE-seq or DISCOVER-Seq, NGS can be used to empirically nominate and quantify off-target effects across the genome [15].

Newer long-read sequencing technologies, such as PureTarget with HiFi sequencing, further enhance this by providing amplification-free, single-molecule views of edited loci. This avoids the PCR bias that can skew allele frequencies in standard amplicon sequencing and allows for the accurate detection of large structural variants and precise haplotype phasing [10].

Sanger-Based Computational Tools: A Practical but Limited Alternative

Computational tools like TIDE (Tracking of Indels by Decomposition) and ICE (Inference of CRISPR Edits) analyze Sanger sequencing trace data from edited samples to estimate editing efficiency and indel distribution. They are popular due to their lower cost and user-friendly nature [5] [4].

A systematic comparison of these tools using artificial sequencing templates with predetermined indels revealed critical limitations [5]:

- Variable Accuracy with Complexity: These tools estimate indel frequency with acceptable accuracy only when the indels are simple and involve a few base changes. Their performance becomes more variable with complex indels or knock-in sequences [5].

- Limited Deconvolution Capability: While they can effectively estimate the net size of indels, their ability to deconvolute the exact sequences of complex indels is limited and varies between tools [5].

- Divergent Results: A separate study reported that TIDE, ICE, and DECODR can produce "widely divergent indel frequency data" from the same CRISPR-edited samples [5].

The following table summarizes a quantitative comparison of these validation methods.

Table 1: Comparison of CRISPR Genome Editing Validation Methods

| Method | Principle | Key Advantages | Key Limitations | Best For |
|---|---|---|---|---|
| NGS (Amplicon) [16] [15] | Deep sequencing of PCR amplicons from target site | High sensitivity (<1% AF) [16], comprehensive indel & HDR quantification, detects large/complex variants, enables off-target analysis [15] | Higher cost, more complex data analysis, requires bioinformatics | Definitive validation, characterizing complex mosaicism, low-frequency edits, GxP studies |
| ICE (Synthego) [5] [4] | Decomposes Sanger traces to estimate indel frequency & types | User-friendly, good correlation with NGS for simple indels (R² = 0.96) [4], provides knockout score | Accuracy declines with complex indels/knock-ins [5], limited deconvolution | Rapid, cost-effective screening of NHEJ efficiency for simple edits |
| TIDE [5] [12] | Decomposes Sanger traces to estimate indel frequency & types | Cost-effective, rapid turnaround, good for simple +1 insertions [4] | Poor performance with complex edits, widely divergent results from NGS/other tools [5] [12] | Initial, low-cost assessment of editing success (yes/no) |
| T7E1 Assay [12] | Mismatch-specific cleavage of heteroduplex DNA | Very fast and inexpensive, no sequencing required | Not quantitative, low dynamic range, underestimates high efficiency edits, no sequence information [12] | Preliminary screening during guide RNA optimization |

Detailed Experimental Protocols for Key Validation Methods

Protocol 1: CRISPR Validation by Amplicon NGS

This protocol is ideal for comprehensively characterizing the full spectrum of edits, including mosaicism [15].

- Genomic DNA Extraction: Isolate high-quality genomic DNA from CRISPR-treated and control cells/organisms.

- Target Amplification: Design primers to amplify a 200-400 bp region surrounding the on-target CRISPR cut site. Include Illumina adapter sequences and sample-specific barcodes in the primers to enable multiplexing.

- Library Preparation & Sequencing: Pool the barcoded PCR products and prepare the library according to the sequencing platform's specifications (e.g., Illumina). Sequence on an appropriate platform (e.g., MiSeq).

- Data Analysis: Use a specialized bioinformatics pipeline (e.g., the one provided with the rhAmpSeq CRISPR Analysis System [15]) to:

  - Align sequences to the reference genome.

  - Quantify the percentage of reads with indels (NHEJ efficiency).

  - Quantify the percentage of reads with perfect HDR.

  - Identify and quantify specific indel sequences and their frequencies.

  - Detect large deletions and complex structural variants.
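The read-level bookkeeping behind these analysis steps can be sketched with a deliberately simplified classifier. It assumes every read has already been trimmed to exactly the amplicon span and is free of sequencing errors, so exact string comparison is enough; real pipelines align reads and tolerate errors. The sequences and category names are hypothetical.

```python
from collections import Counter


def classify_read(read: str, reference: str, hdr_expected: str) -> str:
    """Assign one trimmed amplicon read to a coarse outcome category."""
    if read == reference:
        return "unedited"
    if read == hdr_expected:
        return "perfect_hdr"
    if len(read) != len(reference):
        return "indel"
    return "substitution_or_other"


def summarize_outcomes(reads, reference: str, hdr_expected: str):
    """Return the percentage of reads per category and the distinct alleles seen."""
    if not reads:
        return {}, Counter()
    categories = Counter(classify_read(r, reference, hdr_expected) for r in reads)
    percentages = {name: 100.0 * n / len(reads) for name, n in categories.items()}
    alleles = Counter(r for r in reads if r != reference)
    return percentages, alleles


# Hypothetical 10-nt amplicon; the intended HDR edit changes A to T at position 4.
reference = "ACGTACGTAC"
hdr_expected = "ACGTTCGTAC"
reads = ([reference] * 5 + [hdr_expected] * 2
         + ["ACGTCGTAC"] * 2        # 1 bp deletion
         + ["ACGAACGTAC"])          # unintended substitution
percentages, alleles = summarize_outcomes(reads, reference, hdr_expected)
print(percentages)
print(alleles.most_common())
```

Counting distinct non-reference alleles, as the second return value does, is also a simple way to see how mosaic a sample is.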

Protocol 2: Validation Using Sanger Sequencing & ICE Analysis

This protocol provides a faster, more accessible alternative for initial efficiency checks [4].

- Genomic DNA Extraction & PCR: As in Protocol 1, isolate DNA and amplify the target region using standard PCR.

- Sanger Sequencing: Purify the PCR product and submit it for Sanger sequencing in both forward and reverse directions.

- ICE Analysis:

  - Access the ICE tool (Synthego) online.

  - Upload the Sanger sequencing trace file (.ab1) from the edited sample.

  - Upload the trace file from the wild-type control sample.

  - Input the sgRNA target sequence and the amplicon sequence.

  - The tool will generate an "ICE Score" (indel frequency), a knockout score, and a distribution of the most frequent indel types.
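To show how the reported scores relate to the indel distribution, the sketch below recomputes an indel frequency and a frameshift-based knockout estimate from a table of indel contributions. It follows the simplified definition used in this guide (knockout score as the share of frameshifting indels); the tool's own scoring may include additional cases, and the contribution values are invented.

```python
def summarize_indels(contributions):
    """Summarise an indel distribution.

    `contributions` maps net indel size in bp (0 = unedited, negative =
    deletion, positive = insertion) to the percentage of the population.
    Returns (indel_frequency, frameshift_percentage).
    """
    indel_frequency = sum(pct for size, pct in contributions.items() if size != 0)
    # Sizes that are not multiples of three shift the reading frame.
    frameshift = sum(pct for size, pct in contributions.items() if size % 3 != 0)
    return indel_frequency, frameshift


# Hypothetical distribution exported from a trace-decomposition tool.
contributions = {0: 30.0, -1: 25.0, 1: 20.0, -3: 15.0, -7: 10.0}
indel_frequency, knockout_estimate = summarize_indels(contributions)
print(indel_frequency, knockout_estimate)
```

In this example the in-frame 3 bp deletion counts toward editing efficiency but not toward the knockout estimate, which is why the two scores can differ noticeably for the same sample.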

The Scientist's Toolkit: Essential Reagents & Solutions

Table 2: Key Research Reagent Solutions for CRISPR Editing and Validation

| Reagent / Solution | Function | Example Use Case |
|---|---|---|
| Cas9 Nuclease & gRNA [5] | Forms the Ribonucleoprotein (RNP) complex that induces the targeted double-strand break. | Direct delivery of pre-formed RNP complexes for highly efficient editing with reduced off-target effects. |
| HDR Donor Template [14] [11] | Provides the homologous DNA sequence for precise repair. Can be single-stranded (ssODN) or double-stranded (e.g., plasmid). | Inserting an epitope tag (e.g., FLAG) or correcting a specific disease-causing point mutation via HDR. |
| NHEJ Inhibitors [11] | Chemical inhibitors (e.g., Alt-R HDR Enhancer V2) that suppress the NHEJ pathway. | Used to enhance the relative efficiency of HDR by blocking the dominant error-prone repair pathway. |
| rhAmpSeq CRISPR Analysis System [15] | An end-to-end NGS solution for designing and sequencing multiplexed amplicons. | Highly sensitive, targeted sequencing for quantifying on- and off-target editing events across many samples. |
| PureTarget Panels with HiFi Sequencing [10] | An amplification-free, long-read sequencing-based target enrichment method. | Unbiased characterization of the full spectrum of editing outcomes, including large structural variants and accurate haplotype phasing in mosaic samples. |

Competition between the NHEJ and HDR DNA repair pathways means that CRISPR genome editing inherently produces a mosaic of genetic outcomes. While Sanger-based tools like ICE and TIDE offer a practical starting point for estimating basic editing efficiency, their limitations in detecting complex mosaicism are well-documented [5] [12]. For research and development where accurate genotyping is critical, such as functional studies, disease modeling, and the development of gene therapies, next-generation sequencing is the gold standard. NGS provides the sensitivity, quantitative power, and comprehensive variant detection required to capture the full biological picture of genome editing, so that researchers can confidently validate their work against the challenging backdrop of genetic mosaicism.

The shift from simple qualitative confirmation of gene editing to precise quantitative analysis marks a significant evolution in CRISPR research. As CRISPR-Cas systems have revolutionized biological research, accurate quantification of editing outcomes has become essential to experimental success [5]. Defining and understanding key metrics (indel frequency, indel complexity, and specialized knockout/knock-in scores) enables researchers to properly evaluate the efficiency and precision of their editing experiments, particularly when comparing Next-Generation Sequencing (NGS) validation with more accessible Sanger sequencing methods [4].

The fundamental challenge in CRISPR analysis lies in the random nature of non-homologous end joining (NHEJ) repair, which generates a heterogeneous population of cells harboring various insertions and deletions (indels) at target sites [4]. Computational tools have emerged to deconvolute this complexity from Sanger sequencing data, each employing distinct algorithms to estimate editing efficiency and characterize the spectrum of resulting indels [5]. This guide objectively compares how these tools define, calculate, and report crucial editing metrics, providing researchers with the framework needed to select appropriate analysis methods and accurately interpret their gene editing results.

Computational Tools for CRISPR Analysis: A Comparative Landscape

Various computational tools have been developed to analyze CRISPR editing outcomes from Sanger sequencing data, each with unique algorithmic approaches and output metrics. The table below summarizes the key tools and their primary characteristics.

Table 1: Overview of Computational Tools for CRISPR Analysis from Sanger Data

| Tool Name | Primary Analysis Type | Key Strength | Reported Accuracy vs NGS |
|---|---|---|---|
| TIDE (Tracking of Indels by Decomposition) | Indel frequency and distribution | Established method; provides statistical significance for indels | Variable; struggles with complex indels [5] |
| ICE (Inference of CRISPR Edits) - Synthego | Editing efficiency and indel profiles | User-friendly; batch processing; KO and KI scores | High correlation (R² = 0.96) reported [4] [17] |
| DECODR (Deconvolution of Complex DNA Repair) | Indel frequency and sequence identification | Accurate indel sequence identification | Most accurate for majority of samples in comparative study [5] |
| CRISP-ID | Genotyping of multiple alleles | Can resolve up to three alleles from a single trace | 99.9% identity to single colony method [18] |
| CRISPECTOR2.0 | Allele-specific editing activity | Reference-free, allele-aware quantification | Enables haplotype-dependent activity analysis [19] |
| SeqScreener (Thermo Fisher) | Gene edit confirmation | Integrated in intuitive application; visual results | Robust algorithm for grading editing outcome [20] |

Quantitative Performance Comparison Across Platforms

Recent systematic comparisons reveal significant variability in performance metrics when different computational tools analyze the same sequencing data. The tables below summarize key findings from controlled studies.

Table 2: Performance Comparison Using Artificial Sequencing Templates with Predetermined Indels [5]

| Tool | Simple Indel Accuracy | Complex Indel Performance | Knock-in Analysis | Indel Sequence Identification |
|---|---|---|---|---|
| TIDE | Acceptable | Variable estimates | Specialized version (TIDER) available | Limited capabilities |
| ICE | Acceptable | Variable estimates | Limited capability | Variable with limitations |
| DECODR | Acceptable | Most accurate for majority of samples | Limited capability | Most useful for sequence identification |
| SeqScreener | Acceptable | Variable estimates | Limited capability | Variable with limitations |

Table 3: Variability in Indel Reporting from Somatic CRISPR/Cas9 Tumor Models [21]

| Analysis Platform | Reported Indel Number | Reported Indel Size | Reported Indel Frequency | Consistency Across Platforms |
|---|---|---|---|---|
| TIDE | Variable across platforms | Variable across platforms | Variable across platforms | High variability observed, particularly with larger indels common in somatic in vivo models |
| ICE (Synthego) | Variable across platforms | Variable across platforms | Variable across platforms | High variability observed, particularly with larger indels common in somatic in vivo models |
| DECODR | Variable across platforms | Variable across platforms | Variable across platforms | High variability observed, particularly with larger indels common in somatic in vivo models |
| Indigo | Variable across platforms | Variable across platforms | Variable across platforms | High variability observed, particularly with larger indels common in somatic in vivo models |

Defining and Calculating Key CRISPR Metrics

Indel Frequency

Indel frequency represents the percentage of DNA sequences in an edited sample that contain insertions or deletions compared to the wild-type sequence. This fundamental metric quantifies overall editing efficiency, indicating what proportion of the target genomic sequence has been successfully modified [17]. Different tools calculate this metric through various algorithmic approaches: TIDE uses a decomposition algorithm with non-negative regression, ICE employs a lasso regression model, while DECODR utilizes its own unique decomposition method [5] [21].

The accuracy of indel frequency estimation depends heavily on the complexity of editing outcomes. Studies demonstrate that most tools estimate frequency with acceptable accuracy when indels are simple and contain only a few base changes. However, estimates become more variable among tools when sequencing templates contain complex indels or knock-in sequences [5]. Performance also varies with the range of editing efficiency, showing more consistent results in mid-range frequencies (e.g., 30-70%) compared to very low or very high editing rates [5].
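As a minimal illustration of the definition, indel frequency is the summed share of all non-wild-type alleles. The sketch below assumes a decomposition tool has already produced per-allele contributions keyed by indel size (0 for wild type). The function name and input layout are hypothetical and do not reproduce the output schema of TIDE, ICE, or DECODR.

```python
def indel_frequency(contributions):
    """Return the percentage of sequences that carry an indel.

    `contributions` maps indel size in bp (negative = deletion,
    positive = insertion, 0 = wild type) to its relative abundance.
    Abundances are normalized here, so they need not sum to 1.
    """
    total = sum(contributions.values())
    if total <= 0:
        raise ValueError("contributions must contain positive abundances")
    edited = sum(v for size, v in contributions.items() if size != 0)
    return 100.0 * edited / total


# Hypothetical decomposition result for one edited sample.
sample = {0: 0.40, -1: 0.25, 1: 0.20, -7: 0.15}
print(f"Indel frequency: {indel_frequency(sample):.1f}%")
```

The hard part in practice is obtaining the contributions from a mixed Sanger trace, which is where the tools differ; the final percentage is simple arithmetic once that step is done.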

Indel Complexity

Indel complexity refers to the diversity of different insertion and deletion sequences generated at a target site. While not always represented by a single numerical score, this metric captures the heterogeneity of editing outcomes within a sample [5]. Tools represent this complexity differently: some provide detailed distributions of specific indel sequences and their relative abundances, while others may offer entropy-based measurements or visual representations of the editing landscape [19] [17].

Higher complexity samples—those containing multiple different indel sequences—present greater challenges for accurate deconvolution. The capability of computational tools to resolve complex indel sequences exhibits significant variability, with DECODR showing particular strength in identifying specific indel sequences according to comparative studies [5]. The presence of more than three distinct alleles in a single sample often exceeds the resolution capacity of most Sanger-based analysis tools, potentially requiring NGS for complete characterization [18].
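One way to condense indel complexity into a single number is Shannon entropy over the allele distribution. The sketch below is an illustration of that idea under the assumption that relative allele abundances are already available; it is not the specific measure reported by any of the tools discussed here.

```python
import math


def allele_entropy(abundances):
    """Shannon entropy (in bits) of an allele abundance distribution.

    0 bits means a single allele; higher values mean a more
    heterogeneous mixture of editing outcomes.
    """
    positive = [a for a in abundances if a > 0]
    total = sum(positive)
    if total == 0:
        return 0.0
    return -sum((a / total) * math.log2(a / total) for a in positive)


# Hypothetical samples: one dominant indel vs. four equally frequent indels.
dominant = [0.90, 0.05, 0.05]
even_mix = [0.25, 0.25, 0.25, 0.25]
print(f"dominant: {allele_entropy(dominant):.2f} bits")
print(f"even mix: {allele_entropy(even_mix):.2f} bits")
```

A sample with many similarly abundant alleles scores higher than one dominated by a single indel, which matches the intuition that it is harder to deconvolute from a Sanger trace.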

Knockout Score (KO Score)

The Knockout Score is a specialized metric that estimates the proportion of editing events likely to result in functional gene knockout. Synthego's ICE tool specifically defines this as "the proportion of cells with either a frameshift or 21+ bp indel" [17]. This metric is particularly valuable for researchers focused on complete gene disruption rather than overall editing rates, as it specifically quantifies edits that are most likely to cause premature stop codons and protein truncation.

Unlike general indel frequency, the KO Score applies biological context to editing outcomes by prioritizing frameshift mutations and large indels that dramatically disrupt coding sequences. This provides researchers with a more functionally relevant assessment of how many cells in their population are likely to have lost gene function [17].
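The quoted definition (frameshift or 21+ bp indel) can be expressed as a short filter over per-allele contributions. This is a sketch of that rule only, assuming contributions keyed by indel size as a hypothetical input format; it is not ICE's implementation.

```python
def knockout_score(contributions, large_indel_bp=21):
    """Percentage of sequences with a frameshift or a large indel.

    `contributions` maps indel size in bp (negative = deletion,
    positive = insertion, 0 = wild type) to relative abundance.
    An indel counts if its size is not a multiple of 3 (frameshift)
    or if it is at least `large_indel_bp` long.
    """
    total = sum(contributions.values())
    if total <= 0:
        raise ValueError("contributions must contain positive abundances")
    knocked_out = sum(
        v
        for size, v in contributions.items()
        if size % 3 != 0 or abs(size) >= large_indel_bp
    )
    return 100.0 * knocked_out / total


# Hypothetical sample: the in-frame 3 bp deletion is excluded,
# while the in-frame 24 bp deletion counts as a large indel.
sample_ko = {0: 0.40, -1: 0.25, 1: 0.20, -3: 0.05, -24: 0.10}
print(f"KO score: {knockout_score(sample_ko):.1f}%")
```

In this example the overall indel frequency is 60% but the KO score is lower, because the 3 bp in-frame deletion is edited yet not expected to disrupt the reading frame.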

Knock-in Score (KI Score)

The Knock-in Score specifically measures the proportion of sequences containing the desired precise knock-in edit when using donor DNA templates [17]. This metric is crucial for evaluating the success of homology-directed repair (HDR) experiments, where the goal is targeted insertion of specific sequences rather than random indels.

Knock-in efficiency is typically much lower than NHEJ-based editing, and requires specialized analysis approaches. While most general indel analysis tools have limited capability for knock-in quantification, specialized versions like TIDER (based on TIDE) have been developed specifically for this purpose and have been shown to outperform other tools for estimating knock-in efficiency [5].

Experimental Protocols for Tool Validation

Controlled Assessment Using Artificial Sequencing Templates

To quantitatively compare the performance of computational tools under controlled conditions, researchers have developed validation methodologies using artificial sequencing templates with predetermined indels [5].

Protocol Overview:

- CRISPR Editing: Introduce indels in zebrafish gene loci (otx2b, pax2a, pou2, sox2, sox3, sox11a, sox11b, sox19b) using CRISPR-Cas9 or CRISPR-Cas12a RNP complexes microinjected into yolk of 1-cell stage embryos [5]

- Amplification and Cloning: Amplify genomic fragments encompassing target sites via PCR and clone into pUC19 vector using restriction sites in primers [5]

- Template Preparation: Combine cloned alleles with predetermined indels in known ratios to create artificial sequencing templates with defined editing complexities [5]

- Tool Analysis: Analyze resulting Sanger sequencing trace data with multiple computational tools (TIDE, ICE, DECODR, SeqScreener) [5]

- Accuracy Assessment: Compare reported indel frequencies and sequences with known input values to determine tool-specific accuracy [5]

This approach enables direct quantification of performance metrics without the uncertainty of true editing heterogeneity, providing standardized comparison across platforms.
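The accuracy assessment step amounts to comparing each tool's reported indel frequency with the known mixing ratio. A minimal sketch follows, using mean absolute error as one possible summary; the template names and all numbers are placeholders for illustration and are not data from the cited study.

```python
def mean_absolute_error(expected, reported):
    """Mean absolute difference (percentage points) over shared templates."""
    shared = sorted(set(expected) & set(reported))
    if not shared:
        raise ValueError("no templates in common")
    return sum(abs(expected[k] - reported[k]) for k in shared) / len(shared)


# Placeholder values: known indel frequency of each artificial template (%).
expected = {"mix_10": 10.0, "mix_50": 50.0, "mix_90": 90.0}

# Placeholder values: what two hypothetical tools reported for those templates.
reported = {
    "tool_a": {"mix_10": 14.0, "mix_50": 49.0, "mix_90": 84.0},
    "tool_b": {"mix_10": 11.0, "mix_50": 52.0, "mix_90": 89.0},
}

for tool, values in reported.items():
    print(f"{tool}: MAE = {mean_absolute_error(expected, values):.2f} points")
```

Reporting the error separately for low, mid-range, and high expected frequencies would show whether a tool degrades at the extremes, as the comparisons above suggest it may.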

In Vivo Somatic Tumor Model Analysis

For evaluating tool performance in complex biological systems, somatic CRISPR/Cas9 tumor models provide authentic in vivo editing data with inherent complexity [21].

Protocol Overview:

- Model Generation: Generate malignant peripheral nerve sheath tumors (MPNSTs) via adenoviral delivery of Cas9 and gRNAs targeting Nf1 and p53 directly injected into mouse sciatic nerve [21]

- Cell Line Derivation: Establish cell lines from harvested tumors through mechanical dissociation and enzymatic digestion (Collagenase Type IV, dispase) [21]

- Target Amplification: Amplify Nf1 and p53 target regions using Phusion high-fidelity DNA polymerase with specific primers [21]

- Sequencing Preparation: Clean PCR amplicons using Monarch PCR and DNA Cleanup Kit [21]

- Multi-Platform Analysis: Process sequencing chromatograms through TIDE, Synthego ICE, DECODR, and Indigo using identical input files [21]

- Variance Quantification: Compare reported number, size, and frequency of indels across platforms to identify tool-specific variability [21]

This methodology highlights how different software platforms can report widely divergent indel data from the same biological sample, particularly with larger indels common in somatic in vivo models [21].
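The variance quantification step can be sketched as a per-sample summary of how far the platforms disagree. The example assumes each platform's indel frequency for the same chromatogram has been collected into a dictionary; the tool labels and values are placeholders, not results from the cited work.

```python
from statistics import mean, pstdev


def platform_spread(calls):
    """Summarize disagreement between tools for a single sample.

    `calls` maps a tool label to its reported indel frequency (%).
    """
    values = list(calls.values())
    if len(values) < 2:
        raise ValueError("need calls from at least two tools")
    return {
        "mean": mean(values),
        "range": max(values) - min(values),
        "sd": pstdev(values),  # population SD across the tools compared
    }


# Placeholder calls from four hypothetical tools on one tumor-derived sample.
calls = {"tool_1": 40.0, "tool_2": 55.0, "tool_3": 62.0, "tool_4": 47.0}
summary = platform_spread(calls)
print(f"mean={summary['mean']:.1f}%  range={summary['range']:.1f}  sd={summary['sd']:.1f}")
```

A large range for a sample is a practical signal that the trace may contain large or complex indels and that NGS confirmation is worth considering.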

Experimental Workflow and Pathway Analysis

The following diagram illustrates the key decision points and methodological pathways for selecting and implementing CRISPR analysis tools, from initial editing to final metric interpretation:

Figure: Decision Pathway for CRISPR Analysis Tool Selection

Essential Research Reagent Solutions

The table below catalogues essential laboratory reagents and materials required for implementing the experimental protocols and analyses described in this guide.

Table 4: Essential Research Reagents for CRISPR Analysis Workflows

| Reagent/Material | Specific Example | Function in Workflow | Protocol Reference |
|---|---|---|---|
| CRISPR Nucleases | Alt-R S.p. Cas9 Nuclease V3, Alt-R A.s. Cas12a Nuclease Ultra | Generation of DSBs at target genomic loci | [5] |
| Guide RNA Components | Alt-R CRISPR-Cas9 crRNA, Alt-R CRISPR-Cas9 tracrRNA | Target specificity for CRISPR nucleases | [5] [22] |
| High-Fidelity Polymerase | KOD One PCR Master Mix, Phusion high-fidelity DNA polymerase | Accurate amplification of target regions for sequencing | [5] [21] |
| Cloning Vector | pUC19 vector | Molecular cloning of PCR amplicons for sequencing | [5] |
| DNA Cleanup Kits | Monarch PCR and DNA Cleanup Kit | Purification of PCR amplicons before sequencing | [21] |
| Cell Dissociation Enzymes | Collagenase Type IV, dispase | Dissociation of tumor tissue for cell line generation | [21] |
| Electroporation System | Genome Editor electroporator, LF501PT1-10 electrode | Delivery of RNP complexes into cells/embryos | [22] |
| Embryo Culture Media | KSOM medium | In vitro culture of edited embryos | [22] |

The expanding toolkit for CRISPR analysis presents researchers with both opportunities and challenges in accurately quantifying editing outcomes. The evidence demonstrates that while Sanger-based computational tools provide cost-effective alternatives to NGS, their performance varies significantly depending on editing context, with DECODR showing superior accuracy for indel sequence identification and TIDER excelling at knock-in efficiency analysis [5]. The observed variability in reported editing metrics across platforms underscores the importance of selecting analysis tools specific to experimental contexts, particularly for complex in vivo applications where larger indels are common [21].

For researchers operating within the NGS validation paradigm, Sanger-based tools offer practical screening solutions when appropriately calibrated and understood. The key metrics of indel frequency, complexity, and specialized KO/KI scores provide complementary information, and the most useful metric depends on the experimental goal: assessing overall editing efficiency, characterizing editing heterogeneity, or quantifying functionally relevant disruptions. As CRISPR moves toward clinical use, precise understanding and standardized reporting of these metrics will be essential for comparing editing approaches across studies and advancing the field toward more precise genomic engineering.

Confirming the success of a gene-editing experiment is a critical step in the research workflow. The primary quantitative measure of success is the average editing efficiency, or indel frequency, which informs crucial decisions on whether to proceed with a pool of cells or isolate single-cell clones [23]. The evolution of validation technologies has moved from simple gel-based assays to sophisticated sequencing methods, each with distinct advantages and limitations. Next-Generation Sequencing (NGS) has emerged as a powerful tool, providing comprehensive qualitative and quantitative data [24]. However, Sanger sequencing-based methods remain widely used due to their accessibility and cost-effectiveness, especially when coupled with modern computational decomposition tools [4] [25]. This guide provides an objective comparison of these technologies, offering experimental data and protocols to help researchers select the most appropriate method for their specific application in CRISPR genome editing.

Comparative Analysis of CRISPR Editing Efficiency Methods

The methods for analyzing CRISPR edits can be broadly categorized into gel-based assays, Sanger sequencing with computational decomposition, and high-throughput Next-Generation Sequencing. The table below summarizes the key characteristics of each approach.

Table 1: Overview of Major CRISPR Editing Analysis Methods

| Method | Key Principle | Throughput | Quantitative Capability | Information Depth | Best For |
|---|---|---|---|---|---|
| T7 Endonuclease I (T7E1) Assay | Cleaves heteroduplex DNA formed by wild-type and indel-containing strands [26]. | Low | Semi-quantitative [26] | Low; confirms editing but does not identify specific indels [4]. | Rapid, low-cost initial screening where sequence-level data is not required [4]. |
| TIDE & ICE (Sanger-based) | Computational decomposition of Sanger sequencing chromatograms to estimate indel frequency and types [26] [25]. | Medium | Quantitative (with limitations for complex edits) [25] | Medium; provides indel frequency and, to varying degrees, identifies specific indels [25]. | Cost-effective validation that provides sequence-level detail, suitable for most routine knockout experiments [4]. |
| Next-Generation Sequencing (NGS) | High-throughput sequencing of PCR amplicons to directly sequence every DNA molecule in a sample [23] [24]. | High | Highly quantitative and sensitive [24] | High; provides precise indel frequency, spectrum of all mutations, and can detect large deletions and complex edits [4] [24]. | Gold-standard validation; essential for comprehensive analysis of editing outcomes, off-target assessment, and sensitive detection of rare events [24]. |

A systematic comparison of computational tools for Sanger sequencing data revealed that while tools like TIDE, ICE, and DECODR perform well with simple indels, their accuracy can vary when dealing with more complex editing outcomes or when indel frequencies are very low or high [25]. A key study demonstrated that the ICE tool showed a high correlation with NGS data (R² = 0.96), supporting its use as a credible alternative when NGS is not accessible [4]. In contrast, the T7E1 assay is known to sometimes underrepresent editing efficiency in a non-linear fashion, reducing its predictive value [23].
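For readers who want to run the same kind of check on their own paired measurements, the R² statistic quoted above is the squared Pearson correlation. The sketch below computes it for Sanger-derived and NGS-derived indel frequencies; the paired values are placeholders, not data from the cited study.

```python
def r_squared(x, y):
    """Squared Pearson correlation between two paired series."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two series of equal length with at least 2 points")
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant series")
    return (sxy * sxy) / (sxx * syy)


# Placeholder paired indel frequencies (%) for the same samples.
sanger_calls = [12.0, 35.0, 48.0, 71.0, 90.0]
ngs_calls = [10.0, 38.0, 50.0, 68.0, 93.0]
print(f"R^2 = {r_squared(sanger_calls, ngs_calls):.3f}")
```

A high R² shows that two methods rank samples consistently, but it does not rule out a systematic offset, so it is worth also inspecting the per-sample differences.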

Table 2: Quantitative Performance Comparison of Sanger-Based Computational Tools

| Tool | Reported Correlation with NGS (when available) | Strengths | Key Limitations |
|---|---|---|---|
| TIDE | Not reported | Good for simple indels; can predict single-base insertions [4] [25]. | Struggles with complex indels and large insertions/deletions without manual parameter adjustment [4] [25]. |
| ICE (Synthego) | R² = 0.96 [4] | User-friendly; detects a wide range of indels including large insertions/deletions; provides a "Knockout Score" [4]. | Accuracy can decrease with highly complex indel mixtures or extreme (very low/high) efficiency samples [25]. |
| DECODR | Not reported | In one study, provided the most accurate estimations of indel frequencies for most samples and was useful for identifying indel sequences [25]. | Performance may vary depending on the nature of the genome editing [25]. |
| ddPCR (probe-based assay, not Sanger-based; listed for comparison) | Not reported; described as highly precise and quantitative [26] | Excellent for fine discrimination between edit types (e.g., NHEJ vs. HDR) and quantifying edited cell frequencies [26]. | Requires specific fluorescent probes; not suitable for discovering unknown indels [26]. |

Experimental Protocols for Key Assays

T7 Endonuclease I (T7E1) Assay Protocol

The T7E1 assay is a mismatch cleavage method used for the initial assessment of nuclease activity [26].

- PCR Amplification: Amplify the genomic target region (typically 300-1000 bp) from both edited and wild-type control cells. Purify the PCR products using a commercial clean-up kit [26].

- Heteroduplex Formation: Denature and re-anneal the purified PCR amplicons to form heteroduplexes. Use a thermocycler with the following program: 95°C for 5-10 minutes, then ramp down to 25°C at a rate of 0.1-2.0°C per second [26] [3].

- T7E1 Digestion: Incubate the re-annealed DNA with T7 Endonuclease I enzyme. A typical 10 µL reaction contains 8 µL of purified PCR product, 1 µL of the provided NEBuffer, and 1 µL of T7E1 enzyme. Incubate at 37°C for 30-90 minutes [26].

- Analysis via Gel Electrophoresis: Resolve the digestion products on a 1-2% agarose gel. The cleaved fragments will appear as lower molecular weight bands. Editing efficiency can be estimated semi-quantitatively using densitometric analysis of the band intensities with the formula $\%\,\text{Indel} = 100 \times \left(1 - \sqrt{1 - \frac{b + c}{a + b + c}}\right)$, where $a$ is the integrated intensity of the undigested PCR product band, and $b$ and $c$ are the intensities of the cleavage products [26] [3].
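The densitometry formula from the last step can be evaluated directly. The sketch below takes the three band intensities a, b, and c as defined in the protocol; the function name and the example intensities are illustrative assumptions.

```python
import math


def t7e1_indel_percent(a, b, c):
    """Estimate % indel from T7E1 gel band intensities.

    a: integrated intensity of the undigested PCR product band
    b, c: integrated intensities of the two cleavage product bands
    """
    if min(a, b, c) < 0:
        raise ValueError("band intensities must be non-negative")
    total = a + b + c
    if total == 0:
        raise ValueError("at least one band must have signal")
    fraction_cleaved = (b + c) / total
    return 100.0 * (1.0 - math.sqrt(1.0 - fraction_cleaved))


# Illustrative intensities in arbitrary densitometry units.
print(f"{t7e1_indel_percent(a=640, b=200, c=160):.1f}%")  # prints 20.0%
```

In this example 36% of the signal is cleaved but the estimate is only 20%. The square root reflects the assumption that strands re-anneal at random, so a heteroduplex forms whenever at least one of the two strands carries an indel.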

Sanger Sequencing with ICE Analysis Protocol

This protocol uses Sanger sequencing followed by computational analysis for a more quantitative result.

- PCR Amplification and Sample Preparation: Amplify the target region from edited and wild-type control samples. It is critical to use a high-fidelity DNA polymerase to minimize PCR-introduced errors. Purify the PCR products [4].

- Sanger Sequencing: Submit the purified PCR products for Sanger sequencing from a single direction, using one of the PCR primers. The resulting data should be received in .ab1 format (chromatogram files) [4].

- ICE Analysis:

- Access the ICE (Inference of CRISPR Edits) webtool from Synthego.

- Upload the wild-type control sample .ab1 file and the edited sample .ab1 file.

- Input the target amplicon sequence and the specific guide RNA (gRNA) sequence used for the experiment.

- The tool automatically aligns the sequences and performs its decomposition algorithm. No manual adjustment of parameters is typically required.

- The output includes an "ICE Score" (indel frequency), a "Knockout Score" (the proportion of sequences carrying a frameshift or an indel of 21 bp or more), and a detailed breakdown of the specific types and proportions of indels detected [4].

Targeted Next-Generation Sequencing (NGS) Protocol

NGS is the gold standard for comprehensive editing analysis, from on-target efficiency to off-target effects [24].

- Library Preparation (Amplicon Sequencing):

- Primary PCR: Amplify the target genomic regions from edited and wild-type control samples. Use a high-fidelity polymerase.

- Indexing PCR (Adapter Addition): In a second PCR step, attach unique dual indices (UDIs) and sequencing adapters to the amplicons from each sample. This allows multiplexing, that is, pooling dozens of samples into a single sequencing run [24].

- Library Quantification and Normalization: Precisely quantify the final libraries using a method like fluorometry. Normalize libraries to equal concentrations and pool them together.

- Sequencing: Denature the pooled library and load it onto an NGS instrument, such as an Illumina sequencer, for paired-end sequencing. The required read depth depends on the application, but for indel detection, a depth of 50,000x to 100,000x per amplicon is often recommended to ensure sensitivity for low-frequency events.

- Data Analysis:

- Demultiplexing: The sequencer's software separates the sequenced reads back into individual sample files based on their unique indices.

- Quality Control: Assess read quality using tools like FastQC.

- Alignment: Map the sequencing reads to a reference genome (or amplicon reference sequence) using aligners like BWA or Bowtie2.

- Variant Calling: Use specialized genome editing tools (e.g., CRISPResso2, amplicon-indel-analyzer) to compare the aligned reads from the edited sample to the reference and precisely identify and quantify all insertion, deletion, and substitution events at the target site. This provides a complete spectrum of editing outcomes.
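To make the final quantification step concrete, the sketch below counts aligned reads whose CIGAR string contains an insertion or deletion near the expected cut site. It assumes alignments are already available as (position, CIGAR) pairs following SAM conventions. It is a simplified illustration and not the algorithm used by CRISPResso2 or any other tool named above, which also handle quality filtering, substitutions, and more.

```python
import re

CIGAR_OP = re.compile(r"(\d+)([MIDNSHP=X])")


def has_indel_near(pos, cigar, cut_site, window=10):
    """True if the alignment has an I or D within `window` bp of the cut site.

    `pos` is the 1-based leftmost reference position (SAM convention).
    """
    ref = pos
    low, high = cut_site - window, cut_site + window
    for length, op in CIGAR_OP.findall(cigar):
        length = int(length)
        if op == "I":
            # An insertion consumes no reference bases; it sits just before `ref`.
            if low <= ref <= high:
                return True
        elif op == "D":
            # A deletion spans reference positions ref .. ref + length - 1.
            if ref <= high and ref + length - 1 >= low:
                return True
            ref += length
        elif op in "MN=X":
            ref += length
        # S, H and P do not consume reference bases.
    return False


def indel_percent(alignments, cut_site, window=10):
    """Percentage of reads with an indel overlapping the cut-site window."""
    if not alignments:
        raise ValueError("no alignments supplied")
    edited = sum(has_indel_near(p, c, cut_site, window) for p, c in alignments)
    return 100.0 * edited / len(alignments)


# Hypothetical reads aligned to a 100 bp amplicon with the cut site at position 50.
reads = [(1, "100M"), (1, "48M2D50M"), (1, "50M1I49M"), (1, "10M3D87M")]
print(f"{indel_percent(reads, cut_site=50):.1f}%")  # prints 50.0%
```

Restricting the count to a window around the cut site is a common way to avoid counting sequencing or PCR errors elsewhere in the amplicon as edits; the window size here is an arbitrary choice.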

Technology Selection Workflow

The following diagram illustrates a decision-making workflow to select the most appropriate CRISPR analysis method based on project goals and constraints.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful execution of the described protocols requires specific reagents and tools. The following table details essential items for a CRISPR analysis workflow.

Table 3: Key Research Reagent Solutions for CRISPR Editing Analysis

| Item | Function / Description | Example Use Case |
|---|---|---|
| High-Fidelity DNA Polymerase | A PCR enzyme with proofreading activity to minimize errors during amplicon generation, crucial for accurate sequencing and cleavage assays. | Amplifying the target genomic locus for all downstream analysis methods (T7E1, Sanger, NGS) [26]. |
| T7 Endonuclease I | An enzyme that recognizes and cleaves mismatched DNA in heteroduplexes, forming the basis of the T7E1 assay. | Detecting the presence of CRISPR-induced indels via gel electrophoresis [26] [3]. |
| Sanger Sequencing Service/Kit | Provides the reagents or service for chain-termination sequencing, generating chromatogram (.ab1) files of the target amplicon. | Generating input data for computational tools like ICE, TIDE, and DECODR [4] [25]. |
| NGS Library Prep Kit | A kit designed for preparing sequencing libraries from amplicons, typically including enzymes for tagmentation or adapter ligation, indexes, and buffers. | Creating multiplexed libraries for targeted sequencing on platforms like Illumina [24]. |
| Computational Analysis Tools (ICE, TIDE) | Web-based or standalone software that deconvolutes Sanger sequencing traces from edited samples to quantify indel frequencies. | Determining editing efficiency and KO scores from Sanger data without the need for NGS [4]. |
| NGS Data Analysis Software | Specialized bioinformatics tools (e.g., CRISPResso2) designed to align NGS reads and call CRISPR-induced mutations from amplicon sequencing data. | Precisely quantifying the full spectrum of indels and their frequencies from high-throughput sequencing data [24]. |

The landscape of technologies for validating CRISPR editing efficiency is diverse, ranging from the simple, cost-effective T7E1 assay to the comprehensive power of NGS. Sanger sequencing-based computational tools like ICE have effectively bridged the gap, offering researchers a balanced option that provides quantitative, sequence-level data at a lower cost than NGS. The choice of method ultimately depends on the specific requirements of the experiment, including the need for quantitative precision, depth of information, throughput, and budget. As the field advances, the integration of AI and automated systems like CRISPR-GPT promises to further streamline experiment design and analysis, but the fundamental understanding of these core validation technologies remains essential for researchers to critically assess and advance their genome editing work [27].

A Deep Dive into CRISPR Validation Methods: Protocols and Analysis Tools

Next-generation sequencing (NGS) has established itself as the gold standard for validating genome editing experiments, offering unparalleled depth and accuracy. This review provides a comprehensive overview of targeted amplicon sequencing, a powerful NGS method for assessing CRISPR editing efficiency. We compare its performance against alternative sequencing and analysis techniques, detailing experimental workflows, key metrics, and reagent solutions. Framed within the broader thesis of NGS validation for CRISPR editing efficiency versus Sanger sequencing, this guide equips researchers with the knowledge to implement robust, data-driven validation protocols for their genome editing programs.

The advent of CRISPR-Cas9 genome editing has revolutionized biological research and therapeutic development. However, the success of any CRISPR experiment hinges on accurately verifying the intended genetic modifications. In the context of a broader thesis comparing validation methods, this article positions targeted amplicon sequencing as the superior technique for comprehensive editing analysis. Unlike methods that merely indicate the presence of edits, NGS provides a complete picture of the editing landscape, including precise indel sequences, their relative frequencies, and potential off-target effects [4].

While Sanger sequencing has been a traditional mainstay for sequence verification, its limit of detection for mixed sequences is only 15-20%, making it poorly suited for analyzing the heterogeneous cell populations typically generated by CRISPR editing [28] [29]. In contrast, targeted amplicon sequencing delivers high sensitivity (down to 1% for low-frequency variants), superior discovery power for novel variants, and the ability to sequence hundreds to thousands of samples simultaneously through multiplexing [30] [28]. This massively parallel sequencing capability, combined with rapidly decreasing costs, has cemented NGS as the gold standard for CRISPR validation in rigorous scientific and drug development applications.

Workflow of Targeted Amplicon Sequencing

Targeted amplicon sequencing is a method that uses polymerase chain reaction (PCR) to amplify specific genomic regions of interest, which are then sequenced on an NGS platform [30] [31]. The streamlined, PCR-based workflow makes it particularly suitable for applications requiring rapid turnaround and high sensitivity, such as verifying CRISPR-Cas9-mediated indels [30] [3].

Step-by-Step Protocol

The following diagram illustrates the core workflow for targeted amplicon sequencing in CRISPR validation:

Detailed Workflow Description:

- Genomic DNA Extraction: Extract high-quality genomic DNA from CRISPR-edited cells and appropriate control cells (e.g., non-edited or mock-treated) [3].

- Primary Target Amplification (PCR 1): Perform the first PCR using primers specifically designed to flank the CRISPR target site. These primers include a locus-specific sequence (usually 20-25 bp) and a universal adapter sequence (e.g., 21 bp "GAA GGT GAC CAA GTT CAT GCT") [32]. This step enriches the specific region of interest from the complex genomic background.

- PCR Product Cleanup: Purify the amplified products to remove excess primers, dNTPs, and enzymes using methods like ExoSAP-IT or Sephadex columns [32].

- Library Construction (PCR 2): Use a second, limited-cycle PCR to attach platform-specific sequencing adapters and sample-specific barcodes (Multiplexing Identifiers, MIDs). This step uses primers containing the universal adapter sequence, a unique 10-bp barcode, a 4-bp key, and the sequencer-specific primer (e.g., Titanium 454 primer) [32]. This crucial step allows for the pooling and simultaneous sequencing of hundreds of samples.

- Library Cleanup and Quantification: Purify the final amplicon libraries and quantify them using a fluorescence-based method like the Quant-iT PicoGreen assay to ensure accurate molarity for pooling [32].

- Library Pooling and Sequencing: Normalize and pool the barcoded libraries into a single tube for a single sequencing run. The pool is then loaded onto an NGS platform (e.g., Illumina, Ion Torrent) for massively parallel sequencing [30] [3].

- Bioinformatic Analysis: Process the raw sequencing data through a bioinformatics pipeline. This includes demultiplexing (separating samples by barcode), alignment to a reference sequence, and variant calling to identify and quantify the spectrum and frequency of indels at the target site [30] [4].
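The demultiplexing part of the bioinformatic analysis can be sketched as grouping reads by their leading sample barcode. The example assumes an in-line 10-bp barcode at the start of each read and exact matching only. The barcode sequences and sample names are hypothetical, and production demultiplexers typically also tolerate mismatches and handle platform-specific key or index reads.

```python
from collections import defaultdict


def demultiplex(reads, barcode_to_sample, barcode_len=10):
    """Group reads by exact match of their leading barcode.

    The barcode is stripped from each read; reads with an unknown
    barcode are collected under "undetermined".
    """
    bins = defaultdict(list)
    for read in reads:
        barcode, insert = read[:barcode_len], read[barcode_len:]
        sample = barcode_to_sample.get(barcode, "undetermined")
        bins[sample].append(insert)
    return dict(bins)


# Hypothetical 10-bp barcodes and short toy reads.
barcodes = {"ACGTACGTAC": "edited", "TGCATGCATG": "control"}
pooled_reads = [
    "ACGTACGTAC" + "GATTACA",
    "TGCATGCATG" + "GATTTACA",
    "ACGTACGTAC" + "GATACA",
    "NNNNNNNNNN" + "GATTACA",
]
for sample, inserts in demultiplex(pooled_reads, barcodes).items():
    print(sample, len(inserts))
```

Tracking the size of the "undetermined" bin is a simple sanity check: a large share of unassigned reads usually points to a barcode sheet error or poor read quality at the start of the reads.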

Comparative Analysis of CRISPR Analysis Methods

Selecting the appropriate method to validate CRISPR editing depends on the required level of detail, sample throughput, and available resources. The table below provides a direct comparison of the most common techniques.

Table 1: Comparison of Methods for Analyzing CRISPR Editing Efficiency

| Method | Principle | Sensitivity/LOD | Key Advantages | Key Limitations | Ideal Use Case |
|---|---|---|---|---|---|
| Targeted Amplicon Sequencing (NGS) [3] [28] [4] | Massively parallel sequencing of PCR-amplified target sites | ~1% [28] | Gold standard; comprehensive variant data; high sensitivity; high-throughput | Higher cost & complexity; requires bioinformatics | Validating heterogeneous edits; detecting low-frequency variants; research requiring publication-quality data |
| Sanger Sequencing + ICE Analysis [4] | Sanger sequencing analyzed with Inference of CRISPR Edits (ICE) software | ~5% (Inferred) | Cost-effective; high correlation with NGS (R² = 0.96) [4]; user-friendly | Less accurate for complex editing landscapes; indirect quantification | Rapid screening and validation for labs without NGS access |
| T7 Endonuclease 1 (T7E1) Assay [4] | Enzyme cleavage of heteroduplex DNA formed by wild-type and edited sequences | ~5-10% (Estimated) | Rapid and inexpensive; no sequencing required | Semi-quantitative at best; no sequence-level information | Initial, low-cost screening during guide RNA optimization |

Beyond the methods in Table 1, hybridization capture is another targeted NGS approach. While amplicon sequencing uses PCR for target enrichment, hybridization capture uses complementary DNA or RNA probes to "pull-down" regions of interest [33] [34]. This makes it more suitable for sequencing very large genomic regions (e.g., whole exomes or panels spanning megabases) but typically with a more complex workflow, longer hands-on time, and higher cost per sample than amplicon sequencing [33]. For focused analysis of specific CRISPR target sites, amplicon sequencing is generally the more efficient and cost-effective NGS method.

Key Metrics for Evaluating Targeted NGS Experiments

To ensure the quality and reliability of amplicon sequencing data, researchers must evaluate key performance metrics post-sequencing.

Table 2: Essential NGS Metrics for CRISPR Validation QC

| Metric | Definition | Impact on Data Quality | Target for CRISPR QC |
|---|---|---|---|
| Depth of Coverage [35] | The average number of times each base in the target region is sequenced. | Higher depth increases confidence in variant calling, essential for detecting low-frequency indels. | >1000X for confident detection of low-frequency (<1%) variants [35]. |
| On-Target Rate [35] | The percentage of sequencing reads that map to the intended target regions. | Indicates enrichment specificity; a high rate means efficient use of sequencing capacity. | Typically very high (>90%) for amplicon sequencing due to PCR enrichment [31]. |
| Uniformity of Coverage [35] | The evenness of sequence coverage across all target bases. | Poor uniformity can lead to "dropouts" where some regions have insufficient coverage. | Aim for high uniformity (low Fold-80 penalty, ideally close to 1) [35]. |
| Duplicate Read Rate [35] | The fraction of reads that are exact copies, often from PCR over-amplification. | High rates can inflate coverage estimates and introduce PCR bias. | Minimize through optimized PCR cycles and sufficient starting material. |
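The link between depth of coverage and sensitivity can be made explicit with a simple binomial model: at a given depth, how likely is it that a variant present at a given allele fraction is seen in at least some minimum number of reads? The sketch below assumes independent sampling of reads and ignores sequencing and PCR error, so it gives an optimistic upper bound. The threshold of five supporting reads is an arbitrary illustrative choice, not a recommendation from the cited sources.

```python
from math import comb


def detection_probability(depth, allele_fraction, min_reads=5):
    """P(at least `min_reads` variant reads) under binomial sampling."""
    if not 0.0 <= allele_fraction <= 1.0:
        raise ValueError("allele_fraction must be between 0 and 1")
    if depth < min_reads:
        return 0.0
    p_fewer = sum(
        comb(depth, k)
        * allele_fraction**k
        * (1.0 - allele_fraction) ** (depth - k)
        for k in range(min_reads)
    )
    return 1.0 - p_fewer


# Chance of seeing a 1% indel in at least 5 reads at several depths.
for depth in (100, 500, 1000, 5000):
    p = detection_probability(depth, 0.01)
    print(f"depth {depth:>5}: P(detect) = {p:.3f}")
```

Under these assumptions a 1% variant is rarely supported by five reads at 100X but almost always at 1000X, which is consistent with the depth target in the table. Real detection limits are lower in practice because background error must be distinguished from true edits.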

The Scientist's Toolkit: Essential Reagent Solutions

Successful implementation of a targeted amplicon sequencing workflow requires several key reagents and tools.

Table 3: Essential Research Reagents for Amplicon Sequencing Workflows

| Reagent / Solution | Function | Considerations for CRISPR Validation |
|---|---|---|
| Locus-Specific Primers [30] [32] | Amplify the specific genomic region containing the CRISPR target site. | Must be designed to flank the cut site; require high specificity and efficiency. |
| High-Fidelity DNA Polymerase [32] | Catalyzes the PCR amplification with minimal error rates. | Critical to avoid introducing sequencing errors that could be mistaken for real variants. |
| Library Preparation Kit [31] [34] | Provides enzymes and buffers for adding barcodes and sequencing adapters. | Kits with streamlined, transposase-based workflows (e.g., seqWell plexWell) can reduce time and cost [34]. |
| Barcoded Adapters (MIDs) [32] | Unique DNA sequences added to each sample to enable multiplexing. | Allow pooling of dozens to hundreds of samples in one sequencing run, reducing cost per sample. |
| Sequence Capture Panels | Pre-designed sets of probes for specific applications. | e.g., xGen SARS-CoV-2 Amplicon Panel for pathogen tracking [31]; custom panels can be designed for any target. |
| Bioinformatics Software [30] [4] | Tools for demultiplexing, alignment, and variant calling. | Options range from commercial suites to open-source tools (e.g., BWA, GATK); ease-of-use varies. |

Targeted amplicon sequencing is widely regarded as the gold standard for validating CRISPR genome editing. Its high sensitivity, its ability to deliver both quantitative and qualitative data on the full spectrum of editing outcomes, and its scalability make it a core tool for rigorous research and therapeutic development. While simpler methods like T7E1 or Sanger sequencing with ICE analysis have their place in initial screening, the comprehensive data generated by NGS is fundamental for characterizing heterogeneous editing populations and detecting rare off-target events. As NGS technologies continue to advance and costs decrease, targeted amplicon sequencing is likely to remain the cornerstone of robust, data-driven CRISPR validation.

The validation of CRISPR-Cas gene editing experiments represents a critical bottleneck in the research workflow, with accurate quantification of insertion and deletion (indel) efficiencies being paramount for experimental success. While next-generation sequencing (NGS) provides the gold standard for comprehensive editing analysis, its cost and bioinformatics requirements often render it impractical for routine validation [4]. In response, computational tools that deconvolute Sanger sequencing trace data have emerged as a popular alternative, offering a user-friendly and cost-effective approach for researchers [5]. These tools estimate indel frequencies by computationally analyzing sequencing chromatograms from polymerase chain reaction (PCR) amplicons of the target site, comparing edited samples against wild-type controls.

Among the numerous platforms available, Tracking of Indels by Decomposition (TIDE), Inference of CRISPR Edits (ICE), DECODR (Deconvolution of Complex DNA Repair), and SeqScreener (Thermo Fisher Scientific) have gained significant traction in the scientific community [5]. Although these tools share conceptual similarities, each employs distinct algorithms and modifications that can yield divergent outputs from the same sequencing data [21]. This guide provides a systematic comparison of these four prominent analysis tools, synthesizing performance data from controlled studies to equip researchers with the evidence necessary to select the most appropriate platform for their specific experimental context within the broader framework of CRISPR validation methodologies.
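
To make the trace-decomposition idea concrete, here is a deliberately simplified sketch. It is not the algorithm of TIDE, ICE, DECODR, or SeqScreener: it assumes idealized peaks (height 1.0 for the called base), a single deletion allele mixed with wild type, and a known cut position. The sequence and all function names are hypothetical. For each candidate deletion size it fits `mixed = (1 - f) * wt + f * edited` by closed-form least squares and keeps the size with the smallest residual.

```python
BASES = "ACGT"


def ideal_trace(seq):
    """Flattened idealised chromatogram: 1.0 for the called base, 0.0 elsewhere."""
    return [1.0 if base == called else 0.0 for called in seq for base in BASES]


def window_after_cut(wt_seq, cut, deletion, window):
    """Bases read downstream of the cut when `deletion` bases are removed at the cut."""
    start = cut + deletion
    return wt_seq[start:start + window]


def fit_single_deletion(mixed, wt_seq, cut, max_deletion, window):
    """Return (deletion_size, fraction, residual) for the best two-allele fit."""
    wt = ideal_trace(window_after_cut(wt_seq, cut, 0, window))
    best = None
    for size in range(1, max_deletion + 1):
        edited = ideal_trace(window_after_cut(wt_seq, cut, size, window))
        diff = [e - w for e, w in zip(edited, wt)]
        norm = sum(d * d for d in diff)
        if norm == 0:
            continue  # this deletion is indistinguishable from wild type here
        # Closed-form least squares for mixed = (1 - f) * wt + f * edited.
        frac = sum((m - w) * d for m, w, d in zip(mixed, wt, diff)) / norm
        frac = min(1.0, max(0.0, frac))  # keep the fraction in [0, 1]
        residual = sum((m - w - frac * d) ** 2 for m, w, d in zip(mixed, wt, diff))
        if best is None or residual < best[2]:
            best = (size, frac, residual)
    return best


# Hypothetical 60-bp wild-type amplicon with the cut after base 20.
WT = "ATGGCTAGCTTGACCGATACGGTCAAGCTTCCGATGAACTGGTCATCGGACTTAGCCATG"
CUT, WINDOW = 20, 25

# Simulate a pool that is 70% wild type and 30% a 4-bp deletion.
wt_sig = ideal_trace(window_after_cut(WT, CUT, 0, WINDOW))
del_sig = ideal_trace(window_after_cut(WT, CUT, 4, WINDOW))
mixed = [0.7 * w + 0.3 * d for w, d in zip(wt_sig, del_sig)]

size, frac, _ = fit_single_deletion(mixed, WT, CUT, max_deletion=6, window=WINDOW)
print(size, round(frac, 3))
```

Real chromatograms have uneven peak heights, background signal, and several co-occurring alleles, which is one reason the published tools can return different answers for the same trace.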

Performance Comparison: Quantitative Analysis of Tool Accuracy

A systematic comparison of computational tools using artificial sequencing templates with predetermined indels revealed significant performance variations [5]. When indels were simple and contained only a few base changes, all tools estimated indel frequency with reasonable accuracy. However, the estimated values became more variable among tools when sequencing templates contained complex indels or knock-in sequences [5].

Table 1: Overall Performance Characteristics of Sanger Deconvolution Tools

| Tool | Best Application Context | Strengths | Key Limitations |
|---|---|---|---|
| DECODR | Complex indel patterns, research requiring precise sequence identification | Most accurate indel frequency estimation for majority of samples; effective net indel size estimation [5] | Variable performance with highly complex editing patterns |
| ICE (Synthego) | High-throughput knockout screening, multi-guide experiments | High correlation with NGS (R² = 0.96); batch processing capability; detects large indels [4] [17] | May struggle with precise sequence deconvolution of complex mixtures |
| TIDE | Basic editing efficiency assessment, simple indel profiles | User-friendly interface; established protocol; TIDER variant for knock-in analysis [2] | Limited capability for complex edits; decreasing developer support [4] |
| SeqScreener | Routine efficiency checks, Thermo Fisher sequencing platforms | Integration with commercial sequencing services; user-friendly interface [5] | Less accurate with complex indels [5] |

Table 2: Performance Metrics from Controlled Comparative Studies

| Tool | Accuracy with Simple Indels | Accuracy with Complex Indels | Knock-in Analysis Capability | Indel Sequence Deconvolution Capability |
|---|---|---|---|---|
| DECODR | High | Moderate-High (Best in class) | Limited | High |
| ICE | High | Moderate | Available via Knock-in Score | Moderate |
| TIDE | High | Low-Moderate | Available via TIDER | Low-Moderate |
| SeqScreener | High | Low-Moderate | Not specifically reported | Low-Moderate |

DECODR provided the most accurate estimations of indel frequencies for the majority of samples in controlled comparisons [5]. While all four tools accurately estimated net indel sizes, DECODR demonstrated superior capability for identifying specific indel sequences [5]. For knock-in efficiency quantification of short epitope tag sequences, TIDE-based TIDER outperformed the other tools [5].

Discrepancies become particularly pronounced in complex editing environments. A 2023 study analyzing somatic CRISPR/Cas9 tumorigenesis models reported high variability in the reported number, size, and frequency of indels across software platforms, especially when larger indels were present [21]. This highlights the critical importance of selecting analysis platforms specific to the biological context and editing complexity.

Experimental Protocols and Methodologies

Benchmarking Experimental Design

The foundational comparative data referenced in this guide were derived from carefully controlled experiments using artificial sequencing templates with predetermined indels [5]. The methodology can be summarized as follows:

CRISPR Editing and Sample Collection: CRISPR–Cas9 or CRISPR–Cas12a ribonucleoprotein (RNP) complexes were assembled using commercial components and microinjected into zebrafish embryos at the 1-cell stage [5].

DNA Extraction and Amplification: Embryos were lysed at 1 day post-fertilization, and genomic DNA fragments encompassing the target sites were amplified using PCR with specific primers [5].

Cloning and Sequence Verification: The PCR amplicons were cloned into plasmids, and Sanger sequencing was performed to identify specific indel sequences, creating a library of known variants [5].

Artificial Template Preparation: Sequencing trace data were generated from various combinations of these predetermined indels, mixed at known ratios to simulate heterogeneous editing outcomes [5].

Tool Analysis and Comparison: These artificial trace files were analyzed using TIDE, ICE, DECODR, and SeqScreener with standard parameters. The output indel frequencies and types from each tool were compared against the known values to quantify accuracy and performance [5].
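
The final comparison step amounts to scoring each tool's estimates against the known mixing ratios. The sketch below shows one way to do that with mean absolute error. The tool labels and all numbers are placeholders invented for illustration; they are not results from the benchmarking study [5], and the study may have used a different error measure.

```python
from statistics import mean


def mean_absolute_error(estimates, truth):
    """Average absolute gap between estimated and known indel frequencies (%)."""
    if len(estimates) != len(truth):
        raise ValueError("estimates and truth must have the same length")
    return mean(abs(e - t) for e, t in zip(estimates, truth))


def rank_tools(tool_estimates, truth):
    """Return (tool, error) pairs sorted from most to least accurate."""
    scores = {
        tool: mean_absolute_error(estimates, truth)
        for tool, estimates in tool_estimates.items()
    }
    return sorted(scores.items(), key=lambda item: item[1])


# Known indel frequencies of three artificial templates (placeholder values).
known = [10.0, 50.0, 90.0]

# Placeholder outputs from two unnamed tools analysing the same traces.
outputs = {
    "tool_a": [12.0, 48.0, 91.0],
    "tool_b": [20.0, 40.0, 80.0],
}

ranking = rank_tools(outputs, known)
for tool, error in ranking:
    print(f"{tool}: mean absolute error {error:.2f} percentage points")
```

Scoring simple and complex templates separately would keep the distinction drawn in Table 2 between accuracy with simple indels and accuracy with complex indels.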

Standard Workflow for Tool Utilization

The generalized workflow for utilizing these deconvolution tools follows a consistent pattern, regardless of the specific platform chosen:

Diagram 1: Sanger Deconvolution Analysis Workflow

Critical Experimental Considerations:

- Control Requirements: All tools require a wild-type (unmodified) control sequence for accurate decomposition of editing traces [5] [21].

- PCR Amplification: Use high-fidelity DNA polymerase to minimize amplification errors during target region amplification [5] [21].

- Sequencing Quality: Ensure high-quality chromatogram data with low background signal and clear peaks for optimal deconvolution [17].

- Guide RNA Specification: Input the correct guide RNA sequence (excluding PAM) for proper alignment, as this parameter directs the tool to the expected cleavage site [17].
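
The guide RNA input matters because it fixes where the tool expects the break. The sketch below shows the arithmetic under the standard SpCas9 convention of a blunt cut 3 bp upstream of the PAM. It is an assumption-laden illustration rather than the logic of any specific tool, it does not apply to Cas12a, and the sequences and function names are hypothetical.

```python
COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(seq):
    """Reverse complement of an upper-case DNA string."""
    return seq.translate(COMPLEMENT)[::-1]


def expected_cut_site(amplicon, guide, cut_offset=3):
    """Return (index, strand): number of amplicon bases left of the expected cut.

    `guide` is the protospacer without the PAM. `cut_offset=3` assumes SpCas9.
    """
    amplicon = amplicon.upper()
    guide = guide.upper().replace("U", "T")

    start = amplicon.find(guide)
    if start != -1:
        # Guide matches the top strand: the PAM lies just right of the protospacer.
        return start + len(guide) - cut_offset, "+"

    start = amplicon.find(reverse_complement(guide))
    if start != -1:
        # Guide matches the bottom strand: the PAM lies just left of the match.
        return start + cut_offset, "-"

    raise ValueError("guide not found in amplicon on either strand")


# Hypothetical 20-nt guide embedded in a short amplicon, followed by a TGG PAM.
guide = "GACGTTACCGGATTCAGCTA"
amplicon = "TTTT" + guide + "TGG" + "AAAA"

print(expected_cut_site(amplicon, guide))
print(expected_cut_site(reverse_complement(amplicon), guide))
```

If the guide is entered with its PAM attached or with a typo, a lookup like this fails or lands on the wrong position, and decomposition would then start from the wrong base.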

Implementation Guide: Selecting and Using the Appropriate Tool

Tool Selection Framework

Choosing the optimal deconvolution tool requires consideration of multiple experimental factors:

Diagram 2: Tool Selection Decision Tree
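
As a rough sketch, the selection logic implied by Tables 1 and 2 can be written as a short function. The order of the checks and the argument names are assumptions made for illustration and should be adapted to the experiment at hand.

```python
def suggest_tool(knock_in=False, complex_indels=False,
                 high_throughput=False, thermo_fisher_platform=False):
    """Suggest a Sanger deconvolution tool from coarse experiment features.

    The priority order below is an assumption, not a published rule.
    """
    if knock_in:
        # Short knock-in tags: TIDER performed best in the comparison [5].
        return "TIDER"
    if complex_indels:
        # Complex indel mixtures: DECODR was the most accurate overall [5].
        return "DECODR"
    if high_throughput:
        # Many samples or multi-guide screens: ICE supports batch processing.
        return "ICE"
    if thermo_fisher_platform:
        # Routine checks tied to Thermo Fisher sequencing services.
        return "SeqScreener"
    # Simple indel profiles: all four tools scored "High"; TIDE is one option.
    return "TIDE"


print(suggest_tool(complex_indels=True))
print(suggest_tool(high_throughput=True))
print(suggest_tool())
```

A function like this only encodes a starting point. For critical experiments, the comparative data still favor confirming the result with a second tool.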

Research Reagent Solutions

Table 3: Essential Reagents and Materials for Sanger-Based CRISPR Validation

| Reagent/Material | Function in Workflow | Implementation Notes |
|---|---|---|
| High-Fidelity DNA Polymerase | PCR amplification of target region | Critical for minimizing amplification errors; examples include KOD One [5] |
| Genomic DNA Extraction Kit | Isolation of high-quality DNA from edited cells | Ensure compatibility with your cell type; proteinase K-based lysis used in reference studies [5] |
| Sanger Sequencing Services | Generation of chromatogram trace files | Commercial services typically provide .ab1 or .scf files required by all tools [5] |
| Control gRNAs | Positive controls for editing efficiency | Target standard loci like human AAVS1, HPRT, or mouse Rosa26 [3] |
| Cloning Vectors | Creation of artificial templates for validation | pUC19 used in reference studies for generating predetermined indels [5] |

Practical Implementation Tips

- Multi-Tool Validation: For critical experiments, consider using two different tools to confirm results, particularly given the variability reported across platforms [21].

- Data Quality Assessment: Pay attention to quality metrics provided by each tool (e.g., ICE's R² value), as these indicate confidence in the analysis [17].

- Knock-in Specific Tools: To quantify homology-directed repair, specialized options such as TIDER (the knock-in variant of TIDE) or ICE's Knock-in Score may give more accurate results than general indel analysis [5] [17].

- Sample Tracking: Implement consistent naming conventions, especially when using batch processing capabilities in ICE, to maintain sample integrity throughout analysis [17].
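
The multi-tool validation tip can be automated with a simple concordance check. In the sketch below, the sample names, the estimates, and the 10-percentage-point threshold are all hypothetical. It assumes each tool's indel percentages have been exported into a dictionary keyed by a consistent sample name, which is where the naming convention pays off.

```python
def flag_discordant(estimates_a, estimates_b, max_gap=10.0):
    """Return sample names whose two indel estimates (%) differ by more than max_gap."""
    shared = estimates_a.keys() & estimates_b.keys()
    return sorted(
        sample for sample in shared
        if abs(estimates_a[sample] - estimates_b[sample]) > max_gap
    )


# Hypothetical indel percentages exported from two different tools.
first_tool = {"gRNA1_rep1": 42.0, "gRNA2_rep1": 80.0, "gRNA3_rep1": 15.0}
second_tool = {"gRNA1_rep1": 45.0, "gRNA2_rep1": 61.0}

flagged = flag_discordant(first_tool, second_tool)
print(flagged)
```

Samples that appear in only one export are skipped rather than flagged, so a mismatch in naming silently drops them from the comparison.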

Computational deconvolution of Sanger sequencing data sits between enzyme-based assays, which are simple but largely qualitative, and NGS validation, which is comprehensive but costly. The evidence from comparative studies indicates that while all four tools perform adequately with simple indel patterns, DECODR currently provides the most accurate estimation of editing efficiency and indel sequences for complex editing outcomes [5]. ICE remains highly valuable for high-throughput screening applications and demonstrates excellent correlation with NGS data [4].

Researchers should view these tools not as interchangeable alternatives but as specialized instruments for specific experimental contexts. The integration of multiple tools or secondary validation through protein-level assessment (e.g., western blot or flow cytometry) provides the most robust approach for confirming CRISPR editing outcomes [17]. As CRISPR applications continue to evolve in complexity, from simple knockouts to base editing and prime editing, the corresponding validation methodologies must similarly advance, with Sanger deconvolution tools maintaining their position as accessible, cost-effective options for the research community.

The advent of CRISPR-Cas9 technology has revolutionized genetic engineering, enabling precise genome modifications across diverse biological systems. A critical step in any CRISPR experiment is the validation of editing efficiency, which ensures that the designed guide RNAs (gRNAs) successfully direct the Cas9 nuclease to create targeted double-strand breaks. While next-generation sequencing (NGS) provides comprehensive data on editing outcomes and Sanger sequencing offers a reliable intermediate approach, the T7 Endonuclease I (T7E1) assay remains a widely used method for preliminary, rapid screening of editing efficiency [26] [12]. This guide objectively evaluates the role of T7E1 and gel electrophoresis within the broader context of CRISPR validation methodologies, comparing its performance against sequencing-based alternatives to help researchers select appropriate strategies for their specific applications.

The T7E1 assay functions as a cost-effective, rapid initial screen that can identify promising gRNA constructs before committing to more resource-intensive sequencing methods [4]. Its continued relevance in molecular biology labs stems from its technical simplicity and minimal equipment requirements, positioning it as a valuable tool for initial efficiency assessments despite the emergence of more sophisticated quantification technologies. Understanding the capabilities and limitations of this legacy method is essential for designing efficient CRISPR screening workflows, particularly in resource-limited settings or during large-scale preliminary gRNA validation.

Methodological Principles: How T7E1 Functions in CRISPR Assessment

Core Mechanism of the T7E1 Assay